10 Jun 2026

Planet Python

Python GUIs: How to Set Row Background Colors in a QTableView — Use Qt's BackgroundRole to color entire rows based on your data

I have a QTableView table showing some data about connected devices. How can I highlight rows to give a visual indicator of the current status of the device?

When you're working with a QTableView and a custom model, it's common to want to highlight entire rows based on some condition in your data. For example, you might want to color a row blue when a device has a connected status, or red when something has gone wrong.

Understanding How data() Works

In Qt's Model/View architecture, the view calls your model's data() method for every cell in the table - and for each cell, it asks about multiple roles. One of those roles is Qt.BackgroundRole, which tells the view what background color to use for that cell.

The view asks for Qt.BackgroundRole on every single cell, not just one column. So if your data() method returns a color for Qt.BackgroundRole based on the row data (ignoring the column), the color will be applied to every cell in that row.
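To see why ignoring the column colors the whole row, here is a Qt-free sketch of the same idea. The names `ROWS`, `COLUMNS`, `STATUS_COLORS`, `background_for`, and `paint_table` are illustrative stand-ins and not part of Qt; the nested loop only plays the role of the view asking each cell for its background.

```python
# Qt-free stand-in: the "view" asks every cell for its background.
# All names here are hypothetical and exist only for this illustration.
ROWS = [
    {"IP": "192.168.1.10", "PRESENT_STATUS": "NewConnection"},
    {"IP": "192.168.1.50", "PRESENT_STATUS": "Unknown"},
]
COLUMNS = ["IP", "PRESENT_STATUS"]
STATUS_COLORS = {"NewConnection": "blue", "Registered": "green"}


def background_for(row, column):
    """Return a color name for one cell, or None for the default."""
    # The column argument is deliberately ignored: the decision depends
    # only on the row's status, so every cell in the row gets the same answer.
    status = ROWS[row].get("PRESENT_STATUS", "")
    return STATUS_COLORS.get(status)


def paint_table():
    """Collect the background the stand-in view would get for each cell."""
    return [
        [background_for(r, c) for c in range(len(COLUMNS))]
        for r in range(len(ROWS))
    ]


if __name__ == "__main__":
    for row_colors in paint_table():
        print(row_colors)
```

Every cell in the first row receives the same color, and every cell in the second row receives None, which is the behavior the real model relies on.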

Let's build a working example.

A Complete Working Example

Here's a full example you can run directly. It creates a QTableView with colored rows based on the PRESENT_STATUS field in each row of data:

import sys
from typing import Union

from PyQt6.QtCore import QAbstractTableModel, QModelIndex, Qt
from PyQt6.QtGui import QColor
from PyQt6.QtWidgets import QApplication, QMainWindow, QTableView


class TableModel(QAbstractTableModel):
    def __init__(self, data: Union[list, None] = None):
        super().__init__()
        self._data = data or []
        self._hdr = self._gen_hdr_data() if data else []
        # Map each status value to the background color for its row.
        self._base_color = {
            "NewConnection": QColor("blue"),
            "Registered": QColor("green"),
        }

    def _gen_hdr_data(self):
        """Build a sorted list of all unique keys across all row dicts."""
        all_keys = set()
        for d in self._data:
            all_keys.update(d.keys())
        return sorted(all_keys)

    def rowCount(self, parent=QModelIndex()):
        return len(self._data)

    def columnCount(self, parent=QModelIndex()):
        return len(self._hdr)

    def headerData(self, section, orientation, role):
        # PyQt6 requires fully qualified enum names such as
        # Qt.ItemDataRole.DisplayRole; the short Qt.DisplayRole form fails.
        if (
            role == Qt.ItemDataRole.DisplayRole
            and orientation == Qt.Orientation.Horizontal
        ):
            return self._hdr[section]

    def data(self, index: QModelIndex, role: int):
        if not index.isValid():
            return None

        row_dict = self._data[index.row()]
        state = row_dict.get("PRESENT_STATUS", "")

        if role == Qt.ItemDataRole.DisplayRole:
            col_key = self._hdr[index.column()]
            value = row_dict.get(col_key, "")
            return str(value) if value else ""

        if role == Qt.ItemDataRole.BackgroundRole:
            # The column is ignored, so the whole row gets the same color.
            color = self._base_color.get(state)
            if color:
                return color

        return None


class MainWindow(QMainWindow):
    def __init__(self):
        super().__init__()
        self.setWindowTitle("Row Background Colors in QTableView")

        data = [
            {"IP": "192.168.1.10", "PRESENT_STATUS": "NewConnection"},
            {"IP": "192.168.1.108", "FORMER_STATUS": "NewConnection",
             "PRESENT_STATUS": "Registered"},
            {"IP": "192.168.1.50", "PRESENT_STATUS": "Unknown"},
        ]

        self.table = QTableView()
        model = TableModel(data)
        self.table.setModel(model)
        self.setCentralWidget(self.table)


app = QApplication(sys.argv)
window = MainWindow()
window.show()
app.exec()

Qt calls a method named data() on the model, so the example stores the list as self._data (with a leading underscore) to avoid shadowing that method with an instance attribute.

Run this and you'll see three rows. The first row ("NewConnection") has a blue background, the second row ("Registered") has a green background, and the third row ("Unknown") has no special coloring because it isn't in the _base_color dictionary.

How Colors are Set on Rows

To understand how the color is being set to the entire row, take a look at the Qt.BackgroundRole section of data():

if role == Qt.ItemDataRole.BackgroundRole:
    color = self._base_color.get(state)
    if color:
        return color

Notice that index.column() isn't used here at all. The color decision is based entirely on the row's PRESENT_STATUS value. Since the view calls data() for every cell in the row - column 0, column 1, column 2, etc. - and each call gets the same color back, the entire row ends up painted.

If you only wanted to color a specific column (say, just the status column), you would add a column check:

if role == Qt.ItemDataRole.BackgroundRole:
    # Only color the PRESENT_STATUS column
    if self._hdr[index.column()] == "PRESENT_STATUS":
        color = self._base_color.get(state)
        if color:
            return color

Making the Text Readable

One thing you'll notice with a dark background color like blue is that the default black text becomes hard to read. You can fix this by also handling Qt.ForegroundRole and returning a light text color when the background is dark:

def data(self, index: QModelIndex, role: int):
    if not index.isValid():
        return None

    row_dict = self._data[index.row()]
    state = row_dict.get("PRESENT_STATUS", "")

    if role == Qt.ItemDataRole.DisplayRole:
        col_key = self._hdr[index.column()]
        value = row_dict.get(col_key, "")
        return str(value) if value else ""

    if role == Qt.ItemDataRole.BackgroundRole:
        color = self._base_color.get(state)
        if color:
            return color

    if role == Qt.ItemDataRole.ForegroundRole:
        # If this row has a background color, use white text.
        if state in self._base_color:
            return QColor("white")

    return None

Now blue and green rows will have white text, making everything easy to read.
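If you later add lighter background colors, always returning white may stop working well. As an optional refinement that is not part of the original example, the text color could be picked from the background's brightness. The sketch below uses the WCAG 2.x relative luminance and contrast ratio formulas; the function names are hypothetical, and it works on plain RGB integers so it runs without Qt.

```python
def _linear(channel):
    """Convert one 0-255 sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(r, g, b):
    """Relative luminance of an sRGB color, from 0.0 (black) to 1.0 (white)."""
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def readable_text_color(r, g, b):
    """Return "white" or "black", whichever contrasts more with the background."""
    lum = relative_luminance(r, g, b)
    contrast_with_white = 1.05 / (lum + 0.05)
    contrast_with_black = (lum + 0.05) / 0.05
    return "white" if contrast_with_white >= contrast_with_black else "black"


if __name__ == "__main__":
    print(readable_text_color(0, 0, 255))    # blue background
    print(readable_text_color(255, 255, 0))  # yellow background
```

Inside data(), you could feed it the background QColor's red(), green(), and blue() values and wrap the result in QColor(...) before returning it for the foreground role.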

Updating Colors Dynamically

If your data changes at runtime - for example, a device's status changes from "NewConnection" to "Registered" - you need to tell the view that something has changed so it repaints. You do this by emitting the dataChanged signal:

def update_status(self, row, new_status):
    self._data[row]["PRESENT_STATUS"] = new_status

    # Emit dataChanged for the entire row.
    top_left = self.index(row, 0)
    bottom_right = self.index(row, self.columnCount() - 1)
    self.dataChanged.emit(top_left, bottom_right)

This tells the view to re-query data() for every cell in that row, which picks up both the new display text and the new background color. For a deeper look at how signals work to keep your model and view in sync, see Signals, Slots & Events.

Summary

Once you understand how the model's data() method works, coloring entire rows in a QTableView is relatively straightforward. The view asks for each role on every cell, so returning a color from Qt.BackgroundRole based on row-level data - without filtering by column - naturally paints the whole row. Pair that with Qt.ForegroundRole for readable text, and you've got a clean, data-driven way to highlight rows in your table.

To learn more about using QTableView with custom models and data from numpy or pandas, see the QTableView with numpy and pandas tutorial. If you want to add sorting and filtering to your table, take a look at Sorting and Filtering Tables.

For an in-depth guide to building Python GUIs with PyQt6 see my book, Create GUI Applications with Python & Qt6.

10 Jun 2026 6:00am GMT

09 Jun 2026

Planet Python

PyCoder’s Weekly: Issue #738: sleep(), Polars Workflows, Iterators, and More (2026-06-09)

#738 - JUNE 9, 2026

Python sleep(): How to Add Time Delays to Your Code

Learn how to use Python's sleep() function to add time delays and pause your code with time.sleep(), decorators, threads, and asyncio.

REAL PYTHON

Libraries for Your Python Polars Workflows

Four excellent libraries for your data science workflow with support for Polars DataFrames

ISABELLA VELÁSQUEZ • Shared by Isabella Velásquez

B2B AI Agent Auth Support

Your users are asking if they can connect their AI agent to your product, but you want to make sure they can do it safely and securely. PropelAuth makes that possible →

PROPELAUTH sponsor

Down the Iterator Rabbit Hole

Following the trail when you have a chain of iterators

STEPHEN GRUPPETTA

Articles & Tutorials

olmOCR-2 vs PaddleOCR-VL: Which Extracts PDF Tables Better?

Compare olmOCR-2 and PaddleOCR-VL on a real arXiv PDF with dense technical tables. This article walks through a Python-based OCR workflow, then evaluates how each model handles table detection, runtime, numeric accuracy, merged cells, and multi-tier headers.

KHUYEN TRAN • Shared by Khuyen Tran

Using Typing in Python Leads to Different Sorts of Code

Chris has been moving lots of code from Python 2 to 3 and experimenting with more rigid type hints as he goes along. He's found that keeping the type checker happy makes him write code in a different way, almost like writing in a second language.

CHRIS SIEBENMANN

Django: Introducing Django-Integrity-Policy

Recently, browsers have added support for the new Integrity-Policy response header (Firefox 145+, Chrome 138+). Adam quickly went to work to build a library that enables your Django project to take advantage of the feature.

ADAM JOHNSON

PSF Strategic Plan 2026 Draft

The Python Software Foundation board has been developing a strategic plan to guide the foundation's direction over the next five years. The first draft has been released and they're looking for community feedback.

PYTHON SOFTWARE FOUNDATION

EuroPython 2026 Language Summit Talks

This year's EuroPython includes a Python Language Summit. This post highlights the talks scheduled for it, including adding Rust capabilities to CPython, an update on incremental garbage collection, and more.

EUROPYTHON.EU

Free Threading Internals: Reference Counting

This article describes how the lifetime of Python objects is tracked using reference counting and how that is affected by the changes brought about by removing the GIL.

VICTOR STINNER

Keep Your Developer Instincts When AI Writes the Code

The promise was less friction. The cost, it turns out, is instinct, a high price to pay. Bob's answer: add deliberate practice to your routine, and keep the struggle.

BOB BELDERBOS • Shared by Bob Belderbos

How to Use GitHub Copilot Code Review in Pull Requests

Learn how to use GitHub Copilot code review on pull requests for AI-assisted feedback, one-click fixes, and project-specific custom instructions.

REAL PYTHON

Parsing XML EXIF From .avif Files (Plus a Rant)

The .avif format tends to result in smaller files, but the EXIF strippers that Andrew was using didn't support the format, so he wrote his own.

ANDREW STEPHENS

Structuring Your Python Script

Master Python script structure with best practices for shebangs, ordered imports, formatting with Ruff, constants, and a clean entry point.

REAL PYTHON course

Projects & Code

Events

Weekly Real Python Office Hours Q&A (Virtual)

June 10, 2026

REALPYTHON.COM

Python Atlanta

June 11 to June 12, 2026

MEETUP.COM

PyDelhi User Group Meetup

June 13, 2026

MEETUP.COM

DFW Pythoneers 2nd Saturday Teaching Meeting

June 13, 2026

MEETUP.COM

DjangoCologne

June 16, 2026

MEETUP.COM

PyCon Singapore 2026

June 19 to June 22, 2026

PYCON.SG

Happy Pythoning!

This was PyCoder's Weekly Issue #738.

09 Jun 2026 7:30pm GMT

Python Docs Editorial Board: Meeting Minutes: Jun 9, 2026

Meeting Minutes from Python Docs Editorial Board: Jun 9, 2026

09 Jun 2026 7:00pm GMT

Django community aggregator: Community blog posts

Logical optimizations

The second article in the series. The first was about control flow; this one stays with the same tactic - reshaping code - one layer down, at the condition. Here: merging ifs, factoring shared decisions, and dropping checks that earn nothing. The Boolean algebra of conditions - De Morgan and friends - is a different lever, and gets its own installment next time.

09 Jun 2026 11:00am GMT

Planet Twisted

Hynek Schlawack: How to Ditch Codecov for Python Projects

Codecov's unreliability breaking CI on my open source projects has been a constant source of frustration for me for years. I have found a way to enforce coverage over a whole GitHub Actions build matrix that doesn't rely on third-party services.

09 Jun 2026 12:00am GMT

05 Jun 2026

Django community aggregator: Community blog posts

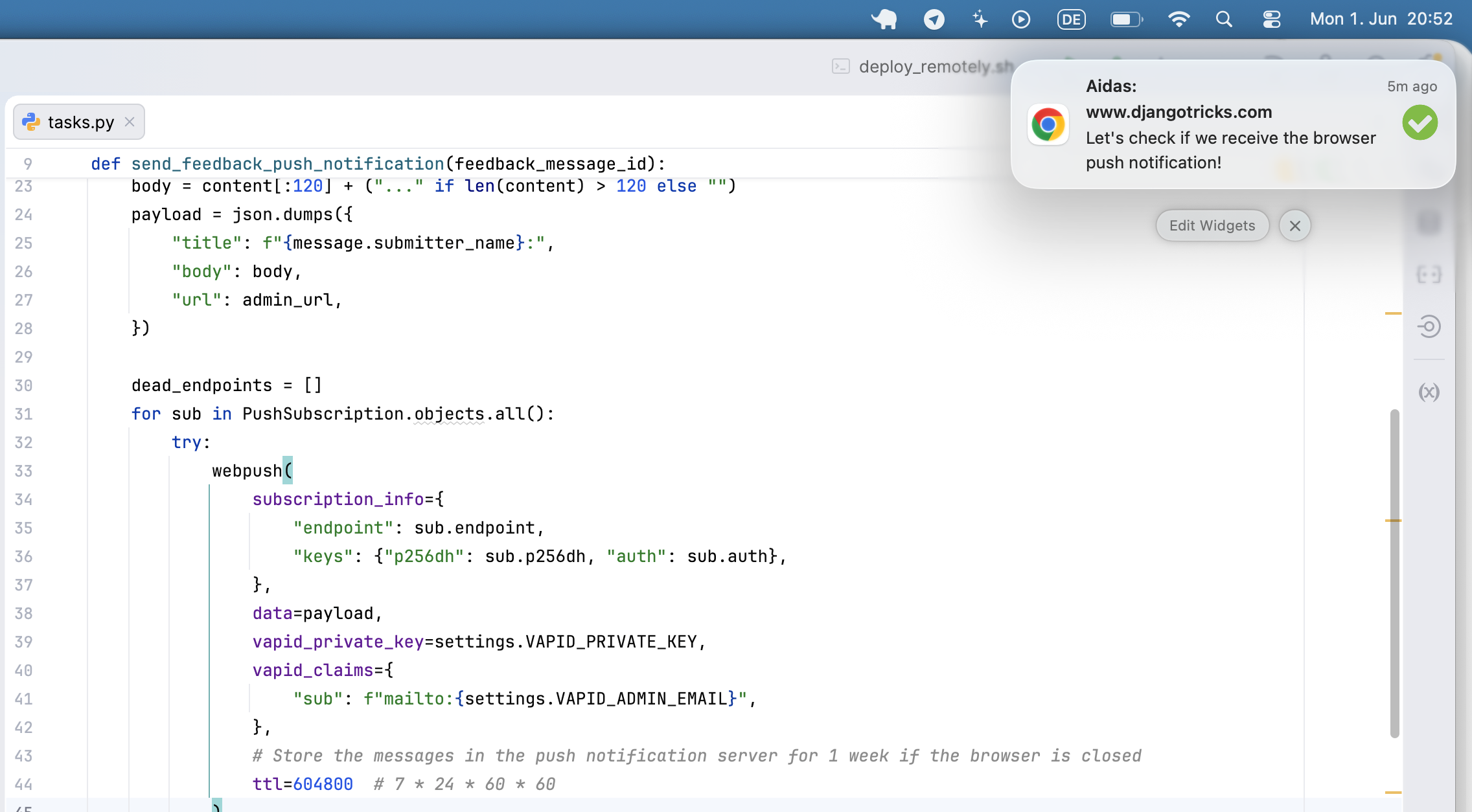

Browser Push Notifications for a Django Website

For DjangoTricks and some other websites, I intentionally didn't set up email notifications for incoming feedback messages: I didn't want to pay for an email server or spam my inbox. Because I only checked the messages in the database from time to time, I sometimes found out about them too late.

Last weekend, I decided to implement Web Push notifications to get notified about the feedback in my OS, just like in this example:

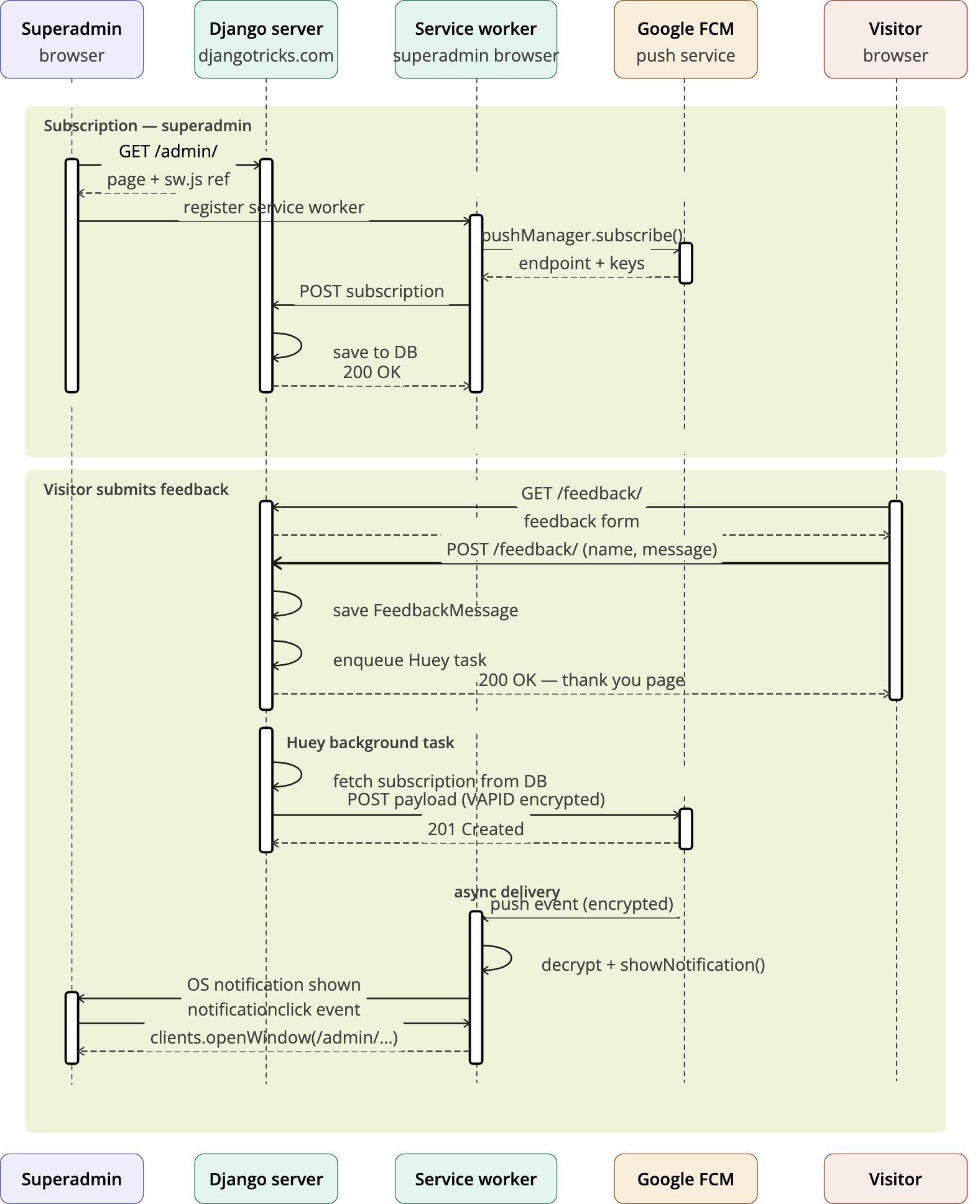

This tutorial walks through adding Web Push notifications to a Django project from scratch. When a visitor submits a feedback form, the site owner receives a native browser notification, even if the admin tab is closed, thanks to a service worker and a Huey background task.

How it works

Push notifications work as follows. First, people who want notifications subscribe to them in their browser. The subscriptions are stored on the push notification servers, and their identifiers are also stored in the Django website's database. Whenever we need to send a message to those subscribers, we send it to the push notification server, which passes it on to all subscribers whose browsers are open at that moment. If a browser is closed at that moment, it will get the message later, provided the message's TTL (time to live, in seconds) is set long enough and the message has not yet expired.

The push service depends on the browser:

- Chrome - Google's FCM (Firebase Cloud Messaging) - fcm.googleapis.com

- Firefox - Mozilla Autopush - updates.push.services.mozilla.com

- Safari - Apple Push Notification Service (APNs)

The two standard Web Push prerequisites are a VAPID key pair (identifies your server to the push service) and a service worker (a background JS script that receives the push and shows the notification even when the tab is closed).

The workflow would be as follows:

- I, as a superuser, visit the Django administration page, which serves the sw.js service worker file and the JavaScript that subscribes to notifications.

- JavaScript requests Notification permission. I accept it.

- JS calls pushManager.subscribe() with the VAPID public key.

- JS POSTs the subscription to /notifications/push/subscribe/.

- Django stores it in the PushSubscription model.

Visitor submits feedback form

- A view saves FeedbackMessage to the database.

- transaction.on_commit queues a Huey task.

- Huey task calls pywebpush, which contacts the browser's push service (FCM / Mozilla).

- Push service wakes the service worker.

- Service worker shows a native OS notification.

- Clicking it opens the Django admin change page.

Prerequisites

- Django project using Huey for background tasks

- Python virtual environment

Install two dependencies:

(.venv)$ pip install pywebpush py-vapid

pywebpush is the library to communicate with the Push Notification server. py-vapid will only be needed once to generate keys.

You'll need HTTPS to test the Web Push notifications if your host is not 127.0.0.1. If you use a custom domain in your /etc/hosts, such as djangotricks.localhost, you will also need to set up HTTPS with mkcert.

Step 1. The Feedback App

Create a feedback app with a FeedbackMessage model that will store submitted feedback messages:

# myproject/apps/feedback/models.py
from django.conf import settings
from django.db import models
from django.utils.translation import gettext_lazy as _


class FeedbackMessage(models.Model):
    created_at = models.DateTimeField(_("Created at"), auto_now_add=True)
    submitter_name = models.CharField(_("Submitter name"), max_length=200)
    submitter_email = models.EmailField(_("Submitter email"))
    content = models.TextField(_("Content"))

    class Meta:
        verbose_name = _("Feedback Message")
        verbose_name_plural = _("Feedback Messages")
        ordering = ("-created_at",)

    def __str__(self):
        return _("Feedback message from {}").format(self.submitter_name)

Create the form:

# myproject/apps/feedback/forms.py
from django import forms
from django.utils.translation import gettext_lazy as _

from .models import FeedbackMessage


class FeedbackMessageForm(forms.ModelForm):
    class Meta:
        model = FeedbackMessage
        fields = [
            "content",
            "submitter_name",
            "submitter_email",
        ]
        widgets = {
            "content": forms.Textarea(
                attrs={
                    "rows": 5,
                    "placeholder": _("Your message")
                }
            ),
        }
        labels = {
            "submitter_name": _("Your name"),
            "submitter_email": _("Your email"),
        }

Create a view to handle that form:

# myproject/apps/feedback/views.py
from django.db import transaction
from django.shortcuts import render, redirect
from django.urls import reverse

from .forms import FeedbackMessageForm
from .tasks import send_feedback_push_notification


def feedback_form(request):
    if request.method == "POST":
        form = FeedbackMessageForm(data=request.POST)
        if form.is_valid():
            message = form.save(commit=False)
            if request.user.is_authenticated:
                # Note: the FeedbackMessage model shown above defines no
                # `user` field, so this only persists if you add one.
                message.user = request.user
            message.save()
            # Queue the Huey task only after the row is committed.
            transaction.on_commit(
                lambda: send_feedback_push_notification(message.pk)
            )
            return redirect(reverse("feedback:complete"))
    else:
        form = FeedbackMessageForm()
    return render(request, "feedback/form.html", {"form": form})


def feedback_complete(request):
    return render(request, "feedback/complete.html")

Create the app config, migrations, templates, URLs, and Django administration for it.

Step 2. VAPID keys

Generate the key pair once. These stay on the server and are never committed to version control.

(.venv)$ vapid --gen

(.venv)$ vapid --applicationServerKey --private-key private_key.pem

--gen writes private_key.pem and public_key.pem to the current directory.

The private_key.pem file will contain the key like:

-----BEGIN PRIVATE KEY-----

<Multiline private key data>

-----END PRIVATE KEY-----

--applicationServerKey prints the base64url-encoded public key the browser needs, such as:

Application Server Key = <Public key data as base64url>

For the secrets.json or .env file where you store your secrets, you will need the content of <Private key data with newlines removed> and <Public key data as base64url>.

{

...

"VAPID_PRIVATE_KEY": "<Private key data with newlines removed>",

"VAPID_PUBLIC_KEY": "<Public key data as base64url>"

}

Don't commit the *.pem files or the secrets to the Git repo!
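Producing the single-line private key value by hand is error-prone, so a small helper can do it. This is a minimal sketch under the assumption that "private key data with newlines removed" means the base64 body between the BEGIN and END marker lines; the function name is hypothetical, and the sample text below is placeholder data, not a real key.

```python
def pem_body_single_line(pem_text):
    """Return the base64 body of a PEM block with markers and newlines removed."""
    body_lines = []
    for raw_line in pem_text.splitlines():
        line = raw_line.strip()
        # Skip blank lines and the -----BEGIN ...----- / -----END ...----- markers.
        if not line or line.startswith("-----"):
            continue
        body_lines.append(line)
    return "".join(body_lines)


if __name__ == "__main__":
    # Placeholder content standing in for private_key.pem.
    sample = (
        "-----BEGIN PRIVATE KEY-----\n"
        "AAAA\n"
        "BBBB\n"
        "-----END PRIVATE KEY-----\n"
    )
    print(pem_body_single_line(sample))
```

In practice you would read private_key.pem from disk, pass its text to the helper, and paste the result into your secrets file.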

Step 3. Django settings

# myproject/settings.py

### WEB PUSH ###

VAPID_PRIVATE_KEY = get_secret("VAPID_PRIVATE_KEY")

VAPID_PUBLIC_KEY = get_secret("VAPID_PUBLIC_KEY")

VAPID_ADMIN_EMAIL = "admin@mydomain.com"
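The settings above call get_secret(), which this post does not define. One possible shape for it, assuming your secrets live in a secrets.json file like the one shown in Step 2, is sketched below. The helper names `load_secrets` and `make_get_secret` and the empty-string default are assumptions for illustration, not the author's implementation.

```python
import json
from pathlib import Path


def load_secrets(path):
    """Read a JSON secrets file; return an empty dict if it does not exist."""
    secrets_path = Path(path)
    if not secrets_path.exists():
        return {}
    return json.loads(secrets_path.read_text(encoding="utf-8"))


def make_get_secret(secrets):
    """Build a get_secret(name) function bound to an already loaded dict."""

    def get_secret(name, default=""):
        # An empty default lets later code check `if not private_key` and
        # skip sending when the key is not configured.
        return secrets.get(name, default)

    return get_secret


if __name__ == "__main__":
    # Hypothetical usage in settings.py; the file name is an assumption.
    get_secret = make_get_secret(load_secrets("secrets.json"))
    print(bool(get_secret("VAPID_PUBLIC_KEY")))
```

Returning an empty string for a missing key is a design choice: the context processor and the Huey task later in this tutorial both skip their work when a VAPID key is falsy.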

Step 4. The Notifications App

Create a notifications app with the PushSubscription model to track the Push notification subscribers:

# myproject/apps/notifications/models.py
from django.conf import settings
from django.db import models
from django.utils.translation import gettext_lazy as _


class PushSubscription(models.Model):
    """One browser push subscription for one device."""

    created_at = models.DateTimeField(_("Created at"), auto_now_add=True)
    # The first positional argument of ForeignKey is the related model,
    # so the label has to be passed as verbose_name.
    user = models.ForeignKey(
        settings.AUTH_USER_MODEL,
        verbose_name=_("User"),
        on_delete=models.CASCADE,
        related_name="push_subscriptions",
    )
    endpoint = models.TextField(_("Endpoint"), unique=True)
    p256dh = models.TextField(_("Browser ECDH public key"))
    auth = models.TextField(_("16-byte auth secret"))

    class Meta:
        verbose_name = _("Push Subscription")
        verbose_name_plural = _("Push Subscriptions")
        ordering = ("-created_at",)

    def __str__(self):
        return f"{self.user} - {self.endpoint[:60]}"

Create app configuration, migrations, and Django administration for it.

Step 5. Subscribe / unsubscribe views

Create the views that will be called when the user subscribes to or unsubscribes from Push notifications, plus a view that serves the service worker sw.js:

# myproject/apps/notifications/views.py
import json

from django.contrib.auth.decorators import login_required
from django.contrib.staticfiles import finders
from django.http import HttpResponse, JsonResponse
from django.views.decorators.http import require_POST

from .models import PushSubscription


@login_required
@require_POST
def push_subscribe(request):
    try:
        data = json.loads(request.body)
        endpoint = data["endpoint"]
        p256dh = data["keys"]["p256dh"]
        auth = data["keys"]["auth"]
    except (KeyError, json.JSONDecodeError):
        return JsonResponse({"error": "Invalid subscription data"}, status=400)

    PushSubscription.objects.update_or_create(
        endpoint=endpoint,
        defaults={"user": request.user, "p256dh": p256dh, "auth": auth},
    )
    return JsonResponse({"status": "subscribed"})


@login_required
@require_POST
def push_unsubscribe(request):
    try:
        endpoint = json.loads(request.body)["endpoint"]
    except (KeyError, json.JSONDecodeError):
        return JsonResponse(
            {"error": "Invalid data"},
            status=400
        )

    PushSubscription.objects.filter(
        user=request.user,
        endpoint=endpoint,
    ).delete()
    return JsonResponse({"status": "unsubscribed"})


def service_worker(request):
    """Serve sw-feedback.js as sw.js at the admin root"""
    path = finders.find("admin/js/sw-feedback.js")
    with open(path) as fh:
        content = fh.read()
    return HttpResponse(
        content,
        content_type="application/javascript"
    )

Step 6. Wire URLs into myproject/urls.py

Plug the notification views into URLs:

# myproject/apps/notifications/urls.py
from django.urls import path

from . import views

app_name = "notifications"

urlpatterns = [
    path("push/subscribe/", views.push_subscribe, name="push_subscribe"),
    path("push/unsubscribe/", views.push_unsubscribe, name="push_unsubscribe"),
]

The service worker URL must be mounted at the same prefix as ADMIN_URL so its scope covers the admin. Add both patterns before the admin.site.urls line:

# myproject/urls.py
from django.conf import settings
from django.contrib import admin
from django.urls import include, path

from notifications import views as notifications_views

urlpatterns = [
    # ...
    path(
        f"{settings.ADMIN_URL}sw.js",
        notifications_views.service_worker,
        name="admin_service_worker",
    ),
    path(
        "notifications/",
        include("notifications.urls", namespace="notifications"),
    ),
    path(settings.ADMIN_URL, admin.site.urls),
    # ...
]

Step 7. Service worker JavaScript

Save as myproject/static/admin/js/sw-feedback.js:

self.addEventListener("push", function (event) {
  const data = event.data ? event.data.json() : {};
  const title = data.title || "New feedback message";
  const options = {
    body: data.body || "",
    icon: "/static/admin/img/icon-yes.svg",
    data: { url: data.url || "/" },
  };
  event.waitUntil(
    self.registration.showNotification(title, options)
  );
});

self.addEventListener("notificationclick", function (event) {
  event.notification.close();
  event.waitUntil(
    clients.openWindow(event.notification.data.url)
  );
});

Step 8. Admin subscription JavaScript

Save as myproject/static/admin/js/push-subscribe.js:

(function () {
  "use strict";

  // Injected by the Django template override in Step 10.
  const VAPID_PUBLIC_KEY = window.VAPID_PUBLIC_KEY;
  const SUBSCRIBE_URL = window.PUSH_SUBSCRIBE_URL;
  const UNSUBSCRIBE_URL = window.PUSH_UNSUBSCRIBE_URL;
  const ADMIN_URL = window.ADMIN_URL;

  // Tracks the endpoint last registered on the server so we can delete it even
  // when the browser subscription has already been silently revoked (e.g. user
  // cleared site data or the push subscription expired without blocking).
  const STORAGE_KEY = "pushSubscriptionEndpoint";

  // Read the CSRF token from the hidden input injected by {% csrf_token %}.
  // Do not use the cookie: this project has CSRF_USE_SESSIONS = True.
  const CSRF_TOKEN = document.querySelector("[name=csrfmiddlewaretoken]")?.value || "";

  function urlBase64ToUint8Array(base64String) {
    const padding = "=".repeat((4 - (base64String.length % 4)) % 4);
    const base64 = (base64String + padding).replace(/-/g, "+").replace(/_/g, "/");
    const rawData = atob(base64);
    return Uint8Array.from([...rawData].map((c) => c.charCodeAt(0)));
  }

  function post(url, body) {
    return fetch(url, {
      method: "POST",
      headers: { "Content-Type": "application/json", "X-CSRFToken": CSRF_TOKEN },
      body: JSON.stringify(body),
    });
  }

  async function syncSubscription() {
    if (!VAPID_PUBLIC_KEY) return;
    if (!("serviceWorker" in navigator) || !("PushManager" in window)) return;

    const registration = await navigator.serviceWorker.register(
      "/" + ADMIN_URL + "sw.js",
      { scope: "/" + ADMIN_URL }
    );
    await navigator.serviceWorker.ready;

    const storedEndpoint = localStorage.getItem(STORAGE_KEY);
    const existing = await registration.pushManager.getSubscription();

    // The browser subscription was revoked (user blocked notifications, cleared
    // site data, or the subscription expired) but the server record still exists
    // - delete it using the endpoint we stored at subscription time.
    if (storedEndpoint && (!existing || existing.endpoint !== storedEndpoint)) {
      await post(UNSUBSCRIBE_URL, { endpoint: storedEndpoint });
      localStorage.removeItem(STORAGE_KEY);
    }

    // Already subscribed and the server already knows about this endpoint.
    if (existing && existing.endpoint === storedEndpoint) return;

    const permission = await Notification.requestPermission();
    if (permission !== "granted") return;

    const subscription = await registration.pushManager.subscribe({
      userVisibleOnly: true,
      applicationServerKey: urlBase64ToUint8Array(VAPID_PUBLIC_KEY),
    });

    await post(SUBSCRIBE_URL, subscription.toJSON());
    localStorage.setItem(STORAGE_KEY, subscription.endpoint);
  }

  document.addEventListener("DOMContentLoaded", syncSubscription);
})();

localStorage is the key to reliable unsubscription. Notification.permission alone cannot detect silent revocations - when a user clears site data or a push subscription expires, the permission may still read "granted" while the browser-side subscription is gone. By storing the endpoint at subscribe time and comparing it on every page load, the script can call UNSUBSCRIBE_URL with the old endpoint even after the browser subscription object has disappeared.
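The reconciliation rules in syncSubscription() can be restated as a small decision function. The Python below is purely illustrative: the real logic lives in push-subscribe.js, and the function name, argument names, and action tuples are hypothetical. It only models which server calls the script would make for a given combination of stored and browser-side endpoints.

```python
def plan_sync(stored_endpoint, browser_endpoint, permission_granted=True):
    """Return the server calls the page script would make, in order.

    stored_endpoint: endpoint remembered in localStorage, or None.
    browser_endpoint: endpoint of the live browser subscription, or None.
    """
    actions = []

    # A stored endpoint that no longer matches the browser's subscription is
    # stale, so the server record for it should be deleted.
    if stored_endpoint and browser_endpoint != stored_endpoint:
        actions.append(("unsubscribe", stored_endpoint))

    # Browser and server already agree: nothing more to do.
    if browser_endpoint and browser_endpoint == stored_endpoint:
        return actions

    # Otherwise a subscription is registered, but only if the user
    # grants notification permission.
    if permission_granted:
        actions.append(("subscribe", None))
    return actions


if __name__ == "__main__":
    print(plan_sync("https://push.example/old", None))
```

Laying the cases out this way makes it easier to check that a silently revoked subscription always leads to the old server record being removed before a new one is created.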

Step 9. Context processor

A context processor injects the VAPID globals into every admin template response.

Create myproject/apps/notifications/context_processors.py:

from django.conf import settings
from django.urls import reverse


def push_notifications(request):
    """Inject push-notification globals into every admin page."""
    if not request.path.startswith(f"/{settings.ADMIN_URL}"):
        return {}
    if not settings.VAPID_PUBLIC_KEY:
        return {}
    return {
        "push_vapid_public_key": settings.VAPID_PUBLIC_KEY,
        "push_subscribe_url": reverse("notifications:push_subscribe"),
        "push_unsubscribe_url": reverse("notifications:push_unsubscribe"),
        "push_admin_url": settings.ADMIN_URL,
    }

Register it in the template settings:

TEMPLATES = [
    {
        "BACKEND": "django.template.backends.django.DjangoTemplates",
        "DIRS": [
            os.path.join(BASE_DIR, "myproject", "templates"),
        ],
        "APP_DIRS": True,
        "OPTIONS": {
            "context_processors": [
                # ...
                # Must match the function name in context_processors.py.
                "myproject.apps.notifications.context_processors.push_notifications",
            ],
        },
    },
]

Step 10. Inject globals via admin/base_site.html

Override myproject/templates/admin/base_site.html. If one already exists, add the extrahead block; otherwise create the file extending Django's built-in template:

{% extends "admin/base_site.html" %}

{% load static %}

{% block extrahead %}

{# ... any existing content such as a favicon include ... #}

{% if push_vapid_public_key %}

{% csrf_token %}

<script nonce="{{ request.csp_nonce }}">

window.VAPID_PUBLIC_KEY = "{{ push_vapid_public_key }}";

window.PUSH_SUBSCRIBE_URL = "{{ push_subscribe_url }}";

window.PUSH_UNSUBSCRIBE_URL = "{{ push_unsubscribe_url }}";

window.ADMIN_URL = "{{ push_admin_url }}";

</script>

<script src="{% static 'admin/js/push-subscribe.js' %}"></script>

{% endif %}

{% endblock %}

Step 11. Huey task

# myproject/apps/feedback/tasks.py
import json

from django.conf import settings
from django.urls import reverse
from huey.contrib.djhuey import db_task


@db_task()
def send_feedback_push_notification(feedback_message_id):
    from pywebpush import webpush, WebPushException
    from feedback.models import FeedbackMessage
    from notifications.models import PushSubscription

    private_key = settings.VAPID_PRIVATE_KEY
    if not private_key:
        return

    if not (message := FeedbackMessage.objects.filter(
        pk=feedback_message_id
    ).first()):
        return

    admin_url = settings.WEBSITE_URL + reverse(
        "admin:feedback_feedbackmessage_change",
        args=(message.pk,)
    )
    content = message.content
    body = content[:120] + (
        "..." if len(content) > 120 else ""
    )
    payload = json.dumps({
        "title": f"{message.submitter_name}:",
        "body": body,
        "url": admin_url,
    })

    dead_endpoints = []
    for sub in PushSubscription.objects.all():
        try:
            webpush(
                subscription_info={
                    "endpoint": sub.endpoint,
                    "keys": {"p256dh": sub.p256dh, "auth": sub.auth},
                },
                data=payload,
                vapid_private_key=private_key,
                vapid_claims={"sub": f"mailto:{settings.VAPID_ADMIN_EMAIL}"},
            )
        except WebPushException as exc:
            # 410 Gone / 404 Not Found means the subscription has expired
            if exc.response is not None and exc.response.status_code in (404, 410):
                dead_endpoints.append(sub.endpoint)

    if dead_endpoints:
        PushSubscription.objects.filter(
            endpoint__in=dead_endpoints,
        ).delete()

The Huey task is already called from the view in feedback app via transaction.on_commit. Using on_commit ensures the task is only queued after the database row is fully committed, so the task always finds the message when it runs.

Step 12. Content Security Policy

If the project uses Django CSP, two directives need adjusting:

CSP_WORKER_SRC = ["'self'"] # allows service worker registration from this origin

CSP_CONNECT_SRC = ["'self'"] # allows the subscribe POST fetch

The pywebpush HTTP call to the external push service (FCM, Mozilla) runs server-side inside the Huey worker process and is not subject to the browser's CSP.

User experience

It's safest to keep the body of the message to at most 120 characters, because anything longer is likely to be cut off on some operating systems or browsers.

You can check the current status of notification permissions by:

Notification.permission // should be "granted", not "default" or "denied"

Based on that, you can show a specific message in the user interface explaining what to do if the permission has been denied.

If you have many different types of notifications, you would keep their configuration in the Django website and let the visitor subscribe to notifications in the browser just once. Your Huey tasks would then check the notification settings and send the appropriate messages to the subscribers.

For example, for DjangoTricks website, I would allow subscribing to new tricks, blog posts, and goodies in the notification configuration, and the visitors would grant permission to Web Push notifications just once.

Privacy and security

Messages themselves are stored on the Web Push servers encrypted, but back in the OS they are shown in plain text, and can be seen by people standing behind the user or possibly read out by other apps (or viruses) which have permissions to access OS notifications.

Practical recommendations for sensitive content:

- Send a generic notification ("You have a new message") and make the user open the app to see the actual content - this is what most banking/healthcare apps do.

- If you do include content, keep it minimal - avoid full message text, Personally Identifiable Information (PII), medical info, etc.

- Make sure your VAPID keys are securely stored and rotated if compromised.

- Set a short TTL (time-to-live) on the push message so it doesn't sit on the push service's servers for long if the device is offline.

For anything regulated (HIPAA, GDPR, financial data), the safest approach is the generic notification pattern, since you have no control over how the OS handles notification display and history once it leaves the browser.
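The generic notification pattern could be sketched as follows. The function name and the wording of the message are hypothetical; the payload keys (title, body, url) are the ones the service worker in Step 7 reads. The point is that nothing the visitor typed ends up in the payload.

```python
import json


def build_generic_payload(admin_url):
    """Build a push payload that carries no user-submitted content."""
    return json.dumps({
        # Fixed, non-sensitive text instead of the submitter's name or message.
        "title": "New feedback message",
        "body": "Open the admin to read it.",
        # Clicking the notification still leads to the full message.
        "url": admin_url,
    })


if __name__ == "__main__":
    # Illustrative URL only.
    print(build_generic_payload("https://example.com/admin/feedback/1/"))
```

In the Huey task, a payload built like this could replace the one that includes message.submitter_name and the truncated content.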

OS permissions

Notification receivers can also deny the permissions for the browser globally at the OS level.

They can be turned on or off on macOS at Settings ➔ Notifications ➔ Google Chrome (or similarly for another browser), or on Windows at Focus Assist / Notification settings.

Marketing perspective

Opt-in: When asking for push notifications without context, only 5-25% would grant the permission. If the permission is asked in the UI at first and the reason is given, about 60% would grant the permission.

Opt-out: On average, nearly 8-10% of subscribers opt out from web push notifications per year. Even just 1 push notification per week leads to 10% of users disabling notifications. 46% of users disable notifications if they receive more than 6 notifications.

Final words

The technique of Web Push is not trivial, but with the help of py-vapid and pywebpush, it becomes manageable. The best use cases for push notifications are those where a SaaS project or a web platform offers them intentionally: when the user is waiting for something to happen, such as a new comment, a reply to a message, a new task assigned by another person, or a new post by a favorite author who writes irregularly.

Cover picture by cottonbro studio

05 Jun 2026 5:00pm GMT

Issue 340: Django security releases 6.0.6 and 5.2.15

News

Django security releases issued: 6.0.6 and 5.2.15

Five CVEs are fixed in this latest release. As ever, perhaps the best security step you can take is to always update to the latest version of Django.

Updates to Django

Today, "Updates to Django" is presented by Hwayoung from Djangonaut Space! 🚀

Last week we had 13 pull requests merged into Django by 8 different contributors - including 4 first-time contributors! Congratulations to Vishwa, Tim Harris, Codequiver, and Joe Babbitt for having their first commits merged into Django - welcome on board!

This week's Django highlights: 🦄

- Deprecated the safe parameter of JsonResponse, as the browser vulnerability it protected against was fixed in ECMAScript 5. #36905

Releases

Python Release Python 3.15.0b2

Python 3.15.0b2, the second beta of four, is out with an explicit push for third-party maintainers to test now and file issues as early as possible. The release targets feature-complete beta with no ABI changes after beta 4, and recommends delaying production releases until 3.15.0rc1.

Python Software Foundation

PSF Strategic Plan 2026 Draft: Open for Community Feedback

PSF is publishing the full Strategic Plan 2026 draft and opening a three-week feedback window ending June 25. The board asks reviewers to focus on whether the goals and objectives are right, while implementation details will be shaped later by staff.

Sponsored Link

Django middleware composes request handlers. Harnesses do the same for AI agents - Claude Code, Codex, Gemini in one coordinated system. Learn what a harness actually is, why it's a new primitive, and how to engineer one that holds in production. Apache 2.0, open source.

Articles

Showcasing allauth IdP: build an MCP server | allauth

Learn how to use Django and django-allauth to secure MCP endpoints with OIDC, including token validation, client registration, and host authorization flows.

Django: introducing django-integrity-policy

From Adam Johnson, a new security header and detailed article laying out the "why."

Dependency Pruning

Tips on how to treat every lockfile entry as an attack surface and maintenance burden you do not want, then start by deleting dependencies you never import.

Loopwerk: uv is fantastic, but its package management UX is a mess

uv shines for Python toolchains, but its package maintenance UX is rough: there is no straightforward uv outdated, and the upgrade workflow (uv lock --upgrade) can aggressively pull in breaking major releases.

Python 3.15: features that didn't make the headlines

Python 3.15 beta highlights worth a look: TaskGroup.cancel for graceful cancellation, ContextDecorator fixing decorator lifecycles for async and generators, new threading iterator helpers to avoid broken state, and immutable JSON support via frozendict and an array_hook.

Please add an RSS Feed to Your Site

RSS is still the cleanest way to keep up with the people you actually want to hear from. If you host a personal site with Django, add an RSS feed quickly with a simple, up-to-date tutorial and ship it.

Using Read the Docs to benefit Django

Read the Docs can integrate with EthicalAds, letting maintainers earn a little from their documentation.

The Pursuit Of Purity (The Right Way To Do AI)

A thoughtful look at competing takes on AI ethics, from safety-first big-lab work to open, locally run, consensually sourced models.

Django Forum

django-allauth 65.18.0 released: IdP demo time

django-allauth 65.18.0 was just shipped with a bunch of Identity Provider (IdP) improvements!

Daphne v4.2.2 release

Daphne v4.2.2 is now available on PyPI. It fixes a couple of moderate/low security issues and is a recommended update for all users.

Django Fellow Reports

Natalia Bidart

My primary focus this week was polishing the upcoming security release. I spent time going deeper into areas I am less familiar with to ensure everything was in good shape for release. As release manager, this included reviewing and completing release notes, preparing backports for all three supported stable branches, and crafting the corresponding CVE metadata so records are ready ahead of disclosure (this is part of our CNA responsibilities).

Sarah Boyce

I was at PyCon Italia this week, which was fantastic, highly recommend going if you get the chance.

Jacob Walls

After a Monday holiday in the US, I spent a week focusing on contributions from the prior week's PyCon sprint.

Events

PyBay 2026

October 3rd in San Francisco this year. The Call for Proposals (CFP) is open until July 8th.

Django Job Board

Founding Engineer at MyDataValue

Projects

feincms/feincms3-cookiecontrol

Cookie banner with support for embedded media.

adamghill/dj-lite-tenant

Multi-tenant SQLite databases for Django.

05 Jun 2026 2:00pm GMT

22 May 2026

Planet Twisted

Planet Twisted

Glyph Lefkowitz: Opaque Types in Python

Let's say you're writing a Python library.

In this library, you have some collection of state that represents "options" or "configuration" for a bunch of operations. Such a set of options is a bundle of potentially ever-increasing complexity. Thus, you will want it to have an extremely minimal compatibility surface, with a very carefully chosen public interface, that is either small, or perhaps nothing at all. Such an object conveys state and might have some private behavior, but all you want consumers to be able to do is build it in very constrained, specific ways, and then pass it along as a parameter to your own APIs.

By way of example, imagine that you're wrapping a library that handles shipping physical packages.

There are a zillion ways to ship a package. There are different carriers who can ship it for you. There's air freight, and ground freight, and sea freight. There's overnight shipping. There's the option to require a signature. There's package tracking and certified mail. Suffice it to say, lots of stuff.

If you are starting out to implement such a library, you might need an object called something like ShippingOptions that encapsulates some of this. At the core of your library you might have a function like this:
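A minimal sketch of what such a core function might look like. This is not the author's code: the `Package` class, the parameter names, and the returned status string are assumptions, and `ShippingOptions` is left as an empty placeholder because its shape is exactly what the rest of the post works out.

```python
from dataclasses import dataclass


class ShippingOptions:
    """Placeholder: the eventual shape of this type is still undecided."""


@dataclass
class Package:
    """Hypothetical description of the thing being shipped."""

    weight_kg: float


def shipPackage(package: Package, options: ShippingOptions) -> str:
    """Ship the package using the given options and return a status string."""
    # A real implementation would call the wrapped shipping library here.
    return f"shipped {package.weight_kg} kg"


print(shipPackage(Package(weight_kg=2.5), ShippingOptions()))
```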


If you are starting out implementing such a library, you know that you're going to get the initial implementation of ShippingOptions wrong; or, at the very least, if not "wrong", then "incomplete". You should not want to commit to an expansive public API with a ton of different attributes until you really understand the problem domain pretty well.

Yet, ShippingOptions is absolutely vital to the rest of your library. You'll need to construct it and pass it to various methods like estimateShippingCost and shipPackage. So you're not going to want a ton of complexity and churn as you evolve it to be more complex.

Worse yet, this object has to hold a ton of state. It's got attributes, maybe even quite complex internal attributes that relate to different shipping services.

Right now, today, you need to add something so you can have "no rush", "standard" and "expedited" options. You can't just put off implementing that indefinitely until you can come up with the perfect shape. What to do?

The tool you want here is the opaque data type design pattern. C is lousy with such things (FILE, pthread_*_t, fd_set, etc). A typedef in a header file can easily achieve this.

But in Python, if you expose a dataclass - or any class, really - even if you keep all your fields private, the constructor is still, inherently, public. You can make it raise an exception or something, but your type checker still won't help your users; it'll still look like it's a normal class.

Luckily, Python typing provides a tool for this: typing.NewType.

Let's review our requirements:

- We need a type that our client code can use in its type annotations; it needs to be public.

- They need to be able to construct it somehow, even if they shouldn't be able to see its attributes or its internal constructor arguments.

- To express high-level things (like "ship fast") that should stay supported as we add more nuanced and complex configurations in the future (like "ship with the fastest possible option provided by the lowest-cost carrier that supports signature verification").

In order to solve these problems respectively, we will use:

- a public NewType, which gives us our public name...

- which wraps a private class with entirely private attributes, to give us an actual data structure, while not exposing the constructor,

- a set of public constructor functions, which return our NewType.

When we put that all together, it looks like this:


As a snapshot in time, this is not all that interesting; we could have just exposed _RealShipOpts as a public class and saved ourselves some time. The fact that this exposes a constructor that takes a string is not a big deal for the present moment. For an initial quick and dirty implementation, we can just do checks like if options._speed == "fast" in our shipping and estimation code.

However, the main thing we are doing here is preserving our flexibility to evolve the related APIs into the future, so let's see how we might do that. For example, let's allow the shipping options to contain a concrete and specific carrier and freight method:


As a NewType, our public ShippingOptions type doesn't have a constructor. Since _RealShipOpts is private, and all its attributes are private, we can completely remove the old versions.

Anything within our shipping library can still access the private variables on ShippingOptions; as a NewType, it's the same type as its base at runtime, so it presents minimal[1] overhead.

Clients outside our shipping library can still call all of our public constructors: shipFast, shipNormal, and shipSlow all still work with the same (as far as calling code knows) signature and behavior.

If you need to build and convey some state within your public API, while avoiding breakages associated with compatibility churn, hopefully this technique can help you do that!

Acknowledgments

Thanks for reading, and thank you to my patrons who are supporting my writing on this blog. If you like what you've read here and you'd like to read more of it, or you'd like to support my various open-source endeavors, you can support my work as a sponsor.

[1] The overhead is minimal, but it is not completely zero. The suggested idiom for converting to a NewType is to call it like a function, as I've done in these examples, but if you are wanting to use this pattern inside of a hot loop, you can use # type: ignore[return-value] comments to avoid that small cost.

22 May 2026 12:33am GMT

04 Apr 2026

Planet Twisted

Planet Twisted

Donovan Preston: Using osascript with terminal agents on macOS

Here is a useful trick that is unreasonably effective for simple computer-use goals with modern terminal agents. On macOS, there has been a terminal osascript command since the original release of Mac OS X. All you have to do is suggest that your agent use it, and it can perform any application control action available in any AppleScript dictionary for any Mac app. No MCP setup or tools are required at all. Agents are much more adept at using raw terminal commands, especially ones that haven't changed in 30 years. Having a computer control interface that hasn't changed in 30 years and has extensive examples in the Internet corpus means modern models understand how to use these tools basically effortlessly.

macOS locks down these permissions pretty heavily nowadays though, so you will have to grant the application control permission to Terminal. But once you have done that, the range of possibilities for commanding applications using natural language is quite extensive. Also, for both Safari and Chrome on Mac, you are going to want to turn on the JavaScript over AppleScript permission. This basically allows Claude or another agent to debug your web applications live for you as you are using them. In Chrome, go to the View menu, Developer submenu, and choose "Allow JavaScript from Apple Events". In Safari, it's under the Safari menu, Settings, Developer, "Allow JavaScript from Apple Events".

Then you can say something like "Hey Claude, would you please use osascript to navigate the front Chrome tab to Hacker News". Once you suggest using osascript in a session, it will figure out pretty quickly what it can do with it. Of course, you can ask it to do casual things like open your mail app or whatever. Then you can figure out what other things will work, like "please click around my web app" or "check the JavaScript console for errors".

Another very important tip for using modern agents is to practice using speech to text. I think speaking might be something like five times faster than typing. It takes a lot of time to get used to, especially after a lifetime of programming by typing, but it's a very interesting and different experience, and once you have a lot of practice it starts to feel effortless.
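For readers who want to see what the osascript trick described above amounts to, here is a small Python sketch of the kind of command an agent ends up running. The helper names are assumptions made for illustration; the AppleScript line is one plausible way to point the front Chrome tab at Hacker News, and it only works on macOS after the permissions mentioned above have been granted.

```python
import subprocess
import sys


def osascript_command(script: str) -> list[str]:
    """Build the argv for running one line of AppleScript via osascript."""
    return ["osascript", "-e", script]


def run_applescript(script: str) -> str:
    """Run the script (macOS only) and return its standard output."""
    result = subprocess.run(
        osascript_command(script), capture_output=True, text=True, check=True
    )
    return result.stdout.strip()


# Navigate the active tab of the front Chrome window to Hacker News.
NAVIGATE = (
    'tell application "Google Chrome" to set URL of active tab '
    'of front window to "https://news.ycombinator.com"'
)

print(osascript_command(NAVIGATE))

# Only attempt to control an app when run directly on a Mac.
if __name__ == "__main__" and sys.platform == "darwin":
    run_applescript(NAVIGATE)
```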

04 Apr 2026 1:31pm GMT