02 Jun 2026

Django community aggregator: Community blog posts


You don't need React to be reactive — djust 1.0 is here

djust 1.0 is here - reactive UI for Django in pure Python. No client state, no JavaScript framework, no build step, no API layer. It brings the proven Phoenix LiveView model to Django with a Rust VDOM on the hot path. Try it live (multi-user, no install) at start.djust.org.

02 Jun 2026 6:00pm GMT

Code is cheap

The first time I said "code is cheap" out loud in a meeting, a manager waved at the budget - headcount, salaries, the tooling line - and asked which part of that looked cheap. He wasn't wrong about the number - he was wrong about what it was buying.

02 Jun 2026 10:15am GMT

01 Jun 2026

Django community aggregator: Community blog posts


My PyCon Italia 2026

A timeline of my PyCon Italia 2026 journey, in Bologna (IT), told through the Mastodon posts I shared along the way.

01 Jun 2026 3:00am GMT

31 May 2026

Django community aggregator: Community blog posts


Django: introducing django-integrity-policy

Back in January, Firefox's Security & Privacy Newsletter for 2025 Q4 piqued my interest with this mention:

Integrity-Policy: Firefox 145 has added support for the Integrity-Policy response header. The header allows websites to ensure that only scripts with an integrity attribute will load.

A new security header! That's right up my street: I've cared about getting security headers right since 2018, when I created django-permissions-policy to set the Permissions-Policy header. (At the time, it was called Feature-Policy: why they changed it, I can't say, people just liked it better that way.)

The new Integrity-Policy header helps with subresource integrity, a tool for securely including third-party scripts and stylesheets on your website. Browsers support the integrity attribute on <script> and <link> tags, which allows you to specify a hash of the expected content, like:

<script
  src=https://cdn.jsdelivr.net/npm/htmx.org@4.0.0-beta4/dist/htmx.min.js
  integrity=sha384-aWZK1NtOs/aWb/+YZdTM8q2JkWEshlMc9mgZ189numT9bwFhyAyYEoO4nO/2dTXt
  crossorigin=anonymous></script>

If the content downloaded from the external source doesn't match the expected hash, the browser blocks it from loading. This is a great defense against the target URL changing its contents, executing a supply chain attack against your visitors.

(Generally, I recommend you avoid loading anything from a third-party URL, per Reasons to avoid Javascript CDNs. But sometimes, you gotta do what you gotta do, and declaring integrity is a great idea, then.)
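As a rough illustration of where an integrity value like the one in the tag above comes from, here is a minimal sketch using only the Python standard library. The function name `sri_hash` and the sample content are assumptions made for illustration; the value is the hash algorithm name, a hyphen, and the base64-encoded digest of the file's bytes.

```python
import base64
import hashlib


def sri_hash(content: bytes, algorithm: str = "sha384") -> str:
    """Return a Subresource Integrity value such as 'sha384-<base64 digest>'."""
    # Browsers accept these three algorithms for the integrity attribute.
    if algorithm not in {"sha256", "sha384", "sha512"}:
        raise ValueError(f"unsupported SRI algorithm: {algorithm}")
    digest = hashlib.new(algorithm, content).digest()
    return f"{algorithm}-{base64.b64encode(digest).decode('ascii')}"


# Hypothetical script contents, for illustration only.
print(sri_hash(b"console.log('hello');"))
```

In practice you would hash the exact bytes served at the URL, since any difference (even whitespace) produces a different digest and the browser would block the resource.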

Integrity-Policy allows you to opt in to requiring integrity attributes on your page, ensuring that you can never load potentially-compromised resources. The header is fairly simple, at least right now. Here's a complete example that requires integrity for all scripts and stylesheets:

Integrity-Policy: blocked-destinations=(script style)

You can add in endpoints to tell browsers where to send violation reports to, and there's the second Integrity-Policy-Report-Only header which lets you test a policy without enforcing it.
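To make the header shape concrete, here is a small sketch that assembles a header value from Python lists. The helper `build_integrity_policy` is hypothetical and is not the API of any package mentioned here. The `endpoints=(...)` key follows the syntax described in the MDN documentation as best understood, and the endpoint name `integrity-endpoint` is an assumption (it would need to be declared separately in a Reporting-Endpoints header).

```python
def build_integrity_policy(blocked_destinations, endpoints=()):
    """Serialize a policy as a header value (hypothetical helper, a sketch only)."""
    if not blocked_destinations:
        raise ValueError("at least one blocked destination is required")
    # Each key holds a parenthesized, space-separated list of tokens.
    parts = [f"blocked-destinations=({' '.join(blocked_destinations)})"]
    if endpoints:
        parts.append(f"endpoints=({' '.join(endpoints)})")
    return ", ".join(parts)


# Enforced policy, matching the example header above.
print(build_integrity_policy(["script", "style"]))

# Report-only variant with an assumed reporting endpoint name.
headers = {
    "Integrity-Policy-Report-Only": build_integrity_policy(
        ["script"], endpoints=["integrity-endpoint"]
    )
}
print(headers)
```

Starting with the Report-Only header lets you collect violation reports before switching to the enforcing header.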

Note there's no possibility to differentiate between first- and third-party resources in Integrity-Policy. If you set it, you'll need to add integrity attributes to all your scripts and stylesheets, including those you host yourself. This is by design: the header is being developed as part of Web Application Integrity, Consistency and Transparency (WAICT), an initiative to bring app-store-level "code signing" to the web, where users can be sure that CDNs and other intermediaries haven't tampered with served code.

Integrity-Policy is supported on Firefox 145+ and Chrome 138+.

django-integrity-policy

My new package, django-integrity-policy, provides a middleware for setting the Integrity-Policy headers in a familiar Django-style way. You install it, add the middleware:

MIDDLEWARE = [
    ...,
    "django.middleware.security.SecurityMiddleware",
    "django_integrity_policy.IntegrityPolicyMiddleware",
    ...,
]

…and then configure the appropriate setting(s):

INTEGRITY_POLICY = {
    "blocked-destinations": ["script", "style"],
}

So far, it's pretty basic, but I expect WAICT will increase the complexity of Integrity-Policy over time, and I'll add support for new options as they come along.

Once Integrity-Policy is set, the browser will block any scripts or stylesheets (depending on configuration) that lack a valid integrity attribute, including your first-party resources. That means you need to add integrity attributes to all your static files. Luckily, this has been considered before by the legendary Jake Howard, who made a package called django-sri. It provides template tags to generate appropriately hashed HTML tags. For example:

{% load sri %}
{% sri_static "app.js" %}
{% sri_static "app.css" %}

…will output:

<script src="/static/app.js" integrity="sha256-..."></script>
<link rel="stylesheet" href="/static/app.css" integrity="sha256-..."/>

These tags would be allowed under a maximally strict integrity policy.

See the example application in the django-integrity-policy repository for a full working project.

LLM generation

I built this package using just two prompts to Claude. I copied the repository for my previous security header package, django-permissions-policy, and reset its Git history. I then used this prompt to Claude, inside Zed:

The current repository is a copy of my package django-permissions-policy

It's time to turn it into django-integrity-policy, for the relevant headers per the below mdn docs

(Integrity-Policy MDN docs from https://github.com/mdn/content/blob/main/files/en-us/web/http/reference/headers/integrity-policy/index.md?plain=1)

(Integrity-Policy-Report-Only MDN docs from https://github.com/mdn/content/blob/main/files/en-us/web/http/reference/headers/integrity-policy-report-only/index.md?plain=1)

Check and edit every file to be that new package, copyright 2026, keeping the general testing infrastructure and so on.

Don't run any commands yet, just check and edit every file

I then took a 20-minute nap and woke up to a near-complete package. I reviewed it, ran the tests, made some minor edits, committed, and pushed to PyPI!

LLMs are rightfully a hot topic, with heady supporters and heavy detractors. I use them begrudgingly and somewhat sparingly, and I cannot wait for the future where I can stick to local models. (Gemma 4 can run on my M1 Mac and approaches Claude's performance on many tasks, so we're getting there.)

This task, though, was a perfect fit for LLM code generation: the existing repository acted as great context for structure, the new package was very similar in shape ("same same but different"), and the documentation provided a clear specification for what to build. The LLM could mash things up for me with minimal oversight, and I could check the work quickly.

I would guess that overall, using an LLM saved me a couple of hours of mostly grunt-work, like checking every copied-over configuration file. That's pretty valuable for me, and honestly made creating the package feasible.

The future

I'm not sure how widely used Integrity-Policy will be, and therefore how popular django-integrity-policy will end up. But this was an interesting exercise and I am interested to see how the header and other work from WAICT evolves. I will try to keep the package updated, and we'll see if it ever reaches a point where proposing support in Django itself makes sense.

31 May 2026 4:00am GMT

29 May 2026

Django community aggregator: Community blog posts


Issue 339: Early Bird DjangoCon US Tickets Ending Soon

News

DjangoCon US 2026: Early Bird Tickets End May 31st!

Early bird ticket sales for DjangoCon US 2026 end on May 31, 2026, with discounted pricing available. The conference runs five days at Voco Chicago Downtown and includes community-selected talks plus Django contribution sprints.

Wagtail CMS News

Wagtail Space NL - June 12

A full-day conference in Rotterdam, The Netherlands on Wagtail, with talks covering a range of topics, lightning talks, hallway discussions, and more.

Updates to Django

Today, "Updates to Django" is presented by Pradhvan from Djangonaut Space! 🚀

Last week we had 16 pull requests merged into Django by 10 different contributors.

This week's Django highlights: 🦄

- Django's built-in error pages, admin, and registration templates now include the CSP nonce on <script>, <link>, and <style> elements when available. (#36825)

- Fixed HttpResponse.reason_phrase to raise BadHeaderError when set to a value containing control characters. (#37100)

- Fixed Query.clear_ordering() to recursively clear ordering on combined queries, preventing errors when using __in lookups on nested union() querysets. (#37097)

- Admin change form actions now use ModelAdmin.get_queryset(), ensuring custom annotations and filtering are consistently applied to form actions. (#37117)

If you haven't already, give Django 6.1 alpha 1 a spin and report anything suspicious to the issue tracker! 🎉

That's all for this week in Django development! 🐍🦄

Articles

Upgrade PostgreSQL from 17 to 18 on Ubuntu 26.04

After moving to Ubuntu 26.04, upgrade an existing 17/main cluster to 18 by running pg_upgradecluster 17 main -v 18, then verify the new 18/main cluster is online. Once confirmed, drop the old 17 cluster with pg_dropcluster 17 main and optionally purge postgresql-17 and postgresql-client-17 packages.

My not-so-static new static website

Jake Howard walks through his eighth website rewrite, this time ditching Wagtail for a custom "semi-static" Django setup that renders Markdown content into SQLite at startup and serves it dynamically with Jinja2 templates.

Improving First Byte and Contentful Paint on a Django Website

A look at how to use Django's StreamingHttpResponse to send the <head> and above-the-fold content first, letting the browser fetch static assets and start painting while the rest of the page renders.

PyCon US 2026 Recap - Black Python Devs

A recap, from the community booth to open spaces, the hallway track, and Jay Miller receiving the PSF Community Service Award.

django-removals 1.2.0 - Now with Django 6.1 deprecations

How the maintainers of django-removals shipped new warnings for the Django 6.1 deprecation wave.

Mentoring GSoC 2026: Experimental Flags - Software Crafts

Mentor and mentee are starting a GSoC 2026 project around an "Experimental Flags" framework for Django core, using the forum to gather requirements and drive early consensus. The plan balances fast iteration with faster-than-normal Django consensus, including an initial third-party package to test ideas before wider adoption.

Django Forum

GSoC 2026: Implementing a Formal Experimental API Framework for Django Core

A lively discussion around how experimental features can be merged into the main repository but remain explicitly non-stable.

Thoughts on advertising on djangoproject.com

New thoughts and comments on the age-old question.

Django Fellow Reports

Jacob Walls

Not much going on, "just" the 6.1 Feature Freeze/alpha release, a sprint at PyCon US, and a kickoff meeting with Google Summer of Code participants & mentors.

Sarah Boyce

As we had the feature freeze, I focused on a few feature PRs I had prioritized for the 6.1 release.

Natalia Bidart

This week was mostly about returning from PyCon, which was quite exhausting. I arrived back on Wednesday, fairly drained (and very hungry), so I worked during Thu and Fri catching up on a large backlog of email notifications and syncing with the other Fellows.

Events

Django on the Med - September 23-25 in Pescara, Italy

PyCon Italia this week has had Django members in attendance, so it is a good time to remind readers that Django on the Med will be back in Italy later in the year.

Django Job Board

Founding Engineer at MyDataValue

Projects

feincms/feincms3-cookiecontrol

Cookie banner with support for embedded media.

emfpdlzj/django-deploy-probes

HTTP deployment probes for Django applications.

29 May 2026 2:00pm GMT

27 May 2026

Django community aggregator: Community blog posts


Please add an RSS Feed to Your Site

Why syndication feeds are having a moment in 2026.

27 May 2026 9:57pm GMT

Mentoring GSoC 2026: Experimental Flags

Over the last couple of weeks, Google Summer of Code (GSoC) has started for 2026. Alongside my mentee, I think I will blog about it as we progress through the project. So far, there has been a kick-off meeting with all participants, and I have started to chat with my mentee (Praful) about the first steps of our project: Experimental Flags. He has posted to the Forum about the project, asking for feedback on what we want from it.

Before I say any more, please go and pitch your opinion and any ideas you may have. The more we have to work with, the better! We need you!

What sets this project apart from GSoC projects in recent years is that we have yet to have an agreed solution in place that 'just' needs implementing. So my initial guidance will be to focus on consensus gathering and documentation. But this being a GSoC project with limited time available, I do feel the need to push the process forward at a pace for consensus that is faster than the normal Django pace. That said, the potential for this project is wide and expansive, currently with a lot of open questions both as to why we need them and what should be implemented, and that's before we get to the details of how to implement this.

So for me, the why of experimental feature flags: most things can be done or experimented with as a third-party package. I think the requirement for an experimental feature flag is perhaps for that last 10% of a new API, or where getting higher usage of a feature is required to flesh out all of the use cases with a wider audience, an audience beyond that of the community. If we think of the adoption curve, we're talking about the early majority: those developers who are more likely to enable a feature inside Django, with its stability guarantees, than a third-party package. Or perhaps this is the project which allows us as a community to get more flexible with what is in the release package(s?) of Django and what code is in the source control repository?

One thing is for sure: I do want to ensure Praful isn't completely stuck, so we will be experimenting with these ideas in a third-party package while we build consensus, and then perhaps dogfood the process with this package once consensus has been reached!

Again, go to the Forum and make your opinion known!

27 May 2026 5:00am GMT

26 May 2026

Django community aggregator: Community blog posts


Code linearization

You can find plenty of articles about design - where and how to use SQL, NoSQL, message queues, Redis, VMs, and so on. Almost nobody writes about tactics: the actual coding. It borders on style, but it isn't just style. This is the first article in a series on tactics I use day to day. Highly opinionated - I don't expect you to follow it. Look, chuckle, think about it, and use what you like.

26 May 2026 8:00pm GMT

22 May 2026

Django community aggregator: Community blog posts


Issue 338: Django 6.1 alpha 1 released

News

Django 6.1 alpha 1 released

Django 6.1 alpha 1 has been released, signaling the next round of framework updates headed your way. Plan a quick test run in a staging environment so you can catch compatibility issues early as 6.1 develops.

Wagtail CMS News

Wagtail accessibility statistics for GAAD 2026

Wagtail accessibility statistics for GAAD 2026 give a focused look at how well your CMS setup supports real accessibility needs. Use the figures to spot gaps and prioritize the most impactful improvements.

Updates to Django

Today, "Updates to Django" is presented by Pradhvan from Djangonaut Space! 🚀

Last week we had 16 pull requests merged into Django by 11 different contributors - including 2 first-time contributors!

Congratulations to somi and Kasey for having their first commits merged into Django - welcome on board! 🥳

This week's Django highlights: 🦄

- Deprecated QuerySet.select_related() with no arguments, along with the corresponding admin options that relied on this implicit form. (#36593)

- RedirectView now supports a preserve_request attribute, letting redirects keep the original HTTP method and body by returning 307 or 308 instead of 302 or 301. (#37062)

- Admin actions are now also shown on the object edit page, allowing bulk actions to be triggered directly from the change form. (#12090)

- Fixed Oracle compound-query compilation by clearing unnecessary ordering from combined query components in unions and ORDER BY wrappers. (#36938)

That's all for this week in Django development! 🐍🦄

Sponsored Link

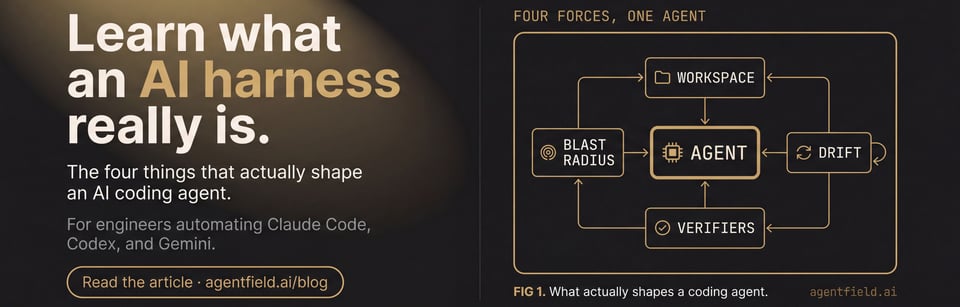

Middleware, but for AI agents

Django middleware composes request handlers. Harnesses do the same for AI agents - Claude Code, Codex, Gemini in one coordinated system. Learn what a harness actually is, why it's a new primitive, and how to engineer one that holds in production. Apache 2.0, open source.

Articles

My experience at PyCon US 2026

A first-person look at PyCon US 2026 with takeaways for developers who care about Python and the community around it. Expect practical impressions from talks and the conference vibe, not a generic recap.

PyCon US 2026 Recap

Will Vincent from PyCharm (and this newsletter!) shares seven days of talks, sprints, and hallway track conversations from this year's event.

My First PyConUS Experience

Jon Gould from Foxley Talent relates his first experience, takeaways, and comparisons to DjangoCons.

PostgreSQL 19 Beta: The Four Features You'll Actually Feel

PostgreSQL 19 Beta brings four changes highlighted for real-world impact, with a focus on what developers will actually notice. Expect a practical walkthrough rather than a long list of release notes.

Core Dispatch #4

Core Dispatch recaps a packed few weeks in the Python core world, including the arrival of Python 3.15 beta 1, free-threading improvements, PEP 788 landing in CPython, and a wave of new core developer activity.

Anything that could go wrong, will. The excuse is optional.

A thoughtful take on Murphy's Law in software engineering: resilient teams don't avoid risk or ignore it, they design systems assuming failure will happen and plan accordingly.

My PyCon US 2026

A chronological recap of PyCon US 2026 in Long Beach, with live notes ranging from the first AI track talk on AI-assisted contributions and maintainer load to security updates, community building, and Djangonaut Space. Expect practical takeaways about how AI affects review and conflict in open source, plus plenty of Django community moments including "Django on the Med."

Events

Organizing DjangoCon Europe 2026: The Afterthoughts | Blog with LOGIC

Find practical after-the-fact takeaways from organizing DjangoCon Europe 2026, focused on the details people usually only notice after the event. A useful read for anyone planning Django community events or sharpening their conference workflow.

Videos

Tech Hiring has got a FRAUD problem!

Tech hiring can attract fraud, from fake postings to misleading recruiting signals. Keep an eye on red flags in job listings and interview processes so you can spot scams early and protect candidates.

Podcasts

Django Chat #204: How France Ditched Microsoft with Samuel Paccoud

France's shift away from Microsoft is tied to decisions and experiences Samuel Paccoud discusses. The focus is on what prompted the move and what it meant operationally for organizations involved.

Django Job Board

Founding Engineer at MyDataValue

Junior Software Developer (Apprentice) at UCS Assist

PyPI Sustainability Engineer at Python Software Foundation

Projects

mliezun/caddy-snake

Caddy plugin to serve Python apps

AvaCodeSolutions/django-email-learning

An open source Django app for creating email-based learning platforms with IMAP integration and React frontend components.

ehmatthes/gh-profiler

Examine a GitHub user's profile, to help quickly decide how much to invest in their contributions. Was discussed by many maintainers at PyCon US sprints.

22 May 2026 2:00pm GMT

21 May 2026

Django community aggregator: Community blog posts


Utrecht (NL) Python meetup summaries

I made summaries at the 4th PyUtrecht meetup (in Nieuwegein, at Qstars this time).

Qstars IT and open source - Derk Weijers

Qstars IT hosted the meeting. It is an infra/programming/consultancy/training company that uses lots of Python.

They also love open source and try to sponsor where possible.

One of the things they are going to open source (next week) is a "cable thermal model", a calculation method to determine the temperature of underground electricity cables. The Netherlands has a lot of net congestion... So if you can have a better grid usage by calculating the real temperature of cables instead of using an estimated temperature, you might be able to increase the load on the cable without hitting the max temperature. Coupled with "measurement tiles" that actually monitor the temperature.

They built it for one of the three big electricity companies in the Netherlands and got permission to open source it so that the other companies can also use it. They hope it will have real impact.

He explained an open source project he started personally: "the space devs". Integrating rocket launch data and providing an API. Now it has five core developers (and got an invitation to the biggest space conference, two years ago!)

Some benefits from writing open source:

- You build your own portfolio.

- You can try new technologies. Always nice to have the skill to learn new things.

- You improve your communication skills (both sending and receiving).

- You can make your own decisions.

- You write in the open.

- Perhaps you help others with your work.

- You could be part of a community.

- It is your code.

How to start?

- Reach out to other communities.

- Read and improve documentation.

- Find good first issues.

- Be proactive.

- Don't be afraid to ask questions (and don't let negative comments discourage you).

When working on open source, make sure you take security seriously. People nowadays like to use supply chain attacks via open source software. So use 2FA and look at your deployment procedure.

Learning Python with Karel - EiEi Tun H

What is Karel (<https://github.com/alts/karel>)? A teaching tool/robot for learning programming. You need to steer a robot in an area and have it pick up or dump objects. And... in the meantime you learn how to use functions and loops.

Karel only has a turn_left() function. So if you want to have it turn right, it is handy to add a function for it:

def turn_right():
    turn_left()
    turn_left()
    turn_left()

Simple, but you have to learn it sometime!

In her experience, AI can help a lot when learning to code: it explains stuff to you like you're a five-year-old, and that's perfect.

If you want to play with Karel: https://compedu.stanford.edu/karel-reader/docs/python/en/ide.html

JSON freedom or chaos; how to trust your data - Bart Dorlandt

For this talk, I'm pointing at the PyGrunn summary I made three weeks ago. I liked the talk!

Practical software architecture for Python developers - Henk-Jan van Hasselaar

There are several levels of architecture: organization level, system level, application, and code.

Cohesion: "the degree to which the elements inside a module belong together". What does it mean? Working towards the same goal or function. Together means something like distance. When two functions are in separate libraries, they're not together. It is also important for cognitive load.

Coupling: loose coupling versus high coupling. You want loose coupling, so that changes in one module don't affect another module.

You don't really have to worry about coupling and cohesion in existing systems that don't need to be changed. But when you start changing or build something new: take coupling/cohesion into account.

Software architecture is a tradeoff. Separation of concerns is fine, but it creates layers and thus distance, for instance.

Python is one of the most difficult languages when it comes to clean coding and clean architecture. You're allowed to do so many dirty things! Typing isn't even mandatory...

He showed a simple REST API as an example. Database model + view. But when you change the database model, like a field name, that field name automatically changes in the API response. So your internal database structure is coupled to the function at the customer that consumes the API.

What you actually need to do is to have a better "contract". A domain model. In his example code, it was a Pydantic model with a fixed set of fields. A converter modifies the internal database model to the domain model.

You can also have services, generic pieces of code that work on domain models. And adapters to and from domain models, like converting domain models to csv.

Finding the balance is the software architect's job.

What is the least you should do as a software developer? At the very least, create a domain layer, including a validator.
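The domain layer described above can be sketched roughly as follows. The talk's example used a Pydantic model; this sketch substitutes standard-library dataclasses so it runs without extra dependencies. All names here (`CustomerRow`, `Customer`, `to_domain`, `to_csv`) and the field names are hypothetical and not taken from the speaker's code.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class CustomerRow:
    """Hypothetical internal database model; its field names may change freely."""
    id: int
    full_nm: str
    email_addr: str


@dataclass(frozen=True)
class Customer:
    """Domain model: the fixed set of fields that API consumers rely on."""
    id: int
    name: str
    email: str

    def __post_init__(self):
        # Minimal validator: reject values that are obviously not email addresses.
        if "@" not in self.email:
            raise ValueError(f"invalid email: {self.email!r}")


def to_domain(row: CustomerRow) -> Customer:
    """Converter: the only place that knows the database field names."""
    return Customer(id=row.id, name=row.full_nm, email=row.email_addr)


def to_csv(customers) -> str:
    """Adapter: turns domain models into CSV without touching database models."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["id", "name", "email"])
    for customer in customers:
        writer.writerow([customer.id, customer.name, customer.email])
    return buffer.getvalue()


row = CustomerRow(id=1, full_nm="Ada", email_addr="ada@example.com")
print(to_csv([to_domain(row)]))
```

If a database field is renamed, only `to_domain` needs to change; the API response and the CSV adapter keep working against the unchanged `Customer` contract.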

There was a question about how to do this with Django: it is hard. Django's models are everywhere. And you really need a clean domain layer...

21 May 2026 4:00am GMT

My PyCon US 2026

A timeline of my PyCon US 2026 journey, in Long Beach (US), told through the Mastodon posts I shared along the way.

21 May 2026 3:00am GMT

20 May 2026

Django community aggregator: Community blog posts


Weeknotes (2026 week 17)


I published the last entry near the beginning of March. I'm really starting to see a theme in my Weeknotes publishing schedule.

Releases since the first weeks of March

I'm trying out a longer-form version of those notes here than in the past. I think it's worth going into some detail and not just listing releases with half a sentence each.

feincms3-sites and feincms3-language-sites

I released updates to feincms3-sites and feincms3-language-sites fixing the same issue in both projects: When an HTTP client didn't strip the default ports :80 (for HTTP) or :443 (for HTTPS) from a request, finding the correct site would fail. Browsers generally strip the port already, but some other HTTP clients do not.
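The kind of normalization involved can be sketched like this. It is an illustrative stand-in only, not the code from either package; the helper name `strip_default_port` is hypothetical.

```python
# Default port suffix for each scheme.
DEFAULT_PORTS = {"http": ":80", "https": ":443"}


def strip_default_port(host: str, scheme: str) -> str:
    """Drop ':80' for HTTP or ':443' for HTTPS from a host value, if present."""
    suffix = DEFAULT_PORTS.get(scheme)
    if suffix and host.endswith(suffix):
        return host[: -len(suffix)]
    return host


print(strip_default_port("example.com:443", "https"))
print(strip_default_port("example.com:8080", "http"))
```

Normalizing the host before the site lookup means that `example.com` and `example.com:443` resolve to the same site over HTTPS, while non-default ports are left untouched.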

django-tree-queries

As I wrote elsewhere, I closed many issues in the repositories, mostly documentation issues but also some bugs. {% recursetree %} should now work properly and not cache old data anymore, using the primary key in .tree_fields() now raises an intelligible error, and I also fixed a bug with table quoting when using django-tree-queries with the not yet released Django 6.1+.

feincms3-cookiecontrol

feincms3-cookiecontrol not only offers a cookie consent banner (which actually supports only embedding tracking scripts when users give consent) but also a third-party content embedding functionality which allows allowlisting individual services.

The privacy policies of these services are now linked inline instead of with an ugly extra link. This reduces content inside the embed which helps on small screens.

Version 1.7 used a buggy trusted publishing workflow so I immediately published 1.7.1.

django-cabinet and django-prose-editor

django-cabinet can now be used as a media library directly inside django-prose-editor. I'm (ab)using the CKEditor 4 protocol for embedding, which uses window.opener.CKEDITOR.callFunction to send data back from the file manager popup into the editor. It feels icky but works nicely. This is only available if you're installing the alpha prereleases, but I'm already testing the functionality in production somewhere, so I feel quite good about it.

django-prose-editor now also ships brand new ClassLoom and StyleLoom extensions. Both extensions allow adding either classes or inline styles to text spans or nodes. In an ideal world we might not use something like this, but to make the editor more useful in the real world, editors need more flexibility. These two extensions provide that. I already mentioned ClassLoom in December, now it's actually available. I'm not completely sold on how they work yet, but both of them are already solving real issues.

Honorable mentions

django-debug-toolbar 6.3 has been released; I only contributed reviews during this cycle.

20 May 2026 5:00pm GMT

How France Ditched Microsoft - Samuel Paccoud

🔗 Links

- La Suite numérique on GitHub

- La Suite website

- Samuel on LinkedIn

- TechEmpower Benchmarks being sunsetted

📦 Projects

📚 Books

🎥 YouTube

🤝 Sponsor

This episode is brought to you by Six Feet Up, the Python, Django, and AI experts who solve hard software problems. Whether it's scaling an application, deriving insights from data, or getting results from AI, Six Feet Up helps you move forward faster.

See what's possible at https://sixfeetup.com/.

20 May 2026 3:00pm GMT

PyCon US 2026 Recap

Seven days of sponsor booth, talks, sprints, and hallway chats.

20 May 2026 11:56am GMT

15 May 2026

Django community aggregator: Community blog posts


Issue 337: Django Developers Survey 2026

Will and Jeff are at PyCon US in Long Beach, California this week. Drop by the Django Software Foundation booth or the JetBrains booth and say hello.

News

Django Developers Survey 2026

The Django Software Foundation is once again partnering with JetBrains to run the 2026 Django Developers Survey 📊 Help us better understand how Django is being used around the world and guide future technical and community decisions.

DSF member of the month - Bhuvnesh Sharma

Bhuvnesh has been a Django contributor since 2022 and was a Google Summer of Code (GSoC) participant in 2023 for Django. He is now a mentor and an admin organizer for GSoC for the Django organization, as well as the founder of Django Events Foundation India (DEFI) and the DjangoDay India conference.

Announcing the Google Summer of Code 2026 contributors for Django

Google Summer of Code 2026 contributors have been announced for Django, listing the developers who will be working on projects as part of the program. If you are following Django's next wave of community work, this is the roll-up of who's joining and what to watch for.

Releases

Python 3.14.5 is out!

Python 3.14.5 is now available, bringing the latest point release in the Python 3.14 line. If you maintain Django apps, use the update as your prompt to verify dependencies and run your test suite against 3.14.5 before rolling forward.

Updates to Django

Today, "Updates to Django" is presented by Johanan Oppong Amoateng from Djangonaut Space! 🚀

Last week we had 22 pull requests merged into Django by 13 different contributors - including 4 first-time contributors! Congratulations to Denny Biasiolli, Milad Zarour, MANAS MADESHIYA and Héctor Castillo for having their first commits merged into Django - welcome on board!

This week's Django highlights: 🦄

- Allowed max redirect URL length to be set on HttpResponseRedirect. (#36767)

- Added support for object-based form media stylesheet assets. (#37085)

- Deprecated SHA-1 default for salted_hmac() and base64_hmac() algorithm. (#37078)

Python Software Foundation

Python Software Foundation News: Announcing PSF Community Service Award Recipients!

Python Software Foundation has announced the recipients of its PSF Community Service Award. The update highlights people recognized for their contributions to the Python community.

Python Software Foundation News: Strategic Planning at the PSF

Python Software Foundation News covers the PSF's strategic planning efforts and the direction they are working toward. Expect a focus on how the foundation plans its priorities and activities moving forward.

Wagtail CMS News

Results of the 2026 Wagtail DX with AI survey

The 2026 Wagtail DX survey reports where teams are applying AI and what they want next from the platform. Use the findings to align your own Wagtail and AI experimentation with the issues practitioners are actually raising.

Our four contributors for Google Summer of Code 2026

Google Summer of Code 2026 is welcoming four contributors, highlighting the people behind the upcoming work. If you're tracking Django ecosystem activity, this is a quick way to see who's starting and what to watch for next.

Sponsored Link

Middleware, but for AI agents

Django middleware composes request handlers. Harnesses do the same for AI agents - Claude Code, Codex, Gemini in one coordinated system. Learn what a harness actually is, why it's a new primitive, and how to engineer one that holds in production. Apache 2.0, open source.

Articles

How to have a great first PyCon (updated for 2026)

Timeless advice from Trey Hunner on how to make the most out of PyCon US this week or any other technical conference.

Using Django Tasks in production · Better Simple

Production-ready Django task setups: what to change, what to watch, and how to keep background jobs reliable once you leave local dev. Useful guidance for deploying and operating task workers with fewer surprises.

Dealing with Dead Links (404s): 2026 Edition | Will Vincent

A practical guide to handling dead links in Django, focusing on what to do when a URL no longer exists and how to respond with clean, user-friendly 404 behavior. Expect guidance on keeping routing and error handling tidy as your site evolves.

Podcasts

Django Chat #203: Deploy on Day One - Calvin Hendryx-Parker

Calvin is the co-founder and CTO of the consultancy SixFeetUp. We discuss developer experience from day one, Kubernetes as a feature, real-world usage of AI and agentic tooling, typing in Python, the junior developer pipeline problem, and more. Also available in video format on YouTube.

Django Job Board

Founding Engineer at MyDataValue

Junior Software Developer (Apprentice) at UCS Assist

PyPI Sustainability Engineer at Python Software Foundation

Projects

abu-rayhan-alif/djangoSecurityHunter

A security and performance inspector for Django & DRF. Features static analysis, config checks, N+1 query detection, and SARIF support for GitHub Code Scanning.

janraasch/dsd-vps-kamal

A Django Simple Deploy plugin for configuring & automating deployments of your Django project to any VPS using Kamal.

15 May 2026 2:00pm GMT

13 May 2026

Django community aggregator: Community blog posts


Deploy on Day One - Calvin Hendryx-Parker

🔗 Links

- SixFeetUp Careers

- getscaf, copier, tilt

- A CTOs Guide to AI Coding Assistants

- kind, nix, spec-kit

- Figma make

📦 Projects

📚 Books

- London Review of Books

- Big Panda & Tiny Dragon by James Norbury

- Universal Principles of Typography by Elliot Jay Stocks

🎥 YouTube

🤝 Sponsor

This episode is brought to you by Six Feet Up, the Python, Django, and AI experts who solve hard software problems. Whether it's scaling an application, deriving insights from data, or getting results from AI, Six Feet Up helps you move forward faster.

See what's possible at https://sixfeetup.com/.

13 May 2026 3:00pm GMT