01 Jun 2026

Planet Lisp

Planet Lisp

Joe Marshall: Regression

Last year I wrote some Lisp related AI apps. There was a syntax highlighter that used the LLM to determine how to colorize and highlight syntax, and a prompt refiner that takes a wimpy LLM prompt and creates more elaborate prompt from them.

I took the apps down last week. They were `vibe coded' and therefore approximate and had bugs (but that's to be expected), but they had a security hole where you could hijack the LLM processing with your own prompt turning my app into an open relay using my API key. Last week I discovered that my AI spend on video creation was becoming serious. This is odd because I never create AI video. It turned out that my app was being hijacked by a proxy in Luxembourg and was generating videos on my dime.

So I shut down the apps. I knew they had the potential of being abused, and I was willing to tolerate a small amount of abuse, but it didn't occur to me that syntax highlighter could be hijacked to generate gigabytes of video at my expense. Future applications will be careful to obtain the API key from the user.

01 Jun 2026 7:00am GMT

31 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: Meta-object Protocol

Metaobject Protocol (MOP) Implementation in CLRHack

The Metaobject Protocol in CLRHack is a high-performance implementation of the Common Lisp Object System (CLOS) integrated into the .NET 8.0 Common Language Runtime (CLR). It provides a complete meta-compilation pipeline that bridges the gap between dynamic Lisp semantics and the static CIL (Common Intermediate Language) execution model.

Core Architecture

The MOP is implemented through three primary layers:

- The Metaobject Hierarchy (C#): A set of foundational classes in

LispBaserepresenting classes, methods, generic functions, and slot definitions. - The Runtime Engine (

MopRuntime): A centralized orchestrator that manages class finalization, method combination, dispatch caching, and instance allocation. - The Compiler Bridge (Lisp): Transformations in

ast.lispthat translate high-level CLOS forms (defclass,defmethod) into optimized runtime calls.

Instance Representation

Because the CLR type system is strictly single-inheritance and statically defined, CLRHack decouples Lisp-level inheritance from C# inheritance. All CLOS instances are represented by the StandardObjectInstance class, which contains:

- A reference to its

ClassMetaobject. - A private

object[] storagearray for instance slots, indexed by locations calculated during class finalization.

The Dispatch Pipeline

Generic function invocation is the most complex part of the implementation. When a generic function is called:

- Cache Lookup: The

DiscriminatingFunctionfirst checks a thread-safedispatchCacheusing anInvocationCacheKey(a stack-allocatedstruct) to find a previously computed effective method. - Applicability & Precedence: If the cache misses, the runtime computes all applicable methods and sorts them based on specializer specificity and the Class Precedence List (CPL).

- Method Combination: The

ComputeEffectiveMethodlogic builds a nested execution chain following the Standard Method Combination rules::aroundmethods are called first, withcall-next-methodprogressing to the next around method or the main chain.- The main chain executes all

:beforemethods, the primary method, and finally all:aftermethods in reverse order.

- Fast Invocation: The resulting effective method is compiled into a

Func<object[], object>that uses direct delegate invocation to minimize overhead.

Challenges and Solutions

1. Thread-Safe Non-Local Exits (call-next-method)

Challenge: call-next-method and next-method-p require access to the current invocation's state (the remaining methods and original arguments). Passing this state through every function call would break compatibility with standard Lisp function signatures.

Solution: CLRHack utilizes [ThreadStatic] fields in MopRuntime to store the currentNextMethods and currentArguments. This ensures that even in highly concurrent environments (like a web server), each OS thread has its own isolated invocation context, allowing call-next-method to function correctly without state leakage.

2. Forward References and Lazy Finalization

Challenge: Lisp allows classes to refer to superclasses that haven't been defined yet. The runtime must handle these "zombie" classes without crashing the JIT compiler.

Solution: The system implements a ForwardReferencedClassMetaobject. When a class is defined, it is automatically finalized (computing its CPL and slot layout). If a superclass is missing, a forward reference is created. The EnsureFinalized protocol ensures that inheritance is resolved and slot locations are assigned the moment the class is first instantiated or used in dispatch.

3. Performance Overhead of the "MOP Bridge"

Challenge: A naive implementation of slot-value or generic dispatch using C# reflection or linear searches is orders of magnitude slower than native C# member access.

Solution: Three distinct optimizations were applied:

- O(1) Slot Access: Each

ClassMetaobjectmaintains aSlotDictionary. Slot names are mapped to physical array indices during finalization, allowingslot-valueto perform a direct array access after a single dictionary lookup. - Compiler Primitives: The compiler identifies

SLOT-VALUEandMAKE-INSTANCEcalls and emits direct CILcallinstructions to optimizedLisp.MopRuntimemethods, bypassing the generalFuncallpath. - Zero-Allocation Cache Hits: By making

InvocationCacheKeyareadonly structand avoiding the cloning of the argument array during cache probes, the hot-path for generic function dispatch generates zero garbage for the .NET Collector.

4. Bootstrapping the COMMON-LISP Package

Challenge: Core CLOS functions like make-instance must be available as symbols in the COMMON-LISP package before user code runs, but they rely on the MOP runtime being fully initialized.

Solution: A MopRuntime.Initialize() method is injected into the entry point (Main) of every generated assembly. This method interns the necessary symbols and binds them to GenericFunctionClosureAdapter objects, ensuring that the MOP is "alive" before the first line of Lisp code executes.

Vibe coding the MOP basically involved feeding chapters 4 and 5 of the Art of the Meta-Object Protocol into the LLM and telling it to make an implementation plan. It came up with a twenty-step plan to bootstrap CLOS. I then spent the rest of the day instructing an agent to take on each task of the twenty-step plan in sequential order. At the end of the day, I had a working MOP

This is the end of my series of posts on CLRHack.

31 May 2026 7:00am GMT

30 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: signal and error

Implementation of SIGNAL and ERROR in CLRHack

In CLRHack, the condition signaling system is implemented in the Lisp.HandlerControl class within the LispBase library. It leverages .NET's [ThreadStatic] storage to maintain a per-thread dynamic stack of active condition handlers.

SIGNAL Implementation

The Signal(object condition) method performs the following logic:

- Retrieval: It fetches the

activeHandlerslist for the current thread. This list is a chain of[LispBase]Lisp.Handlerobjects maintained byhandler-bind. - Iteration: It iterates linearly through the list from the most recently bound handler to the oldest.

- Type Matching: For each handler, it calls

IsType(condition, handler.ConditionType).- If the condition is a symbol, it checks for symbol equality (supporting simple symbol-based conditions).

- If the condition is a .NET object, it checks if the handler's type is assignable from the condition's runtime type (supporting interop with system exceptions).

- It treats the symbols

TorEXCEPTIONas catch-all types.

- Handler Invocation: If a match is found:

- Recursive Signal Protection: Before calling the handler function, the current handler list is temporarily shadowed.

activeHandlersis set tocell.rest(the handlers bound outside the current one). This ensures that if the handler itself callssignal, it won't trigger itself recursively. - Execution: The handler's

Closureis invoked with the condition object as its argument. - Restoration: A

finallyblock ensures the originalactiveHandlerslist is restored if the handler returns normally.

ERROR Implementation

The

Error(object condition)method build uponSignal:- Signaling Pass: It first invokes

Signal(condition). If a handler performs a non-local exit (e.g., viahandler-case), theErrormethod never returns. - Debugger Entry: If

Signalreturns normally (meaning all handlers declined),ErrorcallsEnterDebugger(condition). - Interactive Debugging: The debugger:

- Prints the condition and a list of available restarts (retrieved via

RestartControl.GetActiveRestarts()). - Provides a prompt for the user to select a restart, launch the system-level debugger (Visual Studio/Rider), or abort.

- If a restart is selected, it is invoked interactively (potentially gathering arguments from the user).

- Prints the condition and a list of available restarts (retrieved via

- Final Fallback: If the debugger is exited without invoking a restart,

Errorthrows a C#Exceptionto ensure that execution does not continue on an invalid path.

Notable Implementation Decisions and Edge Cases

- Recursive Signal Protection: Before calling the handler function, the current handler list is temporarily shadowed.

- Handler Shadowing: The decision to pop the handler list during invocation is critical for maintaining Common Lisp semantics. It prevents infinite loops and ensures that "outer" handlers can handle errors raised within "inner" handlers.

- Unified Exception Model: CLRHack attempts to unify Lisp conditions and .NET exceptions.

IsTypeallows Lisp handlers to catch C# exceptions by their class name or Type object. - Thread Isolation: By using

[ThreadStatic]foractiveHandlers, CLRHack ensures that condition signaling is thread-safe. One thread signaling an error will not interfere with the handler state of another thread. - Debugger Capability: The

SYSTEM-DEBUGGERoption inEnterDebuggeris a bridge to the underlying .NET environment, allowing developers to use professional IDE tools to inspect the state of the Lisp VM when an unhandled error occurs.

signal and error complete the Common Lisp condition system implementation for CLRHack

30 May 2026 7:00am GMT

29 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: handler-bind and handler-case

In the CLRHack compiler, handler-bind is a primitive form used to register condition handlers in the dynamic environment. It operates by managing a thread-local list of active handler objects, ensuring that condition signaling follows the standard Common Lisp search and execution rules.

Handling of handler-bind

When the compiler processes a handler-bind form, it generates CIL code that performs the following steps:

- Capture Previous State: It calls

Lisp.HandlerControl::GetActiveHandlers()to retrieve the current list of active handlers and stores it in a frame-local variable. - Construct New List: For each binding, it evaluates the condition type and the handler function (which is typically a closure). It instantiates a new

[LispBase]Lisp.Handlerobject and conses it onto the current handler list. - Install New State: It calls

Lisp.HandlerControl::SetActiveHandlers(new_list)to update the dynamic environment for the current thread. - Protected Execution: The body of the

handler-bindis wrapped in a CIL.tryblock. - Restoration: A

finallyblock is emitted that callsSetActiveHandlerswith the saved list. This ensures that handlers are properly uninstalled, regardless of whether the body completes normally, signals an error, or performs a non-local exit.

Lexical Non-Local Exits

Handlers in Common Lisp are executed in the dynamic environment of the signaller but have lexical access to the environment where they were defined. In CLRHack, if a handler function performs a non-local exit (such as a throw or return-from), the compiler utilizes its exception-based jump mechanism:

- If the exit is a

throw, it uses the standardCatchThrowExceptionmechanism. - If the exit is a

return-fromto a block outside the handler closure, the compiler identifies this as a non-local exit duringanalyze-environment. It compiles thereturn-frominto athrowof aBlockExitException, which is subsequently caught by thetry/catchframe established by the targetblock.

Handler Search

The handler search is performed at runtime by the signal or error functions. These functions retrieve the active handlers list via HandlerControl.GetActiveHandlers() and iterate through them. For each handler, the runtime checks if the signaled condition is of the type (or a subtype of the type) the handler was registered for. If a match is found, the handler function is invoked. If the handler returns normally (declines), the search continues with the next applicable handler.

Dynamic Tags

The handler-bind implementation itself relies on the dynamic state of the thread-local activeHandlers list. However, when used in conjunction with handler-case, unique dynamic tags (typically fresh ListCell objects) are generated. These tags are used as the "target" for the throw performed by the handler, ensuring that the control flow returns exactly to the correct handler-case frame and doesn't conflict with other active handler or catch frames.

handler-case as an Extension of handler-bind

In CLRHack, handler-case is not a primitive but a macro that expands into a combination of block, catch, and handler-bind. It extends handler-bind by providing a mechanism to automatically exit the signaling context and execute a specific branch of code based on the condition caught.

The implementation details of the expansion are as follows:

- Exit Block: The entire form is wrapped in a

blockwith a unique exit tag to allow the normal path to return immediately upon completion of the protected expression. - Dynamic Setup: A unique dynamic tag is created for the

catchframe. Local variables are established to store the captured condition and a unique ID identifying which clause was triggered. - The Binding: A

handler-bindis generated where each handler function is a closure that, when called:- Saves the signaled condition into the local

condition-var. - Sets the

id-varto a unique GENSYM representing that specific clause. - Performs a

throwto the dynamic tag.

- Saves the signaled condition into the local

- The Catch and Dispatch: A

catchblock surrounds the protected expression. If a handler performs thethrow, thecatchreturns, and acondstatement (the dispatcher) checks theid-var. It then executes the body of the matchinghandler-caseclause with the condition variable bound to the clause's parameter.

29 May 2026 7:00am GMT

28 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: restarts

In the CLRHack compiler, restart-bind is a primitive form that manages the dynamic lifecycle of Common Lisp restarts by manipulating a thread-local stack of active restart objects.

Handling of restart-bind

When the compiler encounters a restart-bind form, it generates CIL code that performs the following steps:

- Capture Previous State: It calls

Lisp.RestartControl::GetActiveRestarts()to retrieve the current list of active restarts and stores it in a frame-local variable. - Construct New List: For each binding, it evaluates the restart name, handler function, and optional keyword arguments (

:report-function,:interactive-function,:test-function). It then instantiates a new[LispBase]Lisp.Restartobject and conses it onto the existing list. - Install New State: It calls

Lisp.RestartControl::SetActiveRestarts(new_list)to update the dynamic environment. - Protected Execution: The body of the

restart-bindis wrapped in a CIL.tryblock. - Restoration: A

finallyblock is emitted that restores the previously saved restart list usingSetActiveRestarts, ensuring that restarts are properly uninstalled even if the body performs a non-local exit.

Lexical Non-Local Exits

The CLRHack compiler supports lexical non-local exits (e.g., return-from or go) through an exception-based mechanism. During the analyze-environment pass, the compiler identifies if a return-from target block is "non-local" (i.e., the return occurs within a nested closure). If so:

- The target block is wrapped in a

try/catchfor[LispBase]Lisp.BlockExitException. - The block is assigned a unique string ID.

- The return-from form is compiled into a

throwof aBlockExitException, which carries the target ID, the return value, and a captured array of multiple return values (retrieved viaLisp.Values::CaptureValues()). - The

catchhandler verifies the target ID. If it matches, it restores any captured multiple values and resumes normal execution; otherwise, it rethrows the exception.

Restart Search

The search for an applicable restart is handled at runtime by Lisp.RestartControl::FindRestart. It performs a linear search through the current thread's activeRestarts list (stored in a [ThreadStatic] field). It can accept either a symbol name or a Restart object itself. If a name is provided, the search respects shadowing, returning the innermost (most recently bound) restart with that name.

Dynamic Tags

Dynamic tags are required for the catch and throw forms used in non-local control flow. In CLRHack, a dynamic tag is simply a fresh object (typically a ListCell or a new System.Object) used as a unique token. This ensures that a throw only matches the specific catch frame it was intended for, avoiding collisions between different invocations of the same function or different restart-case blocks.

restart-case as an Extension of restart-bind

In CLRHack, restart-case is implemented as a macro that expands into a combination of block, catch, and restart-bind. It extends the basic binding functionality by providing a built-in mechanism to jump back to the site of the restart-case when a restart is invoked.

The implementation details are as follows:

- Exit Block: The entire expansion is wrapped in a

(block exit_tag ...)to allow normal completion of the expression. - Dynamic Tag: A unique dynamic tag is created (e.g.,

(let ((tag (list nil))) ...)). - Catch Frame: A

(catch tag ...)is established around therestart-bindand the expression. - Binding: The

restart-bindcreates restarts whose handler functions are closures. When invoked, these closures capture their arguments into local variables, set a unique clause ID, and thenthrowto the dynamic tag. - Dispatch: When the

throwis caught, therestart-casebody executes acondorcasestatement. This dispatcher checks the clause ID set by the handler and executes the corresponding forms provided in therestart-caseclause, eventually returning the result from theexit_tagblock.

28 May 2026 7:00am GMT

27 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: unwind-protect and catch-throw

Handling of unwind-protect

The CLRHack compiler maps Lisp unwind-protect semantics directly onto the Structured Exception Handling (SEH) infrastructure of the .NET Common Language Runtime (CLR). Specifically, it utilizes the try...finally construct provided by the Common Intermediate Language (CIL).

Lisp semantics require that the cleanup forms in an unwind-protect block be executed regardless of how control leaves the protected form-whether via normal return, a non-local throw, or a lexical exit like return-from. The CLR guarantees that a finally block will execute during stack unwinding, which is exactly the hook required for Lisp. The implementation details are as follows:

- Protected Form: The compiler generates the code for the protected form inside a CIL

tryblock. Upon successful completion, the primary return value is stored in a local variable, and aleaveinstruction is used to exit thetryblock, which automatically triggers the transition to thefinallyblock. - Side-Channel Preservation: A unique challenge in Lisp is that

unwind-protectmust return the values of the protected form, but cleanup forms may themselves perform operations that alter the Multiple Return Value (MRV) side-channel. CLRHack exploits method-local variables to save theReturnCountand the contents ofValue1throughValue63at the very beginning of thefinallyblock and restore them at the very end. - Unwinding: If a

throwor other exception occurs within thetryblock, the CLR stack walker identifies thefinallyblock and executes it before propagating the exception further. This ensures Lisp's "cleanup guarantee" is maintained even during catastrophic or non-local control transfers.

Handling of catch and throw

Lisp's catch and throw are implemented as a Dynamic Non-Local Exit system built on top of .NET's exception propagation mechanism. While CLR exceptions are typically filtered by type, Lisp requires filtering by a dynamic "tag" object (compared via eq).

The throw Mechanism

When a (throw tag value) is evaluated, CLRHack does not simply perform a jump. Instead, it performs the following steps:

- Evaluates the

tagand the primaryvalue. - Captures the current state of the MRV side-channel into an

object[]. - Instantiates a specialized exception class:

[LispBase]Lisp.CatchThrowException. This object acts as a carrier for the tag, the primary value, and the captured MRV array. - Executes the CIL

throwinstruction. This initiates the CLR's SEH stack walk.

The catch Mechanism

The (catch tag body) form is compiled into a try...catch block where the catch handler specifically targets CatchThrowException:

- Tag Setup: The

catchtag is evaluated and stored in a method-local variable. - Body Execution: The body forms are executed within a

tryblock. - The Catch Handler: When a

CatchThrowExceptionis intercepted, the handler performs a "Dynamic Filter":- It extracts the tag from the exception object and compares it to the local

catchtag usingSystem.Object.Equals(simulating Lisp'seqfor reference types). - Match: If the tags match, the handler "claims" the exception. It extracts the primary value and the MRV array from the exception, restores them to the thread-local side-channel, and resumes normal execution after the

catchblock. - Mismatch: If the tags do not match, the handler executes the CIL

rethrowinstruction. This allows the exception to continue up the stack to find a matchingcatchtag in a higher frame.

- It extracts the tag from the exception object and compares it to the local

Exploiting SEH for Lisp Semantics

CLRHack exploits the CLR's SEH in three fundamental ways to bridge the gap between .NET and Lisp:

- Automatic Stack Unwinding: By using

throwandtry...catch, the compiler delegates the complex task of cleaning up stack frames, registers, and intermediate states to the highly optimized .NET runtime. - Guaranteed Cleanup: The

finallyblock is the "silicon reality" of Lisp'sunwind-protect. The CLR ensures it runs even if an exception is re-thrown multiple times or if a thread is being terminated. - Payload-Heavy Exceptions: Unlike standard .NET exceptions which often carry only metadata,

CatchThrowExceptionis exploited as a transport mechanism. It carries the entire "return state" of a Lisp expression (primary value + MRV side-channel) across an arbitrary number of stack frames, allowing athrowto behave exactly like a multi-valued return to a dynamic point.

27 May 2026 7:00am GMT

TurtleWare: A brief note about slot access cost in Common Lisp

Common Lisp is renowned for its excellent object system CLOS. Its implementation is often accompanied by the Metaobject Protocol that, while it is not part of the standard, allows programmers to customize the system underpinnings in numerous interesting ways. This level of customization doesn't come without a cost - some CLOS code paths will be slower compared to open-coding equivalent solutions without the use of standard objects.

The purpose of this blog post is to draw an intuition of differences between structure objects and standard objects when it comes to accessing their slots. From now on I'm going to refer to structure objects as structures, and standard objects as instances.

We could imagine a structure is represented in memory as a tuple (CLASS SLOTS), while an instance is represented as a tuple (CLASS STAMP SLOTS). Modifying the structure class has undefined behavior, while the instance's class may change. This is why the instance needs to track whether it is up-to-date or obsolete. In our simple scheme that information is represented by a stamp that represents the class generation.

Tracking whether the instance is obsolete is important, because the memory layout of slots may change - they may be deleted, added, or moved to different positions. This is convenient for long-running programs without downtime, for incremental development and for image-based workflows - the program may be modified at any time to account for changing requirements, without recompiling it from scratch.

But this doesn't come without a downside. The implementation may conformingly assume that structure accessors won't ever change and therefore they can be inlined. In this case, structure access is a simple memory reference.

(declaim (inline structure-reader-a))

(defun structure-reader-a (object)

(svref (%slots object) 3))

On the other hand, this can't be assumed for objects, as they must be checked for obsolescence (at the very least), and because readers are more generic functions - another level of flexibility. Inlining generic functions is hard because new methods may be added at runtime and the effective method can change. Moreover, there may be different classes that have same reader names, so we need to include a piece of code that uses the correct class layout for an instance.

This is why calling instance readers involves:

- calling a function (can't be inlined)

- finding the memory layout (dispatch)

- verifying whether the instance is up-to-date

That is exemplified by the following pseudocode that ignores other generic function intrinsics. Depending on the implementation of generic functions, the test for obsolete instances may be evaded when instances are not obsolete.

(declaim (notinline instance-reader-a))

(define-reader-function instance-reader-a (object)

(unless (%up-to-date-p object)

;; Among other things updates indexes for memory accesses.

;; This is a slow path.

(%recompile-reader-function #'instance-reader-a)

(return-from instance-reader-a (instance-reader-a object)))

(typecase object

(standard-class-a (svref (%slots object) 3))

(standard-class-b (svref (%slots object) 4))

(custom-class-c (slot-value object 'a))

(custom-class-d (slot-value object 'a))

(otherwise (no-applicable-method #'instance-reader-a object))))

All this is assuming that we're dealing with standard readers. Using the metaobject protocol it is possible to store slot values anywhere - most notably, not in a vector bundled with the instance - or to add additional preprocessing. I'm not going to touch on MOP much here; this is just to signify that standard readers for standard classes may directly access the slot vector.

At minimum, assuming a single reader and a clever dispatch algorithm:

(declaim (notinline instance-reader-a))

(define-reader-function instance-reader-a (object)

(if (eql (stamp object) 42)

(svref (%slots object) 3)

(if (%up-to-date-p object)

(no-applicable-method #'instance-reader-a object)

(progn

(%recompile-reader-function #'instance-reader-a)

(return-from instance-reader-a (instance-reader-a object))))))

In other words, comparing structure access with instance readers is comparing apples to oranges, because the former is a memory access, while the latter is a function call.

SLOT-VALUE will be even slower, because this function is a trampoline to a more involved SLOT-VALUE-USING-CLASS, and to do that we need to:

- read the object class

- find the slot definition in the class

- invoke a generic function SLOT-VALUE-USING-CLASS

The generic function SLOT-VALUE-USING-CLASS may be similar to the reader defined above, with the caveat that it has more arguments to dispatch on (so the dispatch procedure may be more involved). In any case, it is at least as slow as the optimal reader defined above (a single reader for the standard class).

(defun slot-value (object slot-name)

(let* ((class (class-of object))

(slots (mop:class-slots class))

(slot (find slot-name slots :key #'mop:slot-definition-name)))

(mop:slot-value-using-class class object slot)))

Tim Bradshaw recently made a blog post that claims that instance slot access is around 38x slower than structure access, but he compares inlined memory access to generic function dispatch. A fair comparison would use the operator STANDARD-INSTANCE-ACCESS.

The metaobject protocol defines MOP:STANDARD-INSTANCE-ACCESS, an optimized way to access instance slots that does not incur the overhead associated with dispatching generic functions. This function may be inlined and is similar to structure object accessors. A possible definition would look like this:

(declare (inline mop:standard-instance-access))

(defun mop:standard-instance-access (object location)

(svref (%slots object) location))

The argument LOCATION is technically an opaque object, but for illustration purposes we assume that it is an index (it usually is!). Its value may be read using the function SLOT-DEFINITION-LOCATION.

Let's dig into benchmarks! We will measure access time to slots in equivalent structure and instance, each containing ten untyped slots initialized with fixnums.

(defpackage "FAR-FROM-MOP"

(:import-from #+ccl "CCL"

#+ecl "MOP"

#+lispworks "CLOS"

#+sbcl "SB-MOP"

#-(or ccl ecl lispworks sbcl) "MOP"

"FINALIZE-INHERITANCE"

"CLASS-SLOTS"

"SLOT-DEFINITION-LOCATION"

"SLOT-DEFINITION-NAME"

"STANDARD-INSTANCE-ACCESS"

#+lispworks "FAST-STANDARD-INSTANCE-ACCESS")

(:export "FINALIZE-INHERITANCE" "CLASS-SLOTS" "SLOT-DEFINITION-LOCATION"

"SLOT-DEFINITION-NAME" "STANDARD-INSTANCE-ACCESS"

#+lispworks "FAST-STANDARD-INSTANCE-ACCESS"))

(defpackage "EU.TURTLEWARE.SLOT-BENCH"

(:use "CL")

(:local-nicknames ("MOP" "FAR-FROM-MOP")))

(in-package "EU.TURTLEWARE.SLOT-BENCH")

(declaim (optimize (speed 3) (safety 0) (debug 0)))

(eval-when (:compile-toplevel :load-toplevel :execute)

(defclass a ()

((a :initform (random 10) :reader a-a)

(b :initform (random 10) :reader a-b)

(c :initform (random 10) :reader a-c)

(d :initform (random 10) :reader a-d)

(e :initform (random 10) :reader a-e)

(f :initform (random 10) :reader a-f)

(g :initform (random 10) :reader a-g)

(h :initform (random 10) :reader a-h)

(i :initform (random 10) :reader a-i)

(j :initform (random 10) :reader a-j)))

(defstruct b

(a (random 10)) (b (random 10)) (c (random 10)) (d (random 10)) (e (random 10))

(f (random 10)) (g (random 10)) (h (random 10)) (i (random 10)) (j (random 10)))

(defparameter *o1* (make-instance 'a))

(defparameter *o2* (make-b))

(defparameter *locations*

(mapcar (lambda (slot-name)

(let ((class (find-class 'a)))

(mop:finalize-inheritance class)

(mop:slot-definition-location

(find slot-name (mop:class-slots class)

:key #'mop:slot-definition-name))))

'(a b c d e f g h i j))))

We will measure four slot reading patterns:

- structure: structure reader

- instance : reader,

SLOT-VALUEandMOP:STANDARD-INSTANCE-ACCESS

Moreover, to put some pressure on a hypothesized method cache, we will randomize access to slots. The macro expand-body generates consecutive access forms:

(defmacro expand-body (type n-access)

(flet ((random-a () (nth (random 10) '(a-a a-b a-c a-d a-e a-f a-g a-h a-i a-j)))

(random-b () (nth (random 10) '(b-a b-b b-c b-d b-e b-f b-g b-h b-i b-j)))

(random-s () (nth (random 10) '(a b c d e f g h i j)))

(random-l () (nth (random 10) *locations*)))

(ecase type

(:reader

`(progn

,@(loop repeat n-access

for read = `(,(random-a) object)

collect `(incf count (the fixnum ,read)))))

(:slot-value

`(progn

,@(loop repeat n-access

for read = `(slot-value object ',(random-s))

collect `(incf count (the fixnum ,read)))))

(:instance-access

`(progn

,@(loop repeat n-access

for read = #+lispworks `(mop:fast-standard-instance-access object ',(random-l))

#-lispworks `(mop:standard-instance-access object ',(random-l))

collect `(incf count (the fixnum ,read)))))

(:structure-access

`(progn

,@(loop repeat n-access

for read = `(,(random-b) object)

collect `(incf count (the fixnum ,read))))))))

Now our "benchmark tool" and the tests. It is a simple measurement that compares internal real times before and after the computation.

(defmacro do-bench (() &body body)

`(let ((now (get-internal-real-time))

(cnt (progn ,@body)))

(values (- (get-internal-real-time) now) cnt)))

(macrolet ((frob (name object access-type)

`(defun ,name (n &aux (object ,object))

(declare (fixnum n)

(optimize (speed 3) (safety 0) (debug 0)))

(do-bench ()

(let ((count 0))

(declare (fixnum count))

(dotimes (v n count)

(expand-body ,access-type 100)))))))

(frob test-object-v1 *o1* :reader)

(frob test-object-v2 *o1* :slot-value)

(frob test-object-v3 *o1* :instance-access)

(frob test-object-v4 *o2* :structure-access))

(defun test-batch (n)

(list (test-object-v1 n)

(test-object-v2 n)

(test-object-v3 n)

(test-object-v4 n)))

(defun do-benchmarks ()

(list* (list (lisp-implementation-type)

(lisp-implementation-version)

(machine-type)

internal-time-units-per-second)

(loop for e from 17 upto 26

for n = (expt 2 e)

collect (let (b)

(format t "... (expt 2 ~a):~%" e)

(setf b (test-batch n))

(format t "~a~%" b)

b))))

I've run these tests on four implementations. This table presents ratios of the access pattern compared to the best result. Absolute timings are not included.

| Implementation | reader / best | svalue / best | access / best | struct / best |

|---|---|---|---|---|

| CCL 1.12.2 | 17 | 12 | 2 | 1 |

| ECL 26.5.5 | 616 | 719 | 1 | 175 |

| LispWorks 8.1.2 | 22 | 79 | 1 | 1 |

| SBCL 2.4.2 | 10 | 9 | 1 | 1 |

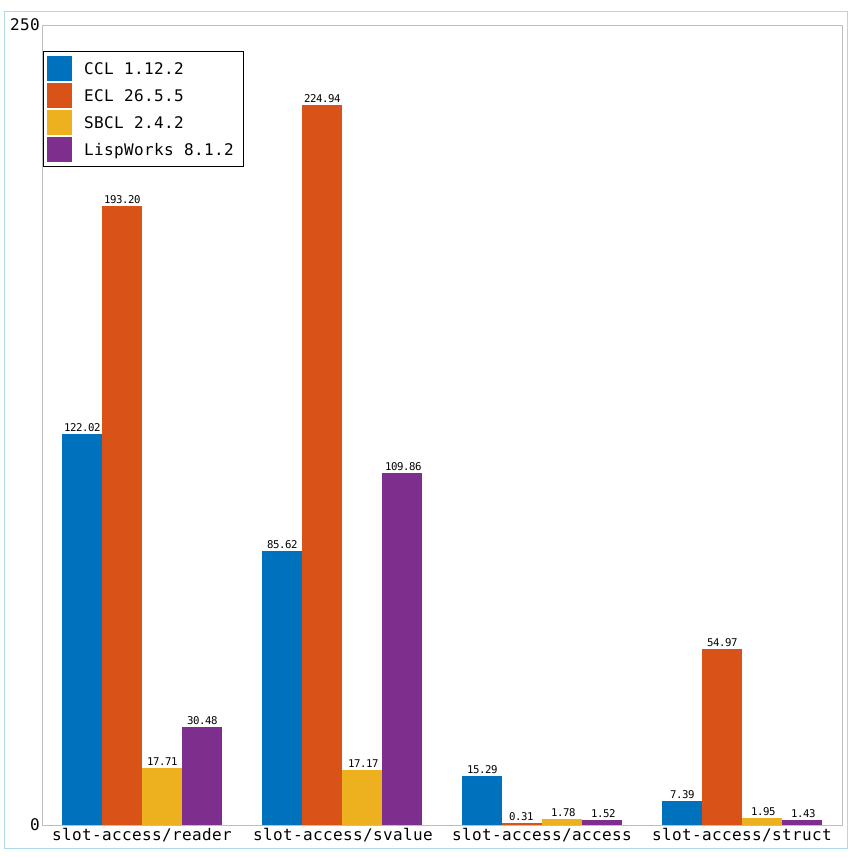

Edit: I've been asked a few times for a comparison between implementations, so I'm also including a bar chart comparing absolute timings between them:

Y-axis is in seconds and each bar represents 2^26 x 100 slot accesses in randomized order.

Conclusions:

Accessing slots using generic functions is indeed slower than a single memory access. This is because we can't inline these functions, and we must take care of many possibilities - most notably dispatching arguments of different classes and redefinitions of both the instance class and the reader generic function. All this cost buys us extensibility and runtime flexibility of the program.

Readers, under certain circumstances, can be better optimized than SLOT-VALUE, because they don't have to go through another function and access class slot definition. CCL and SBCL don't exploit this optimization opportunity.

Instance memory access and structure memory access times are roughly the same on SBCL and LispWorks, while instance access is two times slower on CCL.

ECL does a peculiar thing where structure readers are not inlined for some reason. That needs investigating, but hey, instance access is 175x faster ;-)! Instance access is also abnormally fast compared to other imlpementations and that also begs for investigation.

Notes:

To avoid external dependencies, I've defined a very basic time measurement and used MOP operators directly defined by a few hand-picked implementations. For more complete solutions look into "trivial-benchmark" by Yukari Hafner and "closer-mop" by Pascal Costanza.

Lispworks' CLOS::STANDARD-INSTANCE-ACCESS does not conform to MOP specification and errors when supplied with the slot location (it expects the slot name). That severely impacts the performance of instance access. The correct function to call is, for some reason, CLOS::FAST-STANDARD-INSTANCE-ACCESS.

ECL performance is poor in comparison, but I have good news! I'm implementing Fast Generic Function Dispatch algorithm and it will get better.

Somewhat a point of interest, but some implementations specialize slot-value-using-class and other CLOS protocols to structure classes too.

Plots were generated with Polyclot, work-in-progress McCLIM implementation of Grammar for Graphics.

I'd like to thank modula t. for reviewing this post and suggesting improvements.

27 May 2026 12:00am GMT

26 May 2026

Planet Lisp

Planet Lisp

Joe Marshall: CLRHack: Multiple return values

Multiple Return Value Implementation in CLRHack

The CLRHack compiler implements Multiple Return Values (MRV) by extending the single-value limitation of the .NET Common Intermediate Language (CIL) stack through a thread-local side-channel. This allows Lisp forms to communicate multiple values (up to 64) across function boundaries.

1. The Side-Channel Storage

Because a CIL method can only return a single object on the stack, CLRHack utilizes a static class [LispBase]Lisp.Values. This class contains [ThreadStatic] fields that act as a secondary communication channel:

- Primary Value: Always resides on the CIL evaluation stack.

ReturnCount: Anint32field indicating the total number of values returned (including the primary one).Value1throughValue63: Object fields that store the second through sixty-fourth return values.

2. Producing Multiple Values (The Staging Logic)

To prevent corruption during evaluation, the values form uses a Stage-and-Commit strategy. This is necessary because the side-channel is global to the thread; if a sub-expression inside a values form itself returns multiple values, it would overwrite the global fields before the outer values form is finished.

The compilation process for (values form1 form2 ... formN) follows these steps:

- Evaluation: Each form is evaluated in order.

- Local Staging: The result of

form1is kept on the stack. The results ofform2throughformNare immediately stored into method-local variables (temporaries). This ensures that ifform3calls a function that returns multiple values, the result ofform2is safely tucked away in a local variable and cannot be overwritten. - Commitment: After all forms are evaluated, the compiler generates code to move the values from the local temporaries into the global

Value1...ValueNfields. - Finalization: The

ReturnCountis set toN.

3. Preservation across Control Flow

Certain Lisp constructs must evaluate sub-forms without allowing those sub-forms to interfere with the return values of the primary form. This is handled by a Save-Restore pattern.

Multiple-Value-Prog1

The multiple-value-prog1 form evaluates its first form, then saves the entire side-channel state (the primary value, the ReturnCount, and all ValueN fields) into local variables. It then evaluates the subsequent forms. After they finish, it restores the side-channel state from its locals, ensuring the values of the first form are what the caller receives.

Unwind-Protect

In unwind-protect, the protected form is evaluated and its primary result is stored in a local variable. Crucially, the finally block (cleanup) must not destroy the side-channel state produced by the protected form. The compiler generates code at the start of the finally block to save ReturnCount and Value1...63 into locals. Once the cleanup forms complete, the state is restored from these locals before the method returns.

4. Nested Multiple Values (The Re-entrancy Problem)

The fundamental problem with a global side-channel is re-entrancy. If the compiler were to store form2 directly into the global Value1 field, and then form3 involved a function call like (some-func), that function might execute its own (values ...) logic. This would overwrite the global Value1 that was just set for the outer form.

By enforcing the use of method-local temporaries during the production of values, CLRHack ensures that the global side-channel is only updated at the last possible moment ("atomically" relative to the Lisp expression), effectively shielding the return values from being corrupted by nested evaluations.

26 May 2026 7:00am GMT

25 May 2026

Planet Lisp

Planet Lisp

Tim Bradshaw: Measuring slot access cost in Common Lisp

I've been interested in how slow CLOS slot access is in Common Lisp. Here's how I measured it.

I wanted to compare the cost of access to fields of various objects in Common Lisp. In particular I wanted to get a feel for the difference between a slot in a class defined with defclass, so an instance of a subclass of standard-object, and a field in a class defined with defstruct, so an instance of a subclass of structure-object.

A naïve model of the access cost

I measured forms like

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b)))

...))For \(N\) iterations you might think the time \(T\) should be

\[T = N(c_l + n(c_i + c_s)) + c_c\]

where \(c_l\) is the per-step loop overhead, \(n\) is the number of slots, \(c_i\) is the time to increment a variable, \(c_s\) is the slot read cost (the thing we want), and \(c_c\) is the overhead of calling the function to do all this. \(N\gtrapprox 10^9\), so it's safe to treat \(c_c\) as zero. This is linear in \(n\), and \(T\) is a thing we can measure, so we can differentiate and get an expression for \(c_s\) which is what we want.

\[c_s = \frac{1}{N}\frac{dT}{dn} - c_i\]

In fact, everything works in terms of the per-step time \(t\doteq T/N\) as \(N\) varies for different classes and numbers of slots to keep the runtimes reasonable, and then \(c_s = dt/dn - c_i\).

A better model

Well, this turns out to be wrong. In particular if you estimate \(c_i\) (see below on how I did this) and use it in the above expression you will end up calculating values of \(c_s\) for structures which are either absurdly tiny (\(\sim 10^{-11}\)s for a machine with a cycle time \(\sim 10^{-9}\)s) or even negative. The reason is pretty obviously that the increment and the access are largely overlapped.

So in what follows I simply treat \(c_i\) as zero. This may overestimate \(c_s\) somewhat. But a result of that overestimation is that the factor by which slot access is slower than structure field access will be underestimated, which will make CLOS seem faster than it is, since if \(a \gt b \gt c \gt 0\) then \((a - c)/(b - c) \gt a/b\). That's good, because what I'm trying to demonstrate is that it's really slow, so an underestimation is safe.

The new model expression for \(c_s\) is then just \(c_s = dt/dn\).

What I did

I measured slot access time in the same way for a class with 10 slots, measuring 2, 4, 6, 8 and 10 slots, and did the same thing for a structure with 10 fields.

Because the access times and numbers of accesses per step vary widely I adjusted the number of iterations to keep the run-times sane: more than 10 seconds per test but ideally less than 60.

Each measurement was repeated 4 times.

I then fitted a linear function to the data for each class (least-squares fit), and used its gradient and the estimated variable-increment cost to estimate \(c_s\) for each type.

All the measurements were done on an M1 MacBook Air, using caffeinate to prevent it sleeping. I measured LispWorks 8.1.2 and SBCL 2.6.4. Total run times were somewhat over an hour for each implementation.

Results

SBCL slot access data and best fit

LispWorks slot access data and best fit

SBCL structure field access data and best fit

LispWorks structure field access data and best fit

From these you can see that the results are consistent between runs and the best fit is pretty good.

The per-slot cost is then the slope of the best fit curve, or perhaps slightly less.

Structure field access cost estimate

- SBCL: \(c_s \approx 3.2\times 10^{-10}\)s.

- LispWorks: \(c_s \approx 3.1\times 10^{-10}\)s.

Note that these are both almost certainly a single cycle up to rounding.

standard-instance subclass slot access cost estimate

- SBCL: \(c_s \approx 1.2\times 10^{-8}\)s.

- LispWorks: \(c_s \approx 1.0\times 10^{-8}\)s.

Ratios

The ratios between these two values for each implementation are then about 38 for SBCL and about 32 for LispWorks: this is how much slower CLOS slot access is than structure field access. In fact it is probably an underestimate of how much slower it is.

What is obvious1

CLOS slot access is really slow.

What is less obvious but almost certainly true2

This is not because multiple inheritance is inherently slow: it's because the design of CLOS, especially if you want to take the AMOP MOP seriously, implies crappy performance.

Can this be fixed? Yes, I think so, with well-defined tradeoffs. Will it be? Up to implementors. So, probably not, sadly.

Notes

To get an estimate of the time to increment a variable, \(c_i\), first measure a large number of iterations of an empty loop and then a loop which increments a variable 100 times for each step. Both of the implementations I measured do not optimize empty loops away, intentionally I think. This estimate is now not used (see above), but if it's not about a clock cycle (about \(3.3\times 10^{-10}\)s on M1) then probably something is wrong.

Code

This is the CL code I used.

;;;; Some slot-value benchmarks

;;;

;;; None of this code is general-purpose.

;;;

(in-package :cl-user)

(define-condition too-short (simple-error)

((seconds :initform 0 :initarg :seconds :reader too-short-seconds)))

(defmacro noting-too-short (&body forms)

`(handler-bind ((too-short (lambda (e)

(format *debug-io* "~&Too short: ~,2Fs when minimum was ~Ds~%"

(too-short-seconds e)

*minimum-seconds*)

(continue e))))

,@forms))

(defvar *minimum-seconds* 10) ;how long it must run for

(defmacro ticks (&body forms)

`(let ((start (get-internal-real-time))

(end (progn

,@forms

(get-internal-real-time))))

(let* ((elapsed-ticks (- end start))

(elapsed-seconds (/ elapsed-ticks internal-time-units-per-second)))

(when (< elapsed-seconds *minimum-seconds*)

(cerror "just return ~D (~,2F seconds)"

(make-condition

'too-short

:format-control "~D ticks (~,2F seconds) is not long enough"

:format-arguments (list elapsed-ticks (float elapsed-seconds))

:seconds (float elapsed-seconds))

elapsed-ticks (float elapsed-seconds)))

elapsed-ticks)))

(defun seconds (ticks &optional (divider 1))

(/ ticks internal-time-units-per-second divider))

(defun note (control &rest args)

(format *debug-io* "~&[~?]~%" control args)

(force-output *debug-io*))

(defmacro noting ((&rest notes) &body forms)

;; Single value only, but this is all we need

`(progn

(format *debug-io* "~&[~@{~A~^ ~}" ,@notes)

(force-output *debug-io*)

(let ((r (progn ,@forms)))

(format *debug-io* " -> ~A]~%" r)

(force-output *debug-io*)

r)))

(defun inc-n (n incs)

(declare (type fixnum n incs)

(optimize speed (safety 0)))

(case incs

(0

(dotimes (i n 0)))

(100

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s)

(incf s))))

(otherwise

(error "what even is this"))))

(defun estimate-increment-time (&key

(exponent 11)

&aux

(n (round (expt 10 exponent)))

(n/100 (round (expt 10 (- exponent 2)))))

(declare (type fixnum n n/100))

(/ (- (seconds (noting (100 n/100) (ticks (inc-n n/100 100))) n/100)

(seconds (noting (0 n) (ticks (inc-n n 0))) n))

100))

(defclass a ()

((a :initform 0 :reader a-a)

(b :initform 0 :reader a-b)

(c :initform 0 :reader a-c)

(d :initform 0 :reader a-d)

(e :initform 0 :reader a-e)

(f :initform 0 :reader a-f)

(g :initform 0 :reader a-g)

(h :initform 0 :reader a-h)

(i :initform 0 :reader a-i)

(j :initform 0 :reader a-j)))

(defstruct b

(a 0)

(b 0)

(c 0)

(d 0)

(e 0)

(f 0)

(g 0)

(h 0)

(i 0)

(j 0))

(defgeneric svn (o n count &key)

(declare (optimize speed)))

(defmethod svn ((a a) n count &key (reader nil))

(declare (type fixnum n count)

(optimize speed (safety 0)))

(if reader

(case count

(2

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (a-a a)))

(incf s (the fixnum (a-b a))))))

(4

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (a-a a)))

(incf s (the fixnum (a-b a)))

(incf s (the fixnum (a-c a)))

(incf s (the fixnum (a-d a))))))

(6

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (a-a a)))

(incf s (the fixnum (a-b a)))

(incf s (the fixnum (a-c a)))

(incf s (the fixnum (a-d a)))

(incf s (the fixnum (a-e a)))

(incf s (the fixnum (a-f a))))))

(8

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (a-a a)))

(incf s (the fixnum (a-b a)))

(incf s (the fixnum (a-c a)))

(incf s (the fixnum (a-d a)))

(incf s (the fixnum (a-e a)))

(incf s (the fixnum (a-f a)))

(incf s (the fixnum (a-g a)))

(incf s (the fixnum (a-h a))))))

(10

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (a-a a)))

(incf s (the fixnum (a-b a)))

(incf s (the fixnum (a-c a)))

(incf s (the fixnum (a-d a)))

(incf s (the fixnum (a-e a)))

(incf s (the fixnum (a-f a)))

(incf s (the fixnum (a-g a)))

(incf s (the fixnum (a-h a)))

(incf s (the fixnum (a-i a)))

(incf s (the fixnum (a-j a))))))

(otherwise

(error "what even is this")))

(case count

(2

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b))))))

(4

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b)))

(incf s (the fixnum (slot-value a 'c)))

(incf s (the fixnum (slot-value a 'd))))))

(6

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b)))

(incf s (the fixnum (slot-value a 'c)))

(incf s (the fixnum (slot-value a 'd)))

(incf s (the fixnum (slot-value a 'e)))

(incf s (the fixnum (slot-value a 'f))))))

(8

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b)))

(incf s (the fixnum (slot-value a 'c)))

(incf s (the fixnum (slot-value a 'd)))

(incf s (the fixnum (slot-value a 'e)))

(incf s (the fixnum (slot-value a 'f)))

(incf s (the fixnum (slot-value a 'g)))

(incf s (the fixnum (slot-value a 'h))))))

(10

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (slot-value a 'a)))

(incf s (the fixnum (slot-value a 'b)))

(incf s (the fixnum (slot-value a 'c)))

(incf s (the fixnum (slot-value a 'd)))

(incf s (the fixnum (slot-value a 'e)))

(incf s (the fixnum (slot-value a 'f)))

(incf s (the fixnum (slot-value a 'g)))

(incf s (the fixnum (slot-value a 'h)))

(incf s (the fixnum (slot-value a 'i)))

(incf s (the fixnum (slot-value a 'j))))))

(otherwise

(error "what even is this")))))

(defmethod svn ((b b) n count &key)

(declare (type fixnum n)

(optimize speed (safety 0)))

(case count

(2

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (b-a b)))

(incf s (the fixnum (b-b b))))))

(4

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (b-a b)))

(incf s (the fixnum (b-b b)))

(incf s (the fixnum (b-c b)))

(incf s (the fixnum (b-d b))))))

(6

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (b-a b)))

(incf s (the fixnum (b-b b)))

(incf s (the fixnum (b-c b)))

(incf s (the fixnum (b-d b)))

(incf s (the fixnum (b-e b)))

(incf s (the fixnum (b-f b))))))

(8

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (b-a b)))

(incf s (the fixnum (b-b b)))

(incf s (the fixnum (b-c b)))

(incf s (the fixnum (b-d b)))

(incf s (the fixnum (b-e b)))

(incf s (the fixnum (b-f b)))

(incf s (the fixnum (b-g b)))

(incf s (the fixnum (b-h b))))))

(10

(let ((s 0))

(declare (type fixnum s))

(dotimes (i n s)

(incf s (the fixnum (b-a b)))

(incf s (the fixnum (b-b b)))

(incf s (the fixnum (b-c b)))

(incf s (the fixnum (b-d b)))

(incf s (the fixnum (b-e b)))

(incf s (the fixnum (b-f b)))

(incf s (the fixnum (b-g b)))

(incf s (the fixnum (b-h b)))

(incf s (the fixnum (b-i b)))

(incf s (the fixnum (b-j b))))))

(otherwise

(error "what even is this"))))

(defun measure-thing (thing &key

(exponent 11)

(specs

'((2 -0.2)

(4 -0.4)

(6 -0.6)

(8 -0.8)

(10 -1)))

(sleep 0)

&aux (cn (class-name (class-of thing))))

(mapcar (lambda (spec)

(destructuring-bind (count delta &rest kws &key) spec

(let ((iterations (round (expt 10 (+ exponent delta)))))

(let ((per-step (float

(seconds (noting (cn count iterations)

(ticks (apply #'svn thing

iterations count kws)))

iterations))))

(note "~S ~D elapsed ~Ds per-step ~Ds"

cn count (* per-step iterations) per-step)

(when (> sleep 0)

(noting ("sleep" sleep)

(sleep sleep)))

(list cn count per-step)))))

specs))

(defun measure-things (&key

(things-and-exponents `((,(make-b) 11)

(,(make-instance 'a) 10)))

(log-file "thing-times.ldat")

(tries 4)

(sleep 5))

;; Dump measurements to a log file

(with-standard-io-syntax

(with-open-file (log log-file :direction :output

:if-exists :supersede)

(noting-too-short

(let ((increment-time (float (estimate-increment-time))))

(note "increment time ~Ds" increment-time)

(pprint increment-time log)

(force-output log))

(dolist (thing-and-exponent things-and-exponents)

(destructuring-bind (thing exponent) thing-and-exponent

(note "~S exponent ~D"

(class-name (class-of thing))

exponent)

(dotimes (try tries)

(pprint

(measure-thing thing

:exponent exponent

:sleep sleep)

log)

(force-output log)))))))

log-file)This is the Racket code which plotted the data and computed the fit & cost.

#lang racket

;;;; Fit data from tsv

;;;

(require simple-polynomial

plot)

(define (snarf from)

;; Stolen from warranted (wcs.rkt): just read all the forms, safely

(call-with-default-reading-parameterization

(thunk

(parameterize ([read-accept-lang #f]

[read-accept-reader #f])

(call-with-input-file from

(λ (p)

(for/list ([form (in-port read p)])

form)))))))

(define (classify file-data)

;; The data is an increment time, followed by lists of (class-name

;; slot-count seconds) Return a hash table mapping from class names

;; and the imcrememt time

(match-let ([(cons increment-time records) file-data])

(define cmap (make-hasheqv))

(for* ([record (in-list records)]

[single (in-list record)])

(match-let ([(list class-name count seconds) single])

(hash-update! cmap class-name

(λ (c)

(cons (list count seconds) c))

'())))

(values cmap increment-time)))

(define (linear-fit class-name cmap)

(points->best-fit-polynomial (hash-ref cmap class-name) 1))

(define (slot-cost class-name cmap (increment-time 0.0))

(- (first (polynomial-terms (linear-fit class-name cmap)))

increment-time))

(define (file-slot-cost class-name file

#:use-increment-time (use-increment-time #f))

(let-values ([(cmap increment-time)

(classify (snarf file))])

(slot-cost class-name cmap (if use-increment-time

increment-time

0.0))))

(define (file-A/B-ratio file #:use-increment-time (use-increment-time #f))

(/ (file-slot-cost 'A file #:use-increment-time use-increment-time)

(file-slot-cost 'B file #:use-increment-time use-increment-time)))

(define (plot-linear-fit class-name cmap

#:to-file (to-file #f)

#:title (title #f))

(parameterize ([plot-font-family 'modern]

[plot-width 560]

[plot-x-far-axis? #f]

[plot-y-far-axis? #f]

[plot-x-ticks (linear-ticks #:number 5)])

(define pts (hash-ref cmap class-name))

((if to-file

(curryr plot-file to-file)

plot)

(list

(points pts #:sym 'plus #:label (format "~A data" class-name))

(function (points->best-fit-polynomial pts 1) #:label "linear fit"))

#:x-min 0

#:x-max 10.5

#:x-label "count"

#:y-label "seconds/step"

#:title title)))

(define (file-plot-linear-fit class-name file

#:to-file (to-file #f)

#:title (title #f))

(let-values ([(cmap _) (classify (snarf file))])

(plot-linear-fit class-name cmap

#:to-file to-file

#:title title)))25 May 2026 1:46pm GMT

Joe Marshall: CLRHack: Tail Recursion

Tail-Call Handling in CLRHack

I decided to make proper tail recursion a fundamental requirement in CLRHack. This prevents stack overflow errors during standard recursive patterns and ensures the runtime remains stable regardless of recursion depth. Technically, Common Lisp isn't required to be tail recursive, but I want mine to be.

1. Tail Position Identification

The compiler performs a structural analysis of the Abstract Syntax Tree (AST) to identify "tail positions." An expression is in a tail position if its value is the final result of the function, meaning no further work remains to be done in the current frame after the call returns. The generate-step2 walker propagates a tail-p flag through the following logic:

- Functions/Lambdas: The final expression in the body is in the tail position.

- Conditionals (IF): Both the "then" and "else" branches are in the tail position.

- Sequences (PROGN/LET): Only the very last form in the sequence is in the tail position.

- Blocks: The last form of a

BLOCKis in the tail position, provided the block is not the target of aRETURN-FROM.

2. CIL Instruction Emission

To implement proper tail-call semantics, the compiler utilizes the native tail. prefix in the Common Intermediate Language (CIL). When a function call is detected in a tail position, the compiler applies the following mandatory transformation:

- The Prefix: It prepends the

tail.opcode to thecallorcallvirtinstruction. - The Return: It immediately follows the call with a

ret(return) instruction.

The tail. prefix instructs the .NET Just-In-Time (JIT) compiler to discard the current method's stack frame before jumping to the target function. This ensures that the call consumes zero additional stack space, turning the recursive call into a semantic jump.

3. Safety and Context Constraints

The implementation of tail-calls is subject to specific safety rules imposed by the Common Language Runtime (CLR) to maintain execution integrity:

- Protected Regions: The CLR prohibits

tail.calls insidetry,catch, orfinallyblocks. Because Lisp constructs such asunwind-protectandhandler-caserely on these CIL features, tail-call elimination is suspended within these specific scopes to ensure cleanup handlers and error recovery mechanisms function correctly. - Frame Cleanup: The compiler ensures that all local resources are in a valid state before the

tail.prefix is issued, allowing the CLR to safely deallocate the current frame.

Example CIL Output

Consider a recursive counter that must be able to run indefinitely:

(defun count-down (n)

(if (= n 0)

"Done"

(count-down (- n 1))))

The compiled CIL for the recursive branch is transformed to ensure stack neutrality:

; ... code to calculate (- n 1) ...

tail.

call object Program::'COUNT-DOWN'(object)

ret

By strictly enforcing this pattern, CLRHack guarantees that recursive programs can execute with constant stack space, fulfilling my core requirement of tail recursion.

25 May 2026 7:00am GMT