27 May 2026

Planet Python


PyPy: PyPy v7.3.23 release

PyPy v7.3.23: release of python 2.7, 3.11

The PyPy team is proud to release version 7.3.23 of PyPy after the previous release on April 26, 2026. This is a bug-fix release that fixes an overeager warning about unused coroutines and some problems around multiple inheritance in C extensions.

This version includes a change to the bytecode interpreter to use exception tables instead of dedicated opcodes. Now the PyPy disassembly will be closer to CPython format. So far it does not impact performance.
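As a rough illustration of what exception tables look like from the Python side, the sketch below disassembles a small function that contains a try/except block. On CPython 3.11 and later the listing ends with an `ExceptionTable:` section rather than relying on block-setup opcodes. The exact layout PyPy prints is not shown in the release notes, so the check here is an assumption, and `safe_div` is a made-up function for the example.

```python
import dis
import io


def safe_div(a, b):
    """Hypothetical example function with one try/except block."""
    try:
        return a / b
    except ZeroDivisionError:
        return None


# Capture the disassembly as text instead of printing it directly.
buffer = io.StringIO()
dis.dis(safe_div, file=buffer)
listing = buffer.getvalue()

# Interpreters that use exception tables (CPython 3.11+) append an
# "ExceptionTable:" section; older ones use block-setup opcodes instead.
has_exception_table = "ExceptionTable" in listing
print("exception table present:", has_exception_table)
```

Running the same snippet under CPython and PyPy and comparing the two listings is a quick way to see how close the formats are.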

The release includes two different interpreters:

- PyPy2.7, which is an interpreter supporting the syntax and the features of Python 2.7 including the stdlib for CPython 2.7.18+ (the + is for backported security updates)

- PyPy3.11, which is an interpreter supporting the syntax and the features of Python 3.11, including the stdlib for CPython 3.11.15.

The interpreters are based on much the same codebase, thus the double release. This is a micro release; all APIs are compatible with the other 7.3 releases.

We recommend updating. You can find links to download the releases here:

We would like to thank our donors for the continued support of the PyPy project. If PyPy is not quite good enough for your needs, we are available for direct consulting work. If PyPy is helping you out, we would love to hear about it and encourage submissions to our blog via a pull request to https://github.com/pypy/pypy.org

We would also like to thank our contributors and encourage new people to join the project. PyPy has many layers and we need help with all of them: bug fixes, PyPy and RPython documentation improvements, or general help with making RPython's JIT even better.

If you are a Python library maintainer and use C extensions, please consider making an HPy / CFFI / cppyy version of your library that would be performant on PyPy. In any case, cibuildwheel supports building wheels for PyPy.

What is PyPy?

PyPy is a Python interpreter, a drop-in replacement for CPython. It's fast (PyPy and CPython performance comparison) due to its integrated tracing JIT compiler.

We also welcome developers of other dynamic languages to see what RPython can do for them.

We provide binary builds for:

- x86 machines on most common operating systems (Linux 32/64 bits, Mac OS 64 bits, Windows 64 bits)

- 64-bit ARM machines running Linux (aarch64) and macOS (macos_arm64).

PyPy supports Windows 32-bit, Linux PPC64 big- and little-endian, Linux ARM 32-bit, RISC-V RV64IMAFD Linux, and s390x Linux, but does not release binaries for them. Please reach out to us if you wish to sponsor binary releases for those platforms. Downstream packagers provide binary builds for Debian, Fedora, conda, OpenBSD, FreeBSD, Gentoo, and more.

What else is new?

For more information about the 7.3.23 release, see the full changelog.

Please update, and continue to help us make PyPy better.

Cheers, The PyPy Team

27 May 2026 7:40am GMT

Python GUIs: Fixing Missing Icons in PyInstaller-Packaged PyQt6 Applications on Windows — Why your app icon disappears after packaging and how to fix it

I've packaged my PyQt application with PyInstaller, but the icon isn't showing up - both the executable icon and the running application icon are just the default Python/Windows icon. What's going on?

This is a common issue when packaging PyQt6 apps with PyInstaller on Windows. The good news is that it usually comes down to one of two straightforward causes: Windows icon caching or missing resource files in your packaged output.

Setting the executable icon with PyInstaller

When you run PyInstaller, you can set the icon for the .exe file itself using the --icon flag:

pyinstaller --windowed --icon=myicon.ico myapp.py

This embeds the icon into the executable, so it shows up in File Explorer and on the desktop. The icon file needs to be in .ico format - .png or .svg won't work here.

After building, check the dist/ folder. Your .exe should display the custom icon. But sometimes... it doesn't.

Windows icon caching

Windows caches icons aggressively. If you've previously built your app without a custom icon, Windows may continue to show the old default icon even after you've rebuilt the app with the correct one.

This still catches me out, even though I know about it. You reflexively start checking the config, assuming something is wrong, and think you're going mad.

There are a few ways to deal with this:

- Rename the executable. Changing the filename forces Windows to look up the icon fresh. This is the quickest way to confirm that your icon is actually embedded correctly.

- Clear the Windows icon cache. You can do this by restarting Windows Explorer or by deleting the icon cache files manually. To refresh the cache from the command line, open a Command Prompt and run:

ie4uinit.exe -show

After clearing the cache, the correct icon should appear.

You can also try turning your computer off and on again, or rather restarting Windows. That will also trigger the icon cache to rebuild.

Missing icon file at runtime

Setting the executable icon with --icon only affects what shows up in File Explorer. If your application also sets a window icon in code (using setWindowIcon), that icon file needs to be available at runtime too.

For example, if your code does this:

from PyQt6.QtWidgets import QApplication, QMainWindow
from PyQt6.QtGui import QIcon
import sys

class MainWindow(QMainWindow):
    def __init__(self):
        super().__init__()
        self.setWindowTitle("My Application")

app = QApplication(sys.argv)
app.setWindowIcon(QIcon("myicon.ico"))
window = MainWindow()
window.show()
app.exec()

Then myicon.ico needs to exist in the working directory when the packaged app runs. By default, PyInstaller doesn't include data files like .ico images unless you tell it to.

You can add the icon file to your build using the --add-data flag:

pyinstaller --windowed --icon=myicon.ico --add-data "myicon.ico;." myapp.py

On Linux or macOS, use : instead of ; as the separator:

pyinstaller --windowed --icon=myicon.ico --add-data "myicon.ico:." myapp.py

This copies myicon.ico into the output directory alongside your executable (or into the temporary directory if you're using --onefile).
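If you drive your builds from a script, you can let Python pick the separator for you. The sketch below assumes the `--add-data` separator matches `os.pathsep` (`;` on Windows, `:` on Linux and macOS), which is consistent with the two commands above. The helper name `add_data_argument` is made up for this example.

```python
import os


def add_data_argument(source, destination="."):
    """Build the value for PyInstaller's --add-data flag (hypothetical helper).

    The separator is ';' on Windows and ':' on Linux and macOS, which is
    what os.pathsep evaluates to on each platform.
    """
    return f"{source}{os.pathsep}{destination}"


# Assemble the same command line shown above, as an argument list.
command = [
    "pyinstaller",
    "--windowed",
    "--icon=myicon.ico",
    "--add-data", add_data_argument("myicon.ico"),
    "myapp.py",
]
print(" ".join(command))
```

The resulting list can be passed to `subprocess.run(command)` from a build script, so the same script works on every platform.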

An alternative approach (not available on PyQt6) is to use the Qt Resource System to embed your icon directly into your application, which avoids the need to bundle separate icon files entirely.

Handling --onefile builds

When you use --onefile, PyInstaller extracts everything to a temporary folder at runtime. Your code needs to know how to find files relative to that temporary folder. You can handle this by detecting the base path:

import sys
import os

if getattr(sys, 'frozen', False):
    # Running as a PyInstaller bundle
    basedir = sys._MEIPASS
else:
    # Running as a normal script
    basedir = os.path.dirname(__file__)

Then use basedir when constructing file paths:

app.setWindowIcon(QIcon(os.path.join(basedir, "myicon.ico")))
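If you have more than one resource file, it can be tidier to wrap the base path logic in a small function. This is a minimal sketch of that idea; the `resource_path` name and its `script_file` parameter are my own choices for the example, not part of PyInstaller or PyQt6.

```python
import os
import sys


def resource_path(name, script_file):
    """Return the absolute path to a bundled resource (hypothetical helper).

    script_file is normally the calling module's __file__; it is only used
    when the app is not running from a PyInstaller bundle.
    """
    if getattr(sys, "frozen", False) and hasattr(sys, "_MEIPASS"):
        # Running as a PyInstaller bundle: resources live under _MEIPASS.
        base = sys._MEIPASS
    else:
        # Running as a normal script: resources sit next to the source file.
        base = os.path.dirname(os.path.abspath(script_file))
    return os.path.join(base, name)


# Typical use inside the application module:
#     app.setWindowIcon(QIcon(resource_path("myicon.ico", __file__)))
```

Passing `__file__` in explicitly keeps the helper reusable from any module in your project.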

Taskbar grouping with an Application User Model ID

On Windows, the taskbar groups windows by their application identity. Without an explicit identity, Windows guesses - and sometimes guesses wrong. This can cause your app to show the Python icon in the taskbar, or to group instances inconsistently depending on where they were launched from.

You can fix this by setting an Application User Model ID before creating your QApplication. This tells Windows exactly which application this is:

import ctypes

myappid = "com.mycompany.myapp.1.0"

ctypes.windll.shell32.SetCurrentProcessExplicitAppUserModelID(myappid)

The string can be anything, but it's conventional to use a reverse-domain format. The value just needs to be unique to your application.

With an explicit app ID set, all instances of your app will group together in the taskbar regardless of where they were launched from - whether that's your IDE, the dist/ folder, or a --onefile build.
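Note that `ctypes.windll` only exists on Windows, so the snippet above will fail if the same script runs on Linux or macOS. One way to guard against that is sketched below; the function name `set_app_user_model_id` is made up for the example.

```python
import sys


def set_app_user_model_id(app_id):
    """Set the Windows AppUserModelID; do nothing on other platforms.

    Returns True if the call was made, False if it was skipped.
    """
    if sys.platform != "win32":
        return False
    import ctypes  # imported lazily; ctypes.windll only exists on Windows

    ctypes.windll.shell32.SetCurrentProcessExplicitAppUserModelID(app_id)
    return True


# Call this before creating QApplication.
applied = set_app_user_model_id("com.mycompany.myapp.1.0")
```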

Complete working example

Here's a complete example that handles all of the above - the runtime base path, the window icon, and the application user model ID. If you're new to building PyQt6 applications, you may want to start with creating your first window before tackling packaging.

import sys
import os
import ctypes

from PyQt6.QtWidgets import QApplication, QMainWindow, QLabel
from PyQt6.QtGui import QIcon
from PyQt6.QtCore import Qt

# Set the app user model ID before creating QApplication (Windows only)
if sys.platform == "win32":
    myappid = "com.mycompany.myapp.1.0"
    ctypes.windll.shell32.SetCurrentProcessExplicitAppUserModelID(myappid)

# Determine the base directory for resource files
if getattr(sys, "frozen", False):
    basedir = sys._MEIPASS
else:
    basedir = os.path.dirname(__file__)

class MainWindow(QMainWindow):
    def __init__(self):
        super().__init__()
        self.setWindowTitle("My Application")
        label = QLabel("Hello, world!")
        label.setAlignment(Qt.AlignmentFlag.AlignCenter)
        self.setCentralWidget(label)

app = QApplication(sys.argv)
app.setWindowIcon(QIcon(os.path.join(basedir, "myicon.ico")))
window = MainWindow()
window.show()
app.exec()

To package this with PyInstaller:

pyinstaller --windowed --icon=myicon.ico --add-data "myicon.ico;." myapp.py

For an in-depth guide to building Python GUIs with PyQt6 see my book, Create GUI Applications with Python & Qt6.

27 May 2026 6:00am GMT

Python Morsels: Selecting random values in Python

Python's random module provides utilities for generating pseudorandom numbers. For cryptographically-secure randomness, use the secrets module instead.

Table of contents

- Generating random integers

- Generating random floating point numbers

- Selecting random items from a sequence

- The random utilities are only pseudorandom

- Cryptographically-secure randomness with the secrets module

- Random and SystemRandom classes

- Use random for pseudo-random numbers and secrets for true randomness

Generating random integers

If you need a random integer, you can use the randint function from Python's random module:

>>> from random import randint

>>> randint(1, 6)

4

This function accepts a start value and a stop value and it returns a random integer between the start and stop values inclusively.

The random module also includes a randrange function, which is named after Python's range function:

>>> from random import randrange

>>> randrange(10)

7

This function accepts the same values as range.

Either a stop value:

>>> randrange(5)

2

Or start and stop values:

>>> randrange(5, 10)

8

Or start, stop, and step values:

>>> randrange(0, 100, 10)

70

The randrange function basically chooses a random number within a given range.

When I need a random number, I usually use randint.
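The difference in how the two functions treat their upper bound is easy to check for yourself. This sketch draws many values and collects the distinct results; the variable names are just for illustration.

```python
from random import randint, randrange

# Draw many values so that every possible result is very likely to appear.
dice_rolls = {randint(1, 6) for _ in range(10_000)}
range_picks = {randrange(0, 100, 10) for _ in range(10_000)}

# randint includes both endpoints, so 1 and 6 can both come up.
print(sorted(dice_rolls))

# randrange follows range's rules: the stop value (100) is never returned
# and only multiples of the step (10) are possible.
print(sorted(range_picks))
```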

Generating random floating point numbers

What if you need a …

Read the full article: https://www.pythonmorsels.com/random-numbers/

27 May 2026 4:00am GMT

26 May 2026


PyCoder’s Weekly: Issue #736: Polars Sort-Merge Joins, Zen, Resolving Lazy Imports, and More (2026-05-26)

#736 - MAY 26, 2026


Streaming Sort-Merge Joins in Polars

"Joins are often one of the most expensive parts of a query. Once tables get large, the join can heavily impact both runtime and memory usage… If the join keys are already sorted, Polars can now take a cheaper path: a streaming sort-merge join."

THIJS NIEUWDORP

Tapping Into the Zen of Python

Explore the Zen of Python and its 19 guiding principles for writing readable, practical code. Learn its history, jokes, and meaning.

REAL PYTHON course

FREE Python Error Tracking From Honeybadger - all Signal, no Noise

Production bugs don't arrive one at a time. Honeybadger groups similar errors into a single issue and lets you pause or ignore alerts in a single click. More signal. Less noise. ⚡ Sign Up for Your FREE Developer Account →

HONEYBADGER sponsor

Resolve a Lazy Import Manually

Learn how to work around the Python 3.15 machinery to resolve an explicit lazy import manually.

RODRIGO GIRÃO SERRÃO

Django 6.1 Alpha 1 Released

Posted by Jacob Walls on May 20, 2026

DJANGO SOFTWARE FOUNDATION

PEP 831: Frame Pointers Everywhere: Enabling System-Level Observability for Python (Final)

This PEP proposes two things:

PYTHON.ORG

Articles & Tutorials

PyCon US 2026 Packaging Summit Recap

Per-talk notes from the PyCon US 2026 Packaging Summit, including: Emma Smith on Wheel 2.0 and Zstandard compression, Mike Fiedler on PyPI abuse vectors, Mahe Iram Khan on ecosystems, lightning talks on PEP 772, mobile wheels, AI accelerator variants, and the roundtable discussions.

BERNÁT GÁBOR

Slim Down Python Docker Containers

Learn how SlimToolkit can reduce a Python Docker image by analyzing what your app actually uses at runtime. This tutorial walks through slimming a Chainlit LLM chatbot image, shows where container bloat comes from, and explains how to avoid breaking lazily loaded Python frameworks.

CODECUT.AI • Shared by Khuyen Tran

Object-Oriented Python: 5-Day Live Workshop, June 8 to 12

A new live cohort for Python developers comfortable with the basics who want to design classes that hold up under change. Across five 2-hour sessions, OOP features appear at the moment a growing project actually needs them. You leave with a working app and the judgment to know when a class earns its keep →

REAL PYTHON sponsor

What Types of Exceptions Should You Catch?

The trickiest programming bugs are often caused by catching exceptions that you didn't mean to catch or handling exceptions in ways that obfuscate the actual error that's occurring. Which exceptions should you catch and which should you leave unhandled?

TREY HUNNER

Reverse Geocoding With Overture Maps

Mark is working on a reverse geocoder that can fetch the 2-letter ISO country code for any point on a map in a country's boundaries. This post talks about the prototype and his progress on the project.

MARK LITWINTSCHIK

Stop Writing Edge Case Tests. Use Hypothesis Instead

An introduction to property-based testing in Python with Hypothesis: the mental shift from 'what input should I test?' to 'what invariant should always hold?'

PEYTON GREEN • Shared by Anonymous

Opaque Types in Python

Learn how to use NewType to mask a private class while still providing a public construction mechanism for the users of your library.

GLYPH LEFKOWITZ

How to Use the Claude API in Python

Learn how to use the Claude API in Python to send prompts, control responses with system instructions, and get structured JSON output.

REAL PYTHON

uv Is Fantastic, but Its Package UX Is a Mess

This opinion piece talks about how uv's CLI feels surprisingly clunky compared to its peers like pnpm or Poetry.

KEVIN RENSKERS

Python Built-in Functions: A Complete Guide

Use Python's built-in functions for math, data types, iterables, and I/O to write shorter, more Pythonic code.

REAL PYTHON

Projects & Code

agent-memory-guard: OWASP ASI06 AI Agent Memory Guard

GITHUB.COM/OWASP • Shared by Vaishnavi Gudur

Events

PyCon Italia 2026

May 27 to May 31, 2026

PYCON.IT

Python Leiden User Group

May 28, 2026

PYTHONLEIDEN.NL

PyDelhi User Group Meetup

May 30, 2026

MEETUP.COM

PyLadies El Alto: Flash Talks

May 30 to May 31, 2026

MEETUP.COM

Happy Pythoning!

This was PyCoder's Weekly Issue #736.


[ Subscribe to 🐍 PyCoder's Weekly 💌 - Get the best Python news, articles, and tutorials delivered to your inbox once a week >> Click here to learn more ]

26 May 2026 7:30pm GMT

Real Python: Connecting LLMs to Your Data With Python MCP Servers

The Model Context Protocol (MCP) is a new open protocol that allows AI models to interact with external systems in a standardized, extensible way. In this video course, you'll install MCP, explore its client-server architecture, and work with its core concepts: prompts, resources, and tools. You'll then build and test a Python MCP server that queries e-commerce data and integrate it with an AI agent in Cursor to see real tool calls in action.

By the end of this video course, you'll understand:

- What MCP is and why it was created

- What MCP prompts, resources, and tools are

- How to build an MCP server with customized tools

- How to integrate your MCP server with AI agents like Cursor

You'll get hands-on experience with Python MCP by creating and testing MCP servers and connecting your MCP to AI tools. To keep the focus on learning MCP rather than building a complex project, you'll build a simple MCP server that interacts with a simulated e-commerce database. You'll also use Cursor's MCP client, which saves you from having to implement your own.

[ Improve Your Python With 🐍 Python Tricks 💌 - Get a short & sweet Python Trick delivered to your inbox every couple of days. >> Click here to learn more and see examples ]

26 May 2026 2:00pm GMT

Real Python: Quiz: Testing and Continuous Integration

In this quiz, you'll revisit the core concepts covered in the Testing and Continuous Integration learning path:

Learning Path

Testing and Continuous Integration

10 Resources ⋅ Skills: Unit Testing, Doctest, Mock Object Library, Pytest, Continuous Integration, Docker, Code Quality, GitHub Actions, Software Testing, CI/CD

The 20 questions span testing fundamentals, the unittest framework, mock objects, pytest, code quality tools, and continuous integration with GitHub Actions. They give you a way to check that you understood the most important ideas.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Python Web Scraping

In this quiz, you'll revisit the core concepts covered in the Python Web Scraping learning path:

You'll be quizzed on making HTTP requests, parsing HTML with Beautiful Soup, extracting data with Scrapy, working with JSON and CSV response data, and automating browsers with Selenium.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Python Control Flow and Loops

In this quiz, you'll revisit the core concepts covered in the Python Control Flow and Loops learning path:

Learning Path

Python Control Flow and Loops

15 Resources ⋅ Skills: Python, Control Flow, Conditional Statements, Booleans, for Loops, while Loops, enumerate, Nested Loops, break, continue, pass

The questions span conditional statements, the or Boolean operator, for and while loops, enumerate(), nested loops, and the break and continue keywords, giving you a way to check that you understood the most important ideas.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: I/O Operations and String Formatting

In this quiz, you'll revisit the core concepts covered in the I/O Operations and String Formatting learning path:

Learning Path

I/O Operations and String Formatting

10 Resources ⋅ Skills: Python, Fundamentals, I/O, String Formatting, f-strings, print()

The 20 questions span reading keyboard input, controlling print(), stripping characters from strings, the format mini-language, and f-strings, giving you a way to check that you understood the most important ideas.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Functions and Scopes

In this quiz, you'll revisit the core concepts covered in the Functions and Scopes learning path:

Learning Path

Functions and Scopes

12 Resources ⋅ Skills: Python, Functions, Scope, Arguments, Parameters, Return, Globals

The 20 questions span defining functions, positional and keyword arguments, default values, *args and **kwargs, return statements, inner functions, the LEGB rule, namespaces, and the global and nonlocal keywords.

Take your time and revisit any topics that feel rusty before moving on to the next learning path.


26 May 2026 12:00pm GMT

Real Python: Quiz: Files and File Streams

In this quiz, you'll revisit the core concepts covered in the Files and File Streams learning path:

Learning Path

Files and File Streams

13 Resources ⋅ Skills: Python, Pathlib, File I/O, Serialization, Encoding, Unicode, PDF, WAV, Context Managers, ZIP Files

You'll check your understanding of opening and reading files, navigating the file system with pathlib, managing resources with context managers and the with statement, and reading or writing WAV audio files.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: DevOps With Python

In this quiz, you'll revisit the core concepts covered in the DevOps With Python learning path:

Learning Path

DevOps With Python

10 Resources ⋅ Skills: Packaging & Deployment, CI/CD, AWS, Docker, Logging

The 16 questions span running Python scripts, managing dependencies with pip, automating workflows with GitHub Actions, and configuring Python's logging module.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Data Science With Python Core Skills

In this quiz, you'll revisit the core concepts covered in the Data Science With Python Core Skills learning path:

Learning Path

Data Science With Python Core Skills

21 Resources ⋅ Skills: Pandas, NumPy, Data Cleaning, Data Visualization, Statistics

You'll cover reading and writing CSV files, working with JSON data, manipulating pandas DataFrames, and applying NumPy techniques for numerical computing.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Data Collection & Storage

In this quiz, you'll revisit the core concepts covered in the Data Collection & Storage learning path:

Learning Path

Data Collection & Storage

9 Resources ⋅ Skills: CSV, JSON, pandas, Excel, SQL, SQLite, SQLAlchemy, AWS S3, Databases

You'll work through questions on reading and writing CSV files, serializing and parsing JSON data, and interacting with SQL databases from Python. Together, these topics give you the foundation you need to ingest, persist, and query data in your own projects.

Take your time and revisit any topics that feel rusty before moving on.


26 May 2026 12:00pm GMT

Real Python: Quiz: Connecting LLMs to Your Data With Python MCP Servers

In this video course quiz, you'll test your understanding of Connecting LLMs to Your Data With Python MCP Servers.

By working through this quiz, you'll revisit core MCP concepts like the client-server architecture, tools that LLMs can call, resources that expose static data, and prompts that act as reusable templates.


26 May 2026 12:00pm GMT

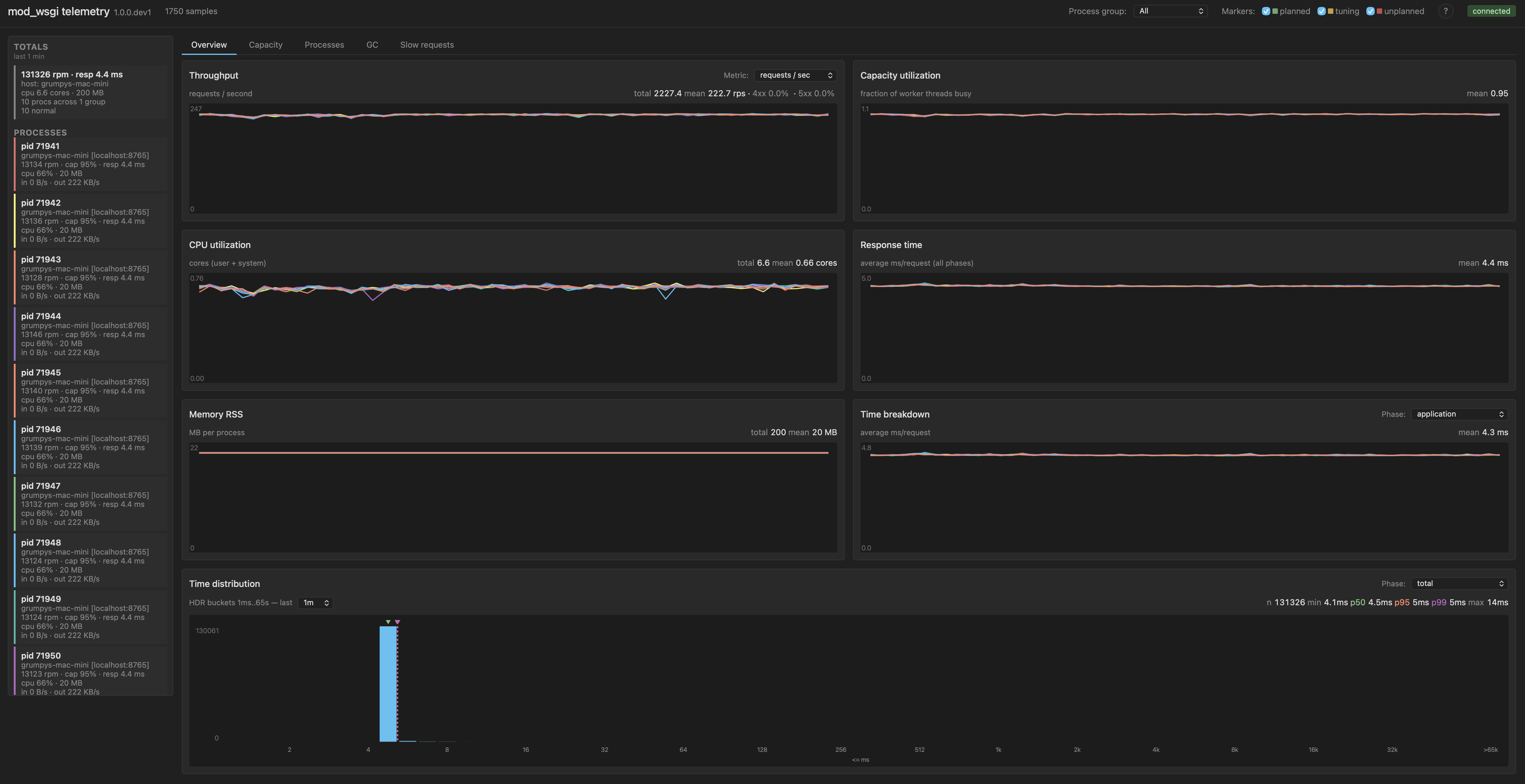

Graham Dumpleton: WSGISwitchInterval in mod_wsgi 6.0.0

The first two posts in this series covered new directives in mod_wsgi 6.0.0 that change the concurrency model the interpreter runs under. WSGIPerInterpreterGIL opts a sub-interpreter into its own GIL. WSGIFreeThreading opts a process into PEP 703 free-threaded mode. This third directive, WSGISwitchInterval, is a different sort of thing. It does not change the concurrency model. It exposes a Python tuning knob that has existed since Python 3.2 and that almost nobody touches, but that I have come to think is worth touching for a meaningful class of WSGI workloads.

The post is partly about what the directive does. Mostly though it is about a measurement story, and about why having telemetry to drive tuning decisions matters more than the directive itself.

What the switch interval is

The Python GIL is the lock that serialises bytecode execution across threads in a CPython process. Only one thread at a time holds it. For other threads to make progress on Python code, the holder has to release the lock. Some releases are voluntary, for instance during I/O calls that drop the GIL while they wait. Voluntary releases are not enough on their own to schedule cleanly between several CPU-busy threads though, so the interpreter also has a scheduler that nudges the holder to give the lock up periodically. That scheduler is what the switch interval controls.

In CPython 2 the scheduler was bytecode-count based. After every N bytecodes the interpreter would check for pending signals, drop the lock, and reacquire it. The setting was sys.setcheckinterval(N), default 100 ticks. The problem with bytecode counting was that bytecodes are not equal-cost. Some operations completed in a fraction of a microsecond. Others, like calling out into a slow built-in, took milliseconds. So the actual wall-clock interval between handoffs varied widely depending on what code was running.

Python 3.2 replaced this with a time-based scheduler. Antoine Pitrou's new GIL implementation moved the handoff trigger from "after N bytecodes" to "after T seconds since the last release", controlled by sys.setswitchinterval() with a default of 5 milliseconds. That default was a reasonable compromise on the hardware that existed in 2010. It has not changed since. Fifteen years on, on hardware that runs Python several times faster per cycle, the same 5 ms can be a much larger amount of Python work than it used to be. That is the rationale for considering whether the default is still the right value for your workload.
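For reference, this is what the knob looks like from plain Python, outside of mod_wsgi. The sketch reads the current value, tightens it to 2 ms, and then restores it. The 2 ms figure is only an example value here, not a recommendation.

```python
import sys

# Read the current value; CPython's documented default is 0.005 seconds.
original = sys.getswitchinterval()
print(f"current switch interval: {original * 1000:.1f} ms")

# Ask the interpreter to request a GIL handoff every 2 ms instead.
sys.setswitchinterval(0.002)
tightened = sys.getswitchinterval()
print(f"tightened switch interval: {tightened * 1000:.1f} ms")

# Restore the previous value so the rest of the process is unaffected.
sys.setswitchinterval(original)
```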

What WSGISwitchInterval does

The directive calls sys.setswitchinterval() after interpreter initialisation, so the setting takes effect for the rest of that interpreter's life. The simplest form is at server scope.

WSGISwitchInterval 0.002

This applies to the embedded mode interpreter in Apache child processes. For daemon mode the equivalent is the switch-interval= option on WSGIDaemonProcess.

WSGIDaemonProcess my-app processes=2 threads=5 switch-interval=0.002

The directive can also appear inside the <WSGIInterpreterOptions> container introduced in the per-interpreter GIL post. If the matched sub-interpreter has its own GIL via WSGIPerInterpreterGIL, you can tune that one sub-interpreter's switch interval separately from the others in the same process.

<WSGIInterpreterOptions process-group="my-app" application-group="cpu-heavy">

    WSGIPerInterpreterGIL On

    WSGISwitchInterval 0.001

</WSGIInterpreterOptions>

Without an own-GIL on the matched sub-interpreter the directive cannot be made per-sub-interpreter, because the GIL is shared across the process and tuning it for one sub-interpreter would silently affect all of them. mod_wsgi rejects that configuration with a warning rather than silently scoping wider than the operator asked for.

Under free-threading the directive is a no-op. There is no GIL to schedule.

The default is to leave Python's own default alone. You opt in to tune.

You cannot tune what you cannot measure

The case for adjusting the switch interval rests on being able to see what happens when you change it. Python itself does not expose any direct measure of GIL contention. There is no counter you can read to ask "how much time was spent waiting for the GIL". The interpreter knows in some sense, but it does not surface the information.

mod_wsgi exposes a partial measure, surfaced as gil_wait_time. It is the time the worker thread was held up acquiring the GIL at points where mod_wsgi is doing work on the application's behalf: request dispatch, request body reads, response writes, logging. It does not see contention while the application's own Python code is running, and it cannot see contention inside C extensions that release and reacquire the GIL on their own schedule. So the value is a lower bound, not an absolute measure of contention.

That is enough to drive tuning decisions, though. The metric moves directionally with actual contention. Combined with throughput and response time, three numbers from the same telemetry stream, it is enough to tell you whether a switch interval change helped or hurt.

The rest of the post is a worked example that uses exactly those three signals.

A benchmark to make the case

The workload is a synthetic WSGI handler. Each request spends approximately 3 ms running Python code on the CPU, plus a 1 ms simulated wait standing in for a small bit of I/O, and returns a 1 KB response body. The load generator drives concurrency 10, more than enough to saturate the available workers in every configuration shown below. The workload is deliberately idealised, with no real I/O and no C extension calls, because the point is to surface the effect of GIL scheduling on pure-Python compute as clearly as possible.
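The handler used for the benchmark is not reproduced here, but a workload of that shape could look something like the following sketch. Everything in it is an assumption made for illustration: the constant names, the use of `time.thread_time()` to budget CPU work per thread, and `time.sleep()` as the stand-in for I/O.

```python
import time

CPU_SECONDS = 0.003   # roughly 3 ms of pure-Python work per request
WAIT_SECONDS = 0.001  # 1 ms simulated I/O wait
BODY = b"x" * 1024    # 1 KB response body


def application(environ, start_response):
    """Hypothetical WSGI handler approximating the described workload."""
    # Spin in Python bytecode until this thread has used its CPU budget.
    # thread_time() counts CPU time for the current thread only, so time
    # spent waiting for the GIL does not count towards the budget.
    deadline = time.thread_time() + CPU_SECONDS
    counter = 0
    while time.thread_time() < deadline:
        counter += 1

    # time.sleep releases the GIL, standing in for a short I/O wait.
    time.sleep(WAIT_SECONDS)

    headers = [
        ("Content-Type", "application/octet-stream"),
        ("Content-Length", str(len(BODY))),
    ]
    start_response("200 OK", headers)
    return [BODY]
```

Budgeting by per-thread CPU time rather than wall-clock time matters under contention: a wall-clock deadline would quietly do less work whenever the thread is stalled waiting for the GIL. The resolution of `time.thread_time()` varies by platform, so the 3 ms figure is only approximate.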

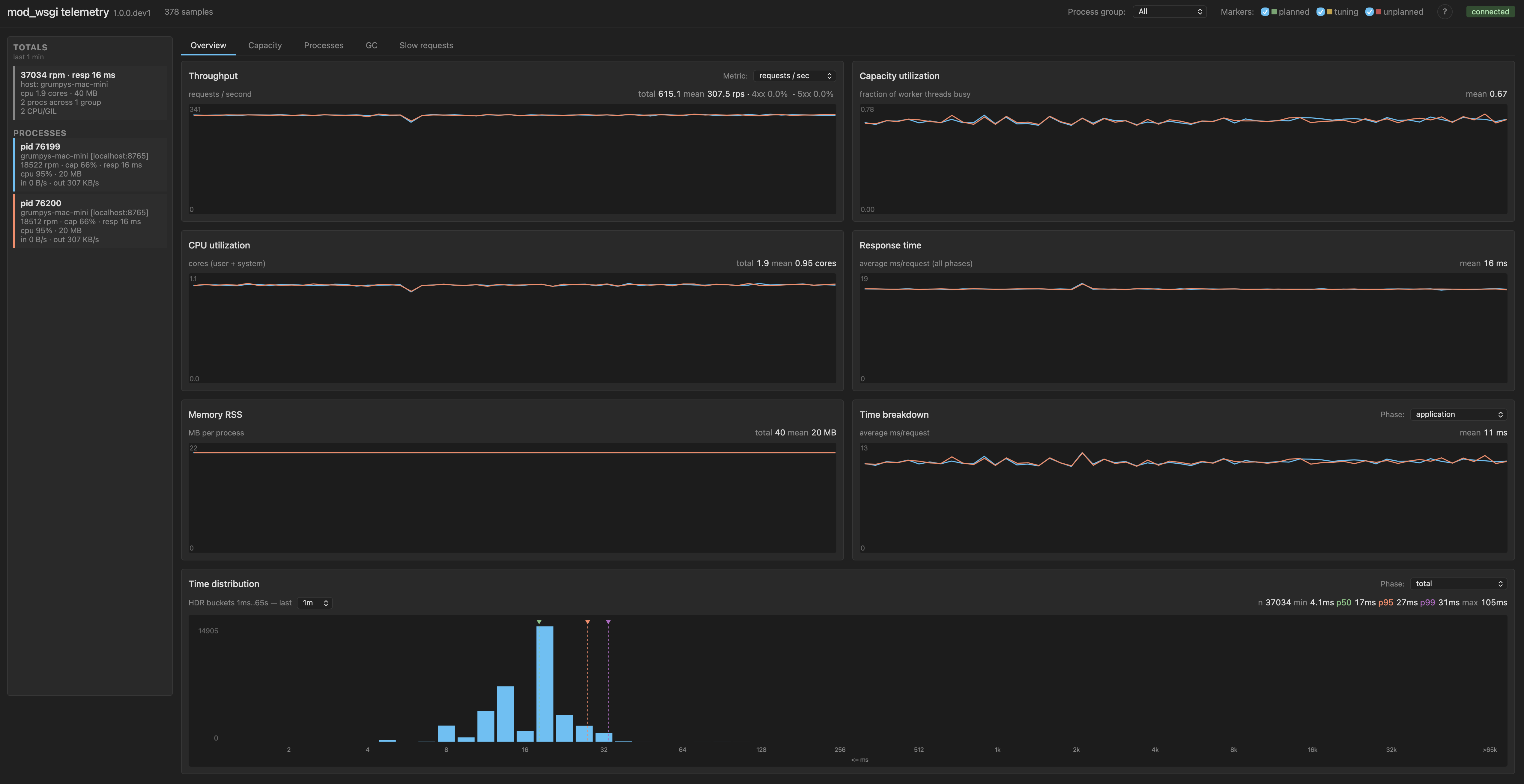

All four configurations below run on the same host, same Apache, same Python, same WSGI handler. Only the process and thread counts and the switch interval change. Each step includes a small table of the key metrics so the numbers are legible even if the dashboard screenshots are too small to read, and the table grows as we go so each configuration can be compared with the ones before.

Baseline: ten processes, one thread each

This is the no-contention reference point. Each daemon process has a single worker thread, so no two threads compete for the same GIL. Whatever GIL pressure shows up here is whatever overhead the lock adds on the dispatch and I/O paths in mod_wsgi itself, with no waiting.

WSGIDaemonProcess my-app processes=10 threads=1

The result is 134k requests per minute, 4 ms mean response time, gil_wait_time effectively zero. The GIL wait time distribution is a single bar in the head bucket, which is what no-contention looks like.

| Config | Throughput (rpm) | Mean response | Mean app time | GIL wait p95 |
|---|---|---|---|---|
| 10 × 1, 5 ms (baseline) | 134k | 4 ms | 4 ms | none |

This is the upper bound for what the workload can do on the available cores when nothing contends with anything. Roughly 13.4k rpm per process.
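As a sanity check on that number, here is a back-of-envelope calculation. It assumes each request costs exactly the 3 ms of CPU plus 1 ms of wait from the workload description and that there is no other per-request overhead, which is an idealisation rather than something the measurements state.

```python
# Per-request cost from the workload description: ~3 ms CPU + 1 ms wait.
request_seconds = 0.003 + 0.001

# One single-threaded process handles requests back to back.
ideal_rpm_per_process = 60 / request_seconds
ideal_rpm_total = 10 * ideal_rpm_per_process

# Measured baseline reported in the table above.
measured_rpm_total = 134_000
measured_rpm_per_process = measured_rpm_total / 10

efficiency = measured_rpm_total / ideal_rpm_total
print(f"ideal: {ideal_rpm_total:,.0f} rpm, measured: {measured_rpm_total:,} rpm")
print(f"baseline reaches about {efficiency:.0%} of the back-of-envelope ceiling")
```

Under those assumptions the ideal works out to about 15k rpm per process, so the measured 13.4k rpm per process sits reasonably close to what the workload allows.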

Add threads: GIL contention takes over

Keep the total worker pool at ten threads, but reshape it: two processes with five threads each. Same default 5 ms switch interval.

WSGIDaemonProcess my-app processes=2 threads=5

Throughput collapses to 37k requests per minute, about 28% of the baseline. Mean response time goes from 4 ms to 16 ms. Application time mean is now 11 ms, up from 4 ms in the baseline. Each process is now CPU/GIL-bound: five threads competing for one GIL inside the process, with cores sitting underutilised because only one thread can run Python at a time.

| Config | rpm | response | app | GIL p95 |
|---|---|---|---|---|
| 10 × 1, 5 ms (baseline) | 134k | 4 ms | 4 ms | none |
| 2 × 5, 5 ms | 37k | 16 ms | 11 ms | 13 ms |

The shape of the contention is most visible in the GIL wait time distribution chart.

The chart tells a clear story. There is a head bucket holding the requests that got their handoff immediately, then a series of bumps further out at multiples of the 5 ms switch interval. Each bump corresponds to a request that had to wait one or more switch intervals to acquire the GIL: one missed cycle, two missed cycles, three missed cycles, and so on. The bumps shrink as you move right, which is the shape of a contention pattern where missed cycles do not pile up too heavily. But the tail is fat. The percentile numbers along the top of the chart confirm this: p95 is 13 ms and p99 is 18 ms, meaning a meaningful fraction of requests are waiting several full switch intervals to make progress on Python code.
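One way to read those percentiles is to express them as a count of whole switch intervals. The sketch below does only that arithmetic on the published (rounded) numbers. The helper name is illustrative, and the result is an approximation, since a real wait also includes a partial interval at either end.

```python
def full_intervals(wait_ms, interval_ms):
    """Number of complete switch intervals that fit inside a wait."""
    return int(wait_ms // interval_ms)


SWITCH_INTERVAL_MS = 5.0
published = {"p95": 13.0, "p99": 18.0}   # GIL wait percentiles from the chart

cycles = {
    name: full_intervals(wait_ms, SWITCH_INTERVAL_MS)
    for name, wait_ms in published.items()
}

for name, count in cycles.items():
    print(f"{name}: {published[name]:.0f} ms is at least {count} full intervals")
```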

This is the textbook case for the CPU/GIL-bound label. With five threads competing for one GIL on each process, the GIL is the wall. The standard remediation is to add processes. The point of this post is that there is a second lever, which is to make each handoff cheaper rather than less frequent.

Tighten the switch interval to 2 ms

Same process and thread shape, but cut the switch interval from 5 ms to 2 ms.

WSGIDaemonProcess my-app processes=2 threads=5 switch-interval=0.002

Throughput moves from 37k to 42k requests per minute, about 13% better. Mean response time drops from 16 ms to 14 ms. The GIL wait time distribution chart is where the more interesting change shows up.

| Config | rpm | response | app | GIL p95 |
|---|---|---|---|---|
| 10 × 1, 5 ms (baseline) | 134k | 4 ms | 4 ms | none |
| 2 × 5, 5 ms | 37k | 16 ms | 11 ms | 13 ms |
| 2 × 5, 2 ms | 42k | 14 ms | 13 ms | 6 ms |

The chart is dramatically more head-heavy than at 5 ms. The head bucket now holds the bulk of the requests, where at 5 ms it was only about a fifth of them. Most requests are getting the GIL on their first try at the new interval. The smaller bumps further out are still there, but they sit closer to the head than their counterparts at 5 ms did, because each cycle is now 2 ms wide instead of 5 ms wide. The percentile numbers in the chart header confirm what the shape is showing: p50 has dropped from 5 ms to under 1 ms, p95 from 13 ms to 6 ms, p99 from 18 ms to 10 ms. Contention is both less frequent and cheaper when it does happen, and the throughput gain on the dashboard follows from that.

A reasonable stopping point for tuning the GIL switch interval on a mixed workload is around 2 ms. The reasoning is that more frequent GIL handoffs mean more context switching, and at very short intervals that overhead can start to dominate. So if you do not have telemetry that lets you see the effect on your specific workload, 2 ms is a sensible place to stop. Going lower than that is something to do only when you can measure the result and confirm that the gain is real. The benchmark workload here is not a mixed workload, and the rest of this post is the measurement story that earns the right to go further.
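Outside of mod_wsgi, the same interval can be inspected and changed from Python itself with `sys.getswitchinterval()` and `sys.setswitchinterval()`, which is handy for small experiments. The sketch below wraps them in a context manager so the previous value is restored afterwards. The `switch_interval` helper is an illustrative name, and this is not a description of how mod_wsgi applies its own setting.

```python
import sys
from contextlib import contextmanager


@contextmanager
def switch_interval(seconds):
    """Temporarily change the interpreter's GIL switch interval."""
    previous = sys.getswitchinterval()   # 0.005 on a stock CPython
    sys.setswitchinterval(seconds)
    try:
        yield previous
    finally:
        sys.setswitchinterval(previous)  # always put the old value back


with switch_interval(0.002) as previous:
    inside = sys.getswitchinterval()     # value in effect inside the block

after = sys.getswitchinterval()
print(f"before={previous} inside={inside} after={after}")
```

The setting is process-wide, so in a real deployment it belongs in server configuration rather than in request-handling code.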

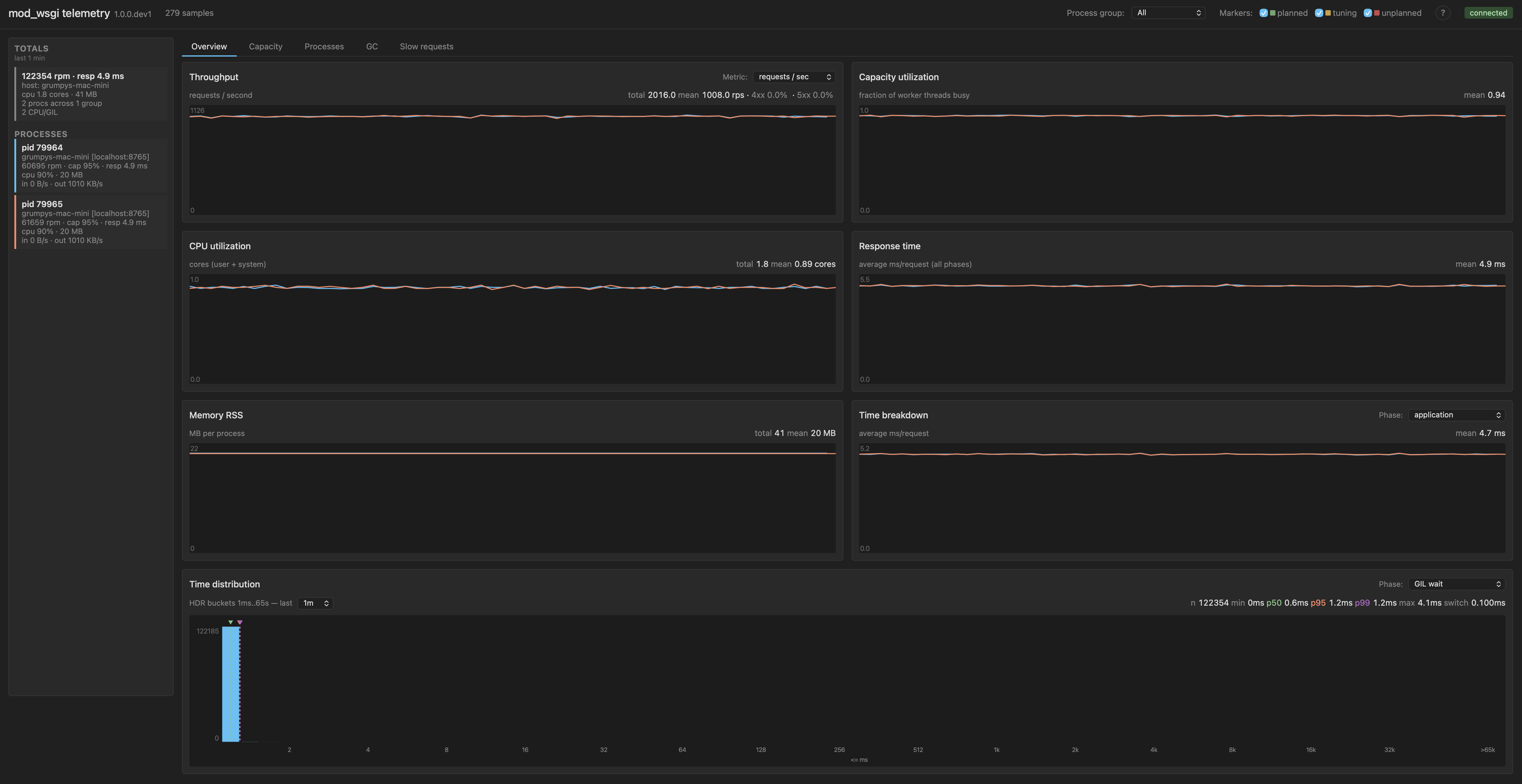

Tighten further to 0.1 ms

Same shape again, but switch interval down to 0.1 ms.

WSGIDaemonProcess my-app processes=2 threads=5 switch-interval=0.0001

Throughput jumps to 121k requests per minute. That is within roughly 10% of the no-contention baseline of 134k. Mean response time is back to 5 ms. Application time mean is back down to around 4.7 ms, close to its baseline value of 4.3 ms.

| Config | rpm | response | app | GIL p95 |
|---|---|---|---|---|
| 10 × 1, 5 ms (baseline) | 134k | 4 ms | 4 ms | none |
| 2 × 5, 5 ms | 37k | 16 ms | 11 ms | 13 ms |
| 2 × 5, 2 ms | 42k | 14 ms | 13 ms | 6 ms |
| 2 × 5, 0.1 ms | 121k | 5 ms | 5 ms | 1 ms |

The GIL wait time distribution collapses back to essentially the head bucket.

The bumps are gone. p95 and p99 are both 1.2 ms, which is essentially "everything fits in the first bucket of the histogram". What is going on at this setting is that the switch interval is now much shorter than the per-request CPU cost. Handoffs happen many times during a single request's CPU work, so threads are interleaving at fine granularity rather than passing the GIL around in big chunks. There is no missed-cycle structure left for waiters to pile up on. Handoffs are continuous rather than periodic.
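The relationship between the switch interval and the per-request CPU cost can be made concrete with a small calculation. This sketch assumes the nominal 3 ms of CPU work per request from the workload description. Everything is kept in whole microseconds to avoid floating-point noise, and the names are illustrative.

```python
CPU_US = 3_000  # nominal CPU work per request, in microseconds


def intervals_per_request(interval_us, cpu_us=CPU_US):
    """How many switch intervals elapse during one request's CPU work."""
    return cpu_us / interval_us


settings = [("5 ms", 5_000), ("2 ms", 2_000), ("0.1 ms", 100)]
ratios = {label: intervals_per_request(us) for label, us in settings}

for label, ratio in ratios.items():
    print(f"switch interval {label}: {ratio:g} intervals per request")
```

At 5 ms and 2 ms the ratio is below or close to one, so a contended thread tends to hold the GIL for most or all of its request's CPU work. At 0.1 ms the ratio is thirty, which is the fine-grained interleaving described above.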

The workload is still CPU/GIL-bound in shape. Threads still spend most of their wall time holding a request without consuming CPU on it directly, because at any given instant only one thread per process can run Python. That structural fact has not changed. But the measured throughput cost of that shape has nearly vanished. The new switch interval has just made the cost of being that workload small enough not to hurt.

What this means

The default 5 ms switch interval is conservative for a pure-Python CPU-bound workload. For workloads of that shape the knob is real, and the gain can be substantial. Three observations follow from that, all of them important.

Most WSGI applications do not look like this benchmark. The typical web request spends most of its time in I/O, in database calls, in C extensions like JSON parsers or template engines, in HTTP client libraries. Most of those release the GIL during their slow phase. For those workloads the default is probably fine, and tuning the switch interval will not move much.

Stopping at around 2 ms is a sensible default for a mixed workload. It is not the answer for every workload, though. If you have endpoints that are heavy on Python compute (data processing endpoints, ML preprocessing, anything that does meaningful work in pure Python before returning), those endpoints may be in the same regime as this benchmark, and the same lever can apply. The further down you go past 2 ms, the more important it is to have the telemetry that confirms you are actually winning rather than guessing.

The way you find out is by measuring. Throughput, response time, and gil_wait_time on the same telemetry stream, with the switch interval as the only variable, is enough to tell you whether tuning helps for your workload.
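The same one-variable discipline can be tried in miniature without a web server. The sketch below is not mod_wsgi-telemetry and does not measure gil_wait_time. It is a standalone stand-in that runs a fixed threaded workload under several switch intervals and reports throughput and end-to-end latency. All names and default values are illustrative, and the numbers it prints will not match the dashboard figures in this post.

```python
import statistics
import sys
import threading
import time


def handle_request(cpu_seconds=0.003, wait_seconds=0.001):
    """Stand-in for one request: pure-Python CPU work, then a short wait."""
    deadline = time.thread_time() + cpu_seconds
    while time.thread_time() < deadline:
        pass
    time.sleep(wait_seconds)


def run_trial(interval, threads=5, requests_per_thread=20):
    """Run the stand-in workload under one switch interval and summarise it."""
    latencies = []
    lock = threading.Lock()

    def worker():
        for _ in range(requests_per_thread):
            start = time.perf_counter()
            handle_request()
            elapsed = time.perf_counter() - start
            with lock:
                latencies.append(elapsed)

    previous = sys.getswitchinterval()
    sys.setswitchinterval(interval)      # the only variable between trials
    try:
        pool = [threading.Thread(target=worker) for _ in range(threads)]
        began = time.perf_counter()
        for thread in pool:
            thread.start()
        for thread in pool:
            thread.join()
        wall = time.perf_counter() - began
    finally:
        sys.setswitchinterval(previous)  # restore whatever was set before

    return {
        "interval_ms": interval * 1000,
        "requests": len(latencies),
        "requests_per_sec": len(latencies) / wall,
        "mean_ms": statistics.mean(latencies) * 1000,
        # 19 cut points at 5% steps; the last one is the 95th percentile
        "p95_ms": statistics.quantiles(latencies, n=20)[18] * 1000,
    }


if __name__ == "__main__":
    for interval in (0.005, 0.002, 0.0001):
        print(run_trial(interval))
```

A harness like this only shows the direction of the effect for one synthetic shape. Whether the change helps a real application is still a question for measurements against real traffic.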

Caveats

More frequent GIL handoffs mean more context switching. There is a cost to that, and at some interval that cost begins to dominate the gain. The benchmark workload here does not show that cost emerging at 0.1 ms, but that is partly because the workload is idealised. With real concurrency patterns and real I/O it would emerge sooner.

Tuning the switch interval down does not fix GIL contention inside C extensions that manage their own GIL acquire and release. If your contention lives inside NumPy or a database driver, this knob does not reach it.

The right framing is that this is a tuning lever for a specific class of workload, not a default to flip across the board. Use it where the measurements say it helps. Leave it alone where they say it does not.

What's next

If you run mod_wsgi and the case above is interesting for your workload, please install the 6.0.0 release candidate, try WSGISwitchInterval against your real traffic, and file issues against the GitHub project for anything that does not behave the way the documentation suggests it should.

This post has leaned heavily on telemetry from mod_wsgi-telemetry, the companion tool that records and visualises the metrics shown in the screenshots above. The argument for tuning at all rests on having that visibility, and the screenshots here are what the tool surfaces out of the box. That tool is going to be the subject of a follow-up series. Before we get to that, though, the next post will revisit the free-threading configuration from earlier in this series and look at how performance under it shows up in the same request metrics used here.

For reference:

- mod_wsgi documentation

- mod_wsgi 6.0.0 release notes

- Per-interpreter GIL and free-threading user guide

- WSGISwitchInterval directive documentation

- Previous post: Per-interpreter GIL in mod_wsgi 6.0.0

- Previous post: Free-threading in mod_wsgi 6.0.0

26 May 2026 10:38am GMT