24 May 2026

Planet Grep

Staf Wagemakers: Ansible roles: proxy_env, ssh, etc_hosts, libvirt released

I made some time to work on a few Ansible roles that I maintain. You'll find the new releases below.

stafwag.proxy_env 2.1.0

An Ansible role to set up the proxy environment in the shell environment ( /etc/profile & /etc/csh_cshrc ) and the package manager. The following package managers are supported:

- apt

- pacman

- pkgng

- Suse (on Suse /etc/sysconfig/proxy is configured)

- yum

stafwag.proxy_env 2.1.0 is available at:

- https://github.com/stafwag/ansible-role-proxy_env

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/proxy_env/

Changelog

stafwag.proxy_env 2.1.0

- Create pkg.conf on FreeBSD if it does not exist

- Corrected ansible-lint errors

- Clean up

stafwag.ssh 1.1.1

An ansible role to manage sshd/ssh

stafwag.ssh 1.1.1 is available at:

- https://github.com/stafwag/ansible-role-ssh

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/ssh/

Changelog

stafwag.ssh 1.1.1

- BUGFIX: Corrected handler name

stafwag.ssh 1.1.0

- Corrected last ansible-lint errors

- Example playbooks added

- Documentation updated

stafwag.libvirt 2.1.0

An ansible role to install libvirt/KVM packages and enable the libvirtd service.

stafwag.libvirt 2.1.0 is available at:

- https://github.com/stafwag/ansible-role-libvirt

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/libvirt/

Changelog

stafwag.libvirt 2.1.0

- Update ArchLinux packages

- update_ssh_known_hosts directive added to allow updating the SSH host key after the virtual machine is installed.

- Documentation updated

- Debug code added

stafwag.etc_hosts 1.1.1

An ansible role to manage /etc/hosts

stafwag.etc_hosts 1.1.1 is available at:

- https://github.com/stafwag/ansible-role-etc_hosts

- https://galaxy.ansible.com/ui/standalone/roles/stafwag/etc_hosts/

Changelog

stafwag.etc_hosts 1.1.1

- Clean up

- Ansible-lint errors corrected

- Documentation updated

Have fun!

24 May 2026 3:00pm GMT

Jan De Luyck: Integrating the Ikea Starkvind with Home Assistant using deCONZ

Ikea Starkvind and Home Assistant

We recently bought an Ikea Starkvind Air Purifier, which supports Zigbee. I wanted to find out what I could do with it from within Home Assistant, possibly automating when it runs and when not. I also wanted to add some UI elements, like the mushroom fan card or the air purifier card, both of which rely on there being a fan entity.

Zigbee integration with deCONZ

I already had a ConbeeII Zigbee USB controller in use with the deCONZ Integration. Pairing the Starkvind was a matter of telling the Phoscon Software (which comes with the deCONZ integration) to scan for new sensors and pushing the pairing button on the Starkvind.

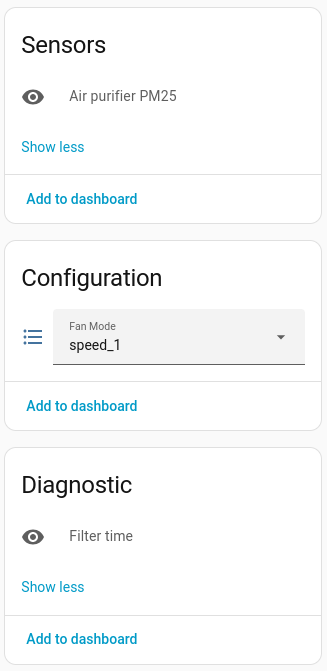

Surprisingly enough, only three entities showed up in Home Assistant:

- Air Purifier PM25

- Fan Mode

- Filter Runtime

deCONZ entities exposed through Home Assistant for the Starkvind Air Purifier

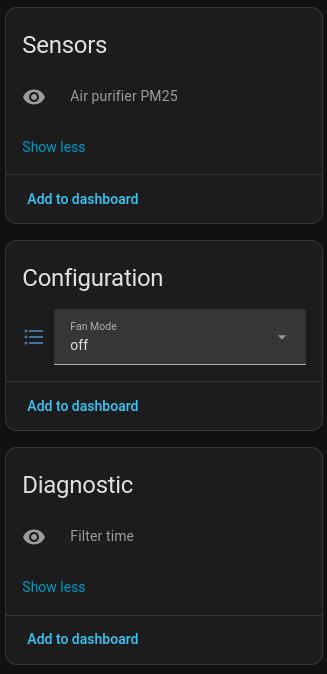

When looking in the deCONZ application there were a lot more attributes:

deCONZ cluster information

The deCONZ integration uses a Python library for deCONZ, and in issue #322 I found that only these three items had actually been requested. I have since requested some more, but it's uncertain if and when those will be made available.

I came across this blog post by OyWin, detailing how they used the REST sensors to add their Starkvind into Home Assistant. While the approach was definitely the right way to go, I was not a fan of doing so many individual REST calls (one per sensor) as it's not needed - Home Assistant can handle it in 1 call per REST-API target.

deCONZ REST-API

Checking the deCONZ REST-API documentation for the Starkvind, there are a lot more attributes available, published under different devices: ZHAAirPurifier and ZHAParticulateMatter

The ones I wanted were:

ZHAAirPurifier

| Section | Attribute | Exposed via deCONZ integration | R/O or R/W |
|---|---|---|---|
| Config | filterlifetime | x | Read/Write |
| Config | ledindication | x | Read/Write |
| Config | locked | x | Read/Write |
| Config | mode | ✓ | Read/Write |
| Config | on | x | Read Only |
| State | deviceruntime | x | Read Only |
| State | filterruntime | ✓ | Read Only |
| State | lastupdated | x | Read Only |
| State | replacefilter | x | Read Only |
| State | speed | x | Read Only |

ZHAParticulateMatter

| Section | Attribute | Exposed via deCONZ integration | R/O or R/W |
|---|---|---|---|
| State | measured_value | ✓ | Read Only |
| State | airquality | x | Read Only |

Time to get those into Home Assistant.

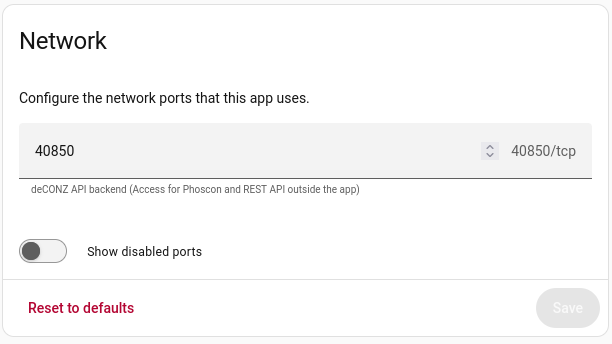

Configuring the deCONZ REST-API port

In order to be able to query the deCONZ REST-API, you need to make sure a port is configured in Home Assistant → Settings → Apps → deCONZ → Configuration

deCONZ App Network Configuration

If this port is set, you'll be able to issue HTTP queries to the URL of your Home Assistant installation on the port specified. In my case this is http://home-assistant.internal:40850/

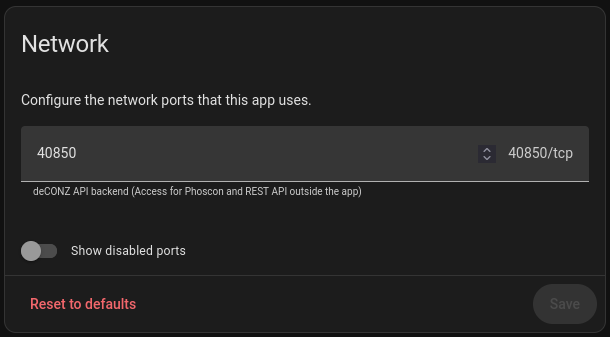

Finding the correct API urls

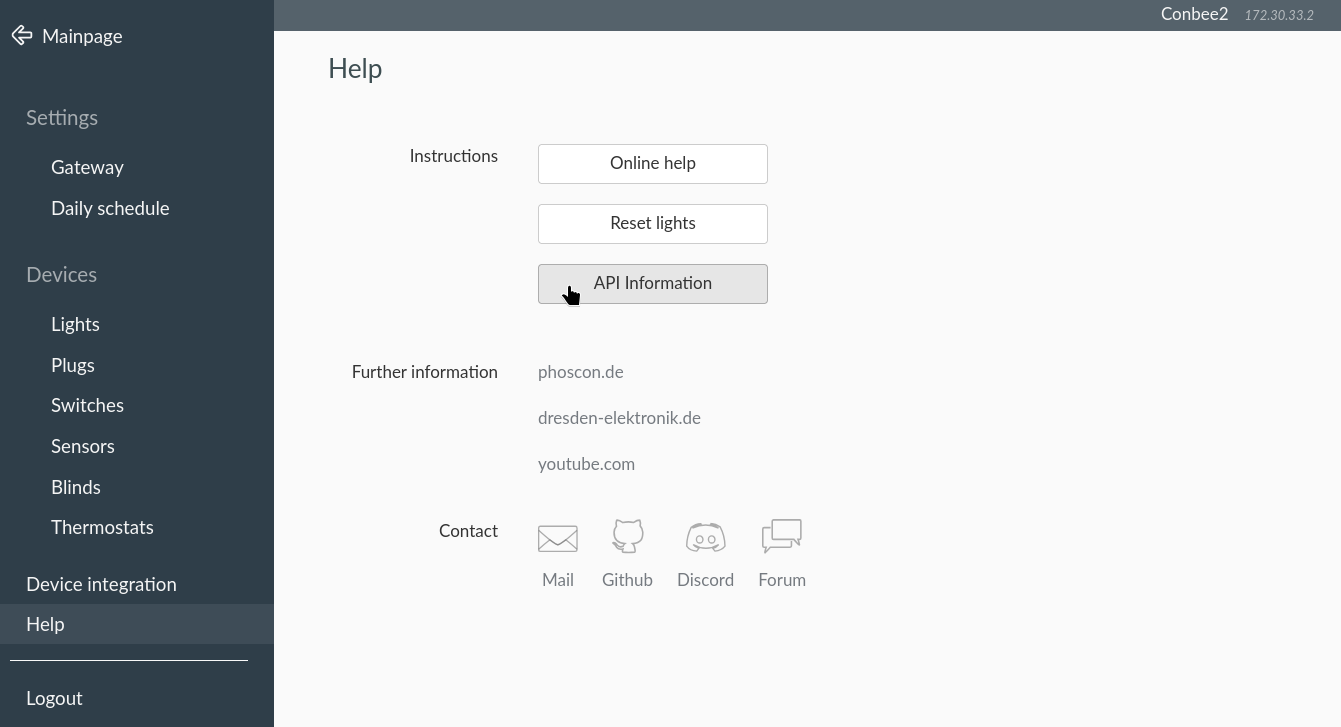

To find the correct API endpoints to use, go to Home Assistant → deCONZ → Phoscon. Open the hamburger menu on the left and pick Help → API Information.

Phoscon API Information screen

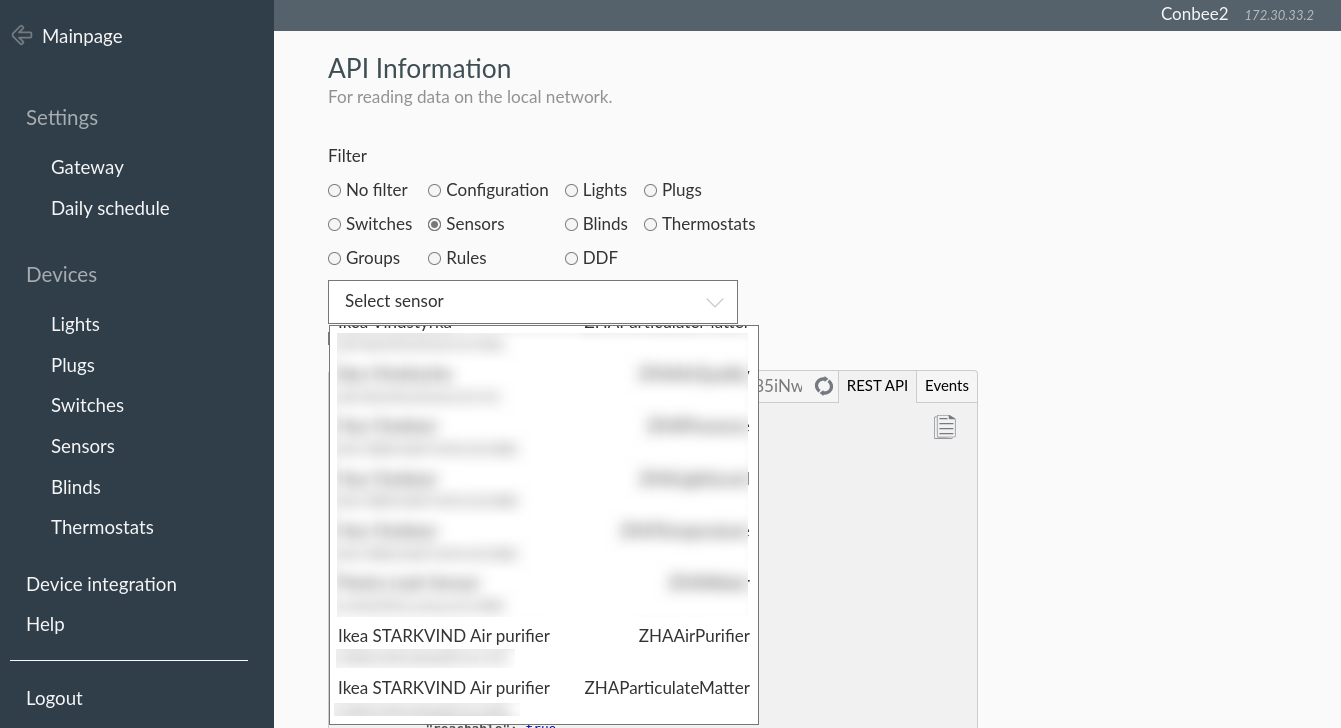

In the subsequent screen, pick "Sensors" and look for "Ikea STARKVIND Air Purifier". You should find two entries in the dropdown:

Phoscon API Information for the Starkvind Air Purifier

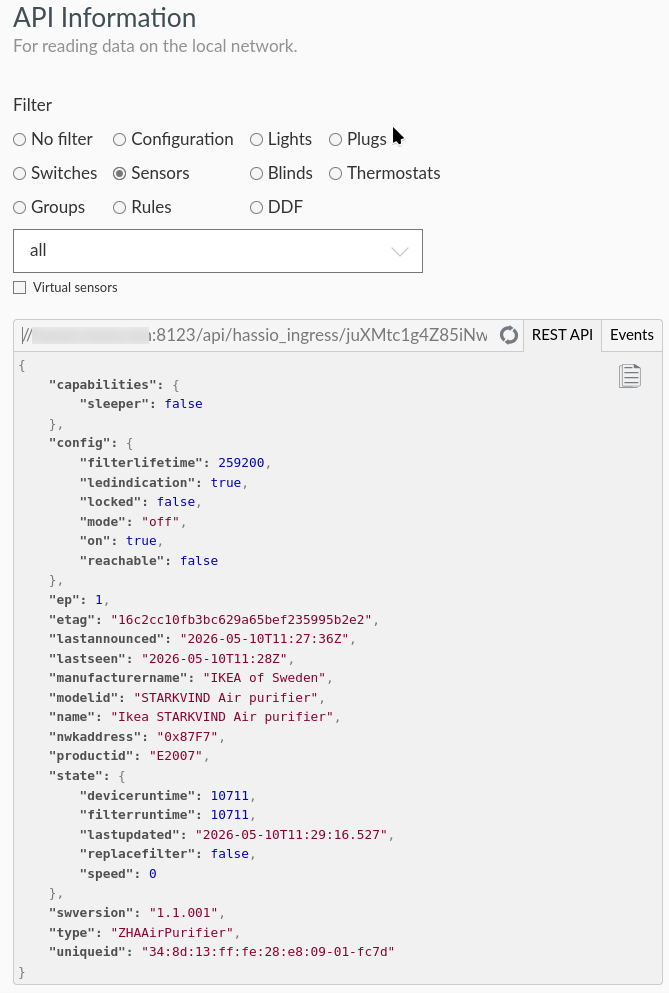

Once you click on one of the sensors, you will get a dump of what the API returns, and on top of that window, the API endpoint URL. In my example this reads:

//home-assistant.internal:8123/api/hassio_ingress/juXMtc1g4Z85iNwXSis58q2z7Kw7XO0Lz5k2X6cBsZ0/api/792DA42905/sensors/93. The converted direct unauthenticated URL becomes http://home-assistant.internal:40850/api/792DA42905/sensors/93.

Phoscon API information for the ZHAAirPurifier entity of the Starkvind Air Purifier

792DA42905 is your own API key, and 93 is the internal numbering of deCONZ for your sensor.
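The conversion described above can be sketched in a few lines of Python. This is only an illustration: the function name `ingress_to_direct` is made up, and it assumes the deCONZ part of the ingress URL always ends in `/api/<apikey>/sensors/<sensor-id>`, as it does in my example.

```python
import re


def ingress_to_direct(ingress_url: str, host: str, port: int) -> str:
    """Rewrite a Phoscon ingress URL into a direct deCONZ REST-API URL.

    Assumption: the URL ends in /api/<apikey>/sensors/<sensor-id>.
    """
    # Anchoring on the end of the string skips the earlier
    # /api/hassio_ingress/... part of the ingress URL.
    match = re.search(r"/api/([^/]+)/sensors/(\d+)/?$", ingress_url)
    if match is None:
        raise ValueError("no /api/<apikey>/sensors/<sensor-id> suffix found")
    api_key, sensor_id = match.groups()
    return f"http://{host}:{port}/api/{api_key}/sensors/{sensor_id}"


ingress = (
    "//home-assistant.internal:8123/api/hassio_ingress/"
    "juXMtc1g4Z85iNwXSis58q2z7Kw7XO0Lz5k2X6cBsZ0/api/792DA42905/sensors/93"
)
print(ingress_to_direct(ingress, "home-assistant.internal", 40850))
```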

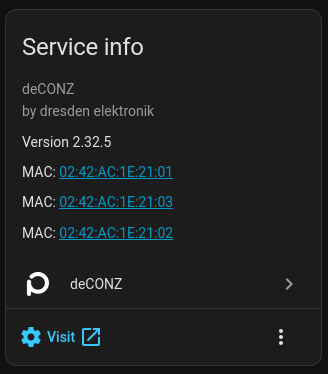

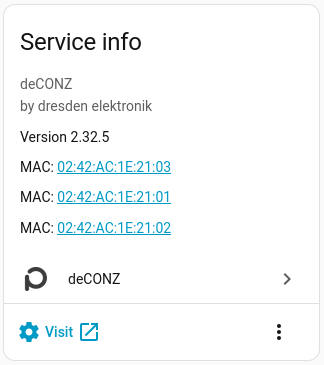

Now, this URL allows you to query the API from the outside. I did not need this, as I wanted to run the queries from inside Home Assistant. You can find the internal URL by going to Home Assistant → Settings → Devices & services, selecting the deCONZ integration and picking the Conbee2. In the Service Info there is a "Visit" link, which shows you the internal hostname to use.

deCONZ Conbee2 Service Information

This will usually be core-deconz, so the URL becomes http://core-deconz:40850/api/<apikey>/sensors/<sensor-id>.
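To illustrate why one call per REST-API target is enough, here is a small Python sketch that takes a single sensor response and pulls several attributes out of it. The payload is a hypothetical sample: the field names follow the tables above, but the values are made up, and the helper names `sensor_url` and `extract` are mine.

```python
import json

# Hypothetical response body for one sensor; values are invented.
SAMPLE_PAYLOAD = json.loads("""
{
  "config": {"filterlifetime": 259200, "ledindication": true,
             "locked": false, "mode": "auto", "on": true},
  "state": {"deviceruntime": 1200, "filterruntime": 1100,
            "lastupdated": "2026-05-24T15:00:00.000",
            "replacefilter": false, "speed": 20}
}
""")

# (section, attribute) pairs to read from the single response.
WANTED = {
    "led_indication": ("config", "ledindication"),
    "locked": ("config", "locked"),
    "filter_lifetime": ("config", "filterlifetime"),
    "filter_runtime": ("state", "filterruntime"),
    "device_runtime": ("state", "deviceruntime"),
    "replace_filter": ("state", "replacefilter"),
    "fan_speed": ("state", "speed"),
}


def sensor_url(host: str, port: int, api_key: str, sensor_id: int) -> str:
    """Assemble the URL in the form shown above."""
    return f"http://{host}:{port}/api/{api_key}/sensors/{sensor_id}"


def extract(payload: dict) -> dict:
    """Pick every wanted attribute out of one decoded response."""
    return {name: payload[section][key]
            for name, (section, key) in WANTED.items()}


print(sensor_url("core-deconz", 40850, "792DA42905", 93))
print(extract(SAMPLE_PAYLOAD))
```

Each entry in `WANTED` plays the same role as one `value_template` in a REST sensor: a path into the same JSON document.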

Home Assistant Configuration

Creating the REST sensors

Using the URL assembled above I added the sensor and binary_sensor entities to Home Assistant.

    rest:
      - resource: http://core-deconz:40850/api/792DA42905/sensors/93
        binary_sensor:
          - name: Ikea Starkvind Led Indication
            value_template: "{{ value_json.config.ledindication }}"
            unique_id: ikea_starkvind_led_indication
          - name: Ikea Starkvind Locked
            value_template: "{{ value_json.config.locked }}"
            unique_id: ikea_starkvind_locked
          - name: Ikea Starkvind Sensor On
            value_template: "{{ value_json.config.on }}"
            unique_id: ikea_starkvind_sensor_on
          - name: Ikea Starkvind Replace Filter
            value_template: "{{ value_json.state.replacefilter }}"
            unique_id: ikea_starkvind_replace_filter
        sensor:
          - name: Ikea Starkvind Filter Runtime
            value_template: "{{ value_json.state.filterruntime }}"
            unique_id: ikea_starkvind_filter_runtime
            device_class: duration
            unit_of_measurement: min
          - name: Ikea Starkvind Device Runtime
            value_template: "{{ value_json.state.deviceruntime }}"
            unique_id: ikea_starkvind_device_runtime
            device_class: duration
            unit_of_measurement: min
          - name: Ikea Starkvind Filter Lifetime
            value_template: "{{ value_json.config.filterlifetime }}"
            unique_id: ikea_starkvind_filter_lifetime
            device_class: duration
            unit_of_measurement: min
          - name: Ikea Starkvind Mode
            value_template: "{{ value_json.config.mode }}"
            unique_id: ikea_starkvind_mode
          - name: Ikea Starkvind Fan Speed
            value_template: "{{ value_json.state.speed }}"
            unique_id: ikea_starkvind_fan_speed
            state_class: measurement
          - name: Ikea Starkvind Last Updated
            value_template: "{{ value_json.state.lastupdated + 'Z' }}"
            unique_id: ikea_starkvind_lastupdated
            device_class: timestamp

Enabling setting the led indicator

To be able to update ledindication, I added a toggle helper (input_boolean) called ikea_starkvind_ledindication, a rest_command to set the value, and an automation to bind the two together:

    # REST command
    rest_command:
      ikea_starkvind_set_ledindication:
        url: "http://core-deconz:40850/api/792DA42905/sensors/93"
        method: put
        content_type: "application/json; charset=utf-8"
        payload: '{ "config": { "ledindication": {{ states("input_boolean.ikea_starkvind_ledindication") | bool | lower }}}}'

    # Automation binding the toggle to the REST command
    alias: Ikea Starkvind - Sync Led Indication
    description: ""
    triggers:
      - trigger: state
        entity_id:
          - input_boolean.ikea_starkvind_ledindication
    actions:
      - action: rest_command.ikea_starkvind_set_ledindication
        data: {}
    mode: single
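For debugging, it can help to see what the rest_command is meant to send. The sketch below builds an equivalent PUT request with Python's standard library without sending it. The helper name `build_led_request` is made up, and the URL is the one from my example.

```python
import json
import urllib.request


def build_led_request(url: str, led_on: bool) -> urllib.request.Request:
    """Build (but do not send) the PUT request that toggles the LED."""
    # json.dumps renders True/False as JSON true/false, which is what
    # the "| bool | lower" filters achieve in the template payload.
    body = json.dumps({"config": {"ledindication": led_on}}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="PUT",
        headers={"Content-Type": "application/json; charset=utf-8"},
    )


req = build_led_request(
    "http://core-deconz:40850/api/792DA42905/sensors/93", True
)
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))
```

Passing the request to `urllib.request.urlopen(req)` would actually send it, so only do that against your own gateway.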

Additional sensors

I also added a template sensor to calculate the lifetime left of the filter:

    template:
      - sensor:
          name: Ikea Starkvind Filter Lifetime Remaining
          state: "{{ states('sensor.ikea_starkvind_filter_lifetime') | int - states('sensor.ikea_starkvind_filter_runtime') | int }}"
          unique_id: ikea_starkvind_filter_lifetime_remaining
          device_class: duration
          unit_of_measurement: min
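The arithmetic behind this sensor is a plain subtraction in minutes. As a quick sanity check, here it is in Python, together with a conversion to days. The function names and the sample numbers are hypothetical.

```python
def remaining_minutes(lifetime_min: int, runtime_min: int) -> int:
    """Filter lifetime left, in minutes (lifetime minus runtime)."""
    return lifetime_min - runtime_min


def minutes_to_days(minutes: float) -> float:
    """Convert minutes to days, rounded to one decimal."""
    return round(minutes / 60 / 24, 1)


# Made-up example: a 259200 minute lifetime with 43200 minutes used.
left = remaining_minutes(259200, 43200)
print(left, "min =", minutes_to_days(left), "days")
```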

Creating a Fan entity

To use the premade cards I needed a fan entity. This can be created as a template, based off of the previously created entities:

    - fan:
        - name: "IKEA Starkvind"
          unique_id: ikea_starkvind_fan
          availability: "{{ states('select.ikea_starkvind_fan_mode') not in ['unknown', 'unavailable'] }}"
          state: "{{ states('select.ikea_starkvind_fan_mode') != 'off' }}"
          percentage: >
            {% set map = {
              'speed_1': 20, 'speed_2': 40, 'speed_3': 60,
              'speed_4': 80, 'speed_5': 100
            } %}
            {{ map.get(states('select.ikea_starkvind_fan_mode'), 0) }}
          preset_mode: >
            {% if states('select.ikea_starkvind_fan_mode') == 'auto' %}auto{% endif %}
          preset_modes:
            - auto
          speed_count: 5
          turn_on:
            action: select.select_option
            target:
              entity_id: select.ikea_starkvind_fan_mode
            data:
              option: auto
          turn_off:
            action: select.select_option
            target:
              entity_id: select.ikea_starkvind_fan_mode
            data:
              option: "off"
          set_percentage:
            action: select.select_option
            target:
              entity_id: select.ikea_starkvind_fan_mode
            data:
              option: >
                {% if percentage == 0 %} off
                {% elif percentage <= 20 %} speed_1
                {% elif percentage <= 40 %} speed_2
                {% elif percentage <= 60 %} speed_3
                {% elif percentage <= 80 %} speed_4
                {% else %} speed_5
                {% endif %}
          set_preset_mode:
            action: select.select_option
            target:
              entity_id: select.ikea_starkvind_fan_mode
            data:
              option: "{{ preset_mode }}"
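The two templates in the fan entity are each other's inverse: one maps a fan mode to a percentage, the other maps a percentage back to a fan mode. The same logic in Python, as a sketch for checking the boundaries (function names are mine):

```python
# Same mapping as in the percentage template.
MODE_TO_PERCENTAGE = {
    "speed_1": 20, "speed_2": 40, "speed_3": 60,
    "speed_4": 80, "speed_5": 100,
}


def mode_to_percentage(mode: str) -> int:
    """Unknown modes such as 'off' or 'auto' report 0 percent."""
    return MODE_TO_PERCENTAGE.get(mode, 0)


def percentage_to_mode(percentage: int) -> str:
    """Pick the lowest speed step that covers the requested percentage."""
    if percentage == 0:
        return "off"
    # Dicts keep insertion order, so the limits are checked low to high.
    for mode, limit in MODE_TO_PERCENTAGE.items():
        if percentage <= limit:
            return mode
    return "speed_5"


for pct in (0, 1, 20, 21, 100):
    print(pct, "->", percentage_to_mode(pct))
```

Note that in auto mode the percentage template reports 0, even though the fan is running.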

Home Assistant Cards

I tried out a few cards to see what I liked.

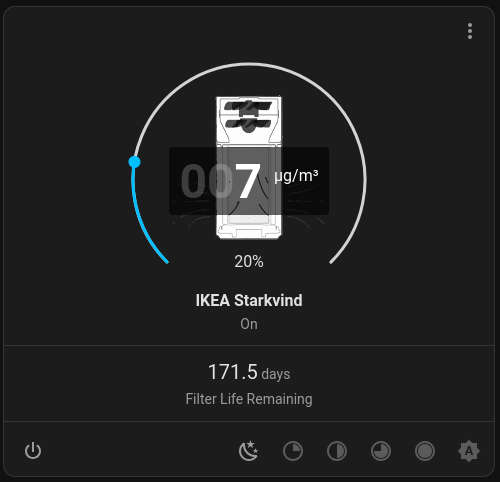

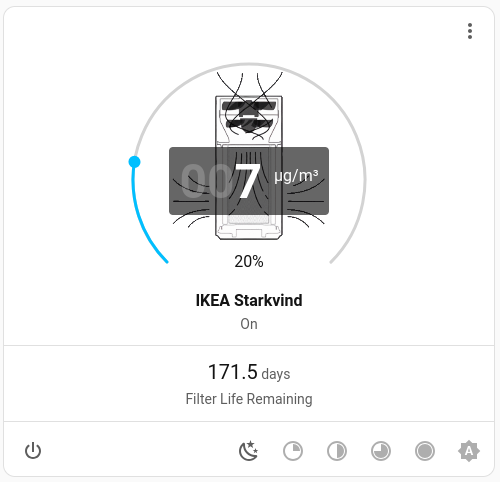

Custom Purifier Card

My first try was the custom air purifier card with this configuration:

    type: custom:purifier-card
    entity: fan.ikea_starkvind
    aqi:
      entity_id: sensor.ikea_starkvind_air_quality_measured_value
      unit: μg/m³
    stats:
      - entity_id: sensor.ikea_starkvind_filter_lifetime_remaining
        value_template: "{{ (value | float(0) / 60 / 24 ) | round(1) }}"
        unit: days
        subtitle: Filter Life Remaining
    shortcuts:
      - name: Speed 1
        icon: mdi:weather-night
        percentage: 20
      - name: Speed 2
        icon: mdi:circle-slice-2
        percentage: 40
      - name: Speed 3
        icon: mdi:circle-slice-4
        percentage: 60
      - name: Speed 4
        icon: mdi:circle-slice-6
        percentage: 80
      - name: Speed 5
        icon: mdi:circle-slice-8
        percentage: 100
      - name: Auto
        icon: mdi:brightness-auto
        preset_mode: auto

It ended up looking like this, which did not thrill me.

Air Purifier Card

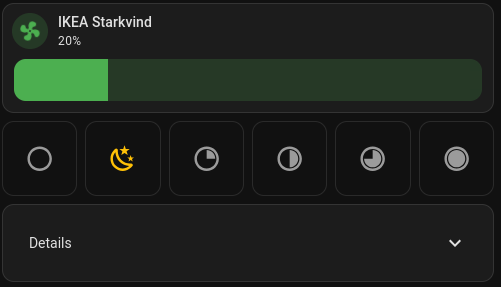

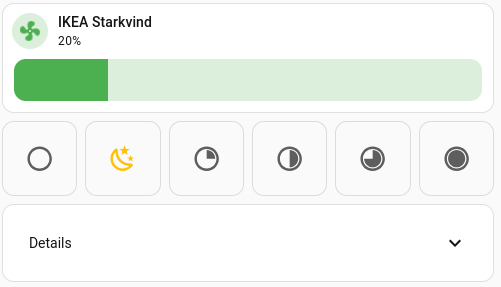

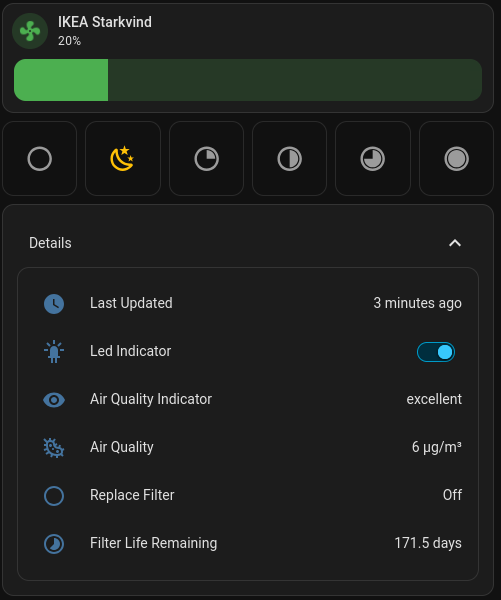

Custom cards

I cobbled something together based on the mushroom-fan-card, the button-card, the template-entity-row and the lovelace-expander-card.

    type: vertical-stack
    cards:
      - type: custom:mushroom-fan-card
        entity: fan.ikea_starkvind
        icon_animation: true
        primary_info: name
        secondary_info: state
        show_percentage_control: true
        collapsible_controls: true
        show_direction_control: false
        show_oscillate_control: false
      - type: horizontal-stack
        cards:
          - type: custom:button-card
            icon: mdi:circle-outline
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 0
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'off'
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'off'
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
          - type: custom:button-card
            icon: mdi:weather-night
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 20
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 20
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 20
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
          - type: custom:button-card
            icon: mdi:circle-slice-2
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 40
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 40
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 40
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
          - type: custom:button-card
            icon: mdi:circle-slice-4
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 60
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 60
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 60
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
          - type: custom:button-card
            icon: mdi:circle-slice-6
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 80
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 80
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 80
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
          - type: custom:button-card
            icon: mdi:circle-slice-8
            entity: fan.ikea_starkvind
            show_name: false
            aspect_ratio: 1/1
            tap_action:
              action: call-service
              service: fan.set_percentage
              data:
                percentage: 100
              target:
                entity_id: fan.ikea_starkvind
            styles:
              card:
                - background-color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 100
                        ? 'rgba(var(--rgb-state-active-color), 0.2)'
                        : 'var(--ha-card-background)';
                    ]]]
              icon:
                - color: |-
                    [[[
                      return entity.state === 'on' && entity.attributes.percentage === 100
                        ? 'var(--state-active-color)'
                        : 'var(--secondary-text-color)';
                    ]]]
      - type: custom:expander-card
        title: Details
        cards:
          - type: entities
            entities:
              - entity: sensor.ikea_starkvind_last_updated
                name: Last Updated
              - entity: input_boolean.ikea_starkvind_ledindication
                name: Led Indicator
              - entity: sensor.ikea_starkvind_air_quality
                name: Air Quality Indicator
              - type: custom:template-entity-row
                state: >-
                  {{ states('sensor.ikea_starkvind_air_quality_measured_value') }}
                  µg/m³
                name: Air Quality
                icon: mdi:bacteria-outline
              - entity: binary_sensor.ikea_starkvind_replace_filter
                name: Replace Filter
              - type: custom:template-entity-row
                name: Filter Life Remaining
                state: |-
                  {{ (states('sensor.ikea_starkvind_filter_lifetime_remaining') |
                  float(0) / 60 / 24)| round(1) }} days
                icon: mdi:timelapse
        animation: false
        clear-children: false
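The six button cards differ only in icon and percentage, so the horizontal stack could also be generated instead of written by hand. A rough Python sketch of that idea follows. The helper `speed_button` is hypothetical, and it prints JSON, which YAML parsers generally accept, so I have not verified that it pastes cleanly into the dashboard editor.

```python
import json

FAN = "fan.ikea_starkvind"


def speed_button(icon: str, percentage: int) -> dict:
    """Build one button-card config for a given fan percentage."""
    if percentage == 0:
        active = "entity.state === 'off'"
    else:
        active = ("entity.state === 'on' && "
                  f"entity.attributes.percentage === {percentage}")

    def js(when_active: str, otherwise: str) -> str:
        # button-card evaluates JavaScript between [[[ and ]]].
        return f"[[[ return {active} ? '{when_active}' : '{otherwise}'; ]]]"

    return {
        "type": "custom:button-card",
        "icon": icon,
        "entity": FAN,
        "show_name": False,
        "aspect_ratio": "1/1",
        "tap_action": {
            "action": "call-service",
            "service": "fan.set_percentage",
            "data": {"percentage": percentage},
            "target": {"entity_id": FAN},
        },
        "styles": {
            "card": [{"background-color": js(
                "rgba(var(--rgb-state-active-color), 0.2)",
                "var(--ha-card-background)")}],
            "icon": [{"color": js(
                "var(--state-active-color)",
                "var(--secondary-text-color)")}],
        },
    }


BUTTONS = [
    ("mdi:circle-outline", 0), ("mdi:weather-night", 20),
    ("mdi:circle-slice-2", 40), ("mdi:circle-slice-4", 60),
    ("mdi:circle-slice-6", 80), ("mdi:circle-slice-8", 100),
]
cards = [speed_button(icon, pct) for icon, pct in BUTTONS]
print(json.dumps({"type": "horizontal-stack", "cards": cards}, indent=2))
```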

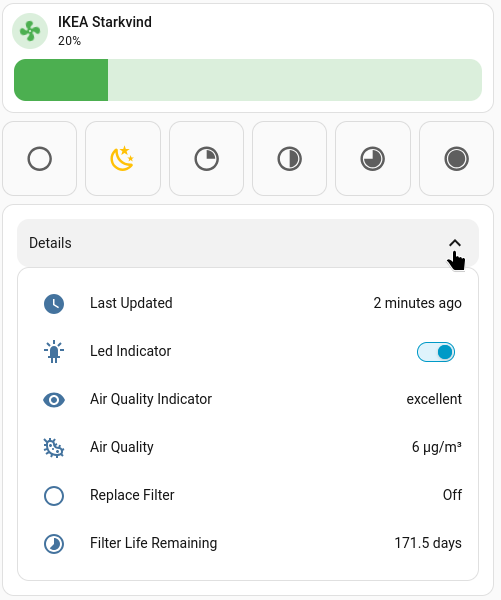

This one I kept :)

Collapsed custom card

Unfolded custom card

24 May 2026 3:00pm GMT

Frederic Descamps: Long live to dbdeployer!

As you know, MySQL-Sandbox and then dbdeployer have always been part of the Swiss Army knife for DBAs trying to evaluate, test, or reproduce issues with a certain version of their database. The author, Giuseppe Maxia, aka the datacharmer, produced incredible work on these two projects. Unfortunately, Giuseppe decided to archive the project in 2023. […]

24 May 2026 3:00pm GMT

Frederic Descamps: Dealing with caching_sha2_password as authentication method in MariaDB Server

MariaDB Server supports different authentication methods just like MySQL. Depending on your installation, the number of available and active authentication plugins can vary. But you should always have those 3 by default: If we compare with MySQL 9.7, we can see only 2 default authentication plugins: About caching_sha2_password module In some packaging, the caching_sha2_password authentication […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 5

We are concluding our series related to new data types using the Type_handler framework, with some limitations that are not yet covered by the framework: It would have been handy for our MONEY datatype to have the possibility to define, for example, the currency to show. Or the format to have something like this: Unfortunately, […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 4

This is part 4 of a series related to extending MariaDB with a custom data type using the Type_handler framework. You can find the previous articles below: Overriding Existing Types In the previous examples, our MONEY data type inherits from DOUBLE and then we override some methods. But all the methods of every type cannot […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 3

In the previous article, we wrote, compiled, and tested our first custom data type for MariaDB using the Type_handler framework. But currently, aside from allowing the use of its new name (MONEY) and listing it in the metadata, our new data type behaves exactly like a DOUBLE, the class it inherits from. In this article, […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 2

After having discovered the Type_handler framework and learned how to build MariaDB Server from source, it's time to code our first data type! We will create a MariaDB plugin that registers a new MONEY type and instantiates a custom field object. Our component won't be exciting, but we want to understand how to use the […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 1

This is the first part of the series about how to add a new data type to MariaDB using the Type_handler framework. A preliminary article has already been published to start the series; it covers how to set up your development environment and compile MariaDB Server: Adding a New Data Type to MariaDB with Type_handler […]

24 May 2026 3:00pm GMT

Frederic Descamps: Adding a New Data Type to MariaDB with Type_handler – Part 0

Welcome to this new series about extending MariaDB. This series covers the addition of a new data type using the Type_handler. The goal of the entire series is to create a new plugin data type MONEY to store and display amounts with currency. Something like: Of course, the ultimate goal is to teach how to […]

24 May 2026 3:00pm GMT

Frank Goossens: Riding in the dust for beginners

This morning I rode the course of the 2025 Gravel World Championships. Pretty dusty!

24 May 2026 3:00pm GMT

Dries Buytaert: Why Drupal CMS matters

Last week at Drupal South, Pamela Barone delivered a keynote on Drupal CMS. Her talk is one of the clearest articulations I've seen of what Drupal CMS is, why it exists, and where it's headed. That shouldn't come as a surprise because Pam is the Product Lead for Drupal CMS.

Pam quoted a familiar Drupal saying: "Drupal makes hard things possible, but it also makes easy things hard." The room laughed because it's true.

Her keynote was about how Drupal CMS is helping to fix that. Drupal CMS is making Drupal easier to learn, easier to use, and easier to sell, without removing any of Drupal's power and flexibility. It brings visual page editing, a smoother path for new developers, and better project economics.

And these improvements are not just interesting for smaller projects. Universities, governments, and large enterprises want the same benefits. That is why Drupal CMS matters at every scale.

Pam also explains how Drupal CMS sits on top of Drupal Core, why it is not a Drupal distribution, how it gives digital agencies leverage, what site templates unlock, and how Drupal Canvas reshapes the page building experience.

If you watch one Drupal video this week, make it Pam's!

24 May 2026 3:00pm GMT

Dries Buytaert: The gap between Drupal and its reputation

I saw two thoughtful posts in my LinkedIn feed over the last week that I wanted to reshare here before the LinkedIn feed buried them. Both were spot on, honest, and deserve a longer shelf life.

The first was from Hynek Naceradsky:

I'm pissed.

Not at Drupal. At the people confidently hating on it without ever having understood what it actually does.

"Drupal is outdated." "Drupal is too complex." "Nobody uses Drupal anymore."

Tell that to the EU institutions, governments, universities, and enterprises quietly running mission-critical platforms on it.

Here is what actually gets me though: the Drupal community lets this narrative win.

I am guilty of this too.

We literally have thousands of contributed modules, maintained for free, by people who owe you absolutely nothing. The security team responds faster than most paid vendors. The community has been showing up for 20+ years.

And yet we're somehow losing the PR war to frameworks that can't handle a proper content workflow without three paid plugins and a prayer.

Drupal people: talk louder. Write the posts. Go to the meetups. Tell the stories, fight for Drupal.

Because the Drupal community is honestly the best thing in Open Source, and both it and Drupal deserve way better than silence.

The second was from Thomas Scola, writing from a Drupal AI event in New York (lightly trimmed):

I overheard a couple people say, "Drupal? Is that still around?"

Hell yes it is.

And not only is it still around, I'd argue pretty heavily that Drupal is uniquely positioned for what comes next with the agentic web.

API-first before API-first was cool and trendy. Structured content that actually makes sense. Mature permissions, workflows, governance, integrations.

A lot of platforms are now scrambling to figure out how AI fits into what they already built.

Drupal doesn't have to force it. The architecture has been there.

But honestly, the tech is only part of it. The community is what always gets me. The people, passion and innovation. [...]

What comes next? Who knows.

But if I'm betting on a community to adapt, build, and help define that future, I'm putting my money on this one, and on what we've all built together.

For a platform people love to ask if it's "still around", it feels more relevant than ever.

I could not agree more with both posts. Drupal is one of the strongest Open Source platforms out there right now, but too few people realize it. The Drupal community has been modernizing the platform faster than its reputation evolves.

If the loudest narrative about Drupal is that it is outdated, people will keep repeating it, even when it is wrong. AI systems will too, because they absorb the same narratives, blog posts, forum threads, and social media the rest of the industry does.

The danger is not just that Drupal is misunderstood today. It's that the gap between perception and reality may be growing, not shrinking.

The narratives we reinforce today become part of how AI describes Drupal tomorrow. The Drupal community's silence today becomes tomorrow's AI consensus.

So if you're in the Drupal community, take Hynek's advice and help set the record straight. Not for AI, but for people. Write about the great work happening in Drupal: share the case studies, the technical breakthroughs, the AI innovation, the shared learnings, and the hard problems being solved every day.

We need to spend a lot more time explaining where Drupal fits, the kinds of problems it solves well, and why so many organizations believe in Open Source and the Drupal community.

I know many people in Open Source dislike marketing or self-promotion. I do too, sometimes. But if we don't document what is great about Drupal, others will define Drupal for us.

Every accurate case study, technical blog post, demo, presentation, or community success story helps future developers, evaluators, and AI systems understand what Drupal actually is.

Drupal does not need hype. It needs a better public record.

24 May 2026 3:00pm GMT

Dries Buytaert: Acquia builds Drupal funding into its partner program

Today Acquia announced something I'm really proud of. We're calling it the Acquia Fair Trade Initiative.

When an Acquia partner closes a deal, 2% of that deal flows directly to the Drupal Association, credited in the partner's name, to fund Drupal's infrastructure and long-term growth. This is in addition to the millions of dollars Acquia already invests in Drupal each year.

Imagine an Acquia partner closes a $100,000 Drupal deal with Acquia. $2,000 goes to the Drupal Association, attributed to that partner. The 2% comes from Acquia, not from partner margins, so the partner keeps their full revenue and incentives.

The donation is publicly attributed in the Acquia Partner Portal and counts toward the partner's standing in the Drupal Association's Certified Partner Program. It is recognized as financial support for the Drupal Association, separate from non-financial contributions like code, case studies, or community participation.

Most of all, I like that this program is structural. It is not a one-time gift or sponsorship campaign. It is built into the economics of Acquia's partner program, so Drupal's funding grows automatically as Acquia and its partners grow.

Too often, funding for Open Source projects depends on periodic fundraising or individual goodwill. That can work, but it rarely scales in a predictable way.

Open Source sustainability works best when incentives align. With the Fair Trade Initiative, the Drupal Association receives more predictable funding, partners receive recognition through the Drupal Association's Certified Partner Program, and Acquia invests in the long-term health of the Drupal ecosystem its business depends on. And yes, this also creates more incentive for partners to work with Acquia on Drupal projects. Drupal wins, Acquia's partners win, and Acquia wins too. That is what incentive alignment looks like.

I set a reminder for myself to report back in a year, maybe sooner. I'm curious to see what this model can become.

24 May 2026 3:00pm GMT

Dries Buytaert: AI-generated Rector rules for Drupal

Keeping up with major Drupal Core releases takes real effort. Each release deprecates APIs and introduces new coding patterns, forcing module developers to update their code.

That is how most software evolves: old patterns are gradually replaced by better ones.

Tools like Drupal Rector help automate parts of that work, but still rely on hand-written rules. Historically, that hasn't scaled well. Writing Rector rules is often more tedious than difficult: reading change records, understanding edge cases, finding real-world usage patterns, and testing rules.

So I asked a different question: what if we didn't have to write Rector rules at all?

If AI can generate Rector rules automatically, Drupal Core can keep evolving without every API change turning into manual migration work.

That idea led me to extend Drupal Digests, the tool I built to follow key Drupal developments. In addition to generating summaries, it now also analyzes Drupal Core commits and generates Rector rules automatically.

When a Drupal Core commit deprecates an API or introduces a new pattern, the tool reads the related issue, analyzes the discussion around it, reviews the code changes, and generates a corresponding Rector rule.

The system has only been running for a few weeks, yet it has already generated over 175 Rector rules, with new rules continuously added as the pipeline processes more Drupal Core issues.

AI-generated code is far from perfect. Some rules will have bugs, and others will miss edge cases. But that is exactly why I wanted to publish them now: the more people test them on real projects, the faster they will improve.

Special thanks to Björn Brala, co-maintainer of Drupal Rector, who discovered I was working on this and quickly jumped in to help test and validate some of the generated rules. That kind of feedback is incredibly valuable.

You can try them as follows:

git clone https://github.com/dbuytaert/drupal-digests.git

composer require --dev rector/rector

vendor/bin/rector process web/modules/custom \
  --config drupal-digests/rector/all.php --dry-run

Example

Take Drupal's modernization of the $entity->original property, which exposed the unchanged copy of an entity. Drupal 11.2 deprecated the property in favor of explicit $entity->getOriginal() and $entity->setOriginal() methods. The old property will be removed in Drupal 12, so many module maintainers will have to update their code.

Drupal Digests generated a Rector rule that rewrites reads of the property to getOriginal() calls and assignments to it to setOriginal() calls.

Before:

$entity->original->field->value;

$entity->original = $unchanged;

After:

$entity->getOriginal()->field->value;

$entity->setOriginal($unchanged);
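To gauge how much code the before/after pair above would touch, a plain text search is a quick first pass before running Rector. The sketch below is only that: the function name find_original_usages and the list of file extensions are assumptions made for illustration, and it relies on the --include option found in GNU and BSD grep. Unlike a Rector rule, it does not check types, so it also reports unrelated objects that happen to have an "original" property.

```shell
#!/bin/sh
# Hypothetical helper (not part of Drupal Digests or Rector): list lines
# that still read or assign the deprecated ->original property.
find_original_usages() {
  dir="$1"
  # -r recurses, -n prints line numbers, -E enables extended regexes.
  # -e keeps grep from parsing the leading "-" of the pattern as an option.
  # The pattern requires a non-identifier character (or end of line) after
  # "original", so names such as ->originalTitle are skipped.
  grep -rnE \
    --include='*.php' --include='*.module' --include='*.inc' \
    -e '->original([^A-Za-z0-9_]|$)' "$dir"
}
```

For example, find_original_usages web/modules/custom prints one file:line:match entry per hit. grep exits with status 1 when nothing is found.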

AI-generated upgrade rules will not eliminate all upgrade work anytime soon. But even partial automation can reduce a surprising amount of repetitive work while helping Drupal evolve faster.

24 May 2026 3:00pm GMT

Dieter Plaetinck: Open Source Consulting & Advisory

I've been an enthusiastic contributor in the Open Source community for over 25 years. During my career, I've worked with and on Open Source software from multiple angles: as a builder, user, seller, customer, and investor. I've seen projects grow and falter. I've seen how licensing and business decisions can either destroy or boost projects and their communities. The biggest killer of promising projects and businesses, though, is probably blind spots: challenges that were not expected or not well understood.

I was the first engineering hire when Grafana Labs was founded. I was never a founder, board member, or executive, but I worked directly with its exceptional founders, executives and leaders. It's where I learned how to build Open Source software and enterprise OSS businesses the right way. I learned about productive collaboration with communities, with customers, and with colleagues regardless of which department they're in. I also learned the subtle interdependencies between GTM, engineering, and support, and what it really takes to build, launch, and sell products. It takes many revisions of products, their positioning, and licensing strategy (among others).

After 9 years I left Grafana and started exploring many cool OSS projects and the people building them. It turned out I have some relevant experience that is complementary to their own expertise. So I started investing in cool projects and refining my thesis on commercial Open Source models, including Open Core, Source Available, and Fair Source (see Fair Source: the next best model for commercial open source?).

Cool Open Source Software, and the people who build it, deserve support and funding.

- For funding, sometimes the answer is Venture Capital. Sometimes it's staying independent and bootstrapping, or using donations or endowments (e.g. OSS Pledge or Open Source Endowment).

- For support, sometimes you just need a free friendly chat for advice. Sometimes you need a consultant, or an advisor. I'm happy to help in any way I can.

I don't claim to be the world's foremost expert, but the few startups that worked with me were glad they did, so I decided to launch a consulting business. If this sounds interesting, check out the Consulting/advisory page for more details.

24 May 2026 3:00pm GMT