09 Jun 2026

Planet Mozilla


Firefox Tooling Announcements: Happy BMO Push Day! (20260609.1)

The following changes have been pushed to bugzilla.mozilla.org:

- Bug 2043429 - Selenium test 1_test_bug_edit.t intermittently fails when attempting to click on comment reactions

- Bug 1995467 - Show dependency tree on meta bugs by default

- Bug 2043322 - text/html attachments are downloaded instead of viewing in-browser

- Bug 2038298 - Render Phabricator revisions stack fork in the parent's row

- Bug 2045855 - phab-bot attempts to set approval flags for disabled flags

- Bug 2044471 - Add loading indicator to embedded dependency tree

- Bug 2034051 - Update Bugzilla Etiquette with guidance about AI usage

- Bug 2033409 - Pasting a link from the clipboard after using the Link button inserts additional markdown into the link

Discuss these changes in the BMO Matrix Room


09 Jun 2026 5:28pm GMT

The Mozilla Blog: Browse more privately all summer with Firefox’s free built-in VPN

For a limited time, where the VPN is available, users can get unlimited VPN bandwidth in Firefox - up from the 50 gigabytes monthly limit - plus access to over 25 country locations to browse from. Don't have Firefox yet? Try it now.

Firefox's free built-in VPN usually gives eligible users 50 GB of free bandwidth each month. From now through Aug. 31, we're making that unlimited, so you have more room to browse privately while you travel, work from public Wi-Fi or connect from somewhere new. Not only will users get unlimited bandwidth, but we're also unlocking access to 26 country locations to browse from during this period. The VPN returns to its standard 50 GB monthly limit and a standard location set on Sept. 1.

Whether you're using the airport Wi-Fi, booking a last-minute flight or trying to access websites away from home, here are a few ways Firefox's VPN can help while you travel:

Stay protected on public Wi-Fi at the airport, train station or hotel

When you're traveling, public Wi-Fi is often part of the deal. But those networks can make it easier for others to spy on your traffic and see which websites you're visiting. Firefox's built-in VPN adds another layer of privacy by helping mask your IP address and making it harder for others on the network to see your browsing activity.

Browse like you're back home

The web can feel a little funky when you go abroad. Sites may load in another language, show a different local version or have trouble recognizing where you usually browse from. Maybe you need to schedule your monthly pharmacy prescription, but the site isn't loading the way it normally does from home. Or maybe you're trying to order a new dress to your apartment but the address isn't registering properly.

With Firefox's built-in VPN, you can switch your browsing location back to your home country so the sites and services you rely on feel a little more familiar while you're away.

Or choose where you browse from

Firefox can recommend a VPN location based on what's fastest and most convenient. But you can also choose from more available locations, whether you want to browse closer to home or see how the web looks somewhere else.

The full set of countries available during this summer period includes: Australia, Austria, Belgium, Bulgaria, Canada, Chile, Colombia, Denmark, Finland, France, Germany, Ireland, Italy, Malaysia, Mexico, Netherlands, New Zealand, Portugal, Singapore, Spain, Sweden, Thailand, Norway, South Africa, United Kingdom and United States.

Turn off VPN for specific sites

Some sites don't always work smoothly with VPNs. If one is giving you trouble, you can turn the VPN off for that website right from the panel. You can also add sites to a list in advanced settings if you don't want them to connect through the VPN.

Free VPN for wherever summer takes you

Wherever you're logging on this summer, Firefox's built-in VPN gives you an easy way to add another layer of protection while you browse. More control for summer browsing, right where you need it. As it should be.

Take control of your internet

Download Firefox

The post Browse more privately all summer with Firefox's free built-in VPN appeared first on The Mozilla Blog.

09 Jun 2026 3:58pm GMT

Niko Matsakis: Only Bounds

only bounds are going to be the most impactful change to Rust that you've never heard of. They are currently being designed and developed by the Arm team (David Wood, Rémy Rakic, et al.) as part of the Sized Hierarchy and Scalable Vector Extension project goal. This post explores the feature and aims to answer a particular question about the design (the scope of bounds, I'll explain). But before I dive in, I want to give a bit of context.

Rust generics have a Sized bound by default today

In today's Rust, every type parameter (except for Self) has a default bound called Sized:

    // So this function...
    fn identity<T>(t: T) -> T {
        t
    }

    // ...is actually short for
    fn identity<T>(t: T) -> T
    where
        T: Sized, // <-- Added by default!
    {
        t
    }

A type T implements Sized if the compiler can compute the size of a T value at compilation time. This is true for almost every type, with a few notable exceptions. Consider [u32], which refers to "some number of u32 instances". We know that a single u32 is 4 bytes, but without knowing how many u32 there are, you can't know the size of [u32]. This means you can't have a value of type [u32] on the stack (how big should the stack frame be?).

You opt out with ?Sized

However, if you have a function like by_ref, that just takes the value by reference (i.e., by pointer), you shouldn't need to know how big the [u32] value is, because you're not manipulating it directly. You can have a type parameter U that doesn't require Sized, but you have to explicitly "opt out" from the default bound:

    fn by_ref<U>(t: &U)
    where
        U: ?Sized, // <-- Opt out from the default
    { }
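As a rough illustration of the opt-out in today's stable Rust, the sketch below passes both sized and unsized values through a `?Sized` type parameter. The helper name `ref_width` is hypothetical, and the two-word width of references to `[u32]` and `str` is what current compilers produce rather than something this post specifies.

```rust
use std::mem::size_of;

/// Hypothetical helper: returns the size in bytes of the reference itself,
/// not of the value behind it. `U: ?Sized` lets callers pass unsized types.
fn ref_width<U: ?Sized>(_t: &U) -> usize {
    size_of::<&U>()
}

fn main() {
    let word = size_of::<usize>();
    let n: u32 = 7;
    let slice: &[u32] = &[1, 2, 3];
    let text: &str = "hello";

    // A reference to a sized value is a plain (thin) pointer.
    assert_eq!(ref_width(&n), word);
    // References to `[u32]` and `str` also carry a length next to the pointer.
    assert_eq!(ref_width(slice), 2 * word);
    assert_eq!(ref_width(text), 2 * word);

    println!("thin: {} bytes, fat: {} bytes", ref_width(&n), ref_width(slice));
}
```

Without the `?Sized` opt-out, the calls with `slice` and `text` would be rejected, because `[u32]` and `str` do not implement `Sized`.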

As a fun bit of historical trivia, this system was introduced way back in 2014 to accommodate Dynamically Sized Types. Before that, &[u32] was actually a built-in, indivisible type; we even wrote it like [u32]/& for a time.[1]

But Sized vs ?Sized isn't enough for everything we need

The Sized vs ?Sized design has served us reasonably well but it is also showing its limits. It turns out that "value has a statically computable size" vs "each value has a distinct size computable at runtime" doesn't cover all the things you might want. For example, extern types are types whose values have no known size, even at runtime. And then Arm's Scalable Vector Extension wants to describe SIMD types where every value of the type has the same size (unlike str and [T], where each value can have a different length) but where that size is not known until runtime.

A richer Sized hierarchy

Rather than just Sized or ?Sized, what we really want is to have a richer hierarchy. The current plans look something like this:

    flowchart TD
        subgraph S["Sizedness traits"]
            Sized[["Sized (default)"]] -- extends --> MetadataSized
            MetadataSized -- extends --> MaybeSized
        end

where

- trait Sized means that all values have the same size and that size can be computed knowing only the type.

- trait MetadataSized means that values can have different sizes and that size can be computed given the metadata attached to a reference to the value. Examples include [T] or dyn Trait.

- trait MaybeSized is implemented for all values and tells you nothing about the value's size.

Two caveats:

- I'm excluding the way that Arm's Scalable Vector Extension fits into this, because it's orthogonal.

- The trait names aren't settled. I'm using the names I understand the libs-api team to prefer; they're not my favorites, but that's ultimately the team who owns stdlib bikesheds, so I defer to them.[2]

Problem: ?Sized notation doesn't scale to this hierarchy

But now we have a kind of problem. The ?Sized notation was predicated[3] on the idea that users should specify the default bound they are opting out of - i.e., the ? is meant to say "I don't know if this is Sized or not" (unlike the default, where you know it is Sized). But "opting out" from a bound doesn't work so well with a multi-level hierarchy. When you write ?Sized, does that correspond to T: MetadataSized (but not T: Sized)? And what if we want to insert another level in between T: MetadataSized and T: Sized later? Then we either have to change what T: ?Sized means (to refer to the new bound) or we have to have T: ?Sized drop two levels down the hierarchy. Even more annoying, what do we do while that middle rung is unstable? Surely T: ?Sized shouldn't refer to an unstable trait… what if we decide to remove it?

Solution: only bounds

The new proposal is to write T: only MetadataSized or T: only MaybeSized instead of T: ?Sized. An only bound combines two things:

- Like any bound, it includes a "minimum requirement" - i.e., T: only MetadataSized means that T must implement at least MetadataSized.

- It additionally disables some default bounds - i.e., we will not add the default T: Sized bound.

The name only comes from the fact that T: Sized implies T: MetadataSized. So the default of T: Sized already means that T: MetadataSized for free; but when you write only MetadataSized, you are saying "I don't need the full hierarchy, just MetadataSized will do".

only bounds work like normal bounds: ask for what you need

A nice feature of only bounds is that they work more like a regular bound. Whereas a ? bound is saying "I don't need this", an only bound is saying what you do need. So e.g. if you are writing a function that just has references to values of type U and does not care what their size is, you can write

    fn by_ref<U>(u: &U)
    where
        U: only MaybeSized,
    {}

If you are writing a function that does need to compute the size of values of type V, you can ask for that capability:

    fn checks_size<V>(v: &V) -> usize
    where
        V: only MetadataSized,
    {
        std::mem::size_of_val(v)
    }
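Since only bounds are not available in any released compiler, here is a hedged approximation of checks_size in today's Rust. It assumes `?Sized` as a stand-in for `only MetadataSized`, which is close for stable types because `std::mem::size_of_val` can compute the size from the metadata attached to the reference.

```rust
use std::mem::size_of_val;

/// Approximation of the post's `checks_size`, written with today's `?Sized`
/// because `only MetadataSized` is not available yet.
fn checks_size<V: ?Sized>(v: &V) -> usize {
    size_of_val(v)
}

fn main() {
    let short: &[u32] = &[1, 2];
    let long: &[u32] = &[1, 2, 3, 4, 5];

    // Same type `[u32]`, different sizes: the length stored in the
    // reference's metadata decides the answer at runtime.
    assert_eq!(checks_size(short), 8);
    assert_eq!(checks_size(long), 20);

    // `str` works the same way; its metadata is the byte length.
    assert_eq!(checks_size("hello"), 5);

    // For a sized type the answer depends only on the type.
    assert_eq!(checks_size(&7u64), 8);
}
```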

only bounds allow for new levels to be added later

A nice feature of only bounds is that, later on, we can add new levels to the hierarchy, and they work normally. For example, suppose we wish to add something like Aligned where the size is not known at compilation time but the alignment is. We could change the hierarchy to

    trait Sized: Aligned
    trait Aligned: MetadataSized // <-- new!
    trait MetadataSized: MaybeSized
    trait MaybeSized

and functions with U: only MaybeSized (like by_ref) and with V: only MetadataSized (like checks_size) would continue to have the same requirements. But new functions could be written with T: only Aligned that would use the new bound. And there is no conflict with stabilization; code that writes T: only Aligned can be considered unstable until that middle hierarchy is finalized.

only bounds compose normally

Like any other bound, only bounds are combined with other bounds to form the overall requirements. So it is possible to write e.g. T: only MetadataSized + Sized. This is equivalent to T: Sized and therefore equivalent to the default and therefore kind of pointless, but you can write it. Similarly, given that trait Clone: Sized, if you write T: only MetadataSized + Clone, that is kind of pointless too: you might as well write T: Clone, which would be equivalent. We plan to have a warn-by-default lint for that.

Scaling only to other "default bound families" (speculative)

The final strength of only bounds is that they allow us to introduce whole new families of default bounds. One example is the idea of introducing a Move bound. Note that this is a distinct feature and is not covered under the current RFC.

All types in Rust today are "movable" and "forgettable", meaning that you can memcpy the value from place to place so long as you stop using the previous location and you can recycle the memory where it is stored without running the value's destructor. There is one notable exception - when you pin a value, it can no longer be moved, and you must run its destructor before its memory is reused - but otherwise this is a hard-and-fast rule. And that's annoying!
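To make "forgettable" concrete, here is a small sketch in today's Rust. The names `Noisy` and `count_drops` are hypothetical; the only standard-library calls used are `std::mem::forget` and `drop`. It moves a value and then either drops it or forgets it, counting how often the destructor runs.

```rust
use std::cell::Cell;
use std::mem;

/// Hypothetical type whose destructor bumps a shared counter.
struct Noisy<'a> {
    drops: &'a Cell<u32>,
}

impl Drop for Noisy<'_> {
    fn drop(&mut self) {
        self.drops.set(self.drops.get() + 1);
    }
}

/// Returns how many destructors ran after moving a value and then
/// either dropping it or forgetting it.
fn count_drops(forget: bool) -> u32 {
    let drops = Cell::new(0);
    let value = Noisy { drops: &drops };
    let moved = value; // a move: `value` can no longer be used
    if forget {
        mem::forget(moved); // safe code; the destructor never runs
    } else {
        drop(moved); // the destructor runs here
    }
    drops.get()
}

fn main() {
    assert_eq!(count_drops(false), 1);
    assert_eq!(count_drops(true), 0);
}
```

Because `mem::forget` is a safe function, unsafe code today cannot rely on a destructor running, which is the limitation the paragraph above describes.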

The problem is that not being able to guarantee that a destructor runs blocks a lot of unsafe code patterns. For example, scoped tasks a la rayon depend on a destructor for safety. In sync code, this works because we've decided it's UB to unwind a stack frame without running the destructors of values stored there, and so if you put a local variable on the stack, you can be sure its destructor will run. But that doesn't work in async code! And there are times when unwinding without running destructors would be nice.

The solution is to introduce a second family of default traits. Unlike the Sized family we saw before, this family defines fine-grained capabilities about how values of that type can be used:

    flowchart TD
        subgraph A["Accessability traits"]
            Forget[["Forget (default)"]] -- extends --> Leak
            Leak -- extends --> Destruct
            Destruct -- extends --> Access
            Move[["Move (default)"]] -- extends --> Access
        end
        Copy -- extends --> Move

The meanings of the traits are as follows:

- Forget, the default, says that you can recycle the memory for a value without running its destructor.

- Leak says that you can skip running a destructor for a value, but only if you never reuse the memory where the value resides.

- Destruct says that if you have a value of this type, you can reuse the memory where it resides by running its destructor.

- Copy, which already exists, says that you can memcpy the place and keep using the original place; it's not really a default, but I included it because it is relevant.

- Move, another default, says that you can memcpy the value to a new place if you stop using the original.

- Access is the root of this family. It indicates a value that can be "accessed in place" (basically, any value at all).

This introduces new checks into the compiler:

- When you move a value (i.e., a = b where b is not used later), we will check that the type implements Move (whereas today, it is always allowed).

- When you exit a scope, we will check that the values in each local variable have either been moved or have a type that implements Destruct.

Some implications:

- If your function owns a value of type T: only Destruct, then you must destruct it before your function returns. You can't move it (because you don't know if it implements Move) and you can't leak or forget it either.

- If your function owns a value of type T: only Move, then the only thing you can do with it is move it somewhere else. You can't drop it (because you don't know if it implements Destruct).

- No function can own a value of type T: only Access, because you wouldn't be able to move it nor drop it, and hence you could not return. But you could have such a value (say) in a static.

How only bounds could work in the presence of multiple families

The spur for writing this blog post was a question in a lang team meeting on how only bounds ought to work given the existence of multiple "families" of default traits, as I described above. Although the current RFC is looking only at the Sized traits, we expect to look at the "access family" in a future RFC, so we want to be sure we are not making any decisions that won't scale to cover both.

The way I imagine it working is like this. Each default trait is associated with one or more "families". When you have an only bound, it "opts out" from all default traits in each family that the trait is associated with:

- T: only Move opts out from Forget, Leak, Destruct - but not Sized.

- T: only Destruct opts out from Forget, Leak, and Move - but not Sized.

- T: only MetadataSized opts out from Sized - but not Forget or Move.

- T: only MaybeSized opts out from Sized - but not Forget or Move.

You may also want to "opt back in" to some defaults. For example, T: only Move + Destruct is a sensible thing to do. It means values that can be moved and destructed but not leaked or forgotten.

Examples

Option::map requires only Move

map is an example of a function that only needs Move. You need to be able to destructure self (which moves the optional value out into a local variable v) and then invoke the closure op, which again moves the wrapped value v:

    impl<T: only Move> Option<T> {
        fn map<U: only Move>(
            self,
            op: impl FnOnce(T) -> U,
        ) -> Option<U> {
            match self {
                Some(v) => Some(op(v)),
                None => None,
            }
        }
    }

One interesting thing is the result type U. Using only the stuff I wrote in this blog post, it needs to be only Move, because the result will be moved into the Some value and so forth. But in-place-init would allow for this definition to omit the U: only Move bound because we could statically guarantee that the Option will be constructed in place and never moved after that.

Option::or requires only Move + Destruct

The a.or(b) method on Option returns a if it is Some and otherwise returns b. This is an interesting one because the value b may not be used and therefore requires only Move + Destruct bounds.

    impl<T: only Move> Option<T> {
        fn or(
            self,
            alternate: Option<T>,
        ) -> Option<T>
        where
            T: Destruct, // <-- because it may be dropped
        {
            match self {
                Some(v) => Some(v), // drops `alternate`
                None => alternate, // moves `alternate`
            }
        }
    }
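The "may be dropped" comment can be observed with the existing `Option::or` in today's Rust. This is only a sketch: `Tracked` and `or_example` are hypothetical names, and the counter is read right after the call to see whether `or` ran a destructor internally.

```rust
use std::cell::Cell;

/// Hypothetical value that counts how many destructors have run.
struct Tracked<'a> {
    name: &'static str,
    drops: &'a Cell<u32>,
}

impl Drop for Tracked<'_> {
    fn drop(&mut self) {
        self.drops.set(self.drops.get() + 1);
    }
}

/// Calls `a.or(b)` and reports which value won and how many destructors
/// had already run by the time `or` returned.
fn or_example(first_is_some: bool) -> (&'static str, u32) {
    let drops = Cell::new(0);
    let a = if first_is_some {
        Some(Tracked { name: "a", drops: &drops })
    } else {
        None
    };
    let b = Some(Tracked { name: "b", drops: &drops });

    let winner = a.or(b);
    let dropped_inside_or = drops.get();
    let name = winner.as_ref().map(|t| t.name).unwrap_or("none");
    (name, dropped_inside_or)
}

fn main() {
    // `a` is Some: `b` is dropped inside `or`, hence the need for Destruct.
    assert_eq!(or_example(true), ("a", 1));
    // `a` is None: `b` is moved out and nothing is dropped inside `or`.
    assert_eq!(or_example(false), ("b", 0));
}
```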

Rc requires MaybeSized + Leak

The Rc type is an example where we would want to relax bounds from both families:

struct Rc<T: only MaybeSized + only Leak> {}

I believe the proper minimum bounds for Rc are:

- only MaybeSized because while it can store MetadataSized or Sized things, it doesn't have to, it can also store things of a non-computable size (although it does raise the question of how they would be freed, but that's an allocator concern).

- only Leak because Rc values can form cycles and thus we can't ever guarantee the destructor will be run. Interestingly, Rc<T> can implement Forget even if its contents don't.
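The point about cycles can be seen with today's `Rc`. In this sketch the `Node` type and the `drops_seen` helper are hypothetical; it builds two nodes, optionally links them into a cycle, lets both handles go out of scope, and counts how many destructors ran.

```rust
use std::cell::{Cell, RefCell};
use std::rc::Rc;

/// Hypothetical node that can point at another node and counts its own drop.
struct Node {
    next: RefCell<Option<Rc<Node>>>,
    drops: Rc<Cell<u32>>,
}

impl Drop for Node {
    fn drop(&mut self) {
        self.drops.set(self.drops.get() + 1);
    }
}

/// Builds two nodes, optionally closes the cycle a -> b -> a, then lets both
/// handles go out of scope and reports how many destructors ran.
fn drops_seen(make_cycle: bool) -> u32 {
    let drops = Rc::new(Cell::new(0));
    {
        let a = Rc::new(Node {
            next: RefCell::new(None),
            drops: Rc::clone(&drops),
        });
        let b = Rc::new(Node {
            next: RefCell::new(Some(Rc::clone(&a))),
            drops: Rc::clone(&drops),
        });
        if make_cycle {
            *a.next.borrow_mut() = Some(Rc::clone(&b));
        }
    } // both handles are released here
    drops.get()
}

fn main() {
    // Without a cycle, both nodes are destroyed.
    assert_eq!(drops_seen(false), 2);
    // With a cycle, the reference counts never reach zero: nothing is dropped.
    assert_eq!(drops_seen(true), 0);
}
```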

Frequently asked questions

What is actually under RFC today?

The post may be a bit confusing here. The current RFC is looking only at the proposed "Sized" traits. The Access family is a speculative future extension that we are exploring but at a much earlier stage.

Can I use only with any trait?

In the beginning, the plan would be that only can only be used for well-known, default traits (e.g., Move, Sized, etc). In the future, though, there are some thoughts about generalizing it.

Why not opt out from all defaults at once?

An alternative that was proposed is to have the opt-out be per-type-parameter. So you might write something like

fn foo<T: MetadataSized + ?default>

which would "opt out" from all defaulted bounds. Obviously we'd have to bikeshed the syntax, but ignore that for now. The question is whether opting out of all defaults is better than opting out of a single family. I prefer the per-family option for two reasons:

- First, things like T: only Move demonstrate that you might very reasonably wish to opt out from a single family but retain the default Sized bound. I think it's likely that there will be many functions that want to opt out of Sized or Forget but not both.

  - You might think that we could make Move: Sized to get the same effect, but I think that would be a mistake. The fact that a value's size must be computed dynamically doesn't inherently mean it can't be moved.

- Second, it makes it harder to introduce new families later, if we decide there are other orthogonal properties of values that we'd like to relax.

Why do you think it's likely that people want to opt out of being Sized xor Forget but not both?

Because the Forget, Move, and similar traits mostly apply to owned values. The examples we saw with Option<T> were quite typical. And when you are moving values of type T around, you need that T to be Sized.

But we saw that Rc wanted to opt out of both families with only Leak + only MetadataSized, right?

Yes, that's true, and I think that particular combo will be common. I don't think that's an argument for the ?default approach on its own, though, particularly since that case would not be much cleaner or shorter…

impl<T: ?default + Leak + MetadataSized> Rc<T> {}

…what I think that argues for is actually trait aliases and shorthands.

Wait, trait aliases and shorthands? Can you elaborate?

Yes! I think that a future RFC could extend only bounds to allow you to define trait aliases with "only bounds" as supertraits:

    trait RefCountable = only Leak + only MetadataSized;
    // Equivalent to:
    // trait RefCountable: only Leak + only MetadataSized {}
    // impl<T> RefCountable for T where T: only Leak + only MetadataSized {}

You could then use an only RefCountable bound to define Rc<T>:

impl<T: only RefCountable> Rc<T>

Without the only, T: RefCountable would just be a regular trait bound and would not opt out from any defaults.

Can we use a "root" trait to opt out of all defaults?

Yes, we could! You could define an alias like Value:

trait Value = only Access + only MaybeSized;

Since Access and MaybeSized are both implemented for all types, this effectively becomes part of both families:

    flowchart TD
        subgraph All["All default families"]
            subgraph A["Access family"]
                Forget[["Forget (default)"]] -- extends --> Leak
                Leak -- extends --> Destruct
                Destruct -- extends --> Access
                Move[["Move (default)"]] -- extends --> Access
            end
            subgraph S["MaybeSized family"]
                Sized[["Sized (default)"]] -- extends --> MetadataSized
                MetadataSized -- extends --> MaybeSized
            end
            Access -- extends --> Value
            MaybeSized -- extends --> Value
        end

Then you can do T: only Value and opt out from both families at once.

If we did that, what would happen if we wanted to add a new family in the future?

Ay, there's the rub. If we wish to add a new family in the future, let's say for values that don't live in the same memory space (T: only Distributed…?), then Value would be "out of date" because code written against Value would still be assuming uni-memory-space values. But we could make Value into an edition-dependent alias or something like that, as has been discussed.

Can we decide whether we want Value later?

Yes! We can introduce a root trait at any time. So we can add the Sized-ness family first, then the Access family, and then see how we feel. Maybe we find people are very commonly opting out of both, in which case some aliases are useful, or perhaps a Value variant.

The only way we might "regret" it is if, in practice, people usually just opted out of both and then opted back in to what they want specifically. But we already know that T: only Move will be common and clearly T: only Value + Move + Sized is more awkward in that case, so I don't consider that very likely.

Why the name Destruct and not Drop?

That name comes from the const trait RFC. There are a few reasons to move away from Drop. The first is that it is possible to have a destructor even if you don't implement Drop: Drop really refers to user-provided logic in the destructor, but the compiler adds its own logic ("drop glue", it's sometimes called) to drop all the fields in the value. The second reason is that the Drop trait itself needs some revision, so moving away from that name lets us have other ways to specify custom logic (e.g., pinned self, or by-value, etc etc).
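The first reason can be checked in today's Rust. In the sketch below (all names hypothetical), `Wrapper` has no `Drop` impl of its own, yet dropping it still runs destructors, because the compiler-generated drop glue drops each field.

```rust
use std::cell::Cell;

/// Hypothetical field type with user-provided destructor logic.
struct Field<'a>(&'a Cell<u32>);

impl Drop for Field<'_> {
    fn drop(&mut self) {
        self.0.set(self.0.get() + 1);
    }
}

/// Note: there is no `impl Drop for Wrapper`. It still has a destructor,
/// made entirely of compiler-generated drop glue for its fields.
struct Wrapper<'a> {
    _first: Field<'a>,
    _second: Field<'a>,
}

/// Drops a `Wrapper` and reports how many field destructors ran.
fn drops_from_glue() -> u32 {
    let counter = Cell::new(0);
    let wrapper = Wrapper {
        _first: Field(&counter),
        _second: Field(&counter),
    };
    drop(wrapper);
    counter.get()
}

fn main() {
    assert_eq!(drops_from_glue(), 2);
}
```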

How does this interact with const traits anyway?

Quite beautifully! In fact, the proposal from Arm for SVE is to introduce the idea of T: const Sized being "a type whose size can be computed at compilation time", which I find quite elegant. Similarly T: const Destruct was proposed by the const RFC as a way to say that a value has a constant destructor.

It's annoying to write T: only Move + Destruct. Couldn't we have Destruct imply Move so that I can just write T: only Destruct?

My original proposal for introducing linear types had Destruct extending Move. This would mean that the Option::or proposal could simply do T: only Destruct and not T: only Move + Destruct. However, Alice Ryhl and others pointed out that there are immovable types that must nonetheless be destructed, so it doesn't make sense to combine those.

Where can I learn more?

The Project Goal has a lot of details. The latest updates are available on the tracking issue. If you like watching videos, I recommend David Wood's Rust Nation talk.

Conclusion

I want to close with a meta-observation and a big shout-out to the Arm team. I think they are showing how awesome open-source can be. The Arm team's primary motivation is adding support for Scalable Vector Extension. This helps Rust make full use of Arm processors. This is, in and of itself, a laudable goal, and valuable to Rust: One of Rust's assets, in my view, is that it gives you access to all the power your processor has to provide, and that should include unique extensions.

But rather than add the feature as a kind of special-case extension to Rust, the Arm team is going further and driving a general purpose improvement, one that will unlock a bunch of other features (extern types and, to some extent, guaranteed destructors; guaranteed destructors themselves unlock scoped async threads and better Wasm integration). I love that.

[1] In fact, I recall that in one of my blog posts I proposed writing "" as the way to spell &str. I kinda wish we had done that just for the sheer wackiness of it (fn foo(name: "")).

[2] I prefer names that refer to the operations that can be performed on the values, so e.g. instead of MetadataSized I would prefer SizeOfVal, since it means that you can invoke the std::mem::size_of_val function on it.

[3] Little logic pun there for you.

09 Jun 2026 9:04am GMT

08 Jun 2026


Firefox Tooling Announcements: MozPhab 2.15.2 Released

Bugs resolved in Moz-Phab 2.15.2:

- bug 2004368 moz-phab patch -a here with jj says there is no source tree if jj config is broken

- bug 2035900 Investigate setting up CodSpeed.io for moz-phab

- bug 2044857 patch --raw leaks a global logger level, causing order-dependent test failures

Discuss these changes in #engineering-workflow on Slack or #Conduit Matrix.


08 Jun 2026 6:41pm GMT

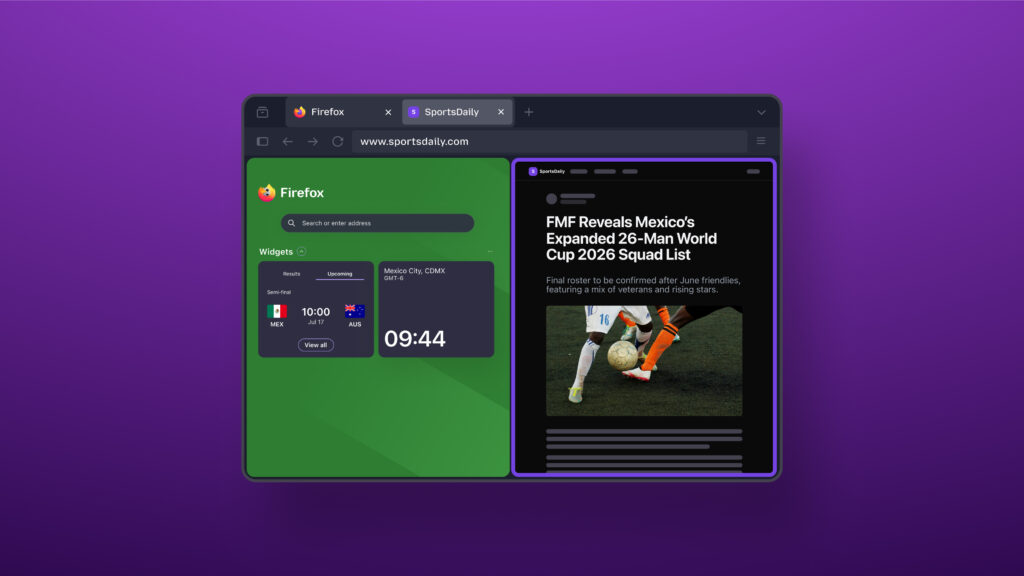

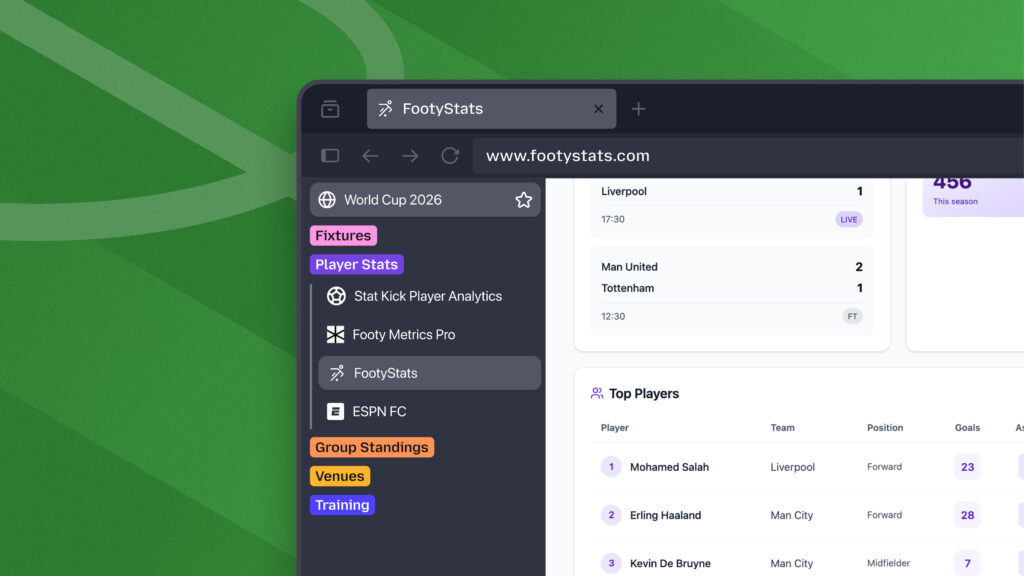

The Mozilla Blog: Make Firefox your World Cup sidekick this summer

Your browser tabs say a lot about your life: work projects, vacation plans, shopping carts and all the rabbit holes in between.

Add the world's biggest soccer tournament to the mix, and your browser is suddenly juggling scores to check, streams to watch, lineups to scan and group chats to keep up with. And since many matches kick off during the workday, there will be lots of temptation to just sneak a peek at the action between meetings.

Firefox is built to be your ultimate second screen. When the tournament is on, keep Firefox open to follow the action, keep up with the conversation, and stay on top of everything else happening online - whether you're watching from the couch or checking in on your mobile device on the go.

You'll find a World Cup widget, custom wallpapers, and game-day multitasking tools. Plus, Firefox is teaming up with Trevor Noah, a soccer superfan, as he hosts live watch parties for the tournament moments everyone will be talking about.

Your second screen for every match

When the action is happening fast, keeping up should be as easy as opening a new tab.

Firefox's World Cup widget gives you the latest tournament updates every time you open a new tab (and you can turn it off anytime). With key match information always within easy reach, it's easy to stay on top of the action without bouncing between apps or having to browse around.

You can follow your favorite teams and even customize Firefox with wallpapers that bring big fan vibes to every new tab.

Game-day pro moves

Pin the picture

With picture-in-picture in Firefox, you can detach a video from its tab and pin it anywhere on your screen so you can keep watching while working on other stuff.

Split the view

Open two tabs side by side in one window with split view. That way, you can keep live updates on one half and stats, searches or chats on the other.

Calm the chaos

Remember what we said about your tabs representing your life? While 99 tabs of fandom can make it feel more chaotic, your browser doesn't have to.

With tab groups in Firefox, you can create separate groups for:

- soccer matches and live scores

- travel plans and itineraries

- work or school projects

- summer shopping and event planning

- weekend inspiration and future adventures

Hang out with Trevor Noah - World Cup and Firefox superfan

Match days are better with good company. This summer, Firefox is teaming up with Trevor Noah to be his second screen sidekick for his World Cup watch party on YouTube.

Hosted live throughout the tournament, the series will feature Trevor alongside some of his best friends plus celebrity guests as they react to matches, highlights and the internet moments coming out of each day's games.

Trevor is a longtime Firefox user whose comedy and commentary have explored how technology shapes everyday life. That makes this collaboration feel especially fitting for Mozilla, a company built around the idea that the internet should work better for everyone.

"Events like this are some of the biggest shared experiences on the internet," said John Solomon, Chief Marketing Officer at Mozilla. "While many people stop their lives for the World Cup, those that can't follow them while working, traveling, connecting with friends and family, and doing everything else they need to do online. Firefox is built for moments like this, and Trevor is a fitting partner. He's a longtime Firefox user who believes, like we do, that technology should work for people, helping them stay connected to the moments, information and communities they care about most."

Make Firefox your World Cup sidekick this summer. Follow the tournament with the World Cup widget, multitask like a pro with picture-in-picture, split view and tab groups, and get into the spirit with custom wallpapers, all in the browser that helps you get more out of every match.

Are you game-day ready?

Download Firefox now

The post Make Firefox your World Cup sidekick this summer appeared first on The Mozilla Blog.

08 Jun 2026 3:59pm GMT

05 Jun 2026


Will Kahn-Greene: Bleach 6.4.0 releases -- final release

What is it?

Bleach is a Python library for sanitizing and linkifying text from untrusted sources for safe usage in HTML.

Bleach v6.4.0 released!

Bleach 6.4.0 includes two security fixes, a fix to tinycss2 dependency requirements, and some other things.

See the changes here:

https://bleach.readthedocs.io/en/latest/changes.html#version-6-4-0-june-5th-2026

Bleach v6.4.0 is the final release

I haven't used Bleach on a project in years, but I still had some time to maintain it. That changed about a year ago when I got re-orged into a new role and I haven't had time to do any Bleach work since then.

To recap, Bleach sits on top of html5lib which hasn't been actively maintained in years. It is dangerous to maintain Bleach in that context.

We vendored html5lib so we could make adjustments to the library to keep Bleach going. This is not a sustainable approach, but it was ok for the short term.

Over the years, we've talked about other options:

- find another library to switch to

- take over html5lib development

- fork html5lib and vendor and maintain our fork

- write a new HTML parser

- etc

None of those are feasible for me.

Bleach has been a solo-maintained project for a while now. The world is crazy and it's much harder to build a team of trusted maintainers now than it was (or at least, it sure feels that way). I don't see any possibility of increasing the maintenance team or passing it to someone else responsibly.

Switching contexts from my regular work to Bleach is really hard. Bleach is complicated, the problem domain is complicated, and there's a lot of nuanced context. I can't just switch gears, spend 15 minutes on Bleach to do something, and then switch back to the rest of my day. I periodically get nag messages about this which are entirely valid, but there's nothing I can do about it. It doesn't feel great.

Then in 2025, Emil, a long-time Bleach contributor, built justhtml which gives us an easy migration path off of Bleach. He even took the time to write a migration guide.

Thoughts and statistics

In 2019, when I stepped down the first time, I wrote a post on stepping down.

In 2023, when I deprecated the project, I wrote a post on Bleach 6.0.0 and deprecation.

- From the first commit on 2010-02-18 to today's final commit on 2026-06-05, the Bleach project lasted 16 years, 3 months (5,951 days, or about 16.29 years).

- There were 64 releases.

- There were roughly 960 commits.

- From roughly 80 contributors

- Top 3:

  - Will Kahn-Greene: 462

  - James Socol: 182

  - Greg Guthe: 133

- Roughly 5,040 lines of Python code excluding the vendored html5lib.

- I was maintainer from October 2015 to now--that's a little under 11 years.
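The project-lifetime figures above can be re-derived with a few lines of standard-library Python. This is only a sketch of the arithmetic: the two dates come from the post, and the 365.25-day year used for the decimal figure is an assumption about how "about 16.29 years" was rounded.

```python
from datetime import date

# Dates taken from the post: first and final commit.
first_commit = date(2010, 2, 18)
final_commit = date(2026, 6, 5)

# Total elapsed days between the two commits.
days = (final_commit - first_commit).days

# Decimal years, assuming an average year length of 365.25 days.
years = days / 365.25

# Whole years and months elapsed (calendar arithmetic).
total_months = (final_commit.year - first_commit.year) * 12 + (
    final_commit.month - first_commit.month
)
if final_commit.day < first_commit.day:
    total_months -= 1  # the last month is not complete yet
whole_years, months = divmod(total_months, 12)

print(f"{whole_years} years, {months} months")
print(f"{days:,} days, about {years:.2f} years")
```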

It feels weird to end a project that's outlived many of the Mozilla sites and Python web frameworks it was designed to protect.

What happens now?

This is the end of the project.

Bleach. Last release.

If you're still using Bleach, I think you have three options:

- End your project. Maybe you don't need to be maintaining your thing anymore? Use Bleach as your reason to exit and do something different with your time on Earth.

- Switch to the sanitizer API. Rework your project to use the sanitizer API.

- Swap Bleach out for justhtml. Emil provided a migration guide for switching from Bleach to justhtml.

Good luck with whatever option you choose!

Thanks!

Many thanks to James who created Bleach and gave it a set of first principles that guided our choices for 16 years.

Many thanks to Greg, who I worked with on Bleach for a long while and who maintained Bleach for several years. Working with Greg was always easy and his reviews were thoughtful and spot-on.

Many thanks to Emil who was a contributor to Bleach for a long while and created justhtml providing Bleach users a migration path.

Many thanks to Jonathan who, over the years, provided a lot of insight into how best to solve some of Bleach's more squirrely problems.

Many thanks to Sam who was an indispensable resource on HTML parsing and sanitizing text in the context of HTML.

Many thanks to all the users and contributors of Bleach!

Where to go for more

For more specifics on this release, see here: https://bleach.readthedocs.io/en/latest/changes.html#version-6-4-0-june-5th-2026

Documentation and quickstart here: https://bleach.readthedocs.io/en/latest/

Source code and issue tracker here: https://github.com/mozilla/bleach/

05 Jun 2026 1:00pm GMT

03 Jun 2026

Firefox Nightly: More Kit, More Control – These Weeks in Firefox: Issue 203

Highlights

- James enabled adaptive autofill in Nightly for testing, which we believe should provide better results in the URL bar when doing autocomplete!

- Jack updated the illustrations shown on some of our error pages to match the latest approved designs, giving users more polished artwork when the browser encounters connection or security errors!

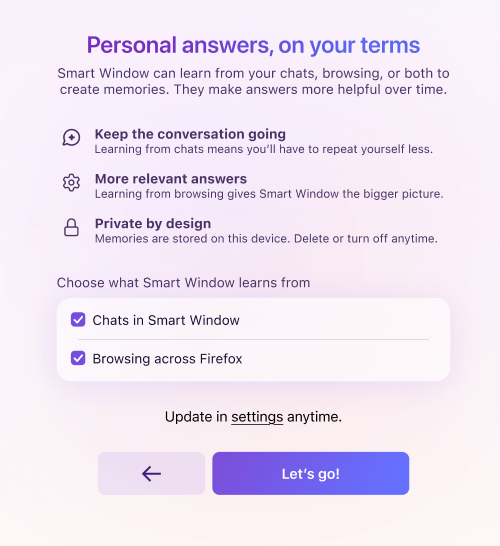

- Controls for the Memories feature can now be set during Smart Window onboarding

- We've disabled the CSS filter implicitly applied to WebExtension pageAction SVG icons across all release channels starting in Firefox 152, completing the deprecation

- NOTE: The blog post WebExtensions API changes in Firefox 149-152 gives extension developers more details about this deprecation and links to the related MDN docs.

Friends of the Firefox team

Resolved bugs (excluding employees)

Script to find new contributors from bug list

Volunteers that fixed more than one bug

- Amin Amir

- Pranjali Srivastava

- Sam Johnson

New contributors (🌟 = first patch)

- 🌟:23rd: Regression: The new swipe-to-navigation indicator stucks for a moment, when deciding not to navigate the other page

- 🌟Akeem Omosanya: Remove commented-out code in SearchService.sys.mjs

- Amin Amir:

- 🌟Sahaj: Suggest the default target language for translation after changing the detected source language

- 🌟JIANG Zhirui: Breakpad build failed on Windows using VS2026 due to removal of stdext

- John Iweh: Add "Open in New Tab" and "Open in New Container Tab" options to the context menu for Tabs from Other Devices

- Jak: Bookmarks and History - should respect the "When you open a link, image or media in a new tab, switch to it immediately" setting

- 🌟Andy [:rgbcmy]: Autoplayed next video should also be PIP

- konyhéa: "Escape" key should collapse the expanded on hover sidebar launcher even if hover is still active.

- Pranjali Srivastava:

Project Updates

Add-ons / Web Extensions

Addon Manager & about:addons

- Fixed long-standing regression on the autocomplete and datalist popups for extension inline options pages on about:addons (introduced in Firefox 68 by Bug 1532724, fix shipping in Firefox 152) - Bug 1595158

WebExtensions Framework

- Fixed access to web-accessible resources declared with <all_urls> from sandboxed documents (null-principal URLs), restoring extension redirects from the context-menu search flow, starting in Firefox 152 - Bug 2033905

WebExtension APIs

- Added exhaustive test coverage for tabs.move() against additional edge cases related to split-view tabs - Bug 2029092

DevTools

- Andreas Farre improved the Session History tab in the Application panel (still behind devtools.application.sessionHistory.enabled)

- Julian Descottes [:jdescottes] fixed the most frequent DevTools crash we were observing in Telemetry, adding a guard against IDBTransaction errors when retrieving breakpoints in the Debugger (#2030260)

- Nicolas Chevobbe [:nchevobbe] fixed the image preview tooltip for images with relative URLs in constructed stylesheets (#2035503)

- Julian Descottes [:jdescottes] reduced the overhead of network request monitoring by only decoding response content when the user actually wants to see the response (#2026228)

WebDriver

- Amin Amir cleaned up an incorrect variable assignment in our browsingContext module.

- Logan Rosen updated stale references and broken links in our documentation about Marionette.

- Sameem improved the Marionette and WebDriver BiDi screenshot commands to enforce maximum allowed dimensions.

- Leo McArdle fixed the regression in the "log.entryAdded" event, which lacked an error message in the "text" field for the messages of type "error".

- Henrik Skupin fixed an issue in Marionette where WebDriver:Navigate and WebDriver:Refresh did not handle errors when the underlying navigation failed.

- Henrik Skupin improved geckodriver to detect an early Firefox exit during startup on Android, avoiding up to 60 seconds of unnecessary connection attempts.

- Henrik Skupin updated the geckodriver CI build job to produce a universal macOS binary supporting both x64 and aarch64.

Lint, Docs and Workflow

- Sylvestre ported some linters (e.g. file-whitespace, test-manifest-toml, license, file-perm, rejected-words & more) to Rust to help improve the runtime of the code review bot.

- Dale has been working on the migration to moz-src for customkeys, dom/quota and dom/geolocation

New Tab Page

- We did our first region-specific trainhop on May 11th (just 15% of the US), and turned on HNT Nova (and sometimes Widgets) for those clients to get some advance-data of its behaviour in the wild! A note that HNT Nova gets turned on for everybody when Firefox 151 ships on May 19th.

- We'll be launching a similar experiment in the DE, probably on May 12th, also at 15% population.

- Most of the team is heads down building out a sports-tracking widget, attempting to get that ready in time to be generally available for the upcoming World Cup event.

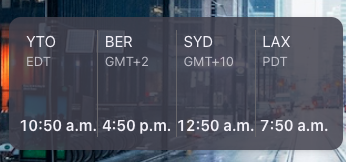

- Dre landed a new world clock widget, which is currently off by default, but pretty snazzy!

Search and Urlbar

- Nova (URL Bar Design Refresh)

- Drew and Daisuke continued their work on Nova styling for the Address bar (input and view).

- Search and Suggest

- Drew finalized two bugs for World Cup and sports suggestions, which were landed and uplifted: one to update the localization string for scheduled games and another to show both teams' icons in suggestions. Drew also landed and uplifted a fix for rich search suggestion icons being forced into a square aspect ratio.

- Standard8 updated Ecosia favicons to the latest branding, including QA testing and publishing.

- Settings Redesign (SRD)

- Stephanie landed a test to ensure search suggestion settings are hidden when quicksuggest is disabled, as well as a patch to resolve TypeScript issues in search.mjs, and is adding test coverage to confirm removed search engines are not displayed in the default engines dropdown.

- General URL Bar and Component Updates

- Daisuke landed implementation of the context menu on URL bar results, and a fix to show the loading URL in the URL bar when starting up with a homepage.

- Marco is working on several tasks, including a PDF download / focus stealing issue and allowing arrays to be bound in Sqlite.sys.mjs. Marco also worked on fixes related to Places, such as avoiding replacing the favicons database if it is not corrupt.

- Standard8 finalized the URL bar test manifest split. Standard8 also upgraded us to TypeScript 6.

- Moritz landed a fix for URL bar abandonment telemetry being recorded when clicking an engine in the unified search button popup (Bug 2032973), which was also uplifted. Moritz also simplified search mode switcher item activation in tests, and made it so that the unified search button popup closes when installing an open search engine.

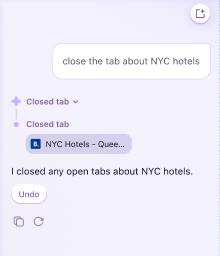

Smart Window

- assistant rendering feedback up/down 2032994 and markdown table 2027029

- nova styling blur 2027877 and suggestions 2026823

- accessibility screen reader 2028676 and keyboard focus 2037565

- optimize conversation starters extra requests 2030005 and caching 2033430

Storybook/Reusable Components/Acorn Design System

- Nova token updates occasionally, focused on SRD

UX Fundamentals

- Added support for the "SEC_ERROR_CA_CERT_INVALID" certificate error to the Felt Privacy error pages. - 2035942

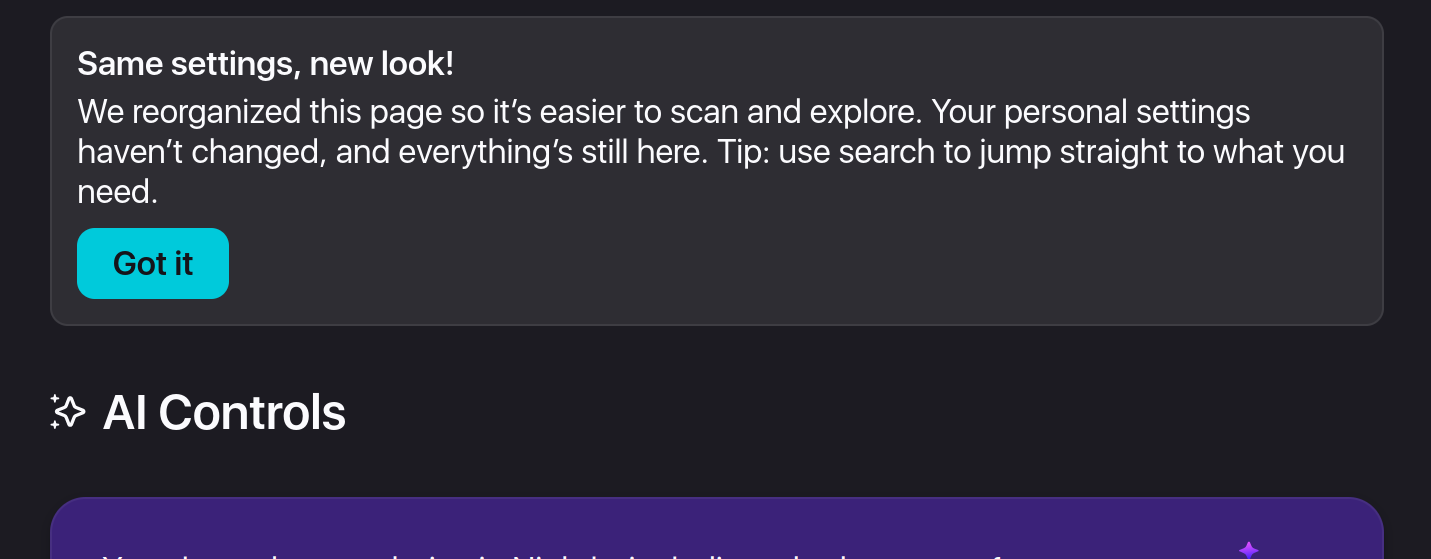

Settings Redesign

- Settings redesign is being tested and will hopefully go out in Firefox 152!

03 Jun 2026 6:27pm GMT

This Week In Rust: This Week in Rust 654

Hello and welcome to another issue of This Week in Rust! Rust is a programming language empowering everyone to build reliable and efficient software. This is a weekly summary of its progress and community. Want something mentioned? Tag us at @thisweekinrust.bsky.social on Bluesky or @ThisWeekinRust on mastodon.social, or send us a pull request. Want to get involved? We love contributions.

This Week in Rust is openly developed on GitHub and archives can be viewed at this-week-in-rust.org. If you find any errors in this week's issue, please submit a PR.

Want TWIR in your inbox? Subscribe here.

Updates from Rust Community

Foundation

Official

Project/Tooling Updates

- One year of Roto, the compiled scripting language for Rust

- xa11y: cross-platform desktop automation via native accessibility APIs

- halloy 2026.7 - now supports IRCv3 reply, redact, metadata, bot mode and more!

- Building a Native Markdown Previewer for AI-Generated Docs with Rust and WebView

- BPF in the agentic era

- gRPC-Rust Roadmap

- Announcing Zstandard in Rust

Observations/Thoughts

- Nine Ways to Do Inheritance in Rust, a Language Without Inheritance

- Async Rust: deep dive into cooperative scheduling and Tokio's architecture

- Memory safety is a matter of life and death | joshlf.com

Rust Walkthroughs

- ZK snarks for Rust developers: R1CS vs Plonkish vs AIR

- Learn Rust Closures By Building a Tiny Rule-Based Linter

- Learn Bevy States, Timers, and Grid Movement by Building Snake

- [video] RustCurious lesson 8: Generics and Monomorphization

- A Grammar-First Approach to Parser Combinators in Rust

Research

Crate of the Week

This week's crate is remyx, a framework for building TUIs on top of Ratatui.

Thanks to Manuel Garcia de la Vega for the self-suggestion!

Please submit your suggestions and votes for next week!

Calls for Testing

An important step for RFC implementation is for people to experiment with the implementation and give feedback, especially before stabilization.

If you are a feature implementer and would like your RFC to appear in this list, add a call-for-testing label to your RFC along with a comment providing testing instructions and/or guidance on which aspect(s) of the feature need testing.

No calls for testing were issued this week by Rust, Cargo, Rustup or Rust language RFCs.

Let us know if you would like your feature to be tracked as a part of this list.

Call for Participation; projects and speakers

CFP - Projects

Always wanted to contribute to open-source projects but did not know where to start? Every week we highlight some tasks from the Rust community for you to pick and get started!

Some of these tasks may also have mentors available, visit the task page for more information.

- MD Preview - Package MD Preview for Homebrew Cask

- OpenSlate - Test Health Check Endpoint

- OpenSlate - Test Login Endpoint

- OpenSlate - Test Notes CRUD Endpoint

- OpenSlate - Test Search Endpoint

- OpenSlate - Test Preference Endpoint

If you are a Rust project owner and are looking for contributors, please submit tasks here or through a PR to TWiR or by reaching out on Bluesky or Mastodon!

CFP - Events

Are you a new or experienced speaker looking for a place to share something cool? This section highlights events that are being planned and are accepting submissions to join their event as a speaker.

- Scientific Computing in Rust 2026 | 2026-06-05 | Virtual | 2026-07-08 - 2026-07-10

If you are an event organizer hoping to expand the reach of your event, please submit a link to the website through a PR to TWiR or by reaching out on Bluesky or Mastodon!

Updates from the Rust Project

500 pull requests were merged in the last week

Compiler

Library

- constify Iterator-related methods and functions

- move IoSlice and IoSliceMut to core::io

- specialize Clone of array IntoIter

- stabilize Path::is_empty

- stop needing an alloca for catch_unwind

Cargo

- diag: Add the 'cargo::default' group

- diag: Report summaries for unused_deps

- add --output-format=json to cargo doc as an unstable option

- add edition for scripts anytime we mutate the manifest

Rustdoc

- avoid ICE when rendering body-less type consts

- correctly propagate cfgs for glob reexports

- deterministic sorting for doc_cfg badges

- fix ICE on delegated async functions

- optimize impl sorting

- separate the caches for synthetic auto trait & blanket impls

Clippy

- add unused_async_trait_impl lint

- add new lint: for_unbounded_range

- added new lint for map_or(..., identity)

- redundant_pattern_match: improve suggestions

- faster has_arg

- fold all early lint passes into one statically-combined pass

- fold all late lint passes into one statically-combined pass

- memoize first_node_in_macro for consecutive queries

- skip disabled off-by-default doc reparses

Rust-Analyzer

- always use crates from sysroot in proc-macro-srv

- enable salsa feature for syntax-bridge

- also consider library features internal

- do not fill both drop() and pin_drop() in the "fill missing members" assist

- fix extract variable in token tree replace range

- port block and loop inference from rustc

- try to improve completion ranking

- use add deref in assign instead add &mut for value

- kill proc-macro-srv processes on shutdown

- remove direct use of make constructor with editor make

- remove make from rename and prettify macro expansion

Rust Compiler Performance Triage

This week we saw nice wins across the board thanks to merging several compiler queries together (#155678), and also substantial improvements in doc performance thanks to doing less work when sorting trait impls (#157179).

Triage done by @Kobzol. Revision range: 783eb8c8..4804ad7e

Summary:

| (instructions:u) | mean | range | count |
|---|---|---|---|
| Regressions ❌ (primary) | 0.3% | [0.1%, 0.7%] | 14 |
| Regressions ❌ (secondary) | 0.4% | [0.1%, 0.9%] | 39 |
| Improvements ✅ (primary) | -0.9% | [-6.8%, -0.2%] | 111 |
| Improvements ✅ (secondary) | -1.1% | [-2.9%, -0.1%] | 53 |
| All ❌✅ (primary) | -0.8% | [-6.8%, 0.7%] | 125 |

3 Regressions, 1 Improvement, 2 Mixed; 4 of them in rollups

35 artifact comparisons made in total

Approved RFCs

Changes to Rust follow the Rust RFC (request for comments) process. These are the RFCs that were approved for implementation this week:

- No RFCs were approved this week.

Final Comment Period

Every week, the team announces the 'final comment period' for RFCs and key PRs which are reaching a decision. Express your opinions now.

Tracking Issues & PRs

- Tracking Issue for strip_circumfix

- Tracking issue for CommandExt::show_window

- Tracking Issue for path_set_times

- Tracking Issue for nonzero_from_str_radix

- Tracking Issue for LoongArch CRC Intrinsics

- Tracking Issue for Vec::from_fn

- Add Step::forward/backward_overflowing to enable RangeInclusive loop optimizations

- Stabilize core::range::{legacy, RangeFull, RangeTo}

- Tracking Issue for box_as_ptr

- Tracking Issue for explicit-endian String::from_utf16

- Reduce unreachable-code churn after todo!()

- make repr_transparent_non_zst_fields a hard error

- Tracking Issue for algebraic floating point methods

- riscv: promote d, e, and f target_features to CfgStableToggleUnstable

- Tracking Issue for PathBuf::into_string

- Desugar async blocks in HIR instead of MIR

- Test new solver and polonius alpha on CI

- Add -Zllvm-target-feature target *modifier* to directly set LLVM-level target features, and deprecate doing that with -Ctarget-feature

- Set requirements for windows-gnu

- Create a new Tier 3 target: powerpc64le-unknown-none

- Add flag to pass MSRV/package.rust-version for use by lints

- Optimize repr(Rust) enums by omitting tags in more cases involving uninhabited variants.

- Promote tier 3 riscv32 ESP-IDF targets to tier 2

- Proposal for Adapt Stack Protector for Rust

No Items entered Final Comment Period this week for Rust RFCs, Cargo, Language Team, Language Reference or Leadership Council. Let us know if you would like your PRs, Tracking Issues or RFCs to be tracked as a part of this list.

New and Updated RFCs

Upcoming Events

Rusty Events between 2026-06-03 - 2026-07-01 🦀

Virtual

- 2026-06-03 | Virtual (Indianapolis, IN, US) | Indy Rust

- 2026-06-04 | Virtual (Berlin, DE) | Rust Berlin

- 2026-06-04 | Virtual (Nürnberg, DE) | Rust Nuremberg

- 2026-06-04 | Virtual (Tel Aviv-yafo, IL) | Code Mavens 🦀 - 🐍 - 🐪

- 2026-06-06 | Virtual (Kampala, UG) | Rust Circle Meetup

- 2026-06-07 | Virtual (Dallas, TX, US) | Dallas Rust User Meetup

- 2026-06-08 | Virtual (Cardiff, UK) | Rust and C++ Cardiff

- 2026-06-09 | Virtual (Dallas, TX, US) | Dallas Rust User Meetup

- 2026-06-09 | Virtual (London, UK) | Women in Rust

- 2026-06-10 | Virtual (Girona, ES) | Rust Girona

- 2026-06-16 | Virtual (Washington, DC, US) | Rust DC

- 2026-06-17 | Hybrid (Vancouver, BC, CA) | Vancouver Rust

- 2026-06-17 | Virtual (Girona, ES) | Rust Girona

- 2026-06-18 | Hybrid (Seattle, WA, US) | Seattle Rust User Group

- 2026-06-18 | Virtual (Berlin, DE) | Rust Berlin

- 2026-06-21 | Virtual (Dallas, TX, US) | Dallas Rust User Meetup

- 2026-06-23 | Virtual (Dallas, TX, US) | Dallas Rust User Meetup

- 2026-06-23 | Virtual (London, UK) | Women in Rust

- 2026-07-01 | Virtual (Indianapolis, IN, US) | Indy Rust

Africa

- 2026-06-09 | Johannesburg, ZA | Johannesburg Rust Meetup

Europe

- 2026-06-03 | Dublin, IE | Rust Dublin

- 2026-06-03 | Girona, ES | Rust Girona

- 2026-06-10 | München, DE | Rust Munich

- 2026-06-11 | Switzerland, CH | PostTenebrasLab

- 2026-06-12 - 2026-06-14 | Kraków, PL | Rustmeet

- 2026-06-16 | Leipzig, DE | Rust - Modern Systems Programming in Leipzig

- 2026-06-16 | Milano, IT | Rust Language Milan

- 2026-06-18 | Aarhus, DK | Rust Aarhus

- 2026-06-23 | Paris, FR | Rust Paris

- 2026-06-25 | Berlin, DE | Rust Berlin

North America

- 2026-06-04 | Chicago, IL, US | Chicago Rust Meetup

- 2026-06-04 | Saint Louis, MO, US | STL Rust

- 2026-06-06 | Boston, MA, US | Boston Rust Meetup

- 2026-06-11 | Lehi, UT, US | Utah Rust

- 2026-06-11 | Mountain View, CA, US | Hacker Dojo

- 2026-06-11 | San Diego, CA, US | San Diego Rust

- 2026-06-16 | San Francisco, CA, US | San Francisco Rust Study Group

- 2026-06-17 | Hybrid (Vancouver, BC, CA) | Vancouver Rust

- 2026-06-18 | Hybrid (Seattle, WA, US) | Seattle Rust User Group

- 2026-06-24 | Austin, TX, US | Rust ATX

- 2026-06-24 | Los Angeles, CA, US | Rust Los Angeles

- 2026-06-25 | Atlanta, GA, US | Rust Atlanta

- 2026-06-26 | New York, NY, US | Rust NYC

Oceania

- 2026-06-25 | Melbourne, AU | Rust Melbourne

South America

- 2026-06-18 | Florianópolis, BR | Rust SC

If you are running a Rust event please add it to the calendar to get it mentioned here. Please remember to add a link to the event too. Email the Rust Community Team for access.

Jobs

Please see the latest Who's Hiring thread on r/rust

Quote of the Week

If memory safety bugs were Waldo (Wally): finding them in C programs is a "Where's Waldo?" game, and Rust's unsafe simplifies it to "Is this Waldo?"

Thanks to Moy2010 for the suggestion!

Please submit quotes and vote for next week!

This Week in Rust is edited by:

- nellshamrell

- llogiq

- ericseppanen

- extrawurst

- U007D

- mariannegoldin

- bdillo

- opeolluwa

- bnchi

- KannanPalani57

- tzilist

Email list hosting is sponsored by The Rust Foundation

03 Jun 2026 4:00am GMT

02 Jun 2026

The Rust Programming Language Blog: Launching the Rust Foundation Maintainers Fund

If you want to financially support the development of Rust, please consider donating to the Rust Foundation Maintainers Fund.

A few months ago, the Rust Foundation announced the Rust Foundation Maintainers Fund (RFMF). Since then, the Rust Project has been closely cooperating with the Rust Foundation to determine how exactly this fund will be used to support Rust maintainers. This resulted in the acceptance of RFC #3931, which established the Funding team and the Maintainer in Residence program.

The primary goal of the Funding team is to ensure that maintainers who work on Rust and its toolchain will be properly supported. We will talk to Rust Project members to figure out their funding situation, meet Rust team leads to learn about their maintenance needs, approach companies to find opportunities for them to invest into Rust by supporting Rust maintainers, coordinate various funding efforts and ensure that the beneficial effects of funded maintenance are visibly promoted, with the help of the Content team.

Maintainer in Residence is a new program dedicated to financially supporting existing Rust Project maintainers [1]. Each Maintainer in Residence will be funded to maintain one or more critical parts of Rust, such as the compiler, the standard library, Cargo, Clippy or one of many other projects that the Rust Project develops and maintains. The funded work will include activities such as performing large-scale refactorings, code reviews, unblocking new features, issue triaging, mentoring other contributors and more, and will be split between priorities guided by the teams they are supporting and priorities of their own choosing within the Project. Where applicable, Maintainers in Residence are also encouraged to propose, champion, and drive forward Rust Project Goals.

The goal of this program is to provide stable and long-term funding so that maintainers can focus on important work that ensures the long-term health of Rust. The funding team will select Maintainers in Residence based on funding availability and maintenance needs within the Rust Project, and help ensure that they are successful. We expect that this will usually be a (near) full-time position, but that will depend on the nature of the work and the area of maintenance.

This program extends our existing support for Rust maintainers, such as the program management program and the compiler-ops program. An important development is that we now have a centralized mechanism for gathering donations from both individuals and companies, and a dedicated team that will help direct those funds to specific maintainers. You can find more details about the funding team and the Maintainer in Residence program in the RFC.

We expect to hire the first Maintainer in Residence in the upcoming months and announce it on this blog, so stay tuned!

How to contribute funds

If you are an individual who wants to help Rust succeed and thrive, you can donate to the RFMF through GitHub Sponsors [2]. Companies who would like to invest in better maintenance of Rust can also donate through GitHub Sponsors or they can contact the Rust Foundation directly.

The important thing is that all proceeds from this fund will be directly used to support Rust Project maintainers. We currently expect that to happen primarily through the Maintainer in Residence program, but it can also be done in the form of smaller-scale grants or other mechanisms, as determined by the Funding team. We will figure this out on the go, as this is also quite new for us.

We really appreciate each donation, however small, because with more money we can hire more maintainers to ensure that we can continue to develop Rust and that important improvements are not blocked on maintenance tasks. This is especially important at this time, where Rust is starting to get used more and more in the industry in various application areas, which increases the need for sustained maintenance. The importance of multiple funding sources is underscored by an unfortunate trend we currently observe, where key Rust maintainers are losing their funding for Rust work due to budget shifts. The Rust Foundation Maintainers Fund is designed to provide stable funding for Rust maintainers that is less dependent on sudden shifts in the job market and the IT industry.

As with most things, there is no one-size-fits-all solution, so there are multiple ways to support Rust financially. The RustNL Maintainers Team recently hired several Rust Project maintainers. Previously, we wrote about how you can support specific individuals working on Rust. And there are also Rust Project Goals in search of funding. We welcome all efforts that can help support Rust Project maintainers, who often do work that is near invisible and thankless, while at the same time incredibly important and necessary, on a volunteer basis.

Thank you for considering sponsoring the development and maintenance of Rust! You can find more information about funding Rust on our Funding page.

[1] This program was inspired by the Developer in Residence concept used by the Python Software Foundation (PSF), with which we led several helpful discussions. Thank you, PSF!

[2] Note that the fact that GitHub Sponsors is currently enabled on the rustfoundation GitHub organization, and not the rust-lang organization, is an implementation detail that might change in the future. All donations raised on this Sponsors page will be routed to the Rust Foundation Maintainers Fund and will be spent on directly supporting Rust Project maintainers.

02 Jun 2026 12:00am GMT

01 Jun 2026

Firefox Nightly: Backup for a Rainy Day – These Weeks in Firefox: Issue 202

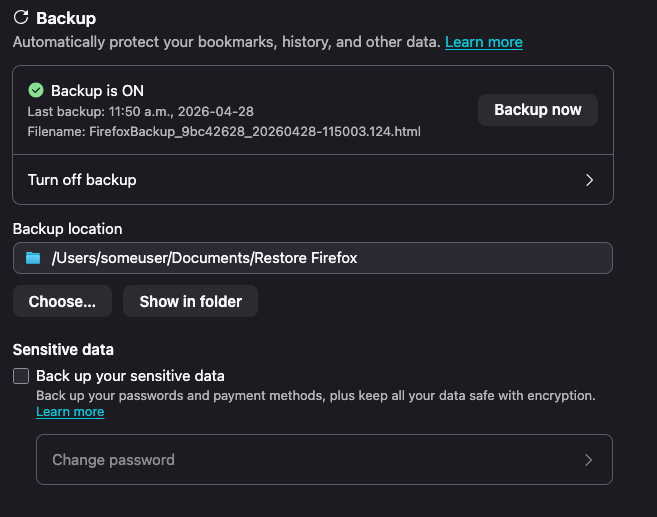

Highlights

- The profile backup mechanism has been enabled by default for all desktop platforms in Nightly, as well as Beta! The current plan is to have this ride out to Firefox 151 for Windows, macOS and Linux on May 18th!

- This feature, when enabled, will create a copy of your profile data in the background and store it in a single file on your file system that you can restore from.

- You will be able to manage this feature in Settings under Sync (for now)

- You can read more about the feature here

- As follow-ups to the recent WebExtension tabs API additions supporting the new SplitView tabs feature, tabs.group() and tabs.ungroup() now work correctly with split view tabs, and split views are no longer prepended instead of appended to tab groups when adopted into a new window - Bug 2029099 / Bug 2029534

- Adaptive autofill has been enabled on Nightly.

- Previously, autofill only completed domains (e.g. typing red autofilled reddit.com). Now it can also complete full URLs for pages you visit often (e.g. red → reddit.com/r/firefox), learning from what you actually click in the address bar. If a suggestion isn't helpful, you can now dismiss it so autofill learns what not to show you too.

- If you run into issues or have feedback, you can file a bug here!

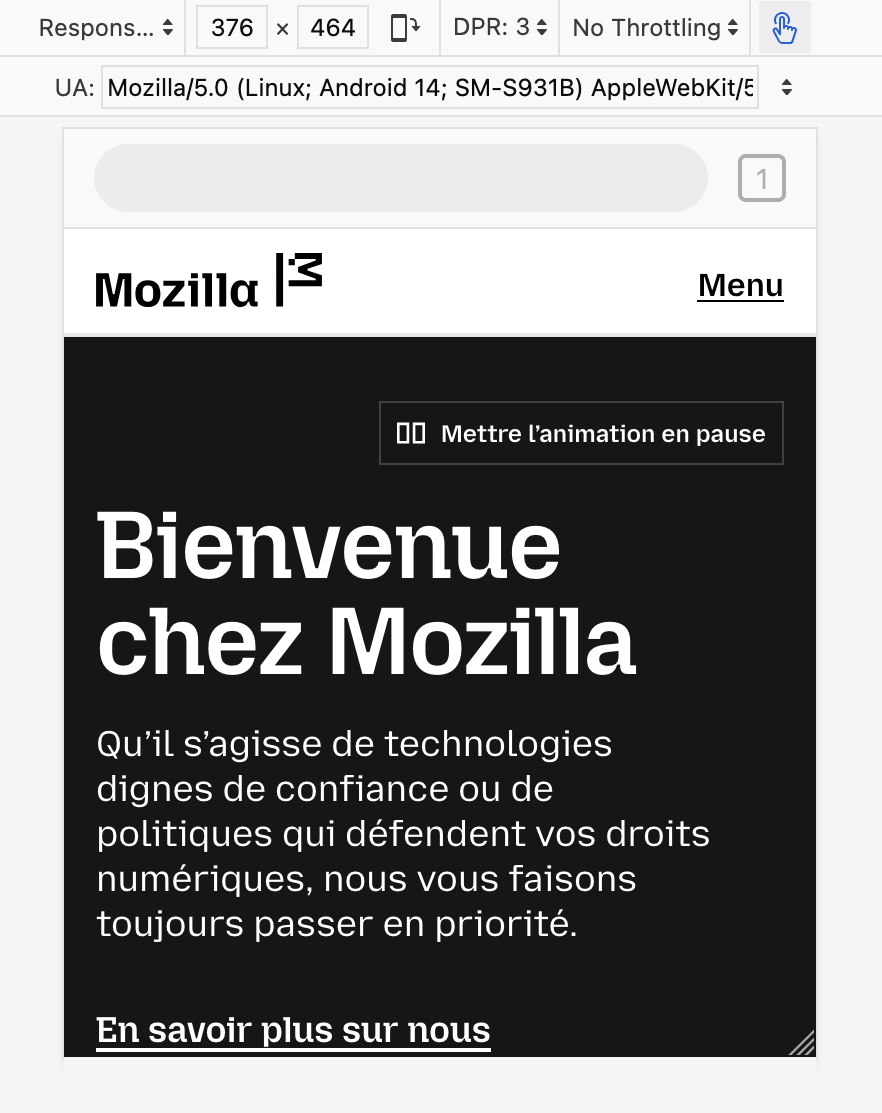

- Markus Stange [:mstange] implemented dynamic toolbar on top in RDM (#1978145), but also implemented some static skeleton UI so it's closer to what we actually have in Firefox for Android
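To make the adaptive autofill highlight above more concrete, here is a toy Python model of the behavior it describes: fall back to domain completion, prefer a full URL once the user has picked it often enough for the same typed text, and stop suggesting a URL after it is dismissed. Everything in it (the `AdaptiveAutofill` class, its method names, and the `min_picks` threshold) is hypothetical and for illustration only; it is not how Firefox implements the feature.

```python
from collections import Counter


class AdaptiveAutofill:
    """Hypothetical toy model of adaptive autofill (not Firefox's code)."""

    def __init__(self, min_picks=2):
        self.picks = Counter()    # (typed text, url) -> times the user chose it
        self.dismissed = set()    # (typed text, url) pairs the user rejected
        self.min_picks = min_picks  # assumed threshold before a URL is "learned"

    def record_pick(self, typed, url):
        """Remember that the user chose `url` after typing `typed`."""
        self.picks[(typed, url)] += 1

    def dismiss(self, typed, url):
        """Remember that the user does not want `url` suggested for `typed`."""
        self.dismissed.add((typed, url))

    def complete(self, typed, domains):
        """Return the best completion for `typed`, or None if there is none."""
        learned = [
            (count, url)
            for (prefix, url), count in self.picks.items()
            if prefix == typed
            and count >= self.min_picks
            and (prefix, url) not in self.dismissed
        ]
        if learned:
            # Prefer the full URL the user picked most often.
            return max(learned)[1]
        # Otherwise fall back to plain domain completion.
        for domain in domains:
            if domain.startswith(typed):
                return domain
        return None


autofill = AdaptiveAutofill()
known_domains = ["reddit.com"]

before = autofill.complete("red", known_domains)
autofill.record_pick("red", "reddit.com/r/firefox")
autofill.record_pick("red", "reddit.com/r/firefox")
after = autofill.complete("red", known_domains)
autofill.dismiss("red", "reddit.com/r/firefox")
after_dismiss = autofill.complete("red", known_domains)

print(before, after, after_dismiss)
```

The three calls to `complete` mirror the example in the highlight: domain-only completion first, then the learned full URL, then the domain again once the suggestion has been dismissed.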

Friends of the Firefox team

Resolved bugs (excluding employees)

Script to find new contributors from bug list

Volunteers that fixed more than one bug

- Amin Amir

- aoia7rz7l

- Chukwuka Rosemary

- DrSeed

- Frédéric Wang Nélar

- japandi

- John Iweh

- jonathancabera

- Josh Aas

- Keji Bakare

- kofoworola shonuyi

- konyhéa

- liz

- Mathew Hodson

- Okhuomon Ajayi

- Oluwatobi

- ROSHAAN

- Sam Johnson

New contributors (🌟 = first patch)

- Anthony Mclamb: Disable the legacy Edge migrator

- Amin Amir

- any1here: install_sig_alt_stack incorrectly checks mmap's return value

- 🌟 Armin Ulrich: Fix MessageHandlerRegistry.sys.mjs calling getExistingMessageHandler with an unused second argument

- japandi

- Nathan Johnson [:narjoDev]: Remove browser.display.use_system_colors pref

- DrSeed

- Keji Bakare:

- 🌟 gotyaoi: Reload toolbar button is active on about:newtab

- Itoro James: [A11y][Keyboard Navigation]Cancelling a note via Keyboard Navigation still saves it

- John Iweh: The notification dot is not displayed if the tab is in a Split View

- 🌟 John Iweh: sidebar-shown attribute remains when sidebar.revamp is false

- 🌟 jonathancabera:

- The Move tab to Split View option is also displayed for the tabs that are within the Split View

- A Note with long text (1003 characters) is saved by pressing ENTER even if the "Save" button is disabled

- Tab group guide line becomes disconnected under certain conditions related to split views in vertical tab mode

- Aloys: Remove logic that forces distribution language packs to be reinstalled when upgrading from Firefoxes older than 67

- liz:

- Mary cathline: Tab Group Label does not respect touch density in vertical tab bar

- 🌟 Brandon Lucier: Popups opened with window.open give window type normal instead of popup

- karan68: [dialog] New Shortcut dialog needs a label/accessible name

- 🌟 Vector: Button does not programmatically indicate that it opens a dialog (Recent activity section > story card > ••• disclosure > Delete from History button)

- 🌟 Osoble: Update font size and weight for synced tabs device name headers in Firefox View

- konyhéa:

- Add test for sync admin disabled to browser_syncedtabs_errors_firefoxview.js

- Check all second paramaters for TestUtils.waitForCondition in Fx View test files

- Recently Closed Tabs, Tabs from Other Devices, and History pages should have Cmd / Ctrl + Click on a link open the link in the new background tab.

- Noble Chinonso: #shouldHandleEvent in SidebarTreeView.js compares event.keyCode to string values, causing Home/End keys to never be handled

- Pranjali Srivastava: Add a test to verify that the space above tabs is consistent across PB, LWT and sizemode (where appropriate)

- Okhuomon Ajayi:

- More spacing is needed between the tab note icon and the close icon on the tab

- The tabs in vertical mode collapsed state are positioned differently in Split View

- Keep vertical split view tabs stacked vertically even when the sidebar is expanded when expand on hover is enabled

- Vertical split view tabs can be too big or small when tabs are overflowing

- 🌟 Rishan: Fix duplicated arrow function in browser_history_sidebar.js

- Chukwuka Rosemary:

- ROSHAAN:

- Sameeksha: Disclosure button expanded/collapsed state not programmatically defined (Customize button)

- kofoworola shonuyi:

- 🌟 Sayd Mateen: Page URL is displayed as tab name when page's contains about:reader?

- Oluwatobi:

- Nishchay [:nish]: Unable to add tabs to old closed tab groups (tabGroupState.splitViews is undefined)
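The #shouldHandleEvent fix listed above comes down to a type mismatch: `event.keyCode` is a number, so comparing it to a string such as "Home" can never succeed. The following is a minimal sketch of that class of bug, not the actual SidebarTreeView.js code; the event objects and function names are hypothetical stand-ins.

```javascript
// Hypothetical stand-ins for keyboard events; only the two fields used here.
// 36 and 35 are the legacy keyCode values for Home and End.
const homeEvent = { key: "Home", keyCode: 36 };
const endEvent = { key: "End", keyCode: 35 };

// Buggy shape: keyCode is numeric, so comparing it to a string is always false.
function shouldHandleByKeyCode(event) {
  return event.keyCode === "Home" || event.keyCode === "End";
}

// One possible fix: compare the string-valued `key` property instead.
function shouldHandleByKey(event) {
  return event.key === "Home" || event.key === "End";
}

console.log(shouldHandleByKeyCode(homeEvent)); // false
console.log(shouldHandleByKey(homeEvent)); // true
console.log(shouldHandleByKey(endEvent)); // true
```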

Project Updates

Add-ons / Web Extensions

Addon Manager & about:addons

- In preparation for the Project Nova restyling of the about:addons page, we have refactored about:addons into separate per-component ES modules, splitting the monolithic aboutaddons.js and aboutaddons.html into 16 dedicated component files under components/ (with no behavior or UI changes) - Bug 2032014

- NOTE: if you are working on patches that change about:addons internals, you will very likely need to rebase and resolve merge conflicts on top of this refactoring. The internals are still largely the same as before, but don't hesitate to reach out to the Addons team if you have doubts or questions, or need help figuring out how to adapt your patch on top of these changes

WebExtensions Framework

- Fixed exportFunction to preserve the constructibility of the wrapped function instead of unconditionally making every exported function a constructor - Bug 2033173

- Thanks to Gregory Pappas for contributing this improvement to the Content Scripts' Xray Wrappers helpers!

- Fixed a Firefox 151 regression where extension content scripts accessing location.ancestorOrigins caused subsequent page script reads of the same property to fail with "Permission denied", breaking sites like Gmail - Bug 2034329

- Thanks to Simon Farre for promptly investigating and fixing this recent regression!
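To illustrate what "preserving constructibility" means in the exportFunction fix above, here is a minimal plain-JavaScript sketch. It is not Firefox's exportFunction or its Xray wrapper code; `isConstructible` and `wrapPreservingConstructibility` are hypothetical helpers that only show the idea that a wrapper should accept `new` if and only if the wrapped function does.

```javascript
// Returns true if `fn` can be used with `new`. Reflect.construct validates
// its third argument (newTarget) as a constructor without ever calling it.
function isConstructible(fn) {
  try {
    Reflect.construct(Object, [], fn);
    return true;
  } catch {
    return false;
  }
}

// Hypothetical wrapper: forwards calls to `fn` and is constructible
// only when `fn` itself is constructible.
function wrapPreservingConstructibility(fn) {
  if (isConstructible(fn)) {
    return function wrapped(...args) {
      return new.target ? Reflect.construct(fn, args) : fn.apply(this, args);
    };
  }
  // Method-shorthand functions have no [[Construct]], so `new` throws on them.
  return {
    wrapped(...args) {
      return fn.apply(this, args);
    },
  }.wrapped;
}

function Point(x) {
  this.x = x;
}
const add = (a, b) => a + b;

const WrappedPoint = wrapPreservingConstructibility(Point);
const wrappedAdd = wrapPreservingConstructibility(add);

console.log(new WrappedPoint(3).x); // 3
console.log(wrappedAdd(1, 2)); // 3
console.log(isConstructible(wrappedAdd)); // false
```

An arrow function such as `add` is not constructible, so under this sketch `new wrappedAdd()` throws a TypeError, just as `new add()` would.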

WebExtension APIs

- Updated sessions.getRecentlyClosed() to remove the hardcoded cap when maxResults is omitted - Bug 1392125

- Shoutout to Amine Zroual for contributing this enhancement to the sessions WebExtensions API!
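As a rough illustration of the sessions.getRecentlyClosed() change above, the sketch below models the described behavior in plain JavaScript. It is an assumption-laden model, not Firefox's implementation: `getRecentlyClosedModel` and the shape of the `closed` entries are hypothetical, and only the `maxResults` filter property comes from the real API.

```javascript
// Hypothetical model of the described behavior, NOT Firefox's implementation.
function getRecentlyClosedModel(closedSessions, filter = {}) {
  const { maxResults } = filter;
  // With maxResults omitted, return every entry instead of a fixed-size slice.
  return maxResults === undefined
    ? [...closedSessions]
    : closedSessions.slice(0, maxResults);
}

// 40 made-up closed-tab entries, most recent first.
const closed = Array.from({ length: 40 }, (_, i) => ({
  tab: { title: `Tab ${i}` },
}));

console.log(getRecentlyClosedModel(closed).length); // 40
console.log(getRecentlyClosedModel(closed, { maxResults: 5 }).length); // 5
```

In an extension with the "sessions" permission, the corresponding calls would be `browser.sessions.getRecentlyClosed()` and `browser.sessions.getRecentlyClosed({ maxResults: 5 })`.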

DevTools

- Artem Manushenkov fixed an issue where the autosuggestion popup was removing overridden indicators from properties in the Inspector (#1983408)

- Andrea Marchesini [:baku] fixed DevTools cookie header serialization for long cookies, which could lead to cookies not being visible in Netmonitor (#2031299)

- Julian Descottes [:jdescottes] fixed a toolbox crash that happened when we couldn't find a localization file (e.g. when using a language pack on Nightly) (#2028930)

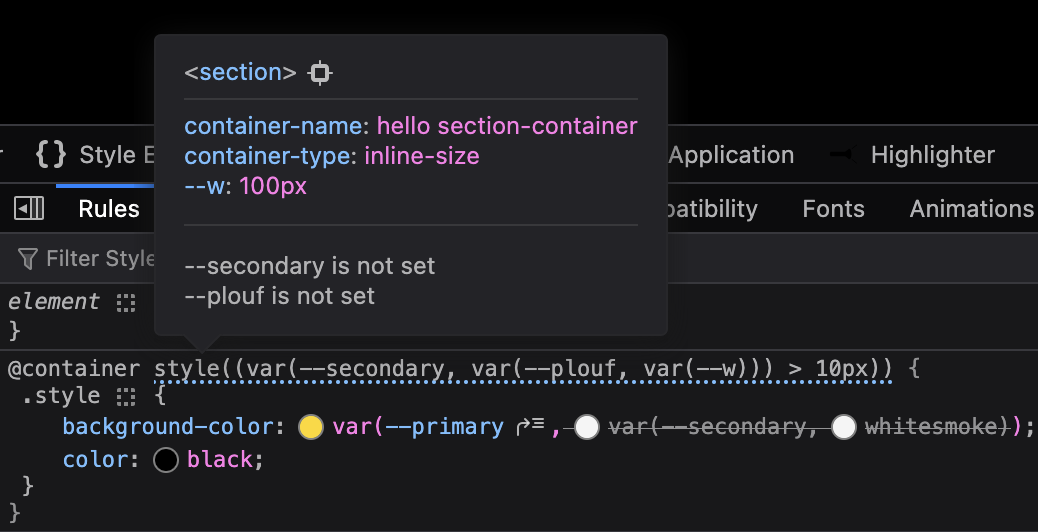

- Nicolas Chevobbe [:nchevobbe] improved the @container tooltip so it shows the value of variables used in style() (#2030239), has enough contrast in dark mode (#2033782) and contains a link to select the container (#2031688)

- Hubert Boma Manilla (:bomsy) is making good progress on migrating the Console to CodeMirror 6 (#2032758, #2026569)

Fluent

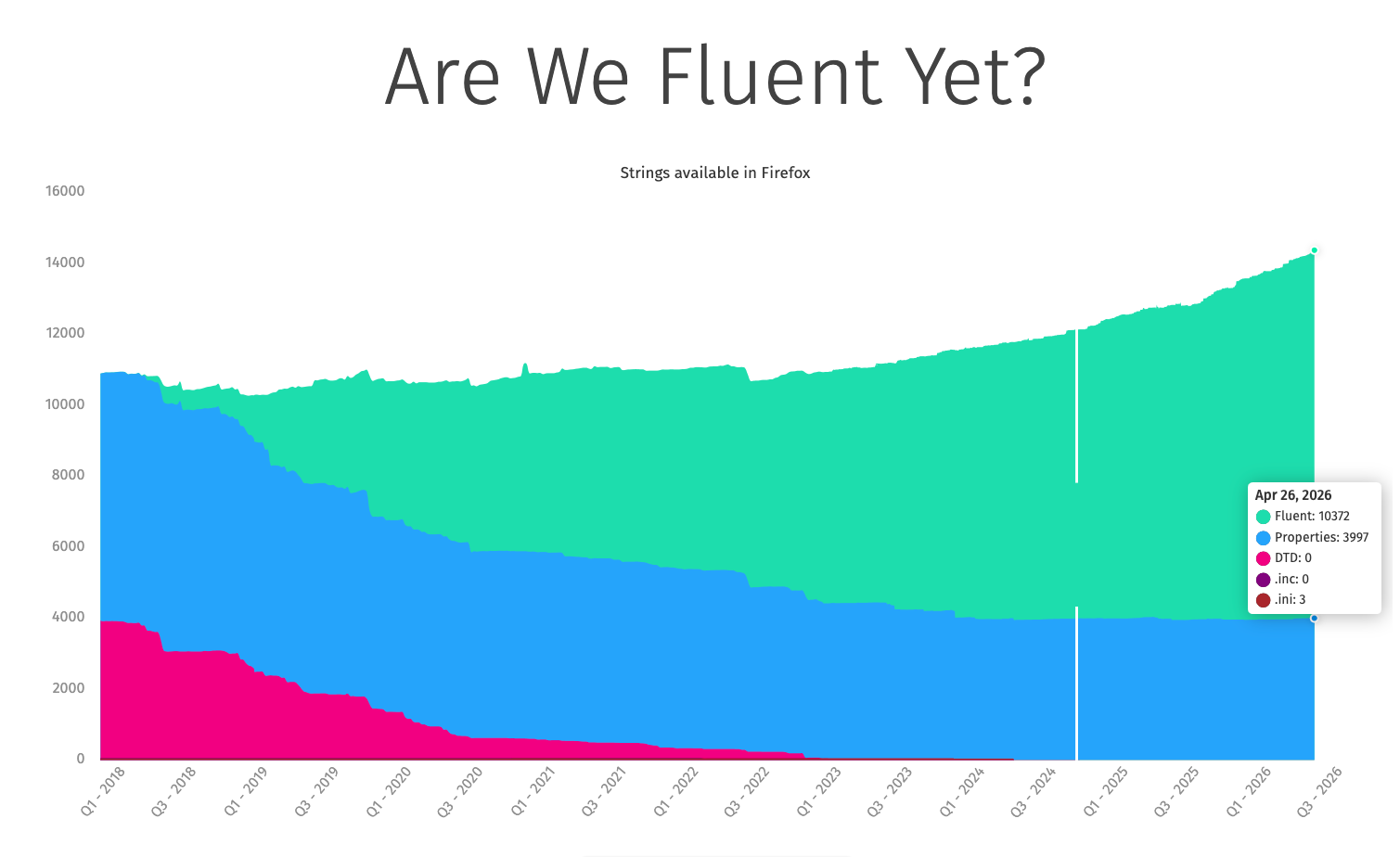

- We're now at over 72% of our strings being Fluent! Got a component still using .properties? Convert when you can!

Migration Improvements

- Thanks to dao for fixing a recent alignment issue in the migration wizard dropdown

- Thanks to volunteer contributor Anthony Mclamb for his patch that disables the legacy EdgeHTML Edge migrator! Once that finishes rolling out, presuming no surprises, we'll go ahead and remove the migrator entirely.

New Tab Page

- Nova for New Tab has ridden the trains to Beta! It will be enabled by default, globally, when Firefox 151 goes out to release on May 19th

- It's possible that we'll do a train-hop coupled with an experiment to enable HNT Nova for a few clients a bit earlier.

- Maxx Crawford enabled Nova designs for New Tab, rolling out the updated layout, widgets, and customization panel behind HNT Nova flags.

- Maxx Crawford fixed the Nova content feed to render the intended four‑column layout by correcting CSS grid breakpoints.

- Maxx Crawford resolved a first‑load failure in the Weather widget by fixing init order and fetch timing, eliminating the "Oops" error.

- Maxx Crawford synchronized the Weather toggle between about:preferences#home and the panel via the shared showWeather pref to prevent desync.

- Maxx Crawford updated Nova grid focus order to align tab flow with visual order for keyboard users.

- Maxx Crawford fixed critical UI issues in Lists and Timer widgets covering overflow, controls, and layout stability.

- Maxx Crawford guarded document.dir access in Nova render paths to avoid startup cache worker errors and improve startup stability.

- Rolf added a new normalization method for the inferred interest vector to stabilize topic relevance across sessions.

- Rolf prevented unnecessary content refreshes during Pocket New Tab experiments, reducing jank and bandwidth.

- Sameeksha defined the Customize button's expanded/collapsed state programmatically using aria-expanded for better a11y.

- liz clarified follow/unfollow/blocked button names with topic context so screen readers announce clear actions.

- Vector marked the Delete from History control as opening a dialog via aria-haspopup=dialog for assistive tech.

- Scott Downe fixed a regression that flipped the Wallpapers pref off, restoring user selections.

- Irene Ni corrected privacy link color and focus styles for contrast and keyboard visibility.

- Reem Hamoui added a wallpaper toggle reset in the Nova customization panel so users can quickly restore default wallpapers without extra steps.

- Reem Hamoui fixed the Customize pencil button to match the Nova spec, aligning placement and iconography for visual consistency.

- Dre updated the 'Fresh new' wallpapers copy to a clearer, localized message for better comprehension.

- Irene Ni fixed Nova privacy link color and focus styles to meet contrast and focus ring guidelines, improving accessibility on New Tab.

- Irene Ni adjusted Sponsored tile character limits to prevent truncation/overflow, yielding cleaner titles across grid and wide tiles.

- Scott Downe fixed a regression that flipped the Wallpapers user pref to false, restoring wallpapers for affected users and preventing unintended disablement.

- Reem Hamoui hooked the wallpaper check into the new toggle logic so the Customization Panel accurately reflects wallpaper availability and state.

- Irene Ni landed Nova UI updates for the Daily Briefing 3-pack card, improving spacing, type scale, and tap targets.

Search and Urlbar

- Marco has fixed a couple of issues with the places databases to try and improve stability. This should help with avoiding users losing bookmarks or favicons.

- Work continues on improving the new separate search bar, e.g. allowing middle click to perform a search in a new tab, and avoiding performing a search when adding a search engine.

- Work also continues on the new Nova layouts.

Smart Window

- Uplifted 10 bugs to the 150.0.1 dot release, addressing initial user feedback from the diary study and Connect

- Search engine switching from the smart bar - Bug 2021973

- Nova styling within Smart Window - Bug 2026794

Storybook/Reusable Components/Acorn Design System

- Dustin converted moz-breadcrumb-group variables into JSON design tokens - Bug 2029181

- Dustin converted moz-box-* variables into JSON design tokens - Bug 2029180

- Dustin converted moz-promo variables into JSON design tokens - Bug 2029190

- Dustin converted moz-reorderable-list variables into JSON design tokens - Bug 2029191

- Dustin converted moz-visual-picker variables into JSON design tokens - Bug 2029193 (Convert moz-visual-picker-item variables into JSON design tokens)

- Dustin updated browser-shared.css so it passes use-design-tokens - Bug 2022985

- Dustin updated popup.css so it passes use-design-tokens - Bug 2022979

- Jon added opacity tokens and added opacity to the use-design-tokens stylelint rule - Bug 1955325

- Jon converted toolbar design tokens to JSON - Bug 2017970

- Anna fixed a size inconsistency in moz-select with the panel-list drop-down - Bug 2032365 (Applications Action drop-down menus sometimes have a different size when opened)

- Anna fixed an issue with the disabled state of the moz-radio component - Bug 2027123 (moz-radio disabled state cannot be changed while the moz-radio-group is disabled)

- Anna updated the moz-button and moz-box-button components to prevent label corruption when accesskeys are present and the label changes - Bug 2022326 (moz-button with accesskey label becomes corrupted when l10nId updates dynamically)

UX Fundamentals

- The error pages shown when a server sends back an invalid response header or an unsupported content encoding now display accurate, context-specific messages. The invalid response header page also gained a helpful list of next steps. - Bug 2027209

- In progress: The error page illustrations are being replaced with new artwork, and the system now supports per-illustration size configuration, so each image can define its own dimensions. - Bug 2031837

Settings Redesign

- Tim converted the settings related to the Accessibility page to config-based settings - Bug 1968116

- Benjamin converted the settings related to the Privacy & Security page to config-based settings - Bug 1968112

- Finn integrated the Firefox Labs page into the setting-pane config - Bug 2021047

- Anna converted the Firefox Updates section to config-based prefs - Bug 1990961