08 Jun 2026

Planet KDE | English

RIT Unny open source font

E.P. Unny is a notable Indian political cartoonist who has worked with the famed Shankar's Weekly and with newspapers such as The Hindu and Indian Express.

Since 2020, all of his cartoons (including those from 2025 and 2026 so far) have been published every week, open access, by the Sayahna Foundation.

For the cartoons, Unny was using a font based on his handwriting style, designed by K.H. Hussain of Rachana. Recently, Rachana Institute of Typography developed a new font for use in the cartoons, designed by Varshini KVSS and 'Kandam Collective', and released it as open source; see the specimen and download links.

The character set of the font is Latin only. There are plenty of alternate glyphs (for upper and lower case i, j, l, g, etc.; for instance, check the double 'l' in 'Intelligence' on the specimen above). These characters are rendered alternately to give a feel of the randomness that handwriting evokes.

The source (and issue tracker) are available at the RIT fonts repository.

08 Jun 2026 11:14am GMT

07 Jun 2026

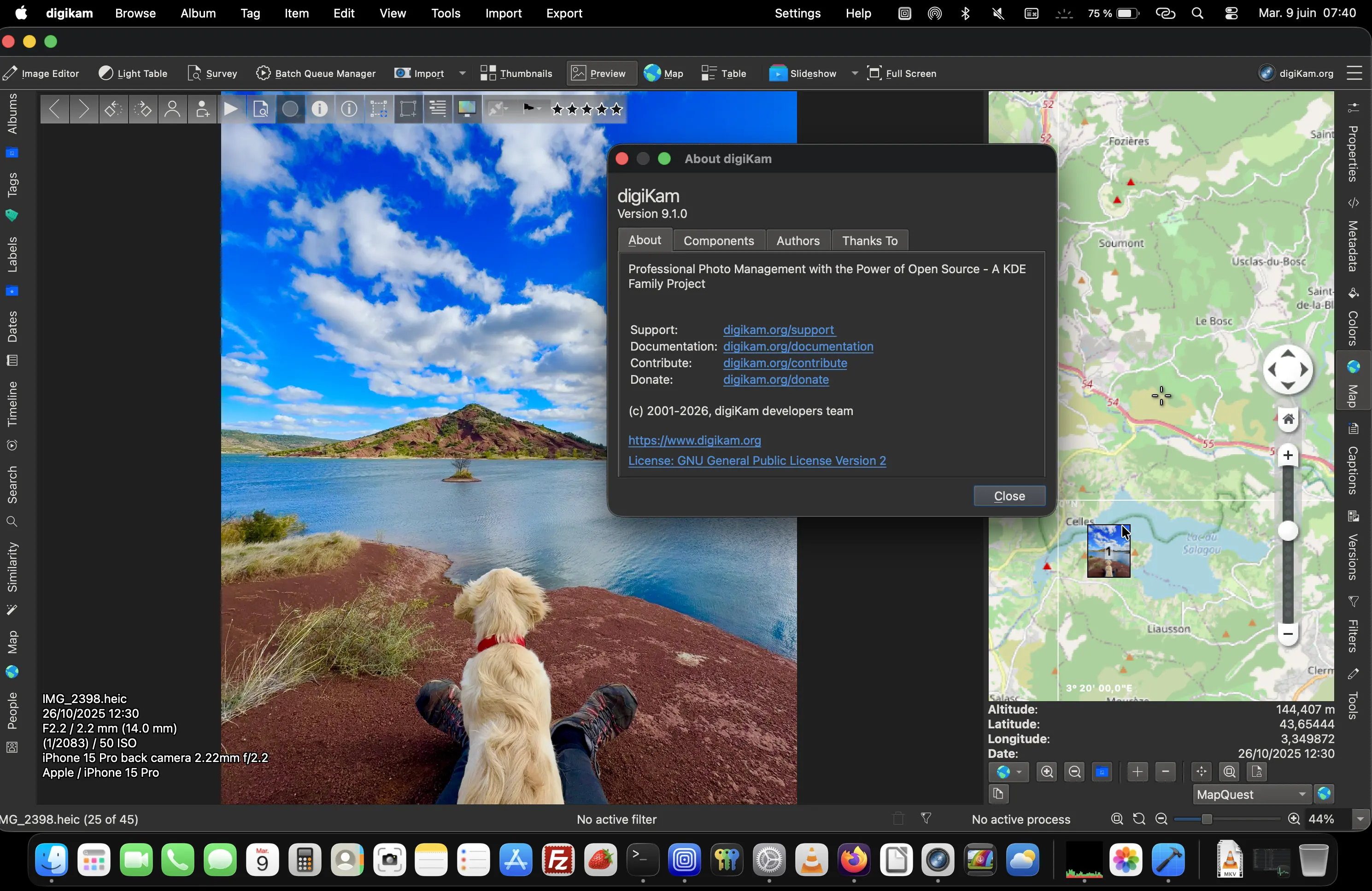

digiKam 9.1.0 is released

Dear digiKam fans and users,

After three months of active development, bug triage, and feature integration, the digiKam team is proud to announce the stable release of digiKam 9.1.0. This version builds on the foundation of 9.0.0, introducing new features, performance improvements, and a significant number of bug fixes to enhance stability, usability, and workflow efficiency. This release focuses on database migration, preview enhancements, advanced search, and usability improvements across the board.

07 Jun 2026 12:00am GMT

06 Jun 2026

Size Matters

So… more Oxygen icons

This weekend I've been filling some of the more obvious holes in the icon set, and these two recently landed.

The first one is for KeepSecret, the application that is replacing the old KWallet.

Security icons are funny. There are only so many ways to draw "keep your stuff safe" before you end up with another lock icon. Or a key. Or a lock pretending not to be a lock.

I ended up going with a small vault door. Which is basically also a lock pretending not to be a lock, but at least it looks kinda cool, and more importantly I think it looks as smart as our users are...

The other one is for generic package managers.

And apparently software still arrives in wooden crates.

I don't know who started this visual convention, but after all these years I kinda stopped questioning it. Software packages are boxes. Everybody understands boxes. Let's move on and debate the really important things, such as the relevance of the floppy disk as the save icon.

One thing that I love about icon design is how different the process becomes depending on size.

At large sizes you can spend hours messing around with materials, reflections, shadows and tiny details.

At small sizes all that disappears and suddenly you are fighting for every pixel, trying to keep the meaningful details and creating a source SVG monster in the Oxygen repo.

I think icon design is really just the art of deciding what can be removed before the whole thing falls apart.

Talking about things that may or may not fall apart…

I've also been spending some time exploring styling in QML and thinking about possible future directions for O².

Nothing concrete yet.

Mostly experiments.

Some ideas are sensible.

Some ideas are absolutely not sensible. On purpose.

Those are usually the interesting ones.

The main goal is really to show the range of things I would like to see available in Union.

Right now I'm more interested in exploring the range of possibilities than deciding what a final result should look like. There are some surprisingly fun things hidden in there.

If all goes well we will soonish publish a video with KDAB showing some of these experiments and crazy ideas.

Or at least the ones that don't completely explode before then.

So stay tuned.

And as always, thanks for using Oxygen.

This old project is still finding new excuses to keep going.

06 Jun 2026 9:40pm GMT

Kdenlive 26.04.2 released

The second maintenance release of the 26.04 series is out with the usual batch of bug fixes and improvements for workflow and stability. This update comes with fixes to rendering, timeline editing, project file handling, and Windows, MacOS, AppImage and Flatpak packaging.

One noteworthy fix in this version finally allows exporting your videos to a network drive on Windows, closing a 4-year-old bug.

Head to our download section to get the latest binaries, or check the updates from your package manager. Please note that for Linux only AppImage and Flatpak are supported by the Kdenlive team.

For the full changelog continue reading on kdenlive.org.

06 Jun 2026 12:45pm GMT

This Week in Plasma: Fixing all the things

Welcome to a new issue of This Week in Plasma!

This week the team continued polishing Plasma 6.7 for its release later in the month. As such, this week saw mostly bug fixing.

Notable UI improvements

Plasma 6.7

Hovering over a partially-visible item in the Kickoff Application Launcher widget no longer unexpectedly automatically scrolls the view to show the entire item. (Christoph Wolk, KDE Bugzilla #426015)

Spectacle's feature to automatically copy the screenshot to the clipboard is now temporarily turned off while extracting text via OCR, so that the extracted text makes it onto the clipboard instead. (Tobias Fella, KDE Bugzilla #520758)

When launching an app from a terminal window, the animated launch feedback effect now ends after the app launches, as expected. (Nicolas Fella, Vlad Zahorodnii, and Akseli Lahtinen, KDE Bugzilla #459986)

Plasma 6.8

Notifications about connected devices having low battery power are now shown in full-screen apps so you won't miss them, just like notifications for the internal battery being low. (Jan Bidler, plasma-workspace MR #6623)

The Peek at Desktop and Window Aperture effects now respect the system-wide animation speed setting. (Sudip Datta, KDE Bugzilla #490531)

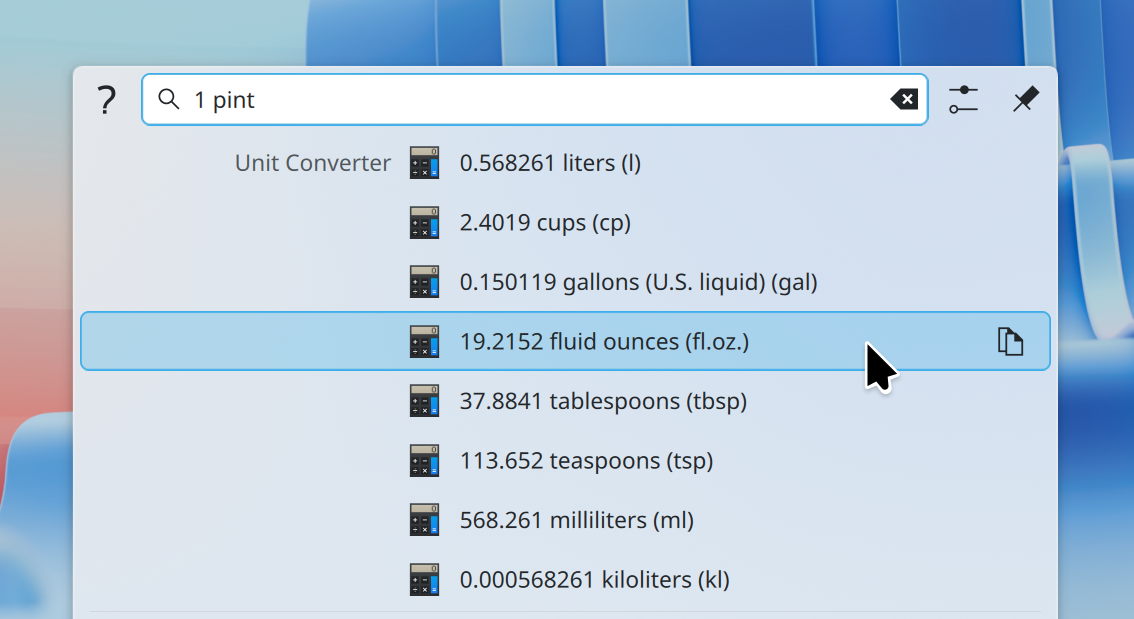

Frameworks 6.27

KRunner now assumes you mean US pints rather than Imperial pints when you convert to or from them, since pints are still official in the USA. (Nate Graham, kunitconversion MR #86)

Disk sizes displayed in various places now fully respect your preference regarding storage units. (Tobias Fella, KDE Bugzilla #518493)
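To give a sense of why the pint default matters: a US liquid pint is exactly 473.176473 mL and an Imperial pint exactly 568.26125 mL, so the Imperial one is about 20% larger. The following C++ sketch only illustrates that arithmetic; it is not kunitconversion's code, and the names (PintKind, pintsToMillilitres, imperialToUsRatio) are hypothetical.

```cpp
// Millilitres per pint, both exact by definition:
// US liquid pint = 1/8 US gallon (3.785411784 L),
// Imperial pint  = 1/8 Imperial gallon (4.54609 L).
constexpr double kUsPintMl = 473.176473;
constexpr double kImperialPintMl = 568.26125;

// Hypothetical selector for which pint definition a conversion assumes.
enum class PintKind { US, Imperial };

// Convert a volume in pints to millilitres for the chosen pint definition.
double pintsToMillilitres(double pints, PintKind kind)
{
    return pints * (kind == PintKind::US ? kUsPintMl : kImperialPintMl);
}

// How much larger an Imperial pint is than a US pint (roughly 1.2).
double imperialToUsRatio()
{
    return kImperialPintMl / kUsPintMl;
}
```

Converting "2 pints" therefore gives about 946 mL under the US assumption but about 1137 mL under the Imperial one.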

Notable bug fixes

Plasma 6.6.6

Fixed a case where Plasma could crash while refreshing the list of nearby wireless networks. (David Edmundson, KDE Bugzilla #519739)

Fixed an issue that reset custom tiling layouts on portrait-rotated monitors after restarting the system. (Hynek Schlindenbuch, KDE Bugzilla #514355)

Searching for an app in the Kicker Application Menu widget and launching one that isn't the first item in the search results list no longer launches it twice. (Christoph Wolk, KDE Bugzilla #521001)

Plasma 6.7

Fixed a regression in VNC-based screen sharing that prevented the Ctrl and Alt modifier from being sent to the machine being controlled. (David Edmundson, KDE Bugzilla #519690)

Fixed an issue that could make Plasma freeze if you quickly switch months in the Digital Clock widget by dragging on the calendar view multiple times in quick succession. (Sander Wolswijk, KDE Bugzilla #511028)

Made the Task Manager Widget's "Move to Desktop" feature work on grouped tasks. (Hynek Schlindenbuch, KDE Bugzilla #520777)

Fixed two issues with the Grouping widget: not re-appearing instantly after undoing deleting it, and a phantom header remaining visible after removing the last app from it. (Tobias Fella, KDE Bugzilla #517044 and KDE Bugzilla #517043)

Fixed a variety of issues with icons or list items not properly reversing themselves when using a right-to-left language like Arabic or Hebrew. (Tobias Fella and Akseli Lahtinen, KDE Bugzilla #518932, KDE Bugzilla #518909, KDE Bugzilla #518935, KDE Bugzilla #518835, and KDE Bugzilla #518837)

Made System Settings raise and focus its window as expected when launched using a higher-than-default level of focus stealing prevention. (Kai Uwe Broulik, systemsettings MR #408)

Frameworks 6.27

Switching between light and dark Global Themes no longer sometimes results in various UI elements in Plasma only changing their colors halfway. (Marco Martin, KDE Bugzilla #503671)

Fixed an issue saving and retrieving config data in cases where a setting has been given a system-wide default value by the OS vendor that differs from KDE's own default value. (Nicolas Fella, KDE Bugzilla #509416)

Fixed various related issues with network shares auto-mounted using systemd, including the trash not working properly, and appearing strangely in GUI apps. (Oliver Schramm, KDE Bugzilla #518012)

Fixed an issue that made it impossible to rename files on the desktop via the "Suggest new name" feature in the overwrite dialog. (Akseli Lahtinen, KDE Bugzilla #509461)

How you can help

KDE has become important in the world, and your time and contributions have helped us get there. As we grow, we need your support to keep KDE sustainable.

Would you like to help put together this weekly report? Introduce yourself in the Matrix room and join the team!

Beyond that, you can help KDE by directly getting involved in any other projects. Donating time is actually more impactful than donating money. Each contributor makes a huge difference in KDE - you are not a number or a cog in a machine! You don't have to be a programmer, either; many other opportunities exist.

You can also help out by making a donation! This helps cover operational costs, salaries, travel expenses for contributors, and in general just keeps KDE bringing Free Software to the world.

To get a new Plasma feature or a bug fix mentioned here

Push a commit to the relevant merge request on invent.kde.org.

06 Jun 2026 12:00am GMT

05 Jun 2026

Web Review, Week 2026-23

Let's go for my web review for the week 2026-23.

"But it happened."

Tags: tech, google, attention-economy, business

Good point, the booing during Eric Schmidt's commencement speech is likely not just about him talking about AI at some point. You see, the man has very heavy baggage… He's one of the architects of the current dystopia but won't acknowledge it.

https://www.youtube.com/watch?v=tlQ7EoJDTQY

AI didn't break the web. The dotcons did - AI just turned up the volume

Tags: tech, copyright, commons, web, ai, machine-learning, gpt, enclosure

Indeed the trend wasn't new. It's "just" the icing on the cake from the enclosure point of view.

https://hamishcampbell.com/ai-didnt-break-the-web-the-dotcons-did-ai-just-turned-up-the-volume/

Unlawful by design: Exposing the human rights costs of generative AI

Tags: tech, ai, machine-learning, gpt, ethics, law

When Amnesty International feels it has to publish a 44-page briefing pointing out what's wrong with your approach and business… it'd be nice to pay attention.

https://www.amnesty.org/en/documents/pol40/0996/2026/en/

About rsync slopocalypse

Tags: tech, ai, machine-learning, copilot, quality

Indeed, if the rsync maintainer can't handle a coding assistant properly… who can?

https://teh.entar.net/@spacehobo/116659545246426837

When Other Games Chased Polygons, Blade Runner Chased Atmosphere

Tags: tech, game, graphics, 2d, 3d

There was an era of hybrid techniques in video games before the industry mostly went full real-time 3D. It gave interesting results; here is an example.

https://gardinerbryant.com/when-other-games-chased-polygons/

Avoid using "<![CDATA[ ... ]]>" in RSS

Tags: tech, blog, rss

Good point indeed, need to review my own feed next time I get the chance.

https://waspdev.com/articles/2026-05-11/avoid-using-cdata-in-rss

You Don't Love systemd Timers Enough

Tags: tech, linux, systemd, time

Good primer on systemd timers. Indeed it's really one of the nice systemd features.

https://blog.tjll.net/you-dont-love-systemd-timers-enough/

5 PostgreSQL locking behaviors that trip people up

Tags: tech, postgresql, databases, distributed

Mind those traps when dealing with such a database. There are locks you don't necessarily expect.

https://dev.to/shinyakato_/5-postgresql-locking-behaviors-that-trip-people-up-4k7n

You probably don't need Yocto, and that's fine

Tags: tech, linux, embedded, yocto, debian

Indeed, teams reach for Yocto by default a bit too much. It's good to have an idea of when you really need it and when you can go for simpler options.

https://sigma-star.at/blog/2026/05/you-probably-dont-need-yocto-and-thats-fine/

Nine Ways to Do Inheritance in Rust, a Language Without Inheritance

Tags: tech, rust, type-systems, object-oriented

Some of the examples lean on macro trickery. Still this gives a good example of the flexibility you get with the trait system.

The C++ Standard Library Has Been Walking Itself Back for Fifteen Years, and the Receipts Are Public

Tags: tech, c++, standard, culture

Cold and harsh look at how the C++ standard library evolves. There's indeed a problem in the fact that nothing ever gets removed.

You Must Fix Your Asserts

Tags: tech, reliability, failure, debugging

Good point, disabling asserts in production is not the best default position to have.

https://kristoff.it/blog/fix-your-asserts/

What (almost) Everyone Gets Wrong About TDD & BDD

Tags: tech, tdd, atdd, history

Good summary of how the terms evolved. They are more tied to each other than most people think.

https://antonymarcano.substack.com/p/what-almost-everyone-gets-wrong-about-c05

normalize patience

Tags: tech, culture, patience, time, productivity, attention-economy

Things which matter take time. The calls for productivity, and technology pushing us toward faster responses on everything, are killing what makes our humanity.

https://rnotte.art/normalize-patience/

Bye for now!

05 Jun 2026 4:39pm GMT

04 Jun 2026

Week 2 — Porting KeepSecret to the Kirigami ActionCollection API

This week, I completed the port of KeepSecret's actions to the new org.kde.kirigami.actioncollection API from kirigami-app-components, a recently introduced library developed by Marco Martin (notmart).

Before working on this task, actions were defined separately throughout the UI. With ActionCollection, I could define each action once in a central place and then reuse it wherever it was needed. This makes the code easier to maintain and also integrates KeepSecret with KDE's standard shortcut system, allowing users to configure shortcuts through the usual KDE interface.

The main change was creating a dedicated Actions.qml file with AC.ActionCollectionManager as the root element. Inside it, actions are now centrally defined across three collections - one for the wallet list, one for wallet contents, and one for item details. These include actions such as New Wallet, New Entry, Search, Lock, Unlock, Copy Password, Save, Revert, and Delete.

During review, additional actions were added, dependencies were simplified, and Flatpak build issues were addressed. Since kirigami-app-components is still very new, some CI and packaging adjustments were needed before everything built correctly.

The merge request !29 has now been reviewed, approved, and merged into KeepSecret.

Next up is the Import/Export feature - I'll be studying KeepSecret's wallet data structures and designing the file format this week.

04 Jun 2026 7:54pm GMT

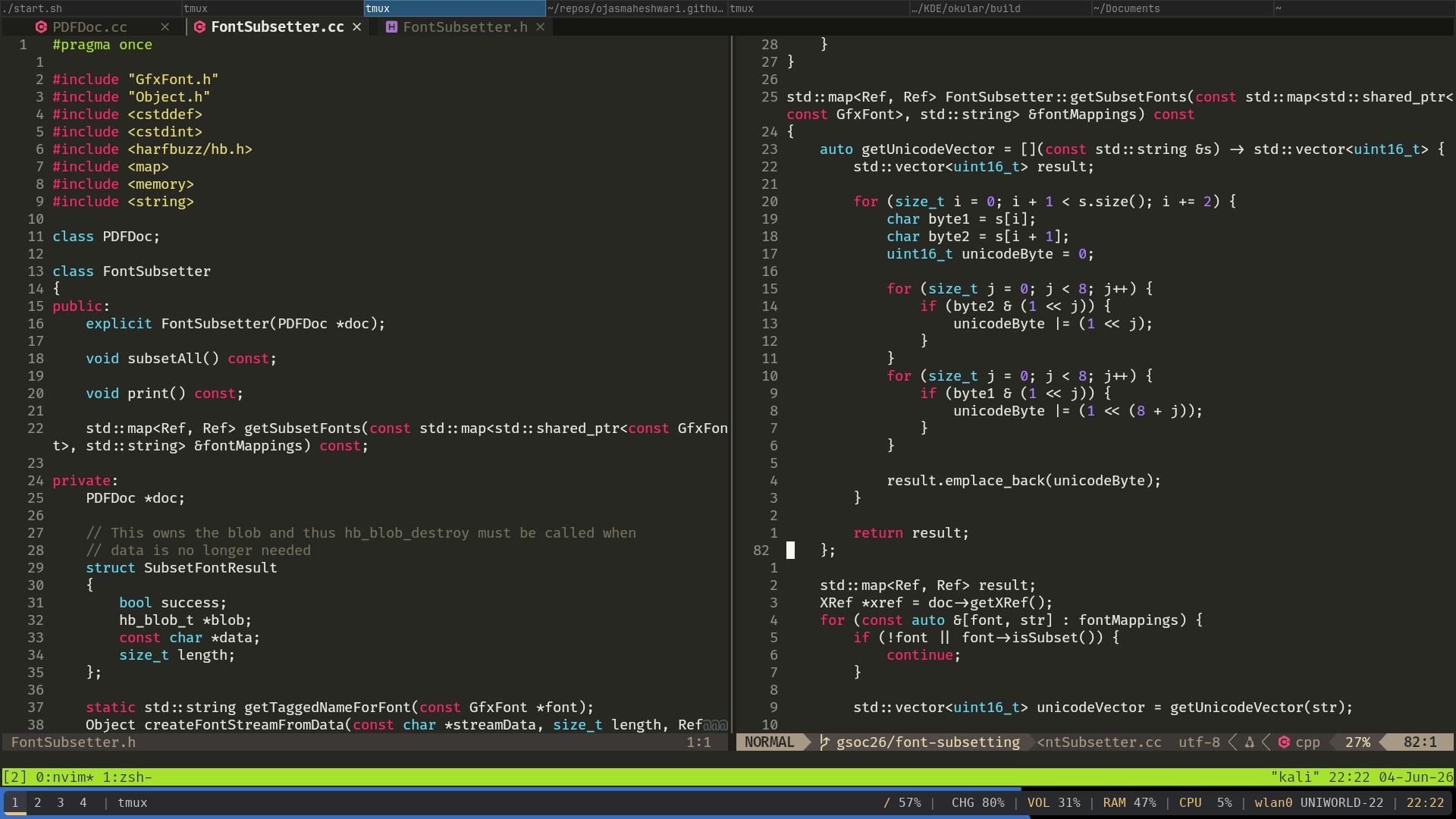

Community Bonding + Week 1 Status Update | GSoC '26

Hello Reader!

In case you don't know me (quite likely 😆), I am Ojas Maheshwari (@the_epicman:matrix.org) and I am currently working in the Google Summer of Code program for the KDE community on a project about Font Subsetting in Poppler under my mentor Albert Astals Cid.

Community Bonding

If I am being honest, I had already bonded with the Poppler community before GSoC started 😅

I had a lot of conversations with Albert, Sune, lbaudin and ats, and they already helped me a lot with my technical problems with my contributions prior to GSoC :D

However, I also managed to bond with other fellow GSoC contributors this summer so it's a win in my book :D

Week 1

I learnt a lot about how PDF files work this week.

Technical terms like Indirect Objects, Default Appearance, and Appearance Streams, which used to sound confusing to me, finally clicked.

I got more comfortable with traversing and using PDF data structures such as Objects, Arrays, Dicts, Streams etc.

The Happy News is that I got the Font Subsetting to work for FreeText Annotations.

I have raised a Draft MR which should be ready for review by the end of this week. My plan is to get it fully correct and merged first, and then move on to doing the same thing for forms.

Code screenshot to make the blog look cooler

How it works

If you are interested in knowing what my current approach is:

- The whole subsetting runs only when the user saves the document.

- We go over all resources (for now, just FreeText annotations) that were modified, and call a subsetFonts method on them.

- This is a method implemented by every class that needs subsetting. Inside it, we pass the data on which "font" renders which part of the "text" to our FontSubsetter::getSubsetFonts:

    std::map<Ref, Ref> FontSubsetter::getSubsetFonts(const std::map<std::shared_ptr<const GfxFont>, std::string> &fontMappings) const

- The function generates the subset font objects, adds them to the PDF, and returns a mapping from the old font refs to the new font refs. The subsetting itself is done with a library called HarfBuzz.

- Then the subsetFonts function simply replaces the old refs with the new ones inside the font subdictionary of the annotation's appearance stream.
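To make the bookkeeping around the last two steps more concrete, here is a small self-contained C++ sketch. It does not use Poppler's API: Ref here is a simplified stand-in, fonts are keyed by resource name rather than by GfxFont, and collectUsedCodePoints and remapFontRefs are hypothetical helpers. The actual glyph subsetting, which the post says is done with HarfBuzz, is left out.

```cpp
#include <map>
#include <set>
#include <string>

// Hypothetical, simplified stand-in for a PDF object reference
// (object number + generation number); not Poppler's Ref type.
struct Ref {
    int num = 0;
    int gen = 0;
    bool operator<(const Ref &other) const
    {
        return num != other.num ? num < other.num : gen < other.gen;
    }
};

// Reduce "which font renders which text" to the set of distinct
// code points each font has to keep in its subset.
std::map<std::string, std::set<char32_t>>
collectUsedCodePoints(const std::map<std::string, std::u32string> &textPerFont)
{
    std::map<std::string, std::set<char32_t>> used;
    for (const auto &[fontName, text] : textPerFont) {
        used[fontName].insert(text.begin(), text.end());
    }
    return used;
}

// Given old-ref -> new-ref mappings, rewrite a font subdictionary
// (resource name -> font ref) in place. Fonts without a subset are left
// untouched. Returns the number of entries that were replaced.
int remapFontRefs(std::map<std::string, Ref> &fontDict, const std::map<Ref, Ref> &oldToNew)
{
    int replaced = 0;
    for (auto &entry : fontDict) {
        const auto it = oldToNew.find(entry.second);
        if (it != oldToNew.end()) {
            entry.second = it->second;
            ++replaced;
        }
    }
    return replaced;
}
```

For example, text U"hello" rendered with font F1 needs only four distinct code points (h, e, l, o), and a mapping from object 5 to object 12 rewrites only the F1 entry of the subdictionary.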

Thanks for reading

Although this blog might look small, it took me more than 1.5 hours to write this because I kept writing and deleting things. I am hoping I get better at writing blogs in the future.

But anyways, thanks for reading!

And have a great day!

Byee 😃!

04 Jun 2026 5:39pm GMT

Lessons Learnt from Our BI Journey: From Open Source to SDV Products Development

Luis Cañas Díaz and I shared lessons from our BI journey at the Eclipse Foundation SDV webinar - from open source to automotive. Methodology, real use cases, and hard-won lessons on data, metrics, and insights.

04 Jun 2026 5:15pm GMT

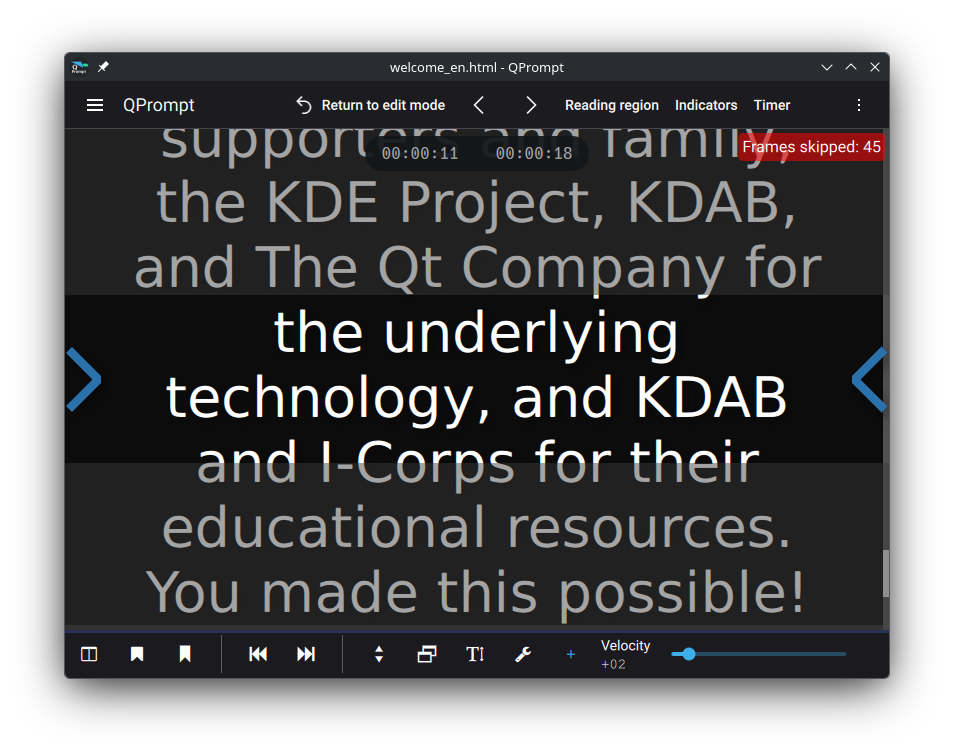

How long does it take for an Item to become visible?

Frames skipped counter in application

How long does it take for an Item that you've just loaded to become visible? Answering this question allows for a way to detect what some users would perceive as "frame drops". I write that in quotes because Qt Quick only draws frames when needed, meaning it doesn't drop frames; but it can show them later than one would expect. That is what we would like to identify: When components are drawn late, and by how many milliseconds or frames are they late?

I've come up with a simple solution (code below the article) for measuring this. Items being measured must inherit a class based on QQuickItem that has a connection on QQuickItem::visibleChanged. Its visible property should be false by default. When visible becomes true, a slot starts measuring elapsed time and creates a direct connection to QQuickWindow::afterFrameEnd. Once the scene graph has submitted a frame, the connected slot takes the measurement and disconnects itself, so that further frames don't trigger it again.

That alone isn't enough, however. If there were other elements on the scene being animated (say from the render thread via an Animator), those would trigger a frame swap before our item would have had a chance to be drawn, causing our measurement to be taken prematurely.

We need a way of knowing when the frame that draws our component is the one that got swapped into view. Enter QQuickItem::ensurePolished. Calling this function ensures that QQuickItem::updatePolish will be called when the scene graph is ready to render our item. We override QQuickItem::updatePolish and use it to set a flag telling us that the next frame to be displayed will correspond to the component we're measuring. Lastly, we read this flag during the next call to QQuickWindow::afterFrameEnd, effectively using it to trigger the elapsed time measurement only when our item is swapped onto the screen.

There is a variable amount of time between the last user interaction and the moment a frame can be rendered; because of that, a measurement in milliseconds is only accurate to the average time it takes for one frame to be rendered immediately after the previous frame. That turns out to be 1 second divided by the display's refresh rate. We can use QScreen::refreshRate, which gives us this value in hertz. For a 60 Hz display, a frame's time (T) would be 1000 ms / 60 Hz ≈ 16.7 ms. Any measured time between zero and T would mean an instant frame swap. If we divide the measured time by T and apply a floor function to the result, we get the number of frames dropped while making the component visible, which is a more consistent measurement than the number of milliseconds passed. For a well-optimized program the output would be zero, one, or a positive integer very close to that. For more information about the rendering process, you can read scene graph and rendering from Qt's documentation.
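The arithmetic above fits in two small helper functions. They are a sketch, not part of the TimedItem class shown at the end of the article, and the names frameTimeMs and framesLate are hypothetical; in a Qt application the refresh rate would come from QScreen::refreshRate() and the elapsed time from the item's timeToDisplay property.

```cpp
#include <cmath>

// Duration of one frame in milliseconds: T = 1000 ms / refresh rate (Hz).
// refreshRateHz is assumed to be > 0.
double frameTimeMs(double refreshRateHz)
{
    return 1000.0 / refreshRateHz;
}

// Number of frames an item was late: floor(elapsed / T).
// A result of 0 means the item appeared on the very next frame swap.
long long framesLate(double elapsedMs, double refreshRateHz)
{
    return static_cast<long long>(std::floor(elapsedMs / frameTimeMs(refreshRateHz)));
}
```

For instance, 40 ms measured on a 60 Hz display gives floor(40 / 16.7) = 2 frames late, while the same 40 ms on a 120 Hz display counts as 4 frames.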

Make C++ items visible during their instantiation, or they won't show up on screen. This QQuickItem subclass differs from its parent in that the Item is not visible by default. We set visible to false from the C++ constructor because the order in which initial properties are evaluated and assigned in QML differs depending on the approach used to instantiate the Item and assign its initial properties. You may set the initial visible property of an item in QML to false, then make it true during its instantiation as a delegate somewhere, only for the QML engine to optimize this and evaluate the initial value directly to true, so that the visibleChanged signal is never emitted because there was, effectively, no change to the visible property. Setting the visibility to false from the constructor in C++ is a simple way to guarantee that visibleChanged will be triggered upon any initialization of the visible property in QML.
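The reasoning above rests on one rule: a change signal is only emitted when the value actually changes. A minimal plain C++ model of that rule shows why starting from false in the constructor guarantees a notification. NotifyingBool is a hypothetical class standing in for the visible property; it is not Qt code.

```cpp
#include <functional>
#include <utility>

// Plain C++ model of a Qt-style property: the "changed" callback fires
// only when the stored value actually changes, like visibleChanged.
class NotifyingBool {
public:
    explicit NotifyingBool(bool initial) : m_value(initial) {}

    void onChanged(std::function<void(bool)> callback) { m_onChanged = std::move(callback); }

    void set(bool value)
    {
        if (value == m_value)
            return; // same value: no notification at all
        m_value = value;
        if (m_onChanged)
            m_onChanged(m_value);
    }

private:
    bool m_value;
    std::function<void(bool)> m_onChanged;
};
```

A property that already starts as true never notifies when set to true again, whereas one forced to false at construction always notifies on its first assignment to true.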

The code for the QQuickItem subclass described in this article is documented below. Hope you find it useful. Reach out to us if you need help profiling software, would like to receive our training courses, or need help developing tools such as this.

Best regards, Javier

Code

#include <QElapsedTimer>
#include <QQuickItem>
#include <QQuickWindow>

class TimedItem : public QQuickItem
{
    Q_OBJECT
    QML_ELEMENT
    Q_PROPERTY(qint64 timeToDisplay READ timeToDisplay NOTIFY timeToDisplayChanged FINAL)

public:
    explicit TimedItem(QQuickItem *parent = nullptr)
        : QQuickItem(parent)
    {
        // Hidden by default, so that any assignment of visible: true from QML emits visibleChanged
        setVisible(false);
        // When made visible, measure time to display
        QObject::connect(this, &QQuickItem::visibleChanged, this, &TimedItem::startMeasuringTimeToDisplay, Qt::DirectConnection);
    }

    qint64 timeToDisplay() const
    {
        return m_timeToDisplay;
    }

signals:
    void timeToDisplayChanged();

protected:
    void updatePolish() override
    {
        // The frame for this component will be rendered after this
        m_frameReady = true;
    }

private:
    void startMeasuringTimeToDisplay()
    {
        // window() is null until the item has been added to a scene
        if (!isVisible() || !window())
            return;
        // Reset
        m_frameReady = false;
        // Attempt to take measurement after frame swaps
        QObject::connect(window(), &QQuickWindow::afterFrameEnd, this, &TimedItem::measure,
                         static_cast<Qt::ConnectionType>(Qt::DirectConnection | Qt::UniqueConnection));
        // Force polish, ensuring elapsed measurement is taken on the right frame
        ensurePolished();
        // Take initial measurement
        m_elapsedTimer.start();
    }

    void measure()
    {
        // This will be called for every frame until the right frame has been rendered
        if (m_frameReady) {
            // Measure elapsed time
            m_timeToDisplay = m_elapsedTimer.elapsed();
            // Prevent measuring further frames
            QObject::disconnect(window(), &QQuickWindow::afterFrameEnd, this, &TimedItem::measure);
            // Propagate measured time
            emit timeToDisplayChanged();
        }
    }

    qint64 m_timeToDisplay = 0;
    QElapsedTimer m_elapsedTimer; // started when the item becomes visible
    bool m_frameReady = false;
};

The post How long does it take for an Item to become visible? appeared first on KDAB.

04 Jun 2026 2:18pm GMT

Qt Creator 20 RC released

We are happy to announce the release of Qt Creator 20 RC!

04 Jun 2026 9:41am GMT

KDE Gear 26.04.2

Over 180 individual programs plus dozens of programmer libraries and feature plugins are released simultaneously as part of KDE Gear.

Today they all get new bugfix source releases with updated translations, including:

- akregator: Fix a hang on launch on arm64 (Commit)

- ksanecore: Fix crash on skanlite startup (Commit, fixes bug #517465)

- koko: Fix move to trash action overriding image editor delete actions (Commit, fixes bug #519784)

Distro and app store packagers should update their application packages.

- 26.04 release notes for information on tarballs and known issues.

- Package download wiki page

- 26.04.2 source info page

- 26.04.2 full changelog

04 Jun 2026 12:00am GMT

02 Jun 2026

EX-11: Prepping for Plasma’s Last X11-Supported Release

When we first announced the transition to Plasma Wayland, one of Martin's slides stated, "It's done when it's done!"

That talk was 15 years ago!

Nothing in software is ever truly "done", but as announced previously, we are finally at a point where we're ready to retire the X11 session and put all our focus on the future.

As of today, the Plasma X11 session you can log into has been officially removed, and we will start a mass cleanup of X11-specific code soon.

When does it take effect?

This change will be included in Plasma 6.8, which will be released in around five months.

What's Changed?

In Plasma 6.8, there will be no X11 session in the login screen. There will only be a Wayland session available to log into.

In 6.8, all X11-specific code paths in Plasma for Plasma Shell, System Settings, and device configuration will be gone.

What's stayed the same?

XWayland support remains present. You can keep using your X11 applications, and our XWayland application support is second to none.

If you use KDE applications on another desktop environment, this change will have no effect. KDE applications will continue to work in X11 for the foreseeable future.

Plasma Login Manager will continue to be able to log you into X11 sessions of other desktop environments.

What's Next?

The possibilities this opens up are very exciting. Until now, on the desktop side, we've had to target the lowest common denominator or be stuck trying to maintain two conflicting code paths. It was absolutely the right choice to do a gradual transition and approach things this way, but that approach has its limits.

Moving forward with a single code path going through Wayland is going to allow us to bring new performance improvements, memory optimisations, and brand new exciting features throughout Plasma.

How Ready Are We?

Our internal metrics within KDE show that over 95% of users of Plasma 6.6 are on Wayland, with a gradual increase every release. The metrics also show that basically no one is testing or developing Plasma on X11 anymore. The platform was already, for all intents and purposes, abandoned by KDE contributors.

We have every reason to trust this metric data, as it is exactly in line with what Sentry (our automatic crash reporting tool) shows for newly encountered crashes.

For transparency, the one caveat in all of the above is that I've deliberately always focused on people using the latest Plasma release. We do still have a sizable chunk of X11 users on Plasma 5.27. Including them, the total Wayland adoption rate is about 76%. But back then, Wayland wasn't the default session type, so it's hardly a surprise those users are still on X11. Things have come a massively long way in the three years since Plasma 5.27 was released.

Anyone still using Plasma 5.27 - or any release older than Plasma 6.8 - won't be affected by what we do in Plasma 6.8, and nothing will be applied retroactively.

Still Have Issues with Wayland on 6.7?

Whilst we have had full confidence since Plasma 6.0 that our Wayland session provides the better overall experience, we are aware that not everything behaves exactly the same, especially in specialised areas.

We are not expecting a completely seamless transition for everyone. Custom scripts, tools used and even workflows might have to change. But we are aiming to offer a transition where there is still a way to accomplish all your day-to-day tasks.

Plasma 6.7 is the last release that will include an X11 session, and it's coming out in just a few days. If you still have issues that force you back to X11 we would love to hear from you.

We can't promise to get everything fixed in time for 6.8, but we can promise to listen and be aware. People's remaining pain points are and will be on our radar, so please take this time to communicate them.

02 Jun 2026 12:38pm GMT

Krita 5.3.2.1 Released!

Hot on the heels of Krita 5.3.2, we're releasing Krita 5.3.2.1. 5.3.2 had a bug with the layer docker that was very pervasive, and could cause anything from unsynced layers to crashes to groups not behaving as they should. 5.3.2.1 fixes this. Furthermore, we also had some issues where the Windows packages weren't signed. This too should now be fixed.

⚠️ Warning

We consider Krita 5.3.2.1 suitable for productive work; 6.0.2.1 is more experimental because of the many changes from Qt5 to Qt6.

Download 5.3.2.1

Windows

If you're using the portable zip files, just open the zip file in Explorer and drag the folder somewhere convenient, then double-click on the Krita icon in the folder. This will not impact an installed version of Krita, though it will share your settings and custom resources with your regular installed version of Krita. For reporting crashes, also get the debug symbols folder.

ⓘ Note

We are no longer making 32-bit Windows builds.

- 64 bits Windows Installer: krita-x64-5.3.2.1-setup.exe

- Portable 64 bits Windows: krita-x64-5.3.2.1.zip

Linux

Note: starting with recent releases, the minimum supported distro versions may change.

⚠️ Warning

Starting with recent AppImage runtime updates, some AppImageLauncher versions may be incompatible. See AppImage runtime docs for troubleshooting.

- 64 bits Linux: krita-5.3.2.1-x86_64.AppImage

MacOS

Note: minimum supported MacOS may change between releases.

- MacOS disk image: krita-5.3.2.1-signed.dmg

Android

Krita on Android is still beta; tablets only.

Source code

Sources are the same as 6.0.2.1

md5sum

For all downloads, visit https://download.kde.org/stable/krita/5.3.2.1/ and click on "Details" to get the hashes.

Key

See the 6.0.2.1 key section.

Download 6.0.2.1

Windows

If you're using the portable zip files, just open the zip file in Explorer and drag the folder somewhere convenient, then double-click on the Krita icon in the folder. This will not impact an installed version of Krita, though it will share your settings and custom resources with your regular installed version of Krita. For reporting crashes, also get the debug symbols folder.

ⓘ Note

We are no longer making 32-bit Windows builds.

- 64 bits Windows Installer: krita-x64-6.0.2.1-setup.exe

- Portable 64 bits Windows: krita-x64-6.0.2.1.zip

Linux

Note: starting with recent releases, the minimum supported distro versions may change.

⚠️ Warning

Starting with recent AppImage runtime updates, some AppImageLauncher versions may be incompatible. See AppImage runtime docs for troubleshooting.

- 64-bit Linux: krita-6.0.2.1-x86_64.AppImage

MacOS

Note: minimum supported MacOS may change between releases.

- MacOS disk image: krita-6.0.2.1-signed.dmg

Android

Krita 6.0.2 is not yet functional on Android, so we are not making APKs available for sideloading.

Source code

md5sum

For all downloads, visit https://download.kde.org/stable/krita/6.0.2.1/ and click on "Details" to get the hashes.
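As a minimal sketch of checking a download against the hashes listed under "Details", the Python snippet below hashes a file in chunks and compares the result with a value copied from that page. The function names are hypothetical, and it assumes you pass the algorithm matching the hash you copied (for example `md5` or `sha256`); it is not part of any Krita or KDE tooling.

```python
import hashlib


def file_digest(path, algorithm="md5", chunk_size=1 << 20):
    """Hash a file in chunks so large installers never need to fit in memory."""
    h = hashlib.new(algorithm)
    with open(path, "rb") as f:
        # iter() with a sentinel keeps reading until f.read() returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_download(path, expected_hex, algorithm="md5"):
    """Compare the local digest with a hash copied from the Details page."""
    # Normalize whitespace and case, since copied hashes often carry both.
    return file_digest(path, algorithm) == expected_hex.strip().lower()
```

For example, `verify_download("krita-6.0.2.1-x86_64.AppImage", "<hash copied from Details>", "sha256")` returns `True` only when the file matches.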

Key

The Linux AppImage and the source tarballs are signed. You can retrieve the public key here. The signatures are here (filenames ending in .sig).

02 Jun 2026 12:00am GMT

01 Jun 2026

Planet KDE | English


KStars 3.8.3 Released

KStars v3.8.3 was released on 2026.06.01 for Windows, Linux, and macOS.

For Linux users, it's highly recommended to use the official KStars Flatpak hosted at Flathub.

This release brings major improvements to the Mount Modeler with artificial horizon filtering and uniform point distribution, significant connection speed optimizations, better guide streaming integration, and enhanced rotator handling. We've also fixed several scheduler and stability issues reported by the community. Here are the highlights:

Alignment & Mount Modeler

- Christian Kemper added Artificial Horizon filtering to the Mount Modeler wizard, allowing generated alignment points below the active horizon to be automatically filtered out. Candidate coordinate points (both generated AltAz coordinates and snapped catalog objects) are now checked and rejected if they fall into active artificial horizon regions.

- New Uniform Distribution mode generates points spread evenly across the visible sky using a Halton sequence, sampling in AltAz space to guarantee every point is above the configured minimum altitude. Points whose declination exceeds +/-80 degrees after conversion are rejected. The Any Stars, Named Stars, and Any Object modes now adopt this distribution internally and snap each generated position to the nearest qualifying object.

- Auto-sorted wizard output: points added by the wizard are automatically sorted in nearest-neighbour slew order, minimizing total slew distance. Users no longer need to click Sort after running the wizard. Clicking Sort during a run reorders only the remaining unvisited points, leaving completed rows undisturbed.

- The wizard now automatically configures the solver for each alignment point. Blind solves are used by default because no pointing model exists yet at the start of a run, so the mount's reported position may be significantly off. The GOTO mode is forced to Sync so the mount is updated after each successful solve. The original settings are saved and restored when the run finishes, is aborted, or is reset.

- Refactored point generation logic to eliminate duplicated generation and conversion math in the test suite, and improved generator efficiency by using a stateful sequence

- Toni Schriber fixed activation of the rotator button in the align module

- Fixed effective focal length calculation to use radians in the DSLR branch
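The wizard's point generation and ordering can be sketched roughly as follows. This is not the KStars implementation (which is C++); it is a Python illustration under several assumptions: azimuth is measured from north through east, the artificial horizon is simplified to a hypothetical piecewise-linear profile of (azimuth, minimum altitude) pairs rather than KStars' horizon regions, and slew cost is approximated by the angular separation between points. Sampling the sine of the altitude uniformly keeps the points evenly spread by sky area.

```python
import math


def halton(index, base):
    """Radical-inverse (van der Corput) value of index in the given base, in [0, 1)."""
    f, r = 1.0, 0.0
    while index > 0:
        f /= base
        r += f * (index % base)
        index //= base
    return r


def declination(az_deg, alt_deg, lat_deg):
    """Declination of an AltAz point for an observer at lat_deg (azimuth from north)."""
    az, alt, lat = map(math.radians, (az_deg, alt_deg, lat_deg))
    s = math.sin(alt) * math.sin(lat) + math.cos(alt) * math.cos(lat) * math.cos(az)
    return math.degrees(math.asin(max(-1.0, min(1.0, s))))


def horizon_altitude(profile, az):
    """Minimum altitude at azimuth az, linearly interpolated from (az, alt) pairs."""
    pts = sorted(profile)
    # Wrap the last and first points around 360 degrees so every azimuth is bracketed.
    ext = [(pts[-1][0] - 360.0, pts[-1][1])] + pts + [(pts[0][0] + 360.0, pts[0][1])]
    for (a0, h0), (a1, h1) in zip(ext, ext[1:]):
        if a0 <= az <= a1:
            t = 0.0 if a1 == a0 else (az - a0) / (a1 - a0)
            return h0 + t * (h1 - h0)
    return pts[0][1]


def generate_points(n, min_alt, lat, horizon=None, max_abs_dec=80.0, max_tries=10000):
    """Up to n Halton-spread AltAz points above min_alt and the horizon, within the dec limit."""
    s0 = math.sin(math.radians(min_alt))
    points = []
    for i in range(1, max_tries + 1):
        if len(points) == n:
            break
        az = 360.0 * halton(i, 2)
        # Uniform sin(alt) in [sin(min_alt), 1) gives equal-area coverage of the sky cap.
        alt = math.degrees(math.asin(s0 + (1.0 - s0) * halton(i, 3)))
        if horizon is not None and alt < horizon_altitude(horizon, az):
            continue  # hidden behind the (simplified) artificial horizon
        if abs(declination(az, alt, lat)) > max_abs_dec:
            continue  # too close to a celestial pole
        points.append((az, alt))
    return points


def separation(p, q):
    """Great-circle angle in degrees between two (az, alt) points."""
    az1, alt1 = map(math.radians, p)
    az2, alt2 = map(math.radians, q)
    c = math.sin(alt1) * math.sin(alt2) + math.cos(alt1) * math.cos(alt2) * math.cos(az1 - az2)
    return math.degrees(math.acos(max(-1.0, min(1.0, c))))


def nearest_neighbour_order(points, start):
    """Greedy ordering: from start, always slew to the closest unvisited point."""
    remaining = list(points)
    order, current = [], start
    while remaining:
        nxt = min(remaining, key=lambda p: separation(current, p))
        remaining.remove(nxt)
        order.append(nxt)
        current = nxt
    return order
```

Re-sorting only the remaining points during a run would, in this sketch, amount to calling `nearest_neighbour_order` on the unvisited points with the mount's current position as `start`. Greedy nearest-neighbour ordering is a heuristic; it does not guarantee the shortest possible total slew.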

Guide

- Thanks to Andreas Ruthner, guide streaming now correctly handles video stream window interaction. The video window no longer pops up when guide streaming starts, displays frames correctly when opened from the Capture module after a guide session, and now renders 16-bit mono stream frames (previously only 8-bit mono and RGB were handled).

- Andreas also fixed frame, binning, gain and exposure sync for streaming mode. When starting guide streaming, the module now applies the same frame settings that captureOneFrame() applies for single-frame captures. Previously, streaming mode skipped this setup entirely, causing stale values from other modules to remain active in the driver.

- Frame ROI and binning now sync on stream start, live gain updates apply immediately when the user changes the spinner during active streaming, and binning/exposure changes automatically stop and restart the stream

- Added exposure values between 0.001 s and 0.01 s to the guide exposure spinner for fast streaming and daytime testing

Rotator

- Fixed several issues where the reversed rotator state was not taken into account in various rotator operations

- Rotation now aborts if the position angle error keeps increasing, which may be caused by reversed rotator behavior

Ekos Profiles & Connection

- Significantly reduced the time to connect to the INDI Web Manager by skipping DNS resolution when an IP address is already specified for the remote host

- Fixed a rare crash caused by a dangling clientManager pointer, and added a test to verify the fix
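To illustrate the connection-speed change, here is a minimal Python sketch of the idea: if the configured host already parses as an IPv4 or IPv6 literal, it is used directly and no DNS lookup happens. The function `resolve_host` and its injectable `resolver` parameter are hypothetical; KStars does this in C++, and its code is not reproduced here.

```python
import ipaddress
import socket


def resolve_host(host, resolver=socket.gethostbyname):
    """Return an address for host, skipping DNS when host is already an IP literal."""
    try:
        # ip_address() accepts IPv4 and IPv6 literals and raises ValueError otherwise.
        return str(ipaddress.ip_address(host))
    except ValueError:
        # Only real host names pay the cost of a (possibly slow) DNS lookup.
        return resolver(host)
```

Passing the resolver in as a parameter is just a convenience for testing without network access.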

Capture & Livestacking

- Use OpenCV debayering by default. Enforce even ROI selection to ensure Bayer boundaries are respected.

- Always sync from INDI to overwrite shadow states in the Camera process

- Account for STREAM_FULL_DEPTH when streaming

- John Evans added Live Stacker support for Alt/Az data from the Seestar S30 Pro. It should also support other telescopes in Alt/Az mode.

- John added a gradient removal option to post processing in Live Stacker.

FITS Viewer & File Handling

- Use standard gzip compression instead of Qt's own compression algorithm

- Support loading .fits.gz and .xisf.gz in the viewer
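Standard gzip matters mostly for interoperability. According to Qt's documentation, data produced by `qCompress()` starts with a 4-byte big-endian length header followed by a zlib stream, so `gzip` and other FITS tools cannot open it as a `.gz` file. The Python sketch below contrasts that layout (emulated with `zlib` and `struct`, not a call into Qt) with writing and reading a real `.fits.gz` through the standard `gzip` module. The helper names are hypothetical and do not describe how KStars structures its code.

```python
import gzip
import struct
import zlib


def qcompress_like(data, level=-1):
    """Emulate the documented qCompress() layout: 4-byte big-endian length + zlib stream."""
    return struct.pack(">I", len(data)) + zlib.compress(data, level)


def write_fits_gz(path, fits_bytes):
    """Write FITS bytes as a standard gzip file that gzip, Astropy, etc. can open."""
    with gzip.open(path, "wb") as f:
        f.write(fits_bytes)


def read_fits_gz(path):
    """Read back the decompressed bytes of a .fits.gz (or .xisf.gz) file."""
    with gzip.open(path, "rb") as f:
        return f.read()
```

A gzip file always begins with the magic bytes `1f 8b`, while the emulated Qt layout begins with the length header, which is why generic tools reject the latter.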

Scheduler & Observatory Automation

- Fixed scheduler freezes when loading an ESL file referencing many ESQ files (patch by Tomas). BUGS:519294

- Autopark should work over multiple nights now

- If the observatory is not started, skip the shutdown procedure

- Fixed an issue where the post-shutdown script ran in an infinite loop

- Fixed scheduler and capture scripts not running inside Flatpak

Optical Trains & DBus API

- Added a DBus call to set and get the Pictures directory

- Fixed a warning about missing devices when alternative devices were already selected in the optical train

Build & Infrastructure

- Use KSUtil to consolidate all calls to external executables so they also run correctly within a Flatpak

- Within Flatpak, run external scripts on the host system, since they may require libraries that are only available there

- Christian Kemper fixed macOS iconutil failure by adding 256px and 512px icon sizes rendered from the existing SVG source, which are required by iconutil on macOS 15 (Sequoia)

- Wolfgang Reissenberger updated the Dockerfile to be based on installation scripts for all steps: INDI, StellarSolver, PHD2, GSC, and OpenCV. All scripts are built uniformly so that existing packages or installations are preferred. If a package is missing, the script first tries to install the appropriate package; if that fails, the component is built from source.

Stability & Bug Fixes

- Fixed crash reported in crash-reports.kde.org regarding invalid base device or message text. The check for message text now occurs earlier in the process to protect against this crash.

- Milhan Kim fixed test deadlock by replacing QTest::mouseClick with animateClick(), which posts the click through the event loop and prevents tests from hanging indefinitely on QDialog::exec() loops

- Fixed an issue where frequent temperature updates can cause the dark cover check to run indefinitely

- Hy Murveit fixed a display issue with green lines

- Fixed solution assignment

- Fixed crash and distorted artifacts in video streaming

- Replaced all abs and fabs with std::abs for consistency

- Modernized signals and slots

Many thanks to Christian Kemper, Andreas Ruthner, Toni Schriber, Hy Murveit, John Evans, Milhan Kim, Wolfgang Reissenberger, Tomas, and all others who contributed fixes and improvements to this release! Your work makes KStars better for everyone.

Download KStars v3.8.3 today and enjoy improved mount modeling, faster connections, and more reliable guiding!

01 Jun 2026 10:36am GMT

31 May 2026

Planet KDE | English


What Even is Ocean???

Throughout this new process at KDE, I believe I have failed to clearly state what Ocean is and what it means for the future of the Plasma user interface and experience.

In this post, I will try to shed some light on this and, hopefully, make it easier for new people interested in this project to understand.

Why the Confusion?

I think a lot of the confusion comes primarily from my choice to show graphics first and the interface later.

Graphics tend to attract a lot of attention, so much that they swallow other narratives about what Ocean is. It's natural for our users to want to know more and to assume that the graphics are the whole story.

Spoiler… it's not just graphics

How Should We Think of Ocean?

Ocean is many things. Let me list them:

- Ocean Design System

- Ocean Widget Style

- Ocean Font

- Ocean Icons (Icon Pack)

- Ocean Plasma Style

- Ocean Color Scheme

I think this is another reason why there is confusion out there. I have given a few talks on this as well, but I also see how it's confusing.

In simple terms, Ocean is a new graphic design platform for the Plasma Desktop.

This new platform aims, first, to organize the way graphic design for Plasma is achieved. As you may know, designers like me tend to be pretty creative and unbound. We give free rein to creativity and we like to break norms.

This is a problem, because if we want to make graphics for a computer system such as Plasma, we need to organize our creativity in a way that developers can understand.

For this reason, in recent years, a modern way to organize creativity for computers was invented by applications like Sketch, Figma, and Adobe XD.

In these applications SVG is the graphic of choice. SVG is a set of coordinates that tell the system how to draw shapes on the screen. It's versatile enough that SVG code can be read and tweaked by designers and developers alike. It can be stretched without losing quality, yadah, yadah, yadah… You know the rest.

This new wave of applications works with SVG as collections of repeatable graphics interconnected with each other. They use systems of "Components" and "Variants". Given that computer UI is generally very repetitive, these components save designers the time of building more copies of the same graphic with only slight modifications, for example for states such as default, hover, selected, etc.
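To make the idea of components and variants concrete, here is a small Python sketch: a component stores its base properties once, and each variant (hover, selected, etc.) only overrides what differs. The class, the property names, and the token-like values are hypothetical illustrations, not Ocean's or Penpot's actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A reusable UI element: base properties plus per-state overrides (variants)."""
    name: str
    base: dict
    variants: dict = field(default_factory=dict)

    def props(self, state="default"):
        # Start from a copy of the base so variants never mutate the shared definition.
        merged = dict(self.base)
        # Unknown states (including "default") simply fall back to the base properties.
        merged.update(self.variants.get(state, {}))
        return merged


# Hypothetical button: only the properties that change per state are repeated.
button = Component(
    name="Button",
    base={"fill": "surface", "radius": 4, "label": "text-primary"},
    variants={
        "hover": {"fill": "surface-hover"},
        "selected": {"fill": "accent", "label": "text-on-accent"},
    },
)
```

Changing `radius` in the base updates every state at once, which is the time saving described above.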

These design systems help bring the world of UI development into graphic design applications. Many developers are very used to working with graphical components, but only recently have designers been able to work with them in a flexible, graphical way.

KDE Plasma has never had a system like this to organize design around the UI. Because of this, designers haven't really made many inroads into the system, and this limits the ways we can deliver design to users. In essence, we designers were never organized enough to provide a proper, development-ready graphic design that could be used for Plasma.

This is where Ocean comes in. We took up the idea of creating a design system for Plasma that accounts for most, if not all, of the necessary graphical building blocks that developers could use, that preserve consistency between graphics and code, and deliver a cohesive experience for users.

By doing this, our hope is that graphic designers that are used to working with design systems can join our team and help us go even further.

On the developer side, a design system is a much clearer way of communicating component organization. Developers can more easily understand how buttons are made, what colors are used, what typography levels are on screen, etc. We do this by creating a series of graphic tokens that describe their use in more detail.

CSS is also involved: even though we may not support it, applications like Figma and Penpot can represent component code in CSS terms that others can read. In addition to these tokens, we create a series of foundational tokens where we declare our colors, typography, shadow levels and composition, blur levels, and so on; in short, everything that users would need to see on the screen.
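A rough Python sketch of the token idea: foundational tokens hold literal values, component tokens reference them by alias, and a resolver flattens the aliases into CSS custom properties that designers and developers can both read. The curly-brace alias syntax, the token names, and the color value are assumptions for illustration; Ocean's real token names and export format are not described here.

```python
import re

# An alias is a whole value of the form "{some.token.name}" (assumed syntax).
ALIAS = re.compile(r"^\{([^}]+)\}$")


def resolve(tokens, name, seen=None):
    """Follow alias chains such as "{color.ocean.500}" down to a literal value."""
    seen = set() if seen is None else seen
    if name in seen:
        raise ValueError(f"circular token reference at {name}")
    seen.add(name)
    value = tokens[name]
    m = ALIAS.match(value) if isinstance(value, str) else None
    return resolve(tokens, m.group(1), seen) if m else value


def to_css(tokens):
    """Emit every token as a CSS custom property on :root, with aliases resolved."""
    lines = [":root {"]
    for name in sorted(tokens):
        var = "--" + name.replace(".", "-")
        lines.append(f"  {var}: {resolve(tokens, name)};")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical tokens: two foundational values and two component tokens aliasing them.
tokens = {
    "color.ocean.500": "#1d99f3",
    "radius.small": "4px",
    "button.background": "{color.ocean.500}",
    "button.radius": "{radius.small}",
}
```

Because `button.background` only points at `color.ocean.500`, retheming means changing one foundational value rather than every component.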

Ok, But What About the Graphics You Keep Showing?

During the first part of creating a design system, we noticed something pretty meaningful. Breeze and other previous themes, while they work, are not reflected in any design system. Therefore, designers have a hard time completing the puzzle of a good design system that accounts for Breeze. With any attempt, we would be filling in so much of the missing puzzle that we would end up creating something new anyway, just to replicate a style in Penpot, for example.

Because of this, we decided to create a new style called Ocean. Like any style, it is composed of the many parts listed above.

As part of this, we created:

- Ocean Icons (In progress)

- Ocean Plasma Style (Complete in Penpot)

- Ocean Font (In progress)

- Ocean Style (Complete in Penpot)

- Ocean Color Scheme (Complete-ish, needs more testing)

And one small but important detail: it doesn't matter so much how the Ocean style looks. Why?

Because through a design system for graphic designers, we can distribute our system for free to anyone who wants to use it. Graphic designers can tweak the design tokens that Plasma understands and, by doing so, more easily build a Plasma Style of their own that is cohesive, thoughtful, and complete. We then hand those elements to the developer team, who help us execute the design. This is why I say that Ocean is a design platform.

Still, we created a new style in graphical form and we are working with the developer team to execute this style, using the design system tokens and components, in the same way that the designer intended.

Our current focus is on icons, particularly application icons. This is just one of the many parts that compose Ocean design. A few months ago we completed a round of design that created monochrome Ocean icons. These icons are functional in nature and much easier to put together. However, we knew that app icons take longer because they are colorful and require lots of time and styling.

Icons

My recent posts showcase the progress on these icons. To be clearer about how we are approaching icon design, here is our process:

1. Formalize the visual system
   - Define strict rules for perspective, lighting, shadows, gloss, depth, materials, and color usage so all icons feel like one coherent family.

2. Strengthen semantic readability
   - Ensure each icon immediately communicates the app's purpose, especially at small sizes. Avoid abstraction that weakens recognition.

3. Improve visual hierarchy
   - Reduce competing elements and make each icon have one clear focal point with cleaner foreground/background separation.

4. Tighten color discipline
   - Use fewer competing colors, control saturation more carefully, and keep palettes more consistent across the set.

5. Be more selective with stylistic quirks
   - Avoid asymmetry, misalignment, or unusual perspectives unless they clearly improve recognition or composition.

6. Standardize depth and rendering behavior
   - Keep extrusion, internal shadows, dimensionality, and lighting logic consistent across all icons.

7. Shift from per-icon experimentation to system-level art direction
   - Prioritize cohesion and consistency over making every icon individually novel or visually surprising.

…and we are in step 3 of the process for these icons. We are moving them from rough mockups and sketches into more formalized shapes. At this stage, you should not expect a lot of color or shape cohesion. After this pass comes a time of definition. We restrict our colors even more, simplify shapes for impact, remove or redo icons, etc. It's a major review. We expect to do this along with the community.

We also decided not to create icons for third parties. This greatly reduces the number of app icons we need to make, and it also makes it easier to think about what our icons should look like going forward.

All in all, Ocean is a platform composed of many parts. Our current design focus is set on completing the icon pack while the rest of the style is preparing for development.

I hope this makes it easier to understand and I am happy to answer any questions.

31 May 2026 9:14pm GMT